
Introduction

Retailers updated prices an average of every 10 minutes on major platforms in 2024. Whether your data keeps pace is not a technology question — it is a competitive one.

Pricing analytics converts raw market data into decisions: which SKUs to reprice today, where you are losing margin to competitors, which promotions your rivals are running, and when. The foundation for all of it is current, accurate external competitor data.

Web scraping services are the standard infrastructure for collecting it systematically, at any scale. The seven core techniques below cover what data each requires, where infrastructure breaks at scale, and how GroupBWT has built these pipelines in production across retail, e-commerce, beauty, hospitality, and telecom.

Data Engineering: From Raw Web to Data Product

We develop and manage custom data solutions, powered by proven experts, to ensure the fastest delivery of structured data from sources of any size and complexity.

We offer:

- Custom Web Scraping & Development

- 15+ Years of Engineering Expertise

- AI-Driven Data Processing & Enrichment

Why Pricing Analytics Matters for Revenue, Margin, and Market Positioning

A 1% improvement in price realization typically produces 3–5× the margin impact of an equivalent cost reduction. Make the adjustment on incomplete data, and you either surrender margin or lose volume unnecessarily. Across thousands of SKUs, that gap compounds fast.

Pricing analytics provides the structured framework that makes those adjustments evidence-based rather than intuitive. According to E-commerce statistics published by Eurostat (European Commission, 2026), 23.59% of EU enterprises now sell online (up from 18.93% a decade ago). Large enterprises (48.48% participation) compete directly against mid-sized businesses (21.38%), often through shared retail channels. In that environment, pricing analytics models that track competitor behavior are not optional infrastructure — they are how you protect margin.

“Most pricing teams underestimate the data quality problem. A pricing model built on incomplete competitor data does not give you wrong answers — it gives you confident wrong answers, which is far more damaging.”

— Oleg Boyko, Chief Operating Officer at GroupBWT.

Why Web Scraping Is the Foundation of Modern Pricing Data

What Pricing Data Can Be Collected from Public Web Sources

Competitor websites, marketplaces, and retail platforms publish more pricing data publicly than most pricing teams actually use. Prices are the obvious layer — current, promotional, sale — but stock status, ratings, review counts, and bundle configurations are equally accessible on most major platforms. No API agreement needed. Platform terms govern the collection method; the data itself is visible.

A structured web scraping pipeline collects this data systematically — by product, retailer, and geography, on whatever schedule the business requires.

One European cosmetics group (a top-ten global player in color cosmetics with close to €1B in revenue) has run weekly competitive monitoring on a GroupBWT-operated pipeline for more than three years. Not one missed delivery cycle. The system covers 300,000+ SKUs across 13 retailers and 30+ localized storefronts — Douglas (8 locales), Notino (17), Sephora, Rossmann, Flaconi, Boots, among them — producing 1.2 million+ records a month for regional pricing teams.

What started as a defined scraping contract has since become a strategic data partnership. GroupBWT engineers now build Data Vault 2.0 pipelines (a data warehouse architecture for long-term traceability and auditability) alongside the client’s in-house analytics team. As the brand enters the US, India, and the Middle East, each new market extends the footprint.

How Continuous Data Delivery Closes the Competitive Data Gap

A scraping pipeline’s real test is not coverage. It’s whether data arrives on time and in a form the business can act on. Consider what proactive price-match guarantees actually require. Nordic telecom operators running direct-to-consumer device stores monitor six competitors every 30 minutes across approximately 1,000 SKUs, with snapshots delivered to an event queue (a buffer that holds incoming data until the pricing engine processes it) every 10 minutes. At that cadence, data quality stops being about parsing accuracy. It becomes about event consistency. Two snapshots ten minutes apart that disagree on stock status can trigger an incorrect price-match and erode margin in real time.
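The event-consistency concern above can be sketched as a small gate in front of the pricing engine. This is a minimal illustration, not the production design: the `Snapshot` fields, the `safe_to_price_match` name, and the 10-minute default gap are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Snapshot:
    """One competitor observation for one SKU (hypothetical record shape)."""
    sku: str
    competitor: str
    price: float
    in_stock: bool
    taken_at: datetime


def safe_to_price_match(previous: Snapshot, current: Snapshot,
                        max_gap: timedelta = timedelta(minutes=10)) -> bool:
    """Return True only when two consecutive snapshots agree well enough to act on."""
    if (previous.sku, previous.competitor) != (current.sku, current.competitor):
        raise ValueError("snapshots describe different offers")
    gap = current.taken_at - previous.taken_at
    if gap < timedelta(0) or gap > max_gap:
        return False  # out of order, or too far apart to compare
    if previous.in_stock != current.in_stock:
        return False  # stock status flipped: wait for a confirming snapshot
    return current.in_stock  # only match offers a customer can actually buy
```

Holding a price-match for one extra cycle when stock status flips trades a little latency for protection against acting on a transient parsing or availability glitch.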

Not a dashboard with competitor prices. A data pipeline reliable enough to act on automatically. That is what the business actually needs.


Pricing Analytics That Improve Pricing Strategy

The table below maps each pricing analytics technique to the data it requires and the business question it answers.

| Technique | Core Data Required | Business Question |
| --- | --- | --- |
| Historical Price Analysis | Time-series price data by SKU | How do competitor prices move, and when? |
| Dynamic Pricing Optimization | Real-time prices + demand signals | What should my price be right now? |
| Promotion & Discount Analysis | Sale prices, coupon data, promo calendars | What promotions are competitors running? |
| Availability & Stock-Out Analysis | In-stock/out-of-stock signals by SKU | When do competitors run out, and how does that affect demand? |
| Review & Sentiment Analysis | Review text, ratings, and review volume | How do customers perceive price vs. quality? |
| Category-Level Positioning | All prices across a category | Am I cheaper or more expensive at the category level? |
| Market-Driven Strategy Optimization | All of the above, combined | What does the full market picture say about my pricing position? |

Historical Price Analysis

This technique tracks how competitor prices have moved over time, by product, category, retailer, and region. Crucially, this data must be deliberately and continuously collected, as historical pricing records are usually not stored or maintained by default. Done well, it surfaces patterns invisible in daily snapshots. Seasonal price corridors. How promotional timing clusters across a category. Where prices recover after a sales event, and at what pace.

A UK fashion resale platform built this capability into a database of 50M+ records drawn from six resale marketplaces. The historical dataset lets their B2B clients answer questions like: What did resale prices for this category look like last autumn? Did prices recover after the holiday markdown period? The same dataset also validates strategy assumptions. If you believe you are priced competitively in a category, the data either confirms it or surfaces a pattern you have been missing.
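A question like "did prices recover after the markdown period?" reduces to a simple query over the time series. The sketch below assumes a hypothetical `(date, price)` history and a known pre-sale baseline; the function name and the 2% tolerance are illustrative choices, not part of any client system.

```python
from datetime import date


def days_to_recover(history, baseline, sale_end, tolerance=0.02):
    """Days from sale_end until the price is back within `tolerance` of baseline.

    history: iterable of (date, price) observations, in any order.
    Returns None if the price has not recovered in the observed window.
    """
    threshold = baseline * (1 - tolerance)
    for day, price in sorted(history):
        if day >= sale_end and price >= threshold:
            return (day - sale_end).days
    return None


history = [
    (date(2024, 11, 29), 70.0),  # markdown price on the last sale day
    (date(2024, 12, 2), 80.0),   # partial recovery
    (date(2024, 12, 9), 99.0),   # back within 2% of the 100.0 baseline
]
print(days_to_recover(history, baseline=100.0, sale_end=date(2024, 11, 29)))  # 10
```

Run per SKU and averaged per category, the same calculation gives the recovery pace described above.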

Dynamic Pricing Optimization

Dynamic pricing optimization means adjusting prices in response to real-time market signals — competitor moves, stock depletion, and demand spikes. It needs two pieces working in sync. A data feed that updates fast enough to matter. A decision layer that knows what to do when a competitor moves.

The bottleneck is rarely the model. It is the data.

“The bottleneck in dynamic pricing is almost never the model. It is the data. A 24-hour-old price in electronics or travel is not a data point — it is a liability. The teams that win on dynamic pricing are the ones who solved freshness first.”

— Alex Yudin, Head of Data Engineering at GroupBWT.

Strategies for real-time dynamic pricing analytics begin not with algorithm selection, but with infrastructure: what is the minimum refresh cadence your category requires, and can your pipeline reliably deliver it? Production dynamic pricing systems route continuous price feeds into decision engines that operate on seconds- or minutes-wide windows. The sophistication of the model is irrelevant if the input data is stale.
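One way to enforce "freshness first" is to filter inputs before they reach the decision engine. The following is a sketch under stated assumptions: the record keys (`category`, `observed_at`) and the per-category budgets in `MAX_AGE` are hypothetical and would need to be set from the refresh cadence each category actually requires.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness budgets; real values depend on category volatility.
MAX_AGE = {
    "electronics": timedelta(minutes=30),
    "grocery": timedelta(hours=24),
}


def usable_prices(records, now, max_age=MAX_AGE):
    """Keep only records young enough for their category's freshness budget.

    Records in categories without a configured budget are dropped rather
    than trusted by default.
    """
    fresh = []
    for rec in records:
        budget = max_age.get(rec["category"])
        if budget is not None and now - rec["observed_at"] <= budget:
            fresh.append(rec)
    return fresh
```

Dropping stale records outright, instead of down-weighting them, keeps the rule easy to audit when a pricing decision is questioned later.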

Promotion and Discount Analysis

Promotions are where most pricing teams operate with incomplete information. You know your own promotional calendar. You rarely know your competitors' calendars in advance.

Web scraping fixes that. By tracking promotional prices, bundle offers, and discount depth across competitors daily, you can identify which retailers run promotions on which categories, at what depth, and on what cadence. A top-tier Asian e-commerce marketplace (one of the largest publicly listed online retailers in the region) ran this at scale: the pipeline GroupBWT built grew its throughput by 1,000,000% to become a five-workstream production system covering coupons, card promotions, and vendor-funded discounts. The 14-month development path involved four architecture generations. On the client’s automated vendor scorecard (evaluated across cost, success rate, and speed of fix), the GroupBWT team consistently ranks in the top tier among six global vendors.
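Discount depth is the core metric behind this kind of tracking. A minimal sketch, assuming each scraped observation carries a list price and a promotional price (the tuple layout and function names are hypothetical):

```python
from collections import defaultdict


def discount_depth(list_price, promo_price):
    """Fraction taken off the list price; 0.0 when there is no discount."""
    if list_price <= 0:
        raise ValueError("list_price must be positive")
    return max(0.0, (list_price - promo_price) / list_price)


def average_depth(observations):
    """Mean discount depth per (retailer, category).

    observations: iterable of (retailer, category, list_price, promo_price).
    """
    totals = defaultdict(lambda: [0.0, 0])
    for retailer, category, list_price, promo_price in observations:
        bucket = totals[(retailer, category)]
        bucket[0] += discount_depth(list_price, promo_price)
        bucket[1] += 1
    return {key: total / count for key, (total, count) in totals.items()}
```

Computed daily and stored with the collection date, these averages show which retailers discount which categories, how deeply, and on what cadence.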

Product Availability and Stock-Out Analysis

Stock-out is a pricing signal. When a competitor runs out of inventory in a category, you have a window to capture demand, sometimes at a premium. When they restock, aggressive competitor repricing can rapidly close that window.

Tracking availability data alongside prices converts stock-outs from reactive problems into strategic opportunities. The same signals that flag an out-of-stock event can trigger an automated pricing response, provided the infrastructure is built to handle it. Businesses that connect competitor price scraping to inventory monitoring gain a meaningful advantage in volatile categories.
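As an illustration of an automated response to availability signals, the rule below applies a premium only while every tracked competitor is out of stock. The function name, the input mapping, and the 5% premium are assumptions for the example; a real rule would also account for margin floors and brand policy.

```python
def stockout_price(base_price, competitor_in_stock, premium=0.05):
    """Suggest a price from competitor availability flags.

    competitor_in_stock: mapping of competitor name -> bool.
    The premium applies only when all tracked competitors are out of stock;
    as soon as any one restocks, the suggestion falls back to base_price.
    """
    if not competitor_in_stock:
        return base_price  # no availability signal, so do nothing
    if any(competitor_in_stock.values()):
        return base_price
    return round(base_price * (1 + premium), 2)
```

Because the rule reverts the moment a competitor restocks, it depends on availability data that is as fresh as the price data.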

Review and Sentiment-Based Price Perception Analysis

Price perception is not just the number on the page. Customers read reviews, and a product with hundreds of 5-star reviews can sustain a premium that an equivalent product with mixed ratings cannot, even when the retail price difference is marginal.

GroupBWT built this capability for a premium mattress brand: collecting 800,000+ reviews from 50+ platforms across 22 competing brands. The NLP pipeline translated reviews, deduplicated them, and extracted sentiment signals about how price was perceived relative to quality across the competitive set. The output gave the brand’s category team defensible evidence of where its premium positioning could be sustained or extended, directly informing the following season’s pricing strategy across key markets. The engagement continues as an ongoing monitoring service as the competitor set evolves.

Category-Level Price Positioning Analysis

Most pricing decisions happen at the SKU level. But SKUs exist in categories, and category-level positioning often matters more for margin management than individual product decisions.

Category-level analysis answers: Are you systematically more expensive in this category? Is the gap consistent across regions? Does it change during promotional periods? For a European grocery retailer, GroupBWT runs weekly scraping against a competitor’s full catalog of approximately 10,000 products. EAN-based matching handles branded items; a manually maintained equivalence table covers private-label equivalents. The price-position report goes directly to the CEO and senior management every week as a standing input to category-level pricing and promotional planning. New product categories are added as the client’s private-label range expands.
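The matching logic described here (shared EAN first, equivalence table as fallback) can be sketched as a category price index. This is an illustrative simplification: the dictionary inputs, the function name, and the unweighted mean are assumptions, and a production report would likely weight by sales volume.

```python
def category_price_index(own, competitor, equivalents=None):
    """Average ratio of own price to competitor price across matched products.

    own, competitor: dicts of product id (for example an EAN) -> price.
    equivalents: optional dict mapping own private-label ids to the
        competitor's equivalent ids, maintained by hand.
    Returns (index, matched_count); an index above 1.0 means the own
    assortment is more expensive on average. Returns (None, 0) if nothing matches.
    """
    equivalents = equivalents or {}
    ratios = []
    for own_id, own_price in own.items():
        # Prefer a direct identifier match, then fall back to the table.
        comp_id = own_id if own_id in competitor else equivalents.get(own_id)
        comp_price = competitor.get(comp_id)
        if comp_price:
            ratios.append(own_price / comp_price)
    if not ratios:
        return None, 0
    return sum(ratios) / len(ratios), len(ratios)
```

Reporting the matched count alongside the index matters: an index built on a handful of matches says little about the category.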

Market-Driven Pricing Strategy Optimization

Each data stream individually answers a tactical question. Competitor prices tell you where you stand today. Promotional calendars show when rivals are about to move. Stock signals flag windows for demand capture. Customer sentiment explains why a price hold might work or fail. Combined, they answer a strategic question: where is your pricing position in the market right now, and where should it be?

A global shared-mobility operator, present in 200+ cities across roughly 30 countries and preparing for a 2026 IPO, needed a competitive-intelligence capability its team could not scale in-house. The pipeline GroupBWT built delivers daily data on fleet, pricing, and geozones across 24 competitor operators, with 33 fields per record, into the client’s Snowflake environment by 12:00 AM ET every day. The output maps onto the client’s internal market polygons, letting data-science and global-expansion teams evaluate pricing position, competitor density, and market saturation simultaneously from a single source.

What market-driven strategy optimization produces at scale is a continuous, structured view of the competitive landscape. Not just faster reactions to individual price changes — a foundation for decisions no human team could make manually.

How Historical Pricing Data Supports Forward Planning

Identifying Seasonal Price Trends

Seasonal pricing patterns repeat. Electronics prices drop before product launches. Apparel markdowns follow a discount calendar that hasn’t changed much in decades. Travel spikes around school holidays. Historical data surfaces these patterns and gives pricing teams a reference point for decisions made before the season begins.

Planning pricing around seasonal patterns is more precise than reacting to them in real time. A business that knows (from 18 months of historical data) that a competitor reliably marks down a category in weeks 3–5 of Q4 can plan its own promotional timing and margin targets before the markdown begins, not after.
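Finding a markdown that recurs in the same weeks each year is a grouping exercise over the historical record. The sketch below is one simple way to do it, assuming each observation carries a date, a regular price, and the observed price; the 10% threshold and two-year minimum are illustrative parameters.

```python
from collections import defaultdict
from datetime import date


def recurring_markdown_weeks(observations, threshold=0.10, min_years=2):
    """ISO week numbers in which a markdown of at least `threshold`
    was observed in at least `min_years` distinct years.

    observations: iterable of (date, regular_price, observed_price).
    """
    years_by_week = defaultdict(set)
    for day, regular, observed in observations:
        if regular > 0 and (regular - observed) / regular >= threshold:
            iso = day.isocalendar()  # (ISO year, ISO week, weekday)
            years_by_week[iso[1]].add(iso[0])
    return sorted(week for week, years in years_by_week.items()
                  if len(years) >= min_years)
```

Weeks that appear in the result are candidates for planning ahead; weeks seen in only one year are more likely one-off events.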

Forecasting Price Changes Before They Occur

Pricing analytics models that incorporate historical price trajectories improve forecast accuracy across categories. Rather than reacting to a competitor’s price change after the fact, teams that track price history can anticipate when a change is likely, because the same competitor has made the same move before, in the same category, on a predictable cadence. This is the edge that competitive intelligence analysis builds over time: the longer the historical dataset, the more reliable the pattern.

What Data Businesses Need for Effective Pricing Analytics

Prices, Promotions, Availability, and Product Attributes

Core pricing data is straightforward to define but complex to collect consistently. Building an effective pricing data program starts with a minimum viable dataset. The floor is current price, sale price, promotion type, stock status, and product identifiers — EAN, GTIN, or UPC depending on the category. Without consistent attribute data, price comparisons break down. What looks like a competitive gap is sometimes a size variant or a bundle configuration difference.

| Data Type | Source | Use in Pricing Models |
| --- | --- | --- |
| Current prices | Product pages | Price gap analysis, positioning |
| Promotional prices | Sale pages, coupon sources | Promotion analysis, timing |
| Stock availability | Product pages | Stock-out opportunity detection |
| Product identifiers | Structured data, PDPs | Accurate cross-retailer SKU matching |

Pricing analytics models require this data to be normalized and matched at the product level before any analysis begins. That normalization is where the real engineering work lies, and where off-the-shelf tools most frequently fall short.
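The size-variant problem mentioned above is a common normalization case: two listings of the same product in different pack sizes are only comparable on unit price. A minimal sketch, assuming pack size has already been parsed into a value and a unit (the helper name and the supported units are illustrative):

```python
# Conversion factors to a base unit (millilitres or grams).
_TO_BASE = {"ml": 1, "l": 1000, "g": 1, "kg": 1000}


def unit_price(price, size_value, size_unit):
    """Price per 100 ml or per 100 g, so different pack sizes become comparable."""
    factor = _TO_BASE.get(size_unit.lower())
    if factor is None or size_value <= 0:
        raise ValueError(f"unsupported size: {size_value} {size_unit}")
    return price * 100 / (size_value * factor)


# A 50 ml pack at 12.00 looks cheaper than a 100 ml pack at 20.00,
# but costs more per 100 ml.
print(unit_price(12.0, 50, "ml"))   # 24.0
print(unit_price(20.0, 100, "ml"))  # 20.0
```

Without this step, the 50 ml listing would register as a competitor undercutting on shelf price.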

Customer Reviews, Ratings, and Sentiment Signals

Review data is a proxy for price elasticity. Products with strong, consistent positive sentiment can support premium pricing. Products with deteriorating reviews are vulnerable to downward price pressure even when their market position looks stable from price data alone.

Pricing analytics models that incorporate sentiment signals alongside price data produce more accurate positioning recommendations than those built from price data in isolation. The signal is especially valuable in Beauty & Personal Care and premium consumer categories, where purchase decisions are review-driven, and digital shelf ecommerce analytics provides the full competitive picture.

Common Challenges in Pricing Analytics Projects

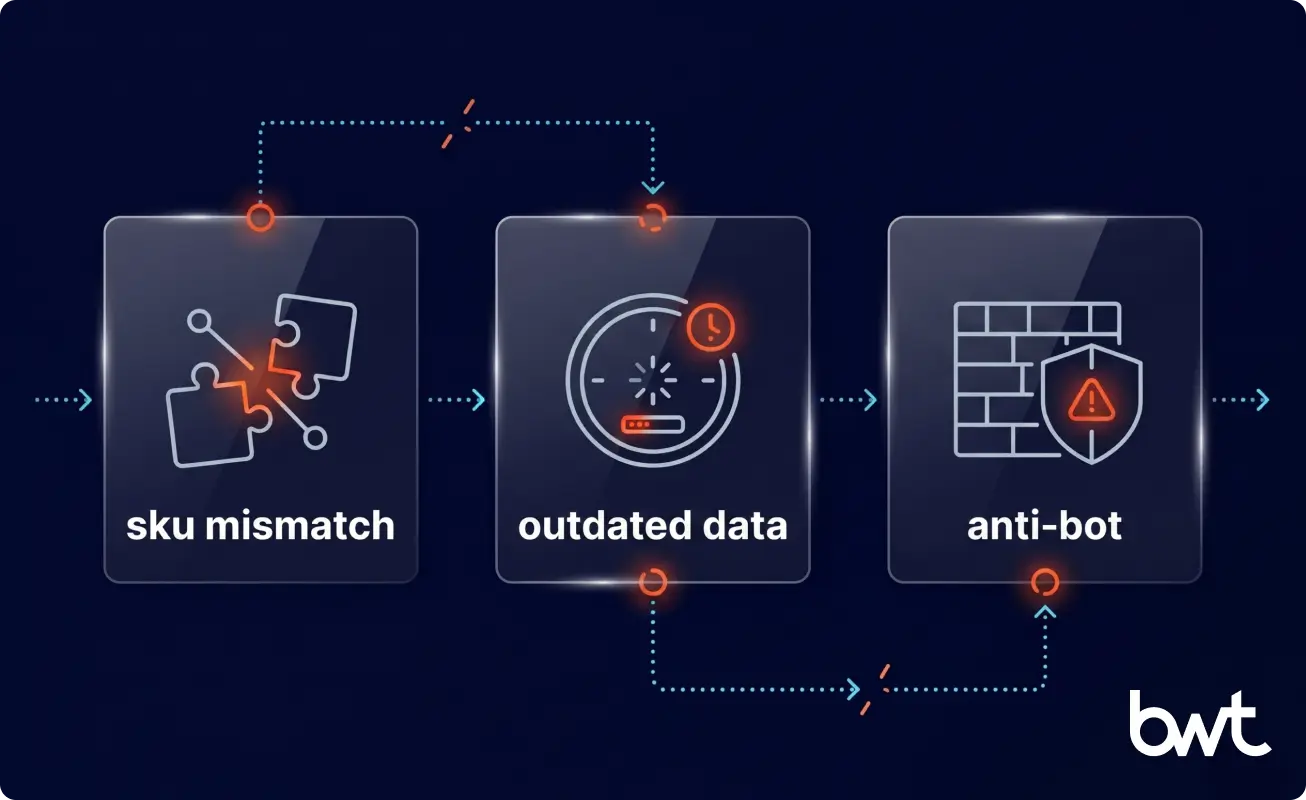

Inconsistent Product Data and SKU Matching Issues

Matching products across retailers is harder than it appears. The same product might carry different descriptions, package sizes, brand name formatting, or no shared identifier at all. Without reliable matching, the output compares incompatible products — technically precise, practically useless.

EAN/GTIN-based matching solves this for branded products. For private label, where no shared identifier exists, the fallback is fuzzy matching on product descriptions, increasingly augmented with AI-powered validation that flags low-confidence matches before they enter the dataset.
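The fallback path can be illustrated with Python's standard-library `difflib`. This is a stand-in for whatever matching model a production system uses, not a description of it: the thresholds, the `best_match` name, and the three-way decision are assumptions chosen to show how low-confidence matches get routed to review instead of entering the dataset.

```python
from difflib import SequenceMatcher


def _normalize(name):
    """Lowercase and collapse whitespace before comparing names."""
    return " ".join(name.lower().split())


def best_match(name, candidates, accept=0.85, review=0.60):
    """Return (candidate, score, decision) for the closest candidate name.

    decision is "accept", "review" (route to a human or a second-stage
    check), or "reject". The thresholds are illustrative, not tuned values.
    """
    target = _normalize(name)
    best, best_score = None, 0.0
    for candidate in candidates:
        score = SequenceMatcher(None, target, _normalize(candidate)).ratio()
        if score > best_score:
            best, best_score = candidate, score
    if best_score >= accept:
        decision = "accept"
    elif best_score >= review:
        decision = "review"
    else:
        decision = "reject"
    return best, best_score, decision
```

The middle "review" band is the important part: it keeps uncertain pairs out of price comparisons until something stronger than string similarity has confirmed them.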

Data Freshness and Accuracy Under Operational Pressure

To evaluate pricing analytics models fairly, weigh freshness and accuracy alongside coverage. In volatile categories (electronics, travel, fast fashion), prices change multiple times per day. A pipeline running once daily is not collecting current market data; it is collecting this morning’s pricing history.

Higher refresh frequency means more infrastructure, more proxy bandwidth, and more exposure to anti-bot detection. The right collection cadence depends on how often prices actually change in your specific category. It is itself a data question that needs answering before the pipeline is built.
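One way to answer that data question is a short high-frequency pilot: record when prices actually change, then derive a cadence from the gaps. The rule of thumb below (sample at half the median gap between changes, with a lower bound) is an assumption for illustration, as are the function name and the 30-minute floor.

```python
from datetime import datetime, timedelta
from statistics import median


def suggested_interval(change_times, floor=timedelta(minutes=30)):
    """Suggest a collection interval of half the median gap between observed
    price changes, never below `floor`.

    change_times: datetimes at which a price change was observed.
    Returns None when fewer than two changes were seen.
    """
    if len(change_times) < 2:
        return None
    ordered = sorted(change_times)
    gaps = [(later - earlier).total_seconds()
            for earlier, later in zip(ordered, ordered[1:])]
    return max(floor, timedelta(seconds=median(gaps) / 2))
```

The pilot itself has to sample faster than the cadence it is trying to measure, otherwise changes between samples go unseen and the estimate comes out too slow.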

Scalability and Anti-Bot Defenses in Data Collection

At scale, pricing data collection runs into two compounding problems. Infrastructure requirements grow with volume. Separately, major platforms deploy behavioral fingerprinting (analyzing the technical signature of each request against known browser patterns) to identify and block automated collection, and those defenses grow more sophisticated as the scraping footprint becomes commercially significant.

GroupBWT has achieved up to 99% collection success rates against major anti-bot platforms in specific client deployments, using request fingerprinting, residential proxy rotation, and session patterning calibrated to each target site. The same infrastructure approach was projected to cut proxy costs for a digital shelf analytics client from $6,000 per month to $300–600 through a delta scraping strategy, collecting only changed data rather than full catalog refreshes on every cycle.
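For scale, moving from $6,000 to $300–600 per month is a 90–95% reduction. The change-detection half of a delta strategy can be sketched as below. It assumes a cheap per-SKU signal (for instance, price and stock status from a listing page) is available without fetching every full product page; the function names and record shape are hypothetical.

```python
import hashlib
import json


def fingerprint(record):
    """Stable hash of a record's content, independent of key order."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def changed_records(records, seen):
    """Return only records whose content differs from the last cycle.

    records: iterable of dicts, each with a "sku" key.
    seen: dict of sku -> fingerprint from earlier cycles; updated in place.
    """
    changed = []
    for rec in records:
        fp = fingerprint(rec)
        if seen.get(rec["sku"]) != fp:
            seen[rec["sku"]] = fp
            changed.append(rec)
    return changed
```

Only the SKUs returned here would need a full, proxy-heavy page fetch in the next step, which is where the bandwidth saving comes from.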

How Custom Web Scraping Delivers Actionable Pricing Intelligence

Building a Pricing Data Pipeline That Holds Under Load

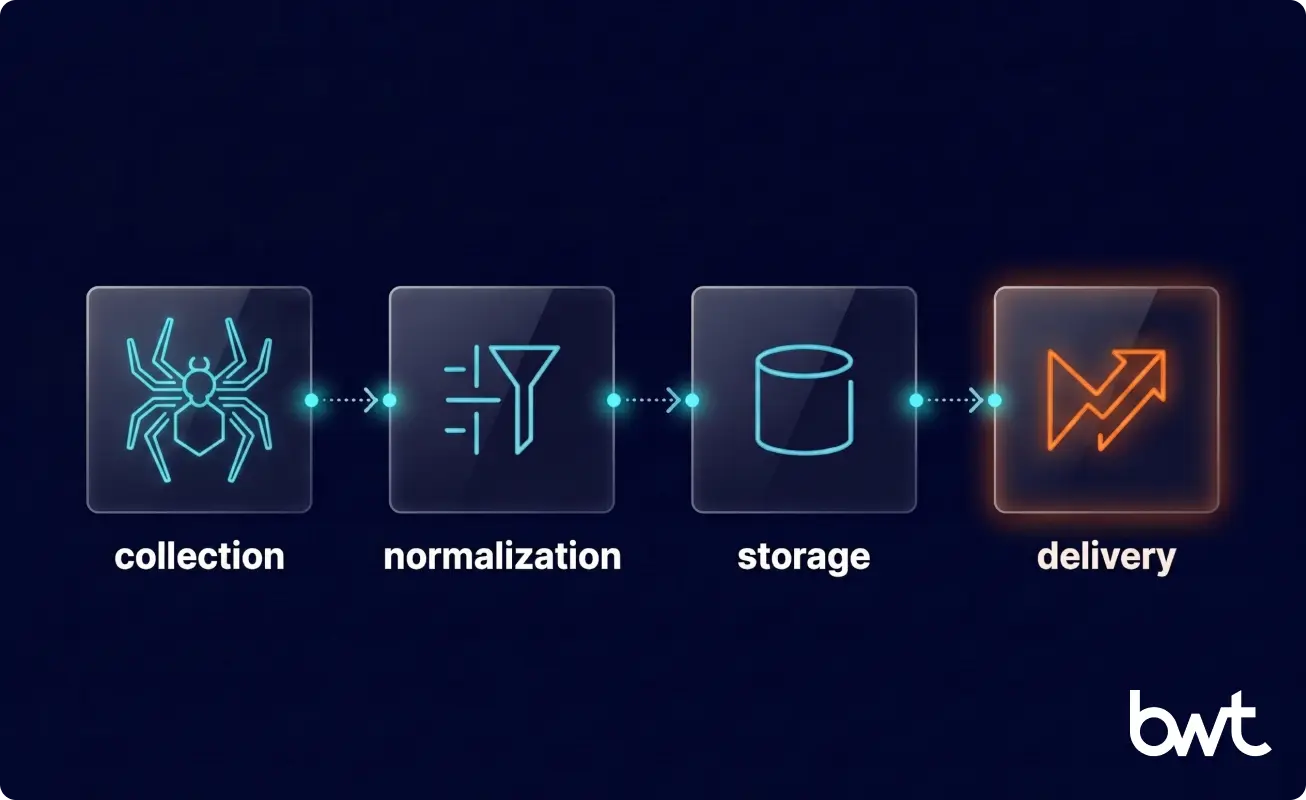

A production pricing data pipeline is not a scraper. It is an orchestrated system. The collection layer handles spiders, proxies, and anti-bot routing. Normalization covers attribute matching, deduplication, and format standardization. Storage holds the structured data warehouse. Delivery connects to dashboards, APIs, and the downstream systems pricing teams actually use.

“Proof of concept is the easy part. A scraper that works for 100 products a day will fail at 100,000 products per hour. The difference is not engineering effort — it is architecture. Most of the real work happens in the layers between the raw data and the dashboard.”

— Alex Yudin, Web Scraping Lead and Head of Data at GroupBWT.

Each layer introduces its own failure modes. A spider that works today may break when a retailer updates their site structure. A matching algorithm that handles branded SKUs correctly may fail on private label. Production infrastructure anticipates these failures and has automated recovery, rather than surfacing them in production dashboards after decisions have already been made on bad data.
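A site redesign usually shows up first as a jump in missing fields, so a batch-level health check is one way to catch a broken spider before its output reaches a dashboard. The sketch below is illustrative: the required fields, the 5% limit, and the function name are assumptions.

```python
def extraction_health(records, required=("sku", "price", "in_stock"),
                      max_missing_rate=0.05):
    """Return (ok, missing_rates) for one batch of scraped records.

    A field counts as missing when the key is absent or its value is None.
    An empty batch is treated as unhealthy.
    """
    if not records:
        return False, {field: 1.0 for field in required}
    rates = {
        field: sum(1 for rec in records if rec.get(field) is None) / len(records)
        for field in required
    }
    return all(rate <= max_missing_rate for rate in rates.values()), rates
```

A failing batch can then be quarantined and retried while the previous good batch stays live, which is the kind of automated recovery described above.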

Turning Pricing Data Volumes Into Strategy-Ready Outputs

Raw pricing data answers no business question on its own. A million price records in a database still need normalization, matching, visualization, and a connection to the specific questions your pricing team needs to answer.

GroupBWT connects pricing data to dashboards (Metabase, ThoughtSpot) backed by data warehouses (Snowflake, MySQL) via transformation pipelines. A European hospitality-software platform serving tens of thousands of properties uses GroupBWT’s competitive-pricing feed to power its AI dynamic-pricing engine. The pipeline processes hundreds of millions of pricing records monthly from major booking sites across hundreds of global locations, maintaining near-perfect accuracy and year-long forward pricing horizons. Delivery runs on a mature bi-weekly cadence, and the client is already scoping an additional comp-set module on top of the same pipeline.

When to Build Custom Pricing Infrastructure

Signs Off-the-Shelf Pricing Tools Are Hitting Their Limits

Standard pricing tools perform well within their design parameters. They typically fall short when:

- Your competitor set includes platforms that the tool does not cover

- Required refresh rates exceed the tool’s scheduled collection intervals

- SKU matching requirements go beyond the tool’s taxonomy handling

- The data needs to feed into internal systems that the tool does not integrate with

- Coverage needs extend to localized storefronts across multiple countries

Each of these is a sign that the business has outgrown what subscription-tier infrastructure was designed to handle — not a sign that the problem is unsolvable.

When Custom Infrastructure Earns Its Cost

Custom infrastructure justifies its cost in three situations. First: when the scale exceeds the SaaS pricing tier limits. A 3.5M-product price comparison database was built for a specific business problem, not a subscription feature. Second: when platform coverage is non-standard. Monitoring prices across 17 localized country storefronts requires custom per-site development regardless of tool selection. Third: when real-time requirements are strict. A 30-minute refresh SLA with 24/7 uptime is an engineering commitment that off-the-shelf tools rarely guarantee at commercial pricing.

How GroupBWT Builds Pricing Analytics Workflows

Custom Pipeline Engineering for Competitive Pricing Data

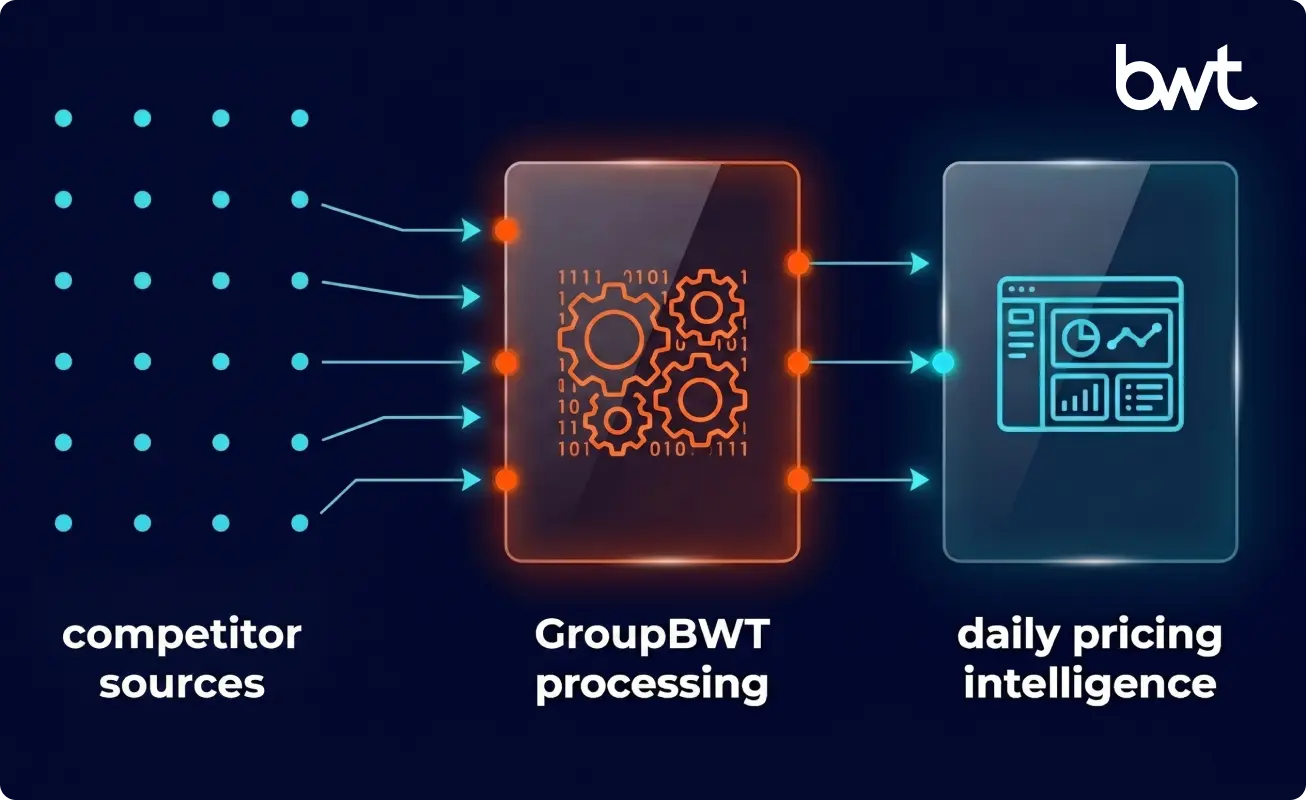

GroupBWT builds custom web scraping infrastructure for pricing projects across E-Commerce, Retail, Beauty & Personal Care, Travel, and Telecom, from single-retailer competitive monitoring to multi-region deployments covering millions of SKUs daily.

A leading retailer benchmarking AI-powered pricing optimization found double-digit sales uplift through dynamic pricing adjustments enabled by a unified competitive data view, according to Google Cloud’s 2025 NRF retail AI analysis on empowering retailers with AI for commerce, marketing, supply chains, and more. The prerequisite for any of that is clean, current, matched pricing data. The infrastructure work is where it starts.

From Raw Data Collection to Decision-Ready Output

Scraping is the start of the pipeline, not the end. GroupBWT’s data analytics service covers normalization (EAN/GTIN matching, attribute reconciliation), analytics delivery (Metabase, Snowflake, ThoughtSpot), and pipeline maintenance (anti-bot updates, schema change handling, spider maintenance as retailer sites evolve).

The web scraping and data analytics services are designed to work together, from raw data collection through to the dashboards your pricing team uses daily. GroupBWT has run this end-to-end for clients at scales from 1,000 SKUs to 959,000 products per day.

If your current pricing data is incomplete, delayed, or failing to match products accurately across competitors, that is the problem we solve.

Conclusion

The seven pricing analytics techniques covered here — from tracking price history through market-driven strategy optimization — all depend on the same foundation. Accurate, current, matched competitor data, collected at the cadence your category requires.

Teams that invest in data infrastructure first, before model complexity, make better pricing decisions faster. The gap between what public competitor data reveals and what most pricing teams actually act on compounds over time. It doesn’t stay level. Competitors who collect and use this data make progressively better calls, and the lead widens.

GroupBWT has built this infrastructure across retail, e-commerce, beauty and personal care, hospitality, and telecom. If your pricing data is the constraint — coverage, freshness, or matching accuracy — that is where the work starts.

Pricing analytics is the structured process of collecting, normalizing, and analyzing pricing data (internal and competitor) to support pricing decisions. It matters because price is one of the highest-leverage business variables: a 1% improvement in price realization typically produces 3–5× the margin impact of an equivalent cost reduction. Without structured pricing analysis, teams rely on intuition and periodic manual checks that miss the pace and complexity of a market that changes continuously.

Web scraping provides continuous, structured access to competitor pricing data that would otherwise require manual collection. It covers product prices, promotional pricing, stock availability, and review signals from retailer sites and marketplaces at a frequency and scale no manual process can match, updated on a schedule the business controls: daily for most categories, every 30 minutes in price-volatile ones. The output feeds directly into the pricing models that drive decisions rather than arriving too late to act on.

Historical price analysis and dynamic pricing optimization deliver the highest impact for most E-Commerce businesses. Historical analysis reveals pricing patterns and seasonal trends that inform planning; dynamic optimization enables real-time responses to competitor price moves. Promotion and discount analysis adds significant value in categories where promotional timing drives volume. Businesses with mature data infrastructure that combine all seven pricing analytics approaches, including category-level positioning and sentiment-based analysis, get the most complete picture of competitive pricing dynamics.

Collection frequency should match price volatility in your specific category. Electronics and travel prices can shift multiple times per day; grocery staples move on slower cycles. The practical starting point for most Retail and E-Commerce businesses is daily collection, with the option to increase frequency for high-priority SKUs. Real-time dynamic pricing operations (like the price-match guarantee architecture common in telecom retail) require 30-minute refresh cycles with 24/7 SLA coverage to be reliable enough to act on automatically.

Off-the-shelf tools handle standard use cases well: monitoring a defined competitor set on common platforms at a fixed schedule. Custom infrastructure becomes the stronger choice when competitor set, platform coverage, refresh requirements, SKU volume, or data integration needs exceed what the available tools were built for. Businesses monitoring 100,000+ SKUs across localized regional storefronts, or requiring sub-hourly refresh rates with strict accuracy SLAs, almost always need custom development. The cost is justified when the alternative is making pricing decisions on data that is incomplete, delayed, or mismatched at the product level.
