
Introduction

A contract notice arrives from a Romanian national portal. The title is in Romanian. The description is in Romanian. The CPV code field is empty. A classification model trained on English government tenders has never seen this text before.

This is a routine event in any procurement data classification system that covers EU sources. It isn’t an edge case.

Multilingual tender classification is not the only challenge in tender aggregation, but it becomes critical the moment a system expands beyond a single-language dataset. Most systems hit that point quickly, because the EU procurement opportunity is exactly what drives the expansion in the first place.

GroupBWT is a custom software development company that builds procurement data infrastructure for clients operating across EU jurisdictions. This article covers the architecture decisions we’ve made on production systems: which approaches to multilingual classification hold up at scale, how CPV codes work as a language-neutral unification layer, and what monitoring practices keep the data clean as sources grow.

Data Engineering: From Raw Web to Data Product

We develop and manage custom data solutions, powered by proven experts, to ensure the fastest delivery of structured data from sources of any size and complexity.

We offer:

- Custom Web Scraping & Development

- 15+ Years of Engineering Expertise

- AI-Driven Data Processing & Enrichment

Why Multilingual Tender Data Aggregation Is Hard

How Language Fragmentation Limits Tender Visibility

The EU has 24 official languages. Each member state publishes procurement notices in its own language or languages. A supplier searching for contracts in their sector cannot run a single keyword query and get complete EU-wide results — unless the underlying system has already resolved the language barrier at the data level.

Multilingual procurement data is more than text in different languages. It is different grammar, different procurement terminology, and different levels of description detail by portal. A buyer searching for “electrical installation services” in English gets results from English-language sources. The Italian, Polish, and Bulgarian contracts for the same service category are invisible — not because they don’t exist, but because the classification layer hasn’t connected them.

Why EU Procurement Data Stays Decentralized

TED publishes above-threshold EU contracts. Below-threshold contracts — the majority of procurement volume by notice count in most member states — sit in national portals. Each portal has its own schema, update cadence, and language conventions. There is no EU-wide registry that covers both tiers. The eForms transition that went live in 2023 standardized the above-threshold notice format on TED, but it didn’t change the fragmentation of national portals or the language variation within them.

The Impact of Below-Threshold Tenders on Coverage

Below-threshold contracts are published with fewer mandatory fields. CPV codes, which are required for above-threshold notices, are often missing or filled in loosely in below-threshold publications. That makes classification harder precisely where the data is most incomplete — the system has no structured anchor and no language match to its training data. Solving either problem alone isn’t enough.

Approaches to Multilingual Tender Classification

There are four practical approaches to multilingual classification. Each one trades accuracy against operating cost and language coverage in a different way. The question of how to classify tenders in multiple languages comes down to which of these approaches fits the operational context — informational browsing tolerates a different answer than compliance reporting or automated supplier alerts.

Comparison: Four Approaches at a Glance

| Approach | Classification accuracy | Operating cost | Language coverage |
| --- | --- | --- | --- |
| Single English model | Low for non-English text | Lowest | Effectively English only |
| Translate then classify | Medium, varies by domain | Medium (translation API + model) | Wide |
| Per-language models | Highest per language | Highest (one pipeline per language) | Limited by labeled data |
| Single multilingual model (mBERT / XLM-R) | High on common languages, lower on rare ones | Medium (one model, several inference paths) | Wide, with fallback for rare languages |

Using a Single English-Based Model

The most straightforward option: train a classification model on English-language tender records, then run all incoming text through it. The baseline GroupBWT used was trained on roughly 500,000–800,000 UK government tender records going back to 2015 — a substantial dataset for a single-language model.

The limitation is obvious once you test it. A model trained on English text has no useful representation of Romanian procurement vocabulary. Accuracy for non-English text drops sharply, and the errors cluster in sectors where terminology differs most between languages — medical equipment, construction, legal services. These are exactly the categories where misclassification has real downstream cost.

Machine Translation as a Layer, Not a Strategy

Option two: translate every incoming tender to English with Google Cloud Translation, AWS Translate, or DeepL, then run the translated text through the existing English model.

This is fast to build. It is also where the accuracy problems are most predictable. Procurement text doesn’t translate cleanly. CPV category names, references to national procurement directives, and technical specifications can translate into fluent English that carries the wrong meaning. A fluent English rendering of procurement German may still map the concept to the wrong CPV branch.

The right way to use translation is as an auxiliary layer for rare languages where labeled training data is too thin to justify a dedicated model — not as the primary classification strategy. In production systems GroupBWT has built, translation is a fallback that fills coverage gaps, not the main path.
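The fallback rule can be sketched as a small routing function. This is a minimal illustration, not the production logic: the `MIN_LABELED_RECORDS` cut-off, the path names, and the per-language counts are all hypothetical.

```python
# Hypothetical cut-off: below this many labeled records, a language is
# treated as too thin to classify directly and is translated first.
MIN_LABELED_RECORDS = 20_000


def choose_path(lang: str, labeled_counts: dict[str, int]) -> str:
    """Return the classification path for a tender in the given language."""
    if labeled_counts.get(lang, 0) >= MIN_LABELED_RECORDS:
        return "direct"  # classify the original text
    return "translate_fallback"  # translate to English, then classify


# Illustrative counts only; real figures depend on the training corpus.
example_counts = {"en": 600_000, "fr": 85_000, "mt": 1_200}
```

With these example counts, `choose_path("fr", example_counts)` takes the direct path and `choose_path("mt", example_counts)` falls back to translation. A language missing from the table also falls back, which keeps the failure mode explicit.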

Per-Language Models

Approach three: train a separate classification model for each target language using procurement-domain data in that language.

The accuracy advantage is real. A model trained on French procurement understands how French contracting authorities describe electrical installation services without recovering from a mistranslation first. The cost is also real: procurement-domain training data is limited in many EU languages, model maintenance scales linearly with language count, and a routing layer is needed to identify the source language before classification runs.

This is why per-language models are not the universal answer. They work where labeled data is thick and the language matters commercially. For a long tail of EU languages, training data is too sparse to justify a dedicated model — and that gap is where the fourth approach earns its place.

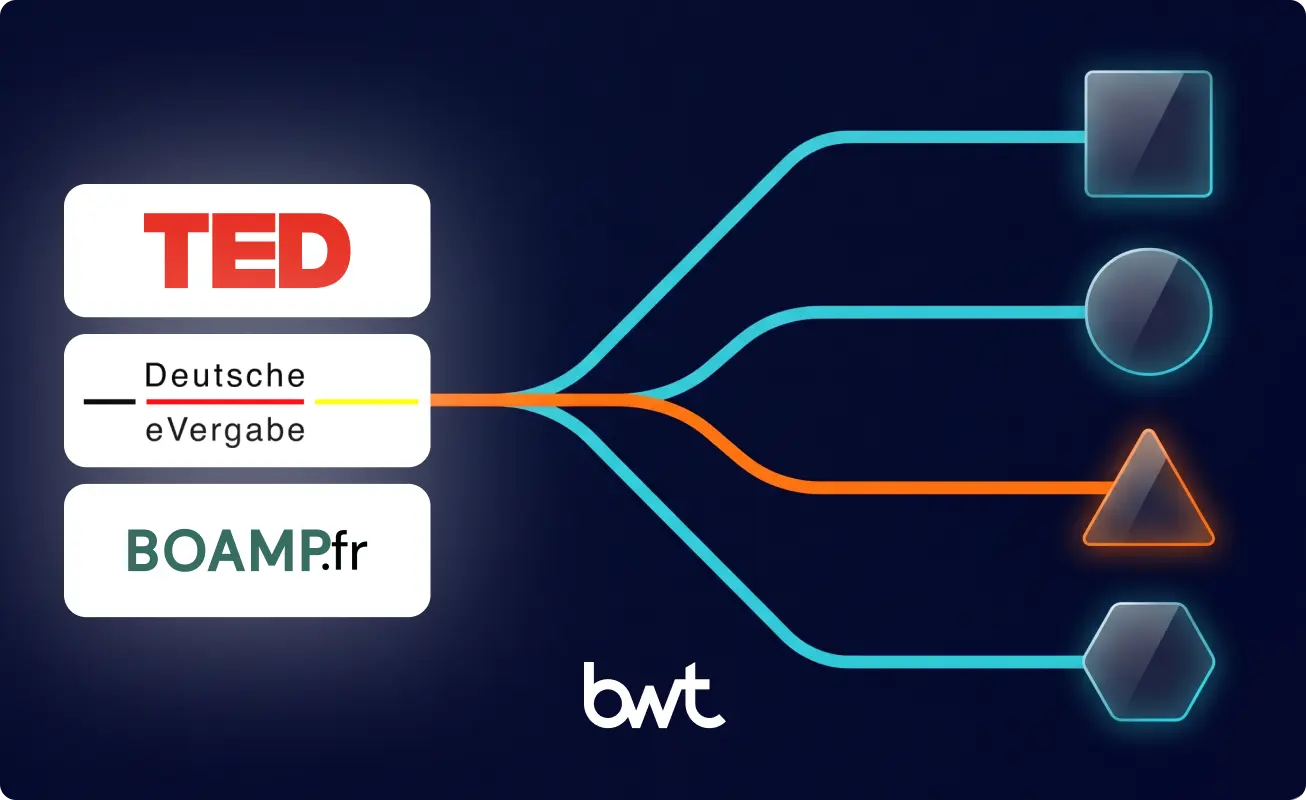

Single Multilingual Model with Refinement

The approach GroupBWT runs in production combines a single multilingual classifier with downstream refinement. A multilingual transformer (the family includes mBERT and XLM-R) is trained once on procurement data across all target languages and assigns the first-level CPV class — there are 45 of them, covering the entire CPV taxonomy at the broadest division level. A second-stage classifier — implemented with XLinearPECOS, an extreme multi-label classification library from Amazon’s libpecos package — refines that class to a specific code inside the full ~9,000-code CPV vocabulary.

This is the architecture that closes the gap the other three approaches leave open. One model handles language coverage. The two-stage design keeps inference tractable across thousands of categories. Translation is held in reserve for the languages where the multilingual model has the least training signal. The result is a single pipeline that produces production-grade accuracy on common EU languages and degrades gracefully on rare ones, instead of failing silently or requiring N parallel models.
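The control flow of the two-stage design can be shown with a toy sketch. Keyword overlap stands in for both models here. Nothing in this block calls a transformer or the PECOS library, and the keyword tables and three-code label space are assumptions made for illustration.

```python
# Toy label space: the real taxonomy has 45 divisions and roughly 9,000 codes.
CODES_BY_DIVISION = {
    "45": ["45310000-3", "45210000-2"],
    "33": ["33100000-1"],
}

# Hypothetical keyword tables standing in for learned model weights.
DIVISION_KEYWORDS = {
    "45": {"construction", "installation", "building"},
    "33": {"medical", "equipment"},
}
CODE_KEYWORDS = {
    "45310000-3": {"electrical", "installation"},
    "45210000-2": {"building", "construction"},
    "33100000-1": {"medical", "equipment"},
}


def _overlap(text: str, keywords: set[str]) -> int:
    """Count how many keywords appear as whole tokens in the text."""
    return len(set(text.lower().split()) & keywords)


def predict_division(text: str) -> str:
    """Stage one: pick the first-level division."""
    return max(DIVISION_KEYWORDS, key=lambda d: _overlap(text, DIVISION_KEYWORDS[d]))


def refine(text: str, division: str) -> list[tuple[str, int]]:
    """Stage two: rank only the codes that belong to the chosen division."""
    scored = [(code, _overlap(text, CODE_KEYWORDS[code]))
              for code in CODES_BY_DIVISION[division]]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def classify(text: str) -> list[tuple[str, int]]:
    """Run both stages and return ranked candidates."""
    return refine(text, predict_division(text))
```

The point of the structure is that stage two never scores the full code list. It only ranks codes inside the division chosen by stage one, which is what keeps inference tractable as the label space grows.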

“The instinct is always to solve multilingual with translation first — it feels like a shortcut to an existing solution. What we’ve seen is that translation artifacts compound through the classification layer, and by the time the error reaches a supplier alert or a compliance report, tracing it back to a mistranslated procurement term is hard. The architecture that holds up in production is a multilingual classifier on the front end, refinement underneath, and translation only where the training data runs out.”

— Alex Yudin, Head of Data Engineering at GroupBWT

Choosing the Right Strategy

The right answer depends on what the classification output is used for. If classification is informational — analysts browsing tender categories — translation plus a single English model may be good enough. If classification drives search relevance, compliance filtering, or automated supplier alerts, the multilingual classifier with refinement is the production answer. Per-language models are worth the investment in narrow cases where one or two language markets dominate commercially and labeled data is abundant.


How CPV Codes Solve the Language Problem

What CPV Codes Are and Why They Matter

The Common Procurement Vocabulary is the EU’s standard classification system for public procurement. Every CPV code is eight digits plus a check digit, organized in a hierarchy: the first two digits identify the division (the 45 first-level classes), the first three the group, the first four the class, and the first five the category, with the remaining three digits adding precision within the category. The code 45310000-3, for example, identifies electrical installation work regardless of which language the contract notice is written in.
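The hierarchy can be read straight off the digits. The sketch below assumes the usual layout of eight digits, a hyphen, and one check digit. It checks the format only and does not verify the check digit. The `CpvCode` and `parse_cpv` names are illustrative.

```python
import re
from dataclasses import dataclass

# Eight digits, a hyphen, one check digit, e.g. "45310000-3".
CPV_PATTERN = re.compile(r"([0-9]{8})-([0-9])")


@dataclass(frozen=True)
class CpvCode:
    digits: str
    check_digit: str

    @property
    def division(self) -> str:
        return self.digits[:2]

    @property
    def group(self) -> str:
        return self.digits[:3]

    @property
    def cpv_class(self) -> str:
        return self.digits[:4]

    @property
    def category(self) -> str:
        return self.digits[:5]


def parse_cpv(raw: str) -> CpvCode:
    """Parse a CPV string; raises ValueError if the format does not match."""
    match = CPV_PATTERN.fullmatch(raw.strip())
    if match is None:
        raise ValueError(f"not a CPV code: {raw!r}")
    return CpvCode(digits=match.group(1), check_digit=match.group(2))
```

For `parse_cpv("45310000-3")`, the division is `45`, the group `453`, the class `4531`, and the category `45310`.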

CPV codes are what make cross-language tender search possible. A supplier looking for electrical installation contracts doesn’t need to know how to describe their service in Romanian, Polish, and Swedish — they query by CPV code and the system returns relevant notices regardless of source language. For a system covering multilingual procurement data, CPV is the language-neutral anchor.

How CPV Supports Cross-Language Tender Search

A record from a Bulgarian national portal, classified to CPV 45310000-3 and stored in a normalized schema, appears in the same search results as an equivalent UK contract — without any translation step at query time. Organizations monitoring procurement across multiple jurisdictions don’t want to maintain separate search strategies per language. They want one query, one result set, one dataset. CPV is what makes that possible — but only if the classification is accurate and consistent across sources.
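As a minimal sketch of why no translation is needed at query time, the search below matches on the CPV prefix and never inspects the tender text. The record shape, identifiers, and field names are hypothetical.

```python
def search_by_cpv(records: list[dict], cpv_prefix: str) -> list[dict]:
    """Return records whose CPV code starts with the given prefix."""
    return [r for r in records if r.get("cpv", "").startswith(cpv_prefix)]


# Hypothetical normalized records from three portals in three languages.
sample_records = [
    {"id": "bg-001", "lang": "bg", "cpv": "45310000-3"},
    {"id": "uk-001", "lang": "en", "cpv": "45310000-3"},
    {"id": "ro-001", "lang": "ro", "cpv": "33100000-1"},
]

hits = search_by_cpv(sample_records, "4531")
```

Here `hits` contains the Bulgarian and the UK record together, because the match runs on the code alone. A record with no CPV code can never match, which is why classification accuracy and coverage decide what the search can see.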

Using CPV for Tender Classification and Data Unification

Where source portals provide CPV codes, the classification task is validation and gap-filling. Where they don’t — common for below-threshold notices — the system has to predict the appropriate code from the tender text.

In systems GroupBWT has built, the CPV prediction model returns ranked code candidates with associated probability scores. The output is stored in the OCDS Augmentation Extension using the standard structure: method (model prediction, source-provided, or manual), candidates[] (the ranked predictions), and matchValue (the confidence score for the top candidate). That metadata is what makes audit queries possible: “show me every record where the top CPV candidate had a matchValue below the deployment threshold” is a valid compliance question, and it’s only answerable if the candidates and confidence travel with the record. The OCDS schema is the structural reason this audit trail survives long-term: without OCDS and its augmentation extension, the same metadata has no standard place to live and gets lost the first time the storage layer is migrated.
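The audit question quoted above can be sketched as a filter over stored records. The record layout, the `augmentation` key, and the `"model"` / `"source"` method values are assumptions for illustration. Only the three field names come from the extension as described here.

```python
from typing import Iterable


def below_threshold(records: Iterable[dict], threshold: float) -> list[str]:
    """Return ids of model-classified records whose matchValue is under the threshold."""
    flagged = []
    for record in records:
        aug = record.get("augmentation", {})
        if aug.get("method") == "model" and aug.get("matchValue", 1.0) < threshold:
            flagged.append(record["id"])
    return flagged


# Hypothetical stored records; candidates are (code, score) pairs as dicts.
stored = [
    {"id": "r1", "augmentation": {
        "method": "model",
        "candidates": [{"id": "45310000-3", "score": 0.91}],
        "matchValue": 0.91}},
    {"id": "r2", "augmentation": {
        "method": "model",
        "candidates": [{"id": "45310000-3", "score": 0.48},
                       {"id": "45210000-2", "score": 0.31}],
        "matchValue": 0.48}},
    {"id": "r3", "augmentation": {"method": "source"}},
]
```

With a deployment threshold of 0.6, `below_threshold(stored, 0.6)` returns only `r2`. The source-provided record `r3` is skipped because no model confidence applies to it.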

Limits of CPV and Additional Vocabulary

CPV isn’t perfect. The taxonomy has categories that are too broad for meaningful search in some sectors, and it doesn’t cover every procurement type with the specificity that some clients need. Heavy equipment rental and specialized laboratory services, for example, fall into CPV branches shared with adjacent service types — the code alone doesn’t fully distinguish them.

For systems where CPV isn’t sufficient, a secondary vocabulary layer extends classification specificity. Procurement taxonomy mapping — using free-text features from tender title and description in combination with CPV — produces more precise classification than CPV alone. The architecture choice is whether to run this as a post-CPV refinement step or as a parallel classification track.

“CPV is a foundation, not a complete solution. We treat it as the first classification layer — the one that enables cross-border search and cross-language comparison — and then add domain-specific refinement on top for clients who need finer granularity. The two-layer approach is more maintainable than trying to extend CPV itself.”

— Dmytro Naumenko, CTO at GroupBWT

Building a Tender Classification Model

Training Data Requirements for CPV Code Prediction

A CPV prediction model needs procurement-domain training data in the target language, labeled with correct CPV codes. For English, large labeled datasets exist — UK government procurement data going back to 2015 provides substantial coverage. For other EU languages, labeled datasets are smaller and less consistent.

The practical implication: classification accuracy varies by language, and that variance correlates directly with training data volume. A multilingual model trained on hundreds of thousands of records across the most common EU languages outperforms a sparse-language model on those same languages, but accuracy still drops on languages where labeled data is thin. Designing the system honestly means acknowledging this variance, not presenting one accuracy figure across all supported languages.

CPV Prediction and Confidence Scoring

A classification model that outputs a single CPV code without a confidence score is less useful than one that outputs ranked candidates with probabilities. The single-output model forces a binary decision: accept or reject. The confidence-scored model enables routing — high-confidence predictions go straight into the normalized record; low-confidence predictions go to a human review queue.

In production, the prediction score threshold for auto-acceptance is a single configurable parameter, tuned per deployment based on the use case. Search and filtering tolerate a lower threshold; compliance reporting and automated supplier alerts require a stricter one. The threshold is part of the deployment configuration, not a property of the model.
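A minimal sketch of threshold-based routing, assuming the model returns candidates already ranked by probability. The `Candidate` type, the route labels, and the example threshold values are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    code: str
    probability: float


def route(candidates: list[Candidate], threshold: float) -> tuple[str, Optional[Candidate]]:
    """Auto-accept the top candidate if it clears the threshold, else send to review."""
    if not candidates:
        return "human_review", None
    top = max(candidates, key=lambda c: c.probability)
    if top.probability >= threshold:
        return "auto_accept", top
    return "human_review", top


ranked = [Candidate("45310000-3", 0.74), Candidate("45210000-2", 0.12)]
```

The same prediction lands differently depending on the deployment. `route(ranked, 0.6)` auto-accepts, while `route(ranked, 0.9)` sends the record to review. That is the sense in which the threshold belongs to the configuration rather than the model.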

Adding Classification Metadata to OCDS Extensions

OCDS provides a standard classification object with scheme, id, and description fields. CPV prediction needs more than that: the system has to record whether a code came from the source or from a model, the ranked alternatives the model considered, and the confidence the model had in the top result.

The OCDS Augmentation Extension supports exactly this. The standard classification object carries the assigned CPV code; the augmentation extension stores method (the source of the assignment), candidates[] (the ranked predictions), and matchValue (the confidence). With that metadata in place, the provenance of every classification travels with the record. Without it, “why is this contract in this category?” is only answerable by querying logs that may or may not still exist.
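A sketch of how a prediction might be packaged for storage. The `scheme`, `id`, and `description` keys follow the OCDS classification object. The placement of the `augmentation` block and the shape of each candidate entry are assumptions here, so check them against the extension schema before relying on them.

```python
def build_classification(
    candidates: list[tuple[str, float]],
    descriptions: dict[str, str],
    method: str = "model",
) -> dict:
    """Package ranked (code, score) predictions with their provenance metadata."""
    if not candidates:
        raise ValueError("at least one candidate is required")
    ranked = sorted(candidates, key=lambda pair: pair[1], reverse=True)
    top_code, top_score = ranked[0]
    return {
        "classification": {
            "scheme": "CPV",
            "id": top_code,
            "description": descriptions.get(top_code, ""),
        },
        # Assumed layout for the augmentation metadata.
        "augmentation": {
            "method": method,
            "candidates": [{"id": code, "score": score} for code, score in ranked],
            "matchValue": top_score,
        },
    }


packaged = build_classification(
    [("45210000-2", 0.11), ("45310000-3", 0.82)],
    {"45310000-3": "Electrical installation work"},
)
```

The assigned code and its provenance are written in one step, so a record cannot be stored with a predicted code but without the candidates and confidence that explain it.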

Balancing Accuracy and Scalability in Classification

The multilingual-classifier-with-refinement approach scales differently from per-language models. Adding a new EU language to a per-language pipeline means training data, a new model, and a new monitoring path. Adding the same language to a multilingual classifier means more training data feeding one model, plus translation-based fallback if labeled data is thin. The maintenance load grows on a flatter curve.

Prioritization still matters. Improvement effort goes where the procurement volume justifies it — high-volume markets get more labeled data and more aggressive accuracy targets, long-tail languages get fallback and acceptance of higher review-queue rates. Not every language warrants the same engineering investment on day one.

Monitoring, Data Quality, and Source Management

Tender data monitoring is the operational layer that keeps a production system honest. Classification decisions and normalization rules produce a certain quality level at launch. Monitoring is what prevents that quality from degrading over time as sources change and volume grows.

Source-Level Monitoring and Fault Isolation

Every source in the system needs independent health tracking. When a national portal changes its authentication flow, the collection for that source fails — and only that source. A monitoring architecture that aggregates all sources into a single health metric obscures this: the overall system appears healthy while one source has been dark for 48 hours. Tender source monitoring at the per-portal level is what makes that isolation operational rather than theoretical — the failure is attributed to one source on the dashboard before anyone has to dig through logs.

In GroupBWT-built systems, Grafana tracks per-source metrics: daily collection runtime, HTTP error rate, and expected daily release count against actual releases collected. When actual falls below expected by a defined threshold, an alert fires. The source is isolated for investigation without affecting the rest of the pipeline. Sentry handles exception tracking at the scraper level — structured errors with source identifiers, so when a parser fails on an unexpected field, the failure is attributed to a specific source and a specific input format.
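The expected-versus-actual check can be sketched independently of any dashboard tooling. This block does not use Grafana or Sentry APIs. The `SourceDay` type, the source identifiers, and the 25% shortfall limit are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceDay:
    source_id: str
    expected_releases: int
    actual_releases: int


def shortfall_alerts(days: list[SourceDay], max_shortfall: float = 0.25) -> list[str]:
    """Return the sources whose collected count fell too far below the expected count."""
    alerts = []
    for day in days:
        if day.expected_releases <= 0:
            continue  # no baseline yet, nothing to compare against
        shortfall = 1 - day.actual_releases / day.expected_releases
        if shortfall > max_shortfall:
            alerts.append(day.source_id)
    return alerts


today = [
    SourceDay("portal-a", expected_releases=120, actual_releases=0),
    SourceDay("portal-b", expected_releases=300, actual_releases=290),
]
```

`shortfall_alerts(today)` flags only `portal-a`. Because each source is evaluated against its own baseline, a dark portal is named directly instead of being averaged away in a system-wide figure.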

Tracking Missing Fields and Data Completeness

Completeness monitoring tracks the presence of critical fields across incoming records: title, description, CPV code, release date, and contracting authority identifier. These aren’t the only fields in the schema; they are the fields whose absence makes a record operationally useless.

Missing-field rates per source are a leading indicator of classification problems. A source that starts missing CPV codes at a higher rate than usual has either changed its publication behavior or is receiving records from a new sub-portal that doesn’t enforce the same required fields. Catching this early prevents a backlog of unclassified records from accumulating in production.

Schema validation runs at ingestion: records that fail completeness checks on critical fields go to a quarantine queue rather than directly to production. This isn’t a rejection — it’s a deferral. Quarantined records are reviewed by QA specialists who either fix the issue or document why the record is structurally incomplete and flag it accordingly.

Tender data validation at this stage is what separates records that can move directly into the classification layer from records that need human review before they enter the production dataset. Tender data completeness monitoring runs against the same critical-field set on every ingestion cycle, so drift in any of those fields surfaces as a per-source trend rather than as scattered one-off failures.
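A minimal sketch of the ingestion-time completeness check and the per-source missing-field rate. The field keys are hypothetical names for the five critical fields listed earlier, and a real deployment would adjust the set per schema.

```python
# Hypothetical keys for the critical fields named in this section.
CRITICAL_FIELDS = ("title", "description", "cpv_code", "release_date", "authority_id")


def missing_fields(record: dict) -> list[str]:
    """List the critical fields that are absent or empty in a record."""
    return [field for field in CRITICAL_FIELDS if not record.get(field)]


def split_by_completeness(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Separate records that can proceed from records that go to quarantine."""
    ready, quarantined = [], []
    for record in records:
        (quarantined if missing_fields(record) else ready).append(record)
    return ready, quarantined


def missing_rate(records: list[dict], field: str) -> float:
    """Share of records from one source that lack the given field."""
    if not records:
        return 0.0
    return sum(1 for r in records if not r.get(field)) / len(records)
```

Quarantine here is a deferral, as described above: the record is kept and routed elsewhere, not dropped. Tracking `missing_rate` per source and per cycle is what turns one-off failures into a visible trend.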

Using QA Teams to Preserve Procurement Data Quality

Automated validation catches what automation can catch. It misses cases where the data is structurally complete but semantically wrong — a CPV code that is present but incorrect, a description that doesn’t match the procurement category, a contracting authority identifier that doesn’t resolve to a known entity.

QA specialists who work on procurement data quality control handle these cases. Their role is to identify patterns — not to fix individual records manually at scale. When a QA specialist notices that a specific source consistently misclassifies contracts in a certain CPV division, that observation feeds back into the parser rules or the model training pipeline, not into a queue of one-off manual fixes. This feedback loop is what keeps tender aggregation data quality from degrading over time.

“Data quality problems split into two categories: structural issues that automated validation catches immediately, and semantic issues that only become visible when someone looks at whether the classification makes sense in context. The QA team’s job is to find the semantic issues and turn them into rules — not to manually correct individual records.”

— Alex Yudin, Head of Data Engineering at GroupBWT

How to Add New Tender Sources Efficiently

Source growth is constant in production procurement systems. Clients add countries. Portals consolidate. New regional platforms appear. The system’s ability to absorb new sources without disrupting existing coverage is an architectural property — one that has to be designed in from the start.

GroupBWT’s standardized workflow has five phases. Source analysis covers the portal’s technical characteristics: format, authentication, pagination behavior, update cadence, and the fields it publishes. Data mapping documents how source fields correspond to the target OCDS schema — which fields are equivalent, which are missing, which require inference. Scraper implementation builds the collector. Testing validates against real source data, including edge cases from source analysis. Production deployment adds the source to the live pipeline with its own monitoring configuration.

For a portal type GroupBWT has integrated before, the full cycle typically takes less than one working day. For a genuinely new platform with undocumented behavior, several days of source analysis are needed before implementation begins.

The architectural requirement that makes this safe is isolation. Each source’s collector runs independently. A new source going through testing doesn’t share infrastructure with production sources. A source that fails in production fails at the source level, not the pipeline level. That isolation is what makes it possible to add sources continuously without scheduling maintenance windows.
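The failure boundary can be illustrated with a short sketch. In a real deployment each collector would run as its own process or service. The in-process loop below only shows that one source raising an error leaves the others untouched. The `run_collectors` name and the callable interface are assumptions.

```python
from typing import Callable

Collector = Callable[[], list[dict]]


def run_collectors(
    collectors: dict[str, Collector],
) -> tuple[dict[str, list[dict]], dict[str, str]]:
    """Run every collector; record failures per source instead of aborting the run."""
    results: dict[str, list[dict]] = {}
    failures: dict[str, str] = {}
    for source_id, collect in collectors.items():
        try:
            results[source_id] = collect()
        except Exception as exc:  # contain the failure to this one source
            failures[source_id] = repr(exc)
    return results, failures
```

The return value keeps successes and failures keyed by source, so monitoring can attribute an outage to a specific portal without parsing logs.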

Tender Aggregation System Architecture for Multilingual Data

A system built for multilingual procurement data across EU sources has discrete components: per-source collectors, a normalization layer with OCDS schema support, a classification layer with the multilingual model and refinement, a monitoring layer with per-source metrics, a QA routing layer, and a delivery layer with client-specific output formats. Each runs as a separate service. Each fails independently. Centralized tender data is the output of this pipeline, not its starting point — every component upstream of the delivery layer exists to turn many heterogeneous portals into one normalized, classified, source-traceable dataset that downstream consumers can query as a single surface.

Client-specific logic — OCID encoding rules, threshold configuration, custom OCDS extensions, output format — lives in configuration that’s specific to each client deployment. Two clients on the same underlying pipeline get systems that behave differently because their business rules differ. Generic procurement data feeds can’t replicate this: a feed designed to serve many clients with one configuration can’t expose CPV prediction confidence in a custom field, can’t honor a client’s threshold, and can’t carry the OCDS extensions that compliance teams depend on.

For clients who need classification across many EU languages, the classification layer is the most client-specific component: training data, confidence thresholds, and acceptable accuracy levels all vary by use case.

Architecture Choices That Support SLA Requirements

SLA commitments in procurement data systems are about source coverage, not just system uptime. A system that is technically running but has had a source dark for 36 hours is failing its SLA, because records missed during the outage may no longer be retrievable once the portal’s publication window closes.

Architecture choices that support SLAs: per-source monitoring with alerting latency under one hour, re-ingestion logic that fills gaps when a source recovers, fault isolation so a failing source doesn’t degrade the pipeline for other sources, and a support team with source-specific knowledge that can investigate portal behavior changes faster than a generalist operations team.
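Re-ingestion starts with knowing which days are missing. A minimal sketch, assuming the system keeps a set of dates on which a source was collected successfully. The function name and the daily granularity are illustrative.

```python
from datetime import date, timedelta


def missing_days(collected: set[date], start: date, end: date) -> list[date]:
    """Return the days in [start, end] with no successful collection."""
    gaps = []
    current = start
    while current <= end:
        if current not in collected:
            gaps.append(current)
        current += timedelta(days=1)
    return gaps


collected_days = {date(2024, 3, 1), date(2024, 3, 4)}
gaps = missing_days(collected_days, date(2024, 3, 1), date(2024, 3, 4))
```

Here `gaps` holds 2 and 3 March, the window a recovered source would be asked to backfill. Whether the portal still serves those notices is a property of the source, which is why alerting latency matters as much as the backfill logic.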

Common Mistakes in Multilingual Tender Aggregation

Treating every source as an instance of the same pattern. Each portal has its own schema, its own failure modes, its own update cadence. A monitoring and parser architecture that treats all sources as identical produces persistent edge cases for the portals that don’t fit. The maintenance cost of per-source specificity is lower than the quality cost of forcing non-standard sources into a standard mold.

Choosing translation as the multilingual strategy. Translation belongs in the system as a fallback for languages where labeled data is too thin to train on directly. Using it as the primary classification path pushes accuracy down across every language at once and makes errors hard to trace. The compounding effect — a translation artifact becomes a misclassification, which becomes a wrong supplier alert — only shows up after the system has been running for months.

Treating CPV as the complete classification answer. CPV is the right first layer because it’s language-neutral and standard. It is not granular enough for every commercial use case. Skipping a secondary classification layer for clients who need finer categorization leads to a system that returns “technically correct” matches with low practical relevance — and to support tickets that have no good answer.

Building monitoring after launch. Monitoring that tracks CPV assignment rates per source, not just collection success, surfaces classification drift before it accumulates. A source that quietly starts producing records with lower CPV assignment rates is either changing its publication behavior or sending records the model handles poorly. Catching this at the monitoring layer is faster than catching it in a quarterly data quality review.
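Drift in CPV assignment rate can be checked with a simple comparison against a trailing baseline. This is a sketch under assumed names. The `cpv_code` key and the 0.1 tolerance are hypothetical, and a production check would likely use a longer window.

```python
from statistics import mean


def assignment_rate(records: list[dict]) -> float:
    """Share of records that carry a non-empty CPV code."""
    if not records:
        return 0.0
    return sum(1 for r in records if r.get("cpv_code")) / len(records)


def has_drifted(baseline_rates: list[float], current_rate: float,
                max_drop: float = 0.1) -> bool:
    """True when the current rate sits more than max_drop below the baseline mean."""
    if not baseline_rates:
        return False  # no history yet, nothing to compare against
    return mean(baseline_rates) - current_rate > max_drop
```

Run per source, a check like `has_drifted([0.90, 0.92, 0.88], 0.70)` fires as soon as a daily batch falls well below the source's usual assignment rate, which is earlier than a quarterly review would catch it.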

Storing only the assigned CPV code, not the prediction context. Without method, candidates[], and matchValue in the OCDS extension, every classification looks the same — model output, source-provided, and manually corrected records become indistinguishable. The day an audit asks “where did this classification come from?”, that distinction matters.

If your procurement data scope extends across EU jurisdictions and you need classification that holds up at scale, the next step is a scoping conversation. Bring the list of source portals you need covered and a sample of 5–10 tender records. We return a written assessment that covers source feasibility, expected coverage by language, the architecture proposal, and a delivery plan with timeline. The scoping is free.

Our data aggregation services cover the full pipeline — from source coverage and multilingual classification through delivery into your existing data stack.

Improve Multilingual Tender Aggregation Accuracy — Contact GroupBWT

EU tender aggregation needs three layers working together: a per-source collection layer that handles each national portal on its own terms, a normalization layer that maps source fields onto a shared schema (OCDS in most production systems), and a classification layer that makes records comparable across languages by anchoring them to CPV codes. The fourth piece — often skipped at first and added under pressure later — is per-source monitoring, because the difference between a system that aggregates EU tenders and a system that aggregates most EU tenders is whether you notice when one portal goes dark.

Automation predicts CPV codes for records where the source didn’t provide them. In production, this is a two-stage classifier: a multilingual model assigns one of the 45 first-level CPV classes, and a second-stage model — typically an extreme multi-label classifier such as XLinearPECOS — refines that class to a specific code in the ~9,000-code vocabulary. The output is a ranked list of candidates with confidence scores, stored in the OCDS Augmentation Extension as method, candidates[], and matchValue. Records below a configurable confidence threshold are routed to human review.

Generic procurement APIs cover above-threshold TED data and a small number of high-volume national portals. Below-threshold coverage almost always requires custom collection because the long tail of national and regional portals doesn’t expose APIs at all, so collection is built per source. A multilingual procurement data delivery layer (Snowflake share, S3, SFTP, or REST) can sit on top of that custom collection and present the unified, CPV-classified output as a single API surface. The API is the delivery; the collection underneath is what determines the actual coverage.

Tender data is public — published by contracting authorities to attract suppliers. The GDPR-relevant material is mostly limited to named contact persons in contract notices. The compliant practice is collecting only data published in the public notice, not enriching with personal data from other sources, and treating named contacts under a documented lawful basis (typically legitimate interest for B2B procurement contact). Storage and access controls follow the same standards any GDPR-regulated dataset requires. None of this is unique to procurement; it’s standard practice for public-data scraping in regulated markets.

For search and browsing where rough categorization is acceptable, machine translation into English before classification works. For systems where classification drives compliance reporting, supplier contract alerts, or award analytics, translation artifacts compound — a term that translates fluently but maps to the wrong CPV category creates errors that propagate downstream. The production answer is a multilingual model handling classification directly, with translation reserved as a fallback for languages where labeled training data is too thin to support a dedicated training path.

Tender data quality degrades when monitoring is treated as a launch-time task rather than an operational discipline. Per-source tracking of collection success, CPV assignment rates, and critical field completeness catches quality problems at the source level before they spread through the dataset. QA specialists who review classification edge cases and translate their findings into updated parser rules or model training data keep the system calibrated as source behavior changes. The systems GroupBWT builds include both — automated monitoring with per-source alerting, and a QA process that handles what automation can’t. See our guide to big data pipeline architecture for how monitoring fits into the broader pipeline design.
