Introduction

The web scraping software market was valued at USD 814.4 million in 2025. The same forecast projects USD 2.21 billion by 2033, a 13.29% CAGR (Web Scraping Software Market Size Report, Grand View Research, 2025).

That growth comes from a widening gap between what market intelligence tools publish and what decision-makers can actually use.

GroupBWT has been engineering production scraping systems since 2009. Across 140+ engagements, our pipelines process up to 335 million records per month for clients in 7 primary industries (E-Commerce, Retail, Beauty & Personal Care, Travel, Real Estate, Automobile, Telecom) plus financial services, healthcare, sports technology, and nonprofits.

The web scraping use cases we encounter most solve a problem that purchased feeds, manual exports, and platform APIs cannot: delivering data at the speed and accuracy real business decisions demand.

This guide covers 15 proven applications, an ROI framework for sizing the investment, and a direct answer to the question most teams hit eventually: when does a custom-built solution actually make sense?

Data Engineering: From Raw Web to Data Product

We develop and manage custom data solutions, powered by proven experts, to ensure the fastest delivery of structured data from sources of any size and complexity.

We offer:

- Custom Web Scraping & Development

- 15+ Years of Engineering Expertise

- AI-Driven Data Processing & Enrichment

Why Bought Data Stops Working When Decisions Speed Up

In competitive E-Commerce categories, repricing engines adjust SKU prices dozens of times per day. Purchased market reports reflect conditions from 30 to 90 days ago. Internal CRM data tells you what your own customers did. What everyone else is doing right now is not in it.

Three structural problems define the modern data gap.

Speed deficit. Most commercial providers update quarterly or monthly. The window in which pricing, inventory, or reputation data drives a tactical decision is often measured in hours.

Granularity gap. Aggregated reports tell you a category grew 12%. They cannot tell you which competitor SKU triggered the shift, or which review theme is driving a rating decline on a particular platform.

Scope limitation. APIs give access only to the data a platform chooses to share. Structured web data (prices, descriptions, reviews, vacancy counts, regulatory filings) exists at a scale no API program covers.

This is not an argument against commercial data. It is an argument for filling the gaps with real-time collection at the cadence business decisions actually require.

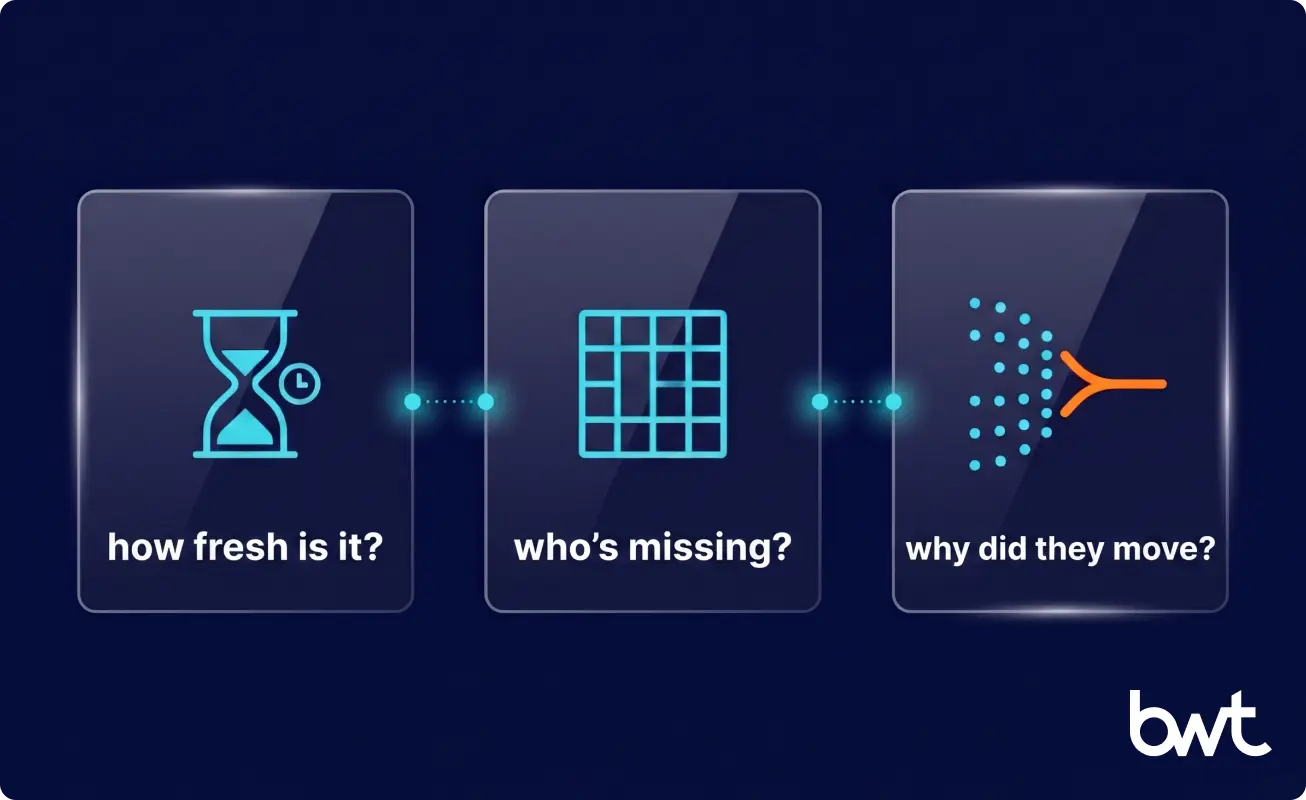

What Breaks First in Bought Data: Recency, Coverage, Pattern Reading

The use cases of web scraping expand every time a procurement team discovers what a data vendor actually delivers versus what the contract implied.

Recency goes first. By the time aggregated pricing data reaches a pricing team, source conditions have shifted. One Automobile client received weekly fleet pricing reports from a vendor; by Monday, weekend price movements across 11 European marketplaces had already made the report obsolete.

Coverage breaks next. Vendors cover only the sources they have indexed. If a critical competitor is missing, the gap stays invisible.

Last is interpretability. Aggregated data cannot tell you why a competitor changed pricing last Tuesday at 2 PM. Raw structured data collected continuously can. The pattern lives in the sequence of moves; a quarterly summary flattens it into one number.

The floor requirement is real-time source-level collection, and that means scraping.

Mapping 15 Web Scraping Use Cases to the Teams That Own Them

The web scraping use cases we have built across 140+ engagements fall into four business functions. Different organizational owners have different data requirements, and scoping the infrastructure correctly starts with knowing which function is driving the need.

Competitive Intelligence (1–4)

| Use Case | What Gets Scraped | Business Outcome |
| --- | --- | --- |
| Price monitoring | Product pages, marketplace listings | Same-day repricing decisions |
| Digital shelf monitoring | E-Commerce listings, content compliance | Reduced out-of-spec listings at scale |
| Brand protection | Ad placements, bidding patterns, reseller pages | Unauthorized activity caught before it damages spend |
| Competitive landscape mapping | Provider profiles, service descriptions | Live market context for sales targeting |

1. Price monitoring is the most common entry point. An Automobile client tracking a rental fleet across 11 European marketplaces ran a manual process that needed two analysts and three days. A daily scraping pipeline cut acquisition costs 14% and increased selling prices 6%. See the case study on vehicle price analysis software for a rental company for technical detail.

2. Digital shelf monitoring runs at a different complexity level. A large manufacturer needed to track listings, pricing, and content compliance across 100+ marketplaces. The pipeline gave them visibility into 959,139 monitored product records per day. Listing deviations got flagged before they became audit issues. See the case study on digital shelf data analytics for a large manufacturer.

3. Brand protection. A Beauty & Personal Care brand fighting counterfeit listings kept losing time. By the time legal caught a violation, the listing had already gathered reviews and search-rank momentum. Daily scraping of seller pages, paid ad placements, and trademark-adjacent search snapshots surfaced violations within hours, and takedown time dropped from weeks to days.

4. Competitive landscape mapping. Sales teams in fragmented B2B markets often walk into deals where competitor packaging and pricing have shifted since the last quarterly battlecard. A SaaS client wanted continuous observation of competing service and pricing pages. Weekly scraping replaced a months-long battlecard cycle with one measured in days.

Market and Lead Intelligence (5–7)

Use cases for web scraping extend well into market and lead intelligence, often deeper than teams initially expect.

5. Sales lead intelligence. A sports technology company had a stale-prospect problem with a list that aged out within months. We built a continuous pipeline pulling from job boards, company directories, and industry event registries. The output was a live signal feed of hiring patterns, funding rounds, and tech-stack shifts. Qualified leads climbed 25%. Conversion climbed 20%.

6. Consumer review analysis tends to be underbuilt. A Beauty & Personal Care manufacturer scraping reviews across major marketplaces detected a rising ingredient concern six weeks ahead of any purchased sentiment report. That lead time fed a planned reformulation, not a reactive scramble.

7. Regulatory and rate aggregation. For insurance and fintech teams, public filings, rate schedules, and policy documents sit spread across regulators and competitor portals. A fintech client replaced quarterly manual sampling with daily aggregation across dozens of pages, compressing compliance-prep cycles from weeks to days.

Operational Efficiency (8–12)

These applications often produce the fastest measurable ROI because the manual baseline is expensive, slow, and brittle in ways teams don’t notice until something breaks.

8. CRM data migration. A healthcare organization replacing a legacy CRM needed to migrate patient-related records from a system with no export API. A targeted extraction tool cut migration time by 50% and reduced manual effort by 35%.

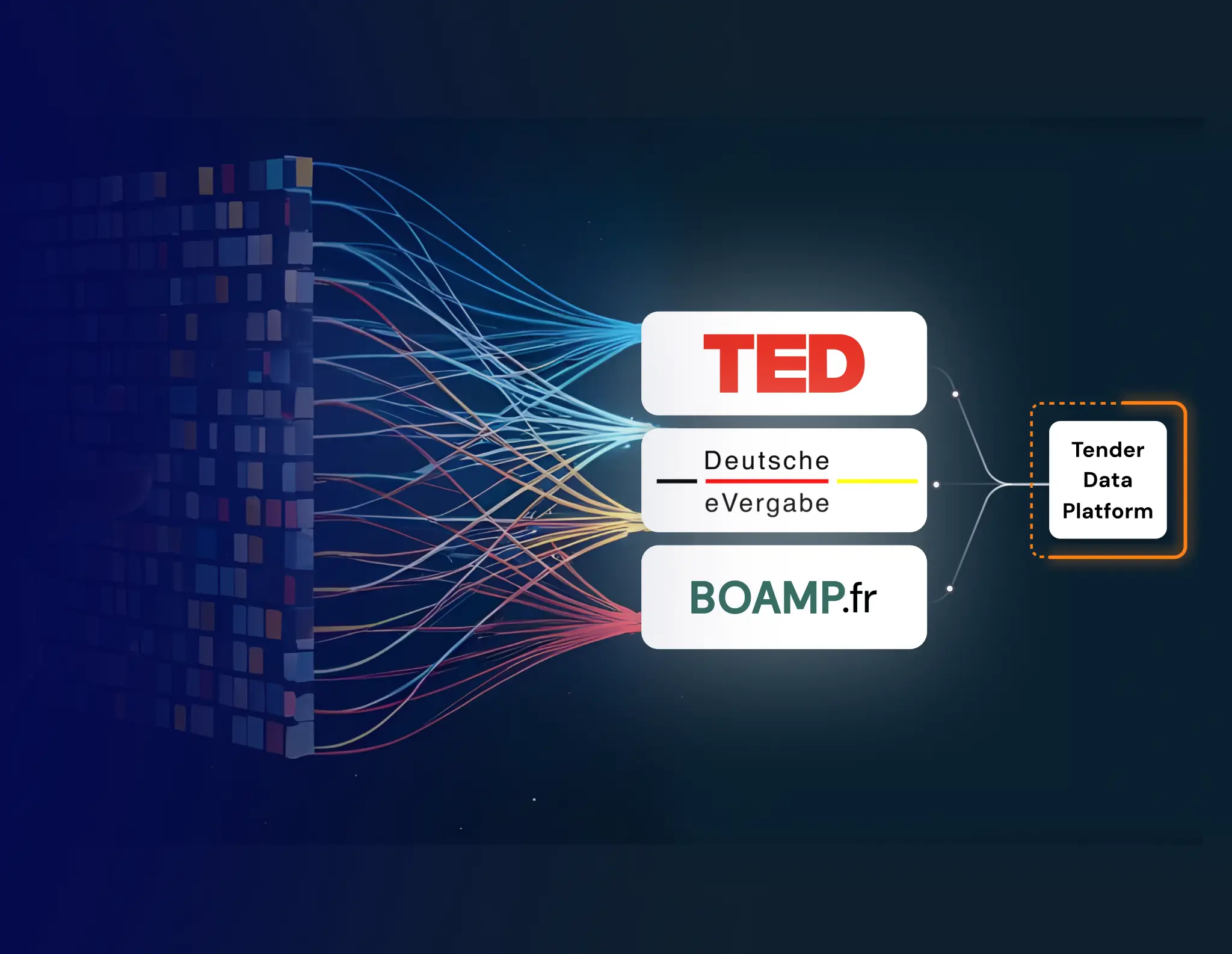

9. Tender aggregation. Monitoring 50+ procurement portals manually needs dedicated headcount and still misses entries. Automated collection let a client increase tender capture volume by 25%, not because more tenders existed but because they stopped missing them.

10. Employment records extraction consolidates verification and background-check sources that legal and HR teams previously pulled by hand.

11. Recruitment pipeline automation keeps candidate sourcing live across job boards and professional directories.

12. Nonprofit data unification brings grant databases, donor records, and program directories into a single reporting layer for organizations without large engineering teams.

Real-Time and AI-Ready Pipelines (13–15)

The fastest-growing demand in 2026 is scraping built for automated decisioning. Whether the downstream consumer is an AI training pipeline, a pricing engine, or a rate-monitoring service, the requirement is the same: clean structured data refreshed fast enough to act on.

13. AI training and retrieval data. LLM fine-tuning, RAG pipelines (retrieval-augmented generation systems that pull live external data into model responses), and real-time AI agents all need clean, continuously refreshed external data. Most teams underestimate how much scraping infrastructure is needed before the AI layer is even relevant.

14. Travel and hospitality dynamic pricing. Hotel rates, flight availability, and dynamic offer construction have been scraping applications for years. The 2026 version pushes refresh cycles much tighter, since AI-driven pricing engines act on far shorter intervals than the daily cadence of five years ago.

15. Real estate and mortgage rate monitoring follows the same logic. A financial services client aggregating mortgage rates from dozens of lender pages cut analyst workload by more than half while moving freshness from weekly to daily.

GroupBWT builds production scraping infrastructure across the 7 primary industries listed above. If your team is ready to scope a data pipeline, our web scraping services run from discovery through production delivery.

The Pipeline Layer AI Models Quietly Depend On

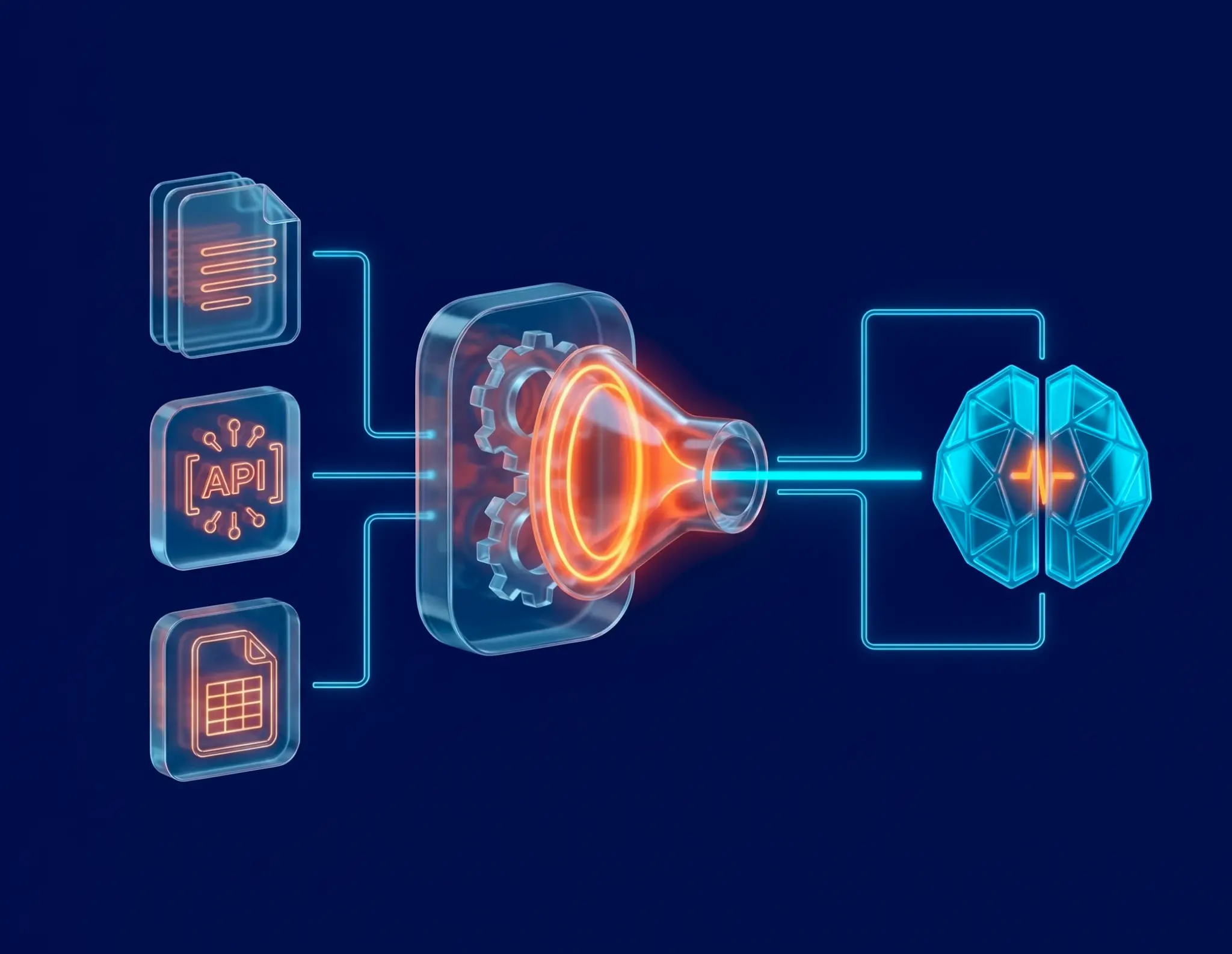

Raw HTML is a source, not a dataset. The gap between them is where most scraping projects quietly fail, and where the most valuable use cases of web scraping are actually built.

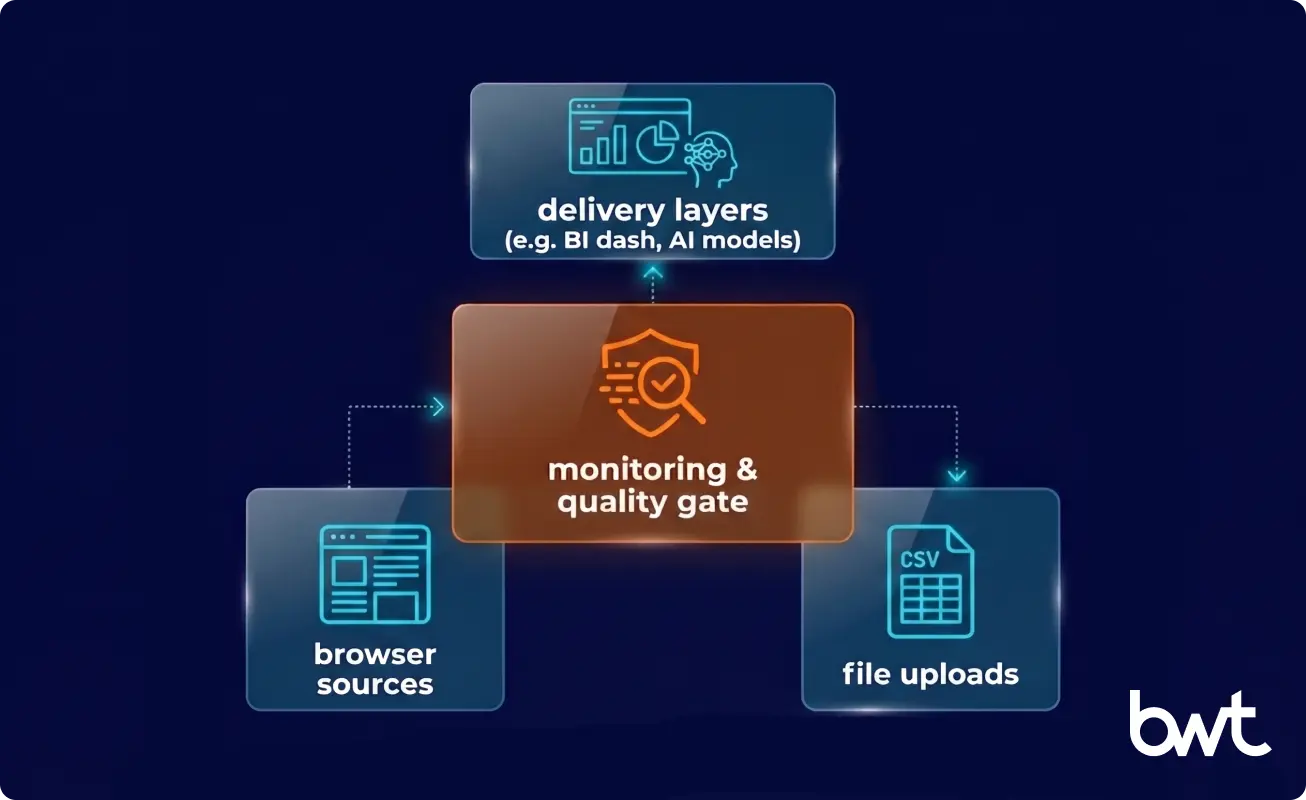

Applications that generate durable value treat extraction as step one. Step two is transformation, parsing page content into typed, validated records. Step three is enrichment, where scraped data joins internal sources, gets deduplicated, and gets tagged by category, sentiment, or urgency.
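The three steps can be sketched in a few lines. This is a minimal illustration, not a description of any particular production pipeline: the `PriceRecord` type, the field names, the validation rules, and the category lookup are all assumptions made for the example.

```python
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation
from typing import Optional


@dataclass(frozen=True)
class PriceRecord:
    """Hypothetical typed record produced by the transformation step."""
    sku: str
    price: Decimal
    currency: str


def transform(raw: dict) -> Optional[PriceRecord]:
    """Step two: parse one extracted row into a typed record, or None if invalid."""
    sku = (raw.get("sku") or "").strip()
    price_text = (raw.get("price") or "").replace(",", "").strip()
    currency = (raw.get("currency") or "").strip().upper()
    if not sku or len(currency) != 3:
        return None
    try:
        price = Decimal(price_text)
    except InvalidOperation:
        return None
    if not price.is_finite() or price <= 0:
        return None
    return PriceRecord(sku=sku, price=price, currency=currency)


def enrich(records: list, category_by_sku: dict) -> list:
    """Step three: deduplicate by SKU (last record wins) and tag a category."""
    latest = {}
    for record in records:
        latest[record.sku] = record
    return [
        {
            "sku": r.sku,
            "price": r.price,
            "currency": r.currency,
            "category": category_by_sku.get(r.sku, "uncategorized"),
        }
        for r in latest.values()
    ]


# Step one (extraction) is assumed to have produced these raw rows.
raw_rows = [
    {"sku": "A-1", "price": "1,299.00", "currency": "eur"},
    {"sku": "A-1", "price": "1,249.00", "currency": "eur"},  # later duplicate
    {"sku": "", "price": "10", "currency": "eur"},            # missing SKU
    {"sku": "B-2", "price": "n/a", "currency": "eur"},        # unparseable price
]
clean = [r for r in map(transform, raw_rows) if r is not None]
products = enrich(clean, {"A-1": "laptops"})
print(products)
```

Invalid rows are dropped at the transformation step here for brevity; a real pipeline would more likely route them to a rejects table so the failure rate can be monitored.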

McKinsey’s State of AI 2025 survey found that 68% of failed AI deployments trace back to data quality and freshness at the input layer. The model layer wasn’t the problem. The pipeline determines whether the AI system works in production.

GroupBWT keeps source captures separate from the cleaned data layer, so parsers can be updated without re-collecting records when a site changes layout. AI agents browsing autonomously need structured output they can reason over, not raw HTML.
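One way to picture that separation is a raw capture store that is written once and a cleaned layer that can be rebuilt from it at any time. The sketch below is illustrative only: the in-memory `RAW_STORE`, the two parser versions, and the sample markup are hypothetical, and the regular expressions stand in for a real HTML parser.

```python
import hashlib
import re
from datetime import datetime, timezone

# Stand-in for durable object storage: capture_id -> raw capture.
RAW_STORE = {}


def save_capture(url: str, html: str) -> str:
    """Persist the page exactly as fetched, keyed by a content hash."""
    capture_id = hashlib.sha256(f"{url}\n{html}".encode("utf-8")).hexdigest()[:16]
    RAW_STORE[capture_id] = {
        "url": url,
        "fetched_at": datetime.now(timezone.utc).isoformat(),
        "html": html,
    }
    return capture_id


def parse_v1(html: str):
    """Parser written for the original layout."""
    match = re.search(r'<span class="price">(\d+(?:\.\d+)?)</span>', html)
    return {"price": float(match.group(1))} if match else None


def parse_v2(html: str):
    """Parser updated after a redesign; falls back to the old layout."""
    match = re.search(r'data-price="(\d+(?:\.\d+)?)"', html)
    return {"price": float(match.group(1))} if match else parse_v1(html)


def rebuild_clean_layer(parser, parser_version: str) -> dict:
    """Re-derive cleaned records from stored captures without re-fetching."""
    clean = {}
    for capture_id, capture in RAW_STORE.items():
        record = parser(capture["html"])
        if record is not None:
            clean[capture_id] = {
                **record,
                "url": capture["url"],
                "parser_version": parser_version,
            }
    return clean


save_capture("https://example.com/p/1", '<span class="price">19.99</span>')
save_capture("https://example.com/p/1", '<div data-price="18.49"></div>')

before = rebuild_clean_layer(parse_v1, "v1")  # misses the redesigned page
after = rebuild_clean_layer(parse_v2, "v2")   # recovers it from the same captures
print(len(before), len(after))
```

Because the second capture was stored even though the first parser could not read it, updating the parser is enough to recover the record.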

“The biggest misconception we encounter is that AI replaces the scraping infrastructure. It doesn’t. It raises the bar for what that infrastructure has to deliver. An LLM parsing raw HTML at inference time is expensive and unpredictable. The right architecture separates collection, transformation, and AI consumption into distinct layers, each with its own monitoring and quality gates.”

— Alex Yudin, Head of Data Engineering, Web Scraping Lead at GroupBWT

How Compliance Actually Plays Out in 2026

Web scraping operates in a legal environment that is still being actively written. Wire compliance into the pipeline from day one rather than bolt it on later.

How it plays out today:

- robots.txt declares paths a site owner would rather not see crawled. Courts have ruled differently on whether ignoring it creates legal exposure. Default move: honor it unless you have a specific legal basis not to.

- GDPR and CCPA kick in the moment scraped data carries personal information. Consult legal counsel before deploying personal data collection at scale. The question is not “is this technically possible” but “do we have a lawful basis.”

- Terms of Service restrictions appear on most major platforms. Enforceability varies. The relevant question is whether the activity creates business or reputational risk.

- Anti-bot systems are now standard at high-traffic sites. Operating within a site’s access patterns without triggering blocks is real engineering work, not a configuration option.
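The robots.txt default from the list above can be enforced in code with Python's standard-library `urllib.robotparser`. The sketch below parses a hypothetical robots.txt that is assumed to be already downloaded; the file contents, the `example-bot` user agent, and the one-second fallback delay are illustrative assumptions, not recommendations for any specific site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content and crawler name, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Crawl-delay: 5
"""
USER_AGENT = "example-bot"


def build_policy(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    policy = RobotFileParser()
    policy.parse(robots_txt.splitlines())
    return policy


def plan_fetch(policy: RobotFileParser, url: str) -> dict:
    """Decide whether to fetch a URL and how long to wait between requests."""
    allowed = policy.can_fetch(USER_AGENT, url)
    delay = policy.crawl_delay(USER_AGENT)  # None if the site declares no delay
    return {"url": url, "allowed": allowed, "delay_seconds": delay or 1}


policy = build_policy(ROBOTS_TXT)
for url in (
    "https://example.com/products/42",
    "https://example.com/checkout/cart",
):
    print(plan_fetch(policy, url))
```

A check like this covers only the robots.txt item. Lawful basis for personal data and Terms of Service review remain legal questions that code cannot answer.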

The tension between the value of the data and the complexity of collecting it is where the best use cases for web scraping are typically found, and where the gap between a scraping script and a production-grade system is widest.

“Enterprise clients come to us after a compliance incident, not before. The pattern repeats. A script that worked at low volume created problems at scale, either because it triggered rate limits that looked like abuse, or because it was collecting personal data without a governance framework. Building the governance layer in from day one is not bureaucracy. It’s the only way the system stays operational two years later.”

— Oleg Boyko, CCO, Enterprise Data Extraction Delivery at GroupBWT

Four Things Production-Grade Scraping Buys You

Data That Lands Inside the Decision Window

Pricing intelligence four hours old is operationally different from pricing intelligence four days old. The response window for most competitive moves (repricing, promotional adjustments, bid management) is measured in hours. Continuous refresh changes what decisions are possible, not just how quickly they get made.

Patterns You Can Read, Not Just Snapshots

Knowing a competitor changed pricing is one thing. Knowing they changed it on 47 specific SKUs in two categories, at a margin suggesting a promotional campaign next week, is pattern-level intelligence a purchased feed will not surface. Record-level data collected over time is the only way to see the pattern.
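As a rough illustration of why record-level history matters, the sketch below compares two daily snapshots and lists which SKUs moved and by how much. The snapshot structure (a mapping of SKU to price) and the sample values are hypothetical.

```python
def price_changes(previous: dict, current: dict) -> list:
    """List SKUs whose price differs between two snapshots, largest cut first."""
    changes = []
    for sku, new_price in current.items():
        old_price = previous.get(sku)
        # Skip SKUs that are new, or whose old price is missing or zero.
        if old_price and old_price != new_price:
            pct = round((new_price - old_price) / old_price * 100, 1)
            changes.append({"sku": sku, "old": old_price, "new": new_price, "pct": pct})
    return sorted(changes, key=lambda change: change["pct"])


# Hypothetical snapshots of one competitor's catalog on consecutive days.
monday = {"SKU-1": 100.0, "SKU-2": 50.0, "SKU-3": 20.0}
tuesday = {"SKU-1": 90.0, "SKU-2": 50.0, "SKU-3": 21.0, "SKU-4": 5.0}

changes = price_changes(monday, tuesday)
for change in changes:
    print(change)
```

An aggregated feed would report at most an average movement across the catalog. The per-SKU diff is what makes it possible to group changes by category or timing.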

Analysts Stop Extracting and Start Thinking

An automotive parts company spent analyst time manually extracting pricing data for 200,000+ SKUs. An automated pipeline reduced that cycle from weeks to hours. Analyst time moved to analysis, and SKU coverage expanded.

Infrastructure That Scales With Source Count

A single scraping script breaks at the first site change. A production system with monitoring, alerting, version control, and replay of historical extractions scales with the business. New sources slot into the existing pipeline rather than requiring a separate project. Our Enterprise Scraping infrastructure is built around that principle: architecture that outlasts the deployment.

Planning to build a business case internally? Our guide on how to identify market opportunities for business growth using web scraping has frameworks and benchmarks suited to that conversation.

Sizing the Build: Volume, Cadence, Sources

A Working Matrix by Scale

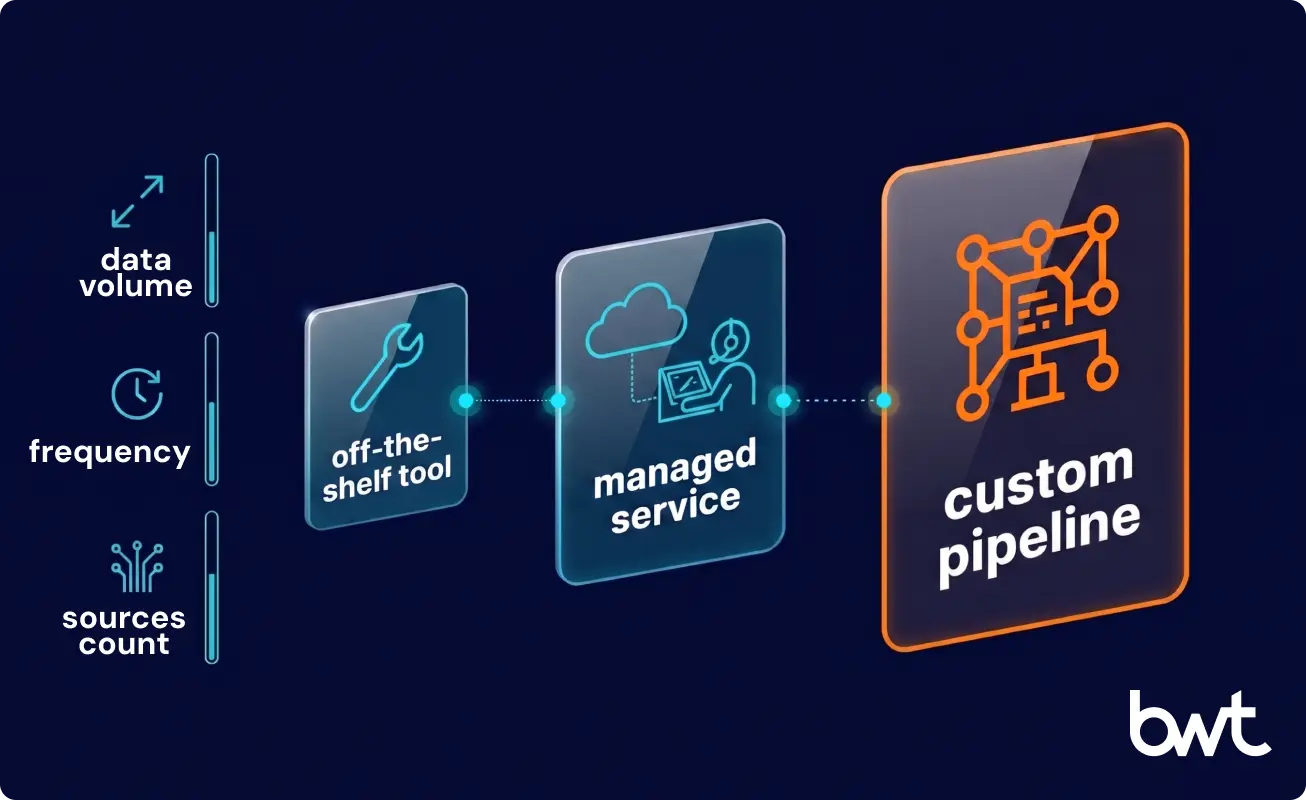

The matrix for web scraping investment decisions maps three variables: data volume, refresh frequency, and number of distinct sources. Those parameters drive infrastructure cost more than any other factor.

| Scope | Refresh Frequency | Source Count | Recommended Path |
| --- | --- | --- | --- |
| Under 10K records/day | Weekly | 1–3 | Off-the-shelf tool |
| 10K–500K records/day | Daily | 3–15 | Managed scraping service |
| Over 500K records/day | Hourly or real-time | 15+ | Custom-built pipeline |

These ranges are approximate. A single highly dynamic source with aggressive bot detection can require more engineering than 20 stable static sources combined.
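The matrix can be read as a simple lookup. The sketch below encodes the three rows and, as an assumption of this example rather than a rule stated in the table, lets the most demanding of the three variables decide the path. The boundary handling (3 and 15 sources fall into the lower tier) is likewise a choice made for illustration.

```python
TIERS = ("Off-the-shelf tool", "Managed scraping service", "Custom-built pipeline")

# Refresh cadence mapped to a tier index, following the table rows.
CADENCE_TIER = {"weekly": 0, "daily": 1, "hourly": 2, "real-time": 2}


def recommended_path(records_per_day: int, cadence: str, source_count: int) -> str:
    """Return the table's recommended path, driven by the most demanding variable."""
    if records_per_day < 10_000:
        volume_tier = 0
    elif records_per_day <= 500_000:
        volume_tier = 1
    else:
        volume_tier = 2

    if source_count <= 3:
        source_tier = 0
    elif source_count <= 15:
        source_tier = 1
    else:
        source_tier = 2

    return TIERS[max(volume_tier, CADENCE_TIER[cadence], source_tier)]


print(recommended_path(5_000, "weekly", 2))
print(recommended_path(200_000, "daily", 10))
print(recommended_path(5_000, "hourly", 2))
```

The third call shows the caveat in practice: a small, two-source project still lands in the top tier once the refresh requirement becomes hourly. Source difficulty, such as aggressive bot detection, is not modeled here.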

Numbers From Live GroupBWT Deployments

- E-Commerce price and product monitoring: 959,139 SKUs daily, 99.5% data completeness

- Fleet pricing across 11 European Automobile marketplaces: daily refresh, 6% selling price uplift

- Tender monitoring across 50+ procurement portals: 25% increase in capture volume vs. manual baseline

- Healthcare CRM migration: 50% reduction in transition time, 35% improvement in record completeness

- AI-powered lead generation for a sports technology client: 25% more qualified leads, 20% higher conversion

Every outcome ties to a specific infrastructure decision: refresh cadence, source coverage, normalization logic. The client’s data environment and team capacity to act on collected data also affect results.

When the Math Works, by Company Size

For a startup or SMB (under 50 employees), break-even on custom infrastructure typically appears when the manual alternative exceeds 20 analyst-hours per week. Below that, off-the-shelf tools like Apify or Bright Data often make more sense.

For a mid-market company (50–500 employees), the calculation shifts toward data freshness and reliability. Once quality failures produce bad pricing decisions or missed procurement opportunities, the cost of inaction becomes measurable. We have seen mid-market clients where a single missed tender exceeded the annual cost of a dedicated scraping system.

For an enterprise, the question is not whether to invest, but how to build to production-grade standards. Anti-bot environments, compliance requirements, and data volume at enterprise scale make DIY approaches a recurring source of incidents.

Off-the-Shelf vs. Custom: Honest Trade-offs

| Dimension | Off-the-Shelf Tools | Custom-Built Pipeline |
| --- | --- | --- |
| Setup time | Hours to days | 2–8 weeks |
| Maintenance burden | On your team | On the vendor |
| Anti-bot capability | Limited, generic | Custom per-source profiles |
| Compliance controls | Manual, bolt-on | Built-in governance layer |

The challenges in web scraping (bot detection, layout changes, rate limiting, data quality drift) are solvable at any scale. The question is whether they are your team’s problem or your vendor’s.

Anatomy of a Production Scraping System

Engineered to Outlast Site Redesigns

Most scraping scripts get written for the site as it exists today. Production systems get written for the site as it will exist over the next two years, accounting for layout updates and anti-bot upgrades.

GroupBWT’s pipelines monitor for content drift. When a site changes its HTML structure, our systems detect the deviation within minutes and flag it before a malformed record reaches a downstream dataset. That is why clients’ datasets stay clean through source changes that would break a simpler setup.
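One common way to detect this kind of drift is to track how often each expected field is populated and compare it with a baseline. The sketch below shows that idea only; it is not a description of GroupBWT's monitoring, and the field names, baseline values, and 10-point drop threshold are assumptions for the example.

```python
REQUIRED_FIELDS = ("title", "price", "availability")


def fill_rates(records: list, fields=REQUIRED_FIELDS) -> dict:
    """Share of records in which each field is present and non-empty."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in fields}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / total
        for field in fields
    }


def detect_drift(baseline: dict, batch: list, max_drop: float = 0.10) -> list:
    """Return fields whose fill rate fell more than max_drop below the baseline."""
    current = fill_rates(batch, tuple(baseline))
    return sorted(
        field for field, expected in baseline.items()
        if expected - current[field] > max_drop
    )


# Hypothetical baseline from earlier healthy runs, then a batch parsed
# after the site moved its price element.
baseline = {"title": 1.0, "price": 0.99, "availability": 0.95}
batch = [
    {"title": "Item A", "price": "", "availability": "in stock"},
    {"title": "Item B", "price": None, "availability": "in stock"},
    {"title": "Item C", "price": "", "availability": "out of stock"},
    {"title": "Item D", "price": "12.50", "availability": "in stock"},
]

drifted = detect_drift(baseline, batch)
print(drifted)
```

A non-empty result would hold the batch back from the downstream dataset and alert whoever owns the parser for that source.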

Discovery, Build, Validation: The Delivery Arc

Three sequential phases run the project. Discovery maps target sources and data contracts. Build develops scrapers, parsers, and transformation logic. Validation runs QA gates, completeness checks, and anomaly monitoring before handoff.
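A validation gate of the kind described can be as small as a function that refuses handoff unless a batch is complete enough and roughly the expected size. The sketch below is an assumption-laden illustration: the `GateResult` type, the 99.5% completeness threshold, and the 5% count tolerance are hypothetical defaults chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class GateResult:
    passed: bool
    reasons: list = field(default_factory=list)


def validation_gate(
    records: list,
    required_fields: tuple,
    expected_count: int,
    min_completeness: float = 0.995,
    count_tolerance: float = 0.05,
) -> GateResult:
    """Block handoff if completeness or record volume looks wrong."""
    reasons = []

    # Completeness check: share of records with every required field filled.
    complete = sum(
        1 for r in records
        if all(r.get(f) not in (None, "") for f in required_fields)
    )
    completeness = complete / len(records) if records else 0.0
    if completeness < min_completeness:
        reasons.append(f"completeness {completeness:.3f} below {min_completeness}")

    # Simple anomaly check: record count far from what this source usually yields.
    if expected_count > 0:
        deviation = abs(len(records) - expected_count) / expected_count
        if deviation > count_tolerance:
            reasons.append(f"record count off by {deviation:.0%}")

    return GateResult(passed=not reasons, reasons=reasons)


good = [{"sku": f"S{i}", "price": "9.99"} for i in range(200)]
bad = good[:198] + [{"sku": "S198", "price": ""}, {"sku": "", "price": "1"}]

print(validation_gate(good, ("sku", "price"), expected_count=200))
print(validation_gate(bad, ("sku", "price"), expected_count=200))
print(validation_gate(good[:100], ("sku", "price"), expected_count=200))
```

Returning the reasons alongside the pass/fail flag keeps the gate useful for alerting: the message says which check failed, not just that the batch was rejected.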

For a mid-complexity project (5–15 sources, daily refresh, structured output), the cycle runs 3 to 6 weeks. For the digital shelf deployment across 100+ marketplaces, we delivered a working prototype in week two and reached full production coverage within six weeks.

Scale Is an Engineering Problem, Not Scripting

The applications that produce the most durable value share a trait. They get treated as ongoing data infrastructure, not one-time pulls. The same disciplines applied to any production system (versioning, monitoring, governance, failure recovery) apply here.

“Scale in scraping is a systems problem, not a scripting problem. A team good at writing scraping code will hit a wall at 50 sources or a few million records per day. Not because their code is wrong, but because monitoring, state management, failure recovery, and source versioning require a different engineering discipline. That gap is what we close.”

— Dmytro Naumenko, CTO, Cloud Architecture and Delivery at GroupBWT

Starting Points for Teams Scoping This Now

Companies winning on pricing, competitive intelligence, and market sensing in 2026 treat external data collection as infrastructure, not a quarterly reporting project.

If your team is scoping a data capability, or has a scraping setup that has become a maintenance burden, the next step is a scoped conversation about sources, volume, and what the data needs to feed. We have run that conversation 140+ times. It rarely takes more than 30 minutes to identify whether a custom build makes sense.

Start with our web scraping services overview or reach out for a technical scoping call.

The highest-frequency applications across GroupBWT’s client base are price and product monitoring for E-Commerce and Retail, competitive intelligence for sales teams, tender aggregation for enterprises, and market trend analysis for Beauty & Personal Care and Automobile. A fast-growing category is scraping to feed AI systems: LLM fine-tuning datasets, RAG pipelines, and structured contracts for AI agents.

The legality of web scraping depends on what is being scraped, how it is used, and which jurisdiction applies. Scraping publicly accessible data (prices, product descriptions, public filings, job listings) is generally legal in most jurisdictions, though boundaries continue to be refined through litigation. GDPR and CCPA apply when scraped data includes personal information. Businesses deploying scraping at scale should have legal counsel review the data types and sources before production.

GroupBWT focuses on 7 primary industries (E-Commerce, Retail, Beauty & Personal Care, Travel, Real Estate, Automobile, Telecom) because they all have large volumes of publicly accessible structured data that changes frequently and directly affects pricing, inventory, or positioning. Adjacent verticals like financial services, healthcare, sports technology, and nonprofits also generate strong applications around regulatory monitoring and lead intelligence.

AI and web scraping reinforce each other. AI improves scraping through LLM-powered parsers that handle semi-structured content, navigation flows, and natural-language descriptions traditional extractors fail on. Scraped data in turn feeds AI systems, providing the fresh external context that RAG systems and AI pricing engines need to function reliably.

Off-the-shelf tools work when source sites are stable, data volume is under 10,000 records per day, and your team can maintain scrapers. They break at scale because anti-bot systems, layout drift, and data quality issues need engineering responses general-purpose tools cannot handle. A custom solution makes sense when data is time-sensitive, anti-bot environments are sophisticated, compliance governance is required, or the downstream consumer has strict data quality requirements.
