
Introduction

Fragmentation looks like a scraping problem. It isn’t. Scrapers are the easy part. The hard part is keeping 100+ unstable sources unified and auditable while portals change faster than your team can patch them. Everything below is what we learned from operating a UK procurement intelligence platform that aggregates 4.3M tender records from 100+ sources.

Data Engineering: From Raw Web to Data Product

We develop and manage custom data solutions, powered by proven experts, to ensure the fastest delivery of structured data from sources of any size and complexity.

We offer:

- Custom Web Scraping & Development

- 15+ Years of Engineering Expertise

- AI-Driven Data Processing & Enrichment

Why Fragmentation Is an Architectural Problem

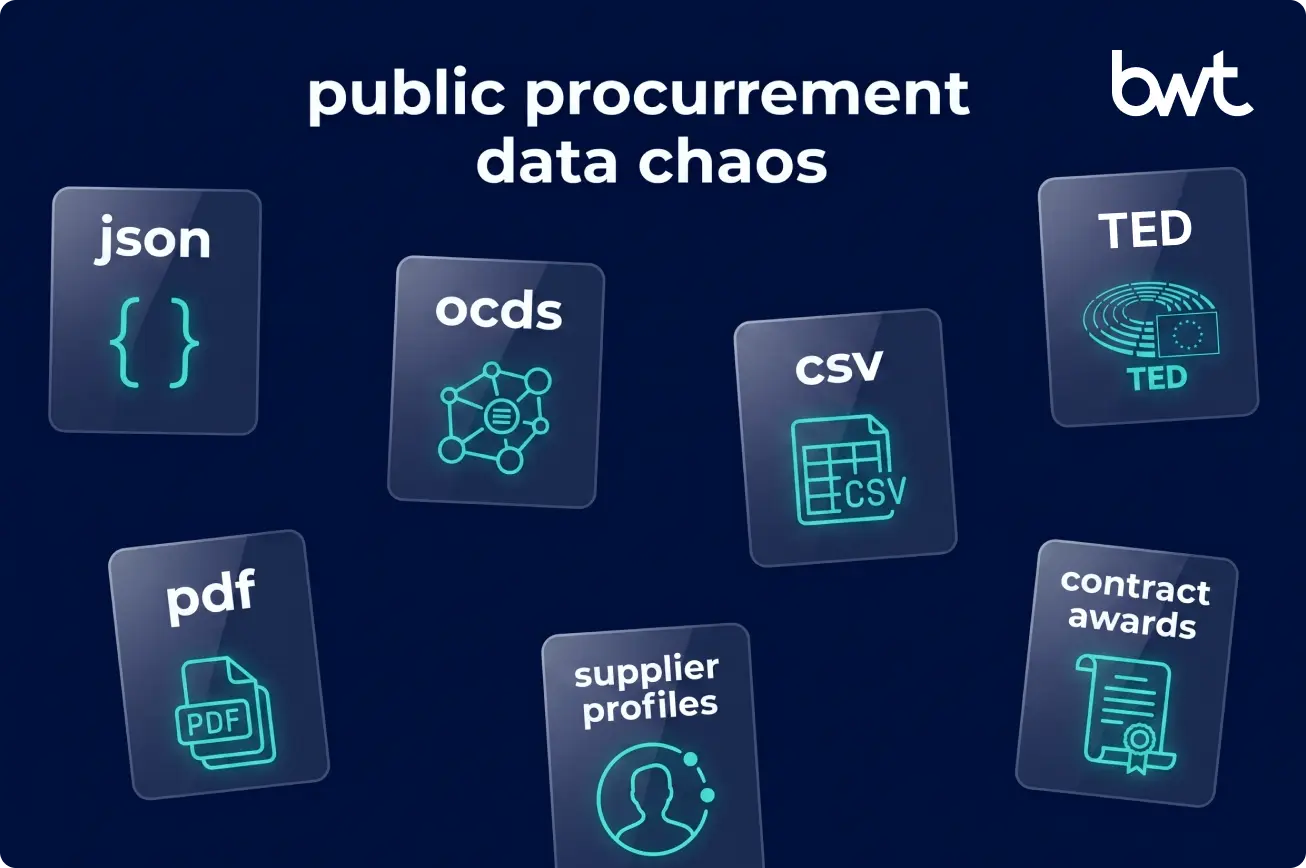

Every government, region, and agency runs procurement slightly differently — central federal portals, dozens of regional authority sites, defense and healthcare platforms, each with its own schema, release cadence, and naming conventions.

At GroupBWT, aggregation means collecting these feeds, normalizing them into a single schema, and delivering them so that an analyst never has to care whether a notice came from a local council or a national aggregator. We normalize to the Open Contracting Data Standard (OCDS), an open specification that models the full contracting lifecycle (planning → tender → award → contract → implementation).

For intelligence platforms, compliance teams, and bidders, the payoff is a single source of truth and no missed opportunities. The cost of not aggregating is an analyst opening a CSV on Monday, checking TED on Wednesday, and watching a multi-million notice slip past because a regional portal changed its HTML on Tuesday night.

Key Challenges in Tender Data Aggregation

Fragmented Portals and Inconsistent Formats

In production, “building for continuous portal change” is the baseline. Portals break constantly, and your aggregation system must absorb that:

- Upstream bugs you inherit: A portal introduces an HTML-entity bug that blocks its own search results — which means it also blocks your scraper’s keyword filter. You now own their bug. Fix: add a parallel query path around the broken entity so ingestion keeps flowing until the portal fixes itself (which can take weeks).

- Silent drops: Platforms like Defence Online stop publishing releases through the standard feed without warning. We implemented an FTS API fallback to backfill 350–440 releases a day and a distribution-anomaly alert that pages on-call when daily release counts drop more than 2σ from the 30-day mean.

- Redirects and access changes: The NCHA In-tend portal redirects to sell2.in-tend, requiring instant resource reconfiguration. Atamis Defra tightens access restrictions — session logic, cookie flow, or IP ranges change without notice, and ingestion stops until the scraper adapts.
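The 2σ alert mentioned in the list above can be sketched in a few lines of Python. This is a minimal illustration, not the production alerting code: the function name, the one-sided "drop only" check, and the sample history are assumptions made for the example.

```python
from statistics import mean, stdev

def is_release_count_anomalous(history, today, sigmas=2.0):
    """Return True when today's count is more than `sigmas` standard
    deviations below the mean of `history` (e.g. the last 30 daily counts)."""
    if len(history) < 2:
        return False  # a standard deviation needs at least two points
    mu = mean(history)
    sd = stdev(history)
    if sd == 0:
        return today < mu  # flat history: any drop is suspicious
    return today < mu - sigmas * sd

# Hypothetical 30-day history hovering around 400 to 440 releases a day.
history = [400 + (i % 5) * 10 for i in range(30)]
print(is_release_count_anomalous(history, 395))  # a normal day
print(is_release_count_anomalous(history, 120))  # a silent drop
```

In practice the result would feed whatever paging system the on-call rotation uses; the check itself stays this small.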

Metadata Gaps and Missing Contract Logic

Not all portals provide CPV codes, buyer IDs, or even clear contract values. Where a portal omits the buyer name or publishes a shell alias, we enrich against Companies House (rate-capped at 600 requests per 5 minutes in a dedicated augmentation service), maintaining an internal table of verified names and “also-known-as” mappings. Every enrichment is recorded in a separate field — the original value stays untouched.
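To make the rate cap and the "original stays untouched" rule concrete, here is a hedged Python sketch. The class, the `buyer_name_enriched` field name, and the injectable clock are illustrative assumptions; the only figures taken from the text are 600 requests per 5 minutes.

```python
import time
from collections import deque

class SlidingWindowLimiter:
    """Allow at most `max_calls` in any rolling `window_seconds` period."""

    def __init__(self, max_calls=600, window_seconds=300.0, clock=time.monotonic):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.clock = clock
        self._calls = deque()  # timestamps of accepted calls, oldest first

    def try_acquire(self):
        """Record a call and return True if the budget allows it, else False."""
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self._calls and now - self._calls[0] >= self.window_seconds:
            self._calls.popleft()
        if len(self._calls) >= self.max_calls:
            return False
        self._calls.append(now)
        return True

def enrich_buyer(record, verified_name):
    """Return a copy with the enrichment in its own field (hypothetical name);
    the original buyer value is left exactly as the portal published it."""
    enriched = dict(record)
    enriched["buyer_name_enriched"] = verified_name
    return enriched
```

A caller would check `try_acquire()` before each lookup and requeue the record when it returns False, so a burst of incomplete notices never exceeds the cap.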

Threshold Segmentation and Legal Risk

Different procurement rules apply depending on contract value thresholds (e.g., under the UK Procurement Act 2023). Mixing above-threshold and below-threshold notices without proper tagging destroys the dataset’s analytical value, because the legal requirements, timelines, and publication duties for each are vastly different.

Why TED Alone Is Not Enough

Tenders Electronic Daily (TED) is the central registry for European public procurement, but relying solely on TED data is a mistake. TED only captures above-threshold contracts. The vast majority of procurement volume, by count, occurs below these thresholds on local portals.

How to Aggregate Tender Data: A 6-Step Playbook

Step 1. Build a Source Matrix Before Writing Code

Document every target portal, its format (HTML, XML, JSON API), its update frequency, its notice types (Prior Information Notices, Contract Awards, framework call-offs), and its legal jurisdiction. This matrix is the spec — scrapers are the implementation.
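One way to keep the matrix machine-readable is a small typed structure that scrapers and schedulers can both read. The sketch below is an assumption about shape only: the portal names are placeholders and the field names are invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum

class PayloadFormat(Enum):
    HTML = "html"
    XML = "xml"
    JSON_API = "json_api"

@dataclass(frozen=True)
class SourceEntry:
    portal: str                 # placeholder identifier for the portal
    payload_format: PayloadFormat
    update_frequency: str       # e.g. "hourly", "nightly", "bursty"
    notice_types: tuple         # e.g. ("prior_information_notice", "contract_award")
    jurisdiction: str

# Two made-up rows; a real matrix would have one row per target portal.
SOURCE_MATRIX = [
    SourceEntry("example-council-portal", PayloadFormat.HTML, "nightly",
                ("contract_award",), "UK"),
    SourceEntry("example-national-api", PayloadFormat.JSON_API, "hourly",
                ("prior_information_notice", "contract_award"), "UK"),
]

def sources_by_format(matrix, fmt):
    """List portal identifiers that publish in the given payload format."""
    return [entry.portal for entry in matrix if entry.payload_format is fmt]

print(sources_by_format(SOURCE_MATRIX, PayloadFormat.HTML))
```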

Step 2. Pick One Normalization Standard and Commit

Raw data has little value until it is aggregated into a unified model. OCDS is the industry baseline and worth adopting even when it doesn’t fit perfectly: you extend it, you don’t replace it.

In our UK procurement system, one of the first architectural calls was how to generate OCIDs across 100+ heterogeneous sources. OCID — Open Contracting ID — is the unique identifier OCDS assigns to a single contracting process across all its notices.

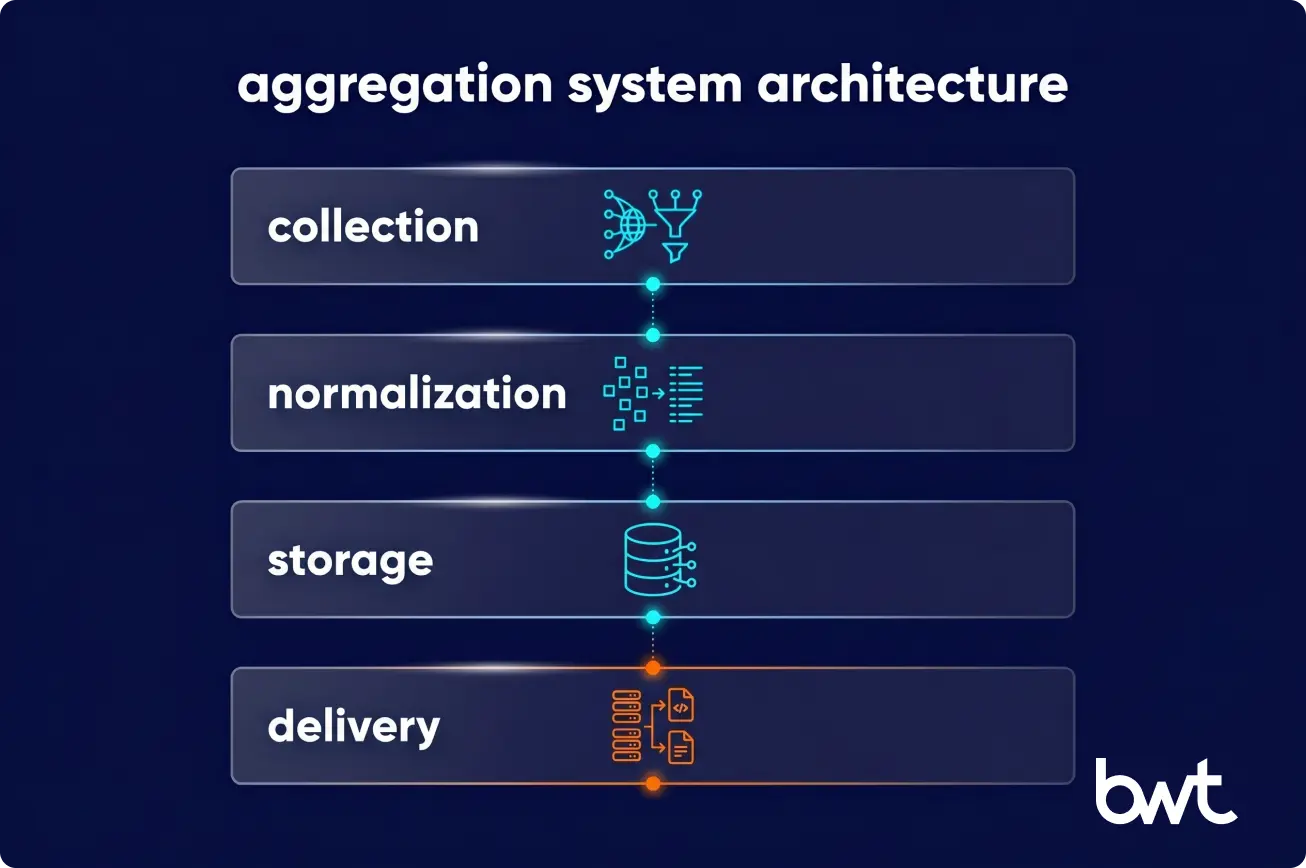
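For readers new to OCIDs: an OCID is a publisher prefix followed by a local identifier for the contracting process. The sketch below shows one hypothetical way to derive a stable local identifier from a source key and the portal's own reference. The prefix is a placeholder, and the hashing scheme is an assumption for illustration, not the scheme our system uses.

```python
import hashlib

# Placeholder only: a real OCID prefix is registered per publisher.
OCID_PREFIX = "ocds-xxxxxx"

def make_ocid(source_key, native_id, prefix=OCID_PREFIX):
    """Build a deterministic OCID from a source key and the portal's own ID.

    The same (source, native ID) pair always yields the same OCID, so every
    notice in one contracting process lands under one identifier.
    """
    cleaned = native_id.strip()
    if not cleaned:
        raise ValueError("native_id must not be empty")
    digest = hashlib.sha256(f"{source_key}:{cleaned}".encode("utf-8")).hexdigest()[:16]
    return f"{prefix}-{source_key}-{digest}"

print(make_ocid("portal-a", "REF/2024/001"))
```

Including the source key keeps two portals that reuse the same reference number from colliding.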

Step 3. Design the 4-Layer Split Before the First Scraper

The mistake most internal teams make: a monolith where one scraper fetches, parses, saves, and serves. It works for 5 portals and collapses at 50. In production, we enforce a strict 4-layer split — not because it’s elegant, but because a crashed scraper, a schema change, or a storage migration must not take down the other three concerns.

Step 4. Tag Every Record with Jurisdiction and Threshold

Your pipeline must tag records with source jurisdiction, procurement regime (above/below threshold, framework call-off, concession), and buyer type. These tags are cheap at ingestion and impossible to reconstruct later.
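A minimal sketch of ingestion-time tagging follows. It covers only the value-based part of the regime (framework call-offs and concessions would need their own rules), and the threshold is passed in by the caller because the legally correct figure depends on jurisdiction, buyer type, and date. All names here are illustrative assumptions.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional

@dataclass(frozen=True)
class RegimeTags:
    jurisdiction: str
    regime: str       # "above_threshold", "below_threshold", or "unknown"
    buyer_type: str

def tag_record(jurisdiction: str, buyer_type: str,
               value: Optional[Decimal], threshold: Decimal) -> RegimeTags:
    """Tag a notice at ingestion; a missing value is tagged, never guessed."""
    if value is None:
        regime = "unknown"
    elif value >= threshold:
        regime = "above_threshold"
    else:
        regime = "below_threshold"
    return RegimeTags(jurisdiction, regime, buyer_type)

# The threshold below is an arbitrary number for the example, not a legal figure.
print(tag_record("UK", "local_authority", Decimal("250000"), Decimal("100000")))
```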

Step 5. Automate Monitoring for Schema Drift, Not Just HTTP Errors

Writing scrapers is easy; maintaining them is a nightmare. A common failure mode is the “side-project that becomes a full-time job for multiple engineers.” HTTP 503 alerts catch outages. Schema-drift alerts catch the silent failures — a renamed field, a new HTML wrapper, a CPV column that moved one cell right. Alert on distribution anomalies, not just status codes.

“The first sign that a procurement pipeline is in trouble isn’t an alert — it’s a QA analyst opening a portal manually and finding a contract that isn’t in our system. By then, the monitoring gap has been open for days. We wire distribution-anomaly alerting before we finish the ingestion layer, not after.”

— Alex Yudin, Head of Scraping at GroupBWT.
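One simple way to catch a renamed field or a shifted column is to compare per-field fill rates against a baseline. The Python sketch below assumes records are plain dictionaries and uses an arbitrary 20-point drop as the alert threshold; both are illustrative choices rather than our production settings.

```python
def fill_rates(records, fields):
    """Fraction of records carrying a non-empty value for each field."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in fields}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / total
        for field in fields
    }

def drifted_fields(baseline, current, max_drop=0.2):
    """Fields whose fill rate fell by more than `max_drop` versus baseline."""
    return sorted(
        field for field, base in baseline.items()
        if base - current.get(field, 0.0) > max_drop
    )

# Hypothetical baseline and a batch where the CPV column has gone missing.
baseline = {"cpv": 0.95, "buyer_name": 0.99}
batch = [
    {"buyer_name": "Council A", "cpv": ""},
    {"buyer_name": "Council B", "cpv": None},
    {"buyer_name": "Council C", "cpv": "45000000"},
]
print(drifted_fields(baseline, fill_rates(batch, ["cpv", "buyer_name"])))
```

Every request in this batch could return HTTP 200 and the check would still fire, which is the point of alerting on distributions rather than status codes.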

Step 6. Build the Delivery API Before the Dashboard

Dashboards are downstream of a good API. Build the API first, then let the dashboard, the alerts, and the third-party integrations consume it.

Designing a Tender Data Aggregation System

The 4-Layer Architecture

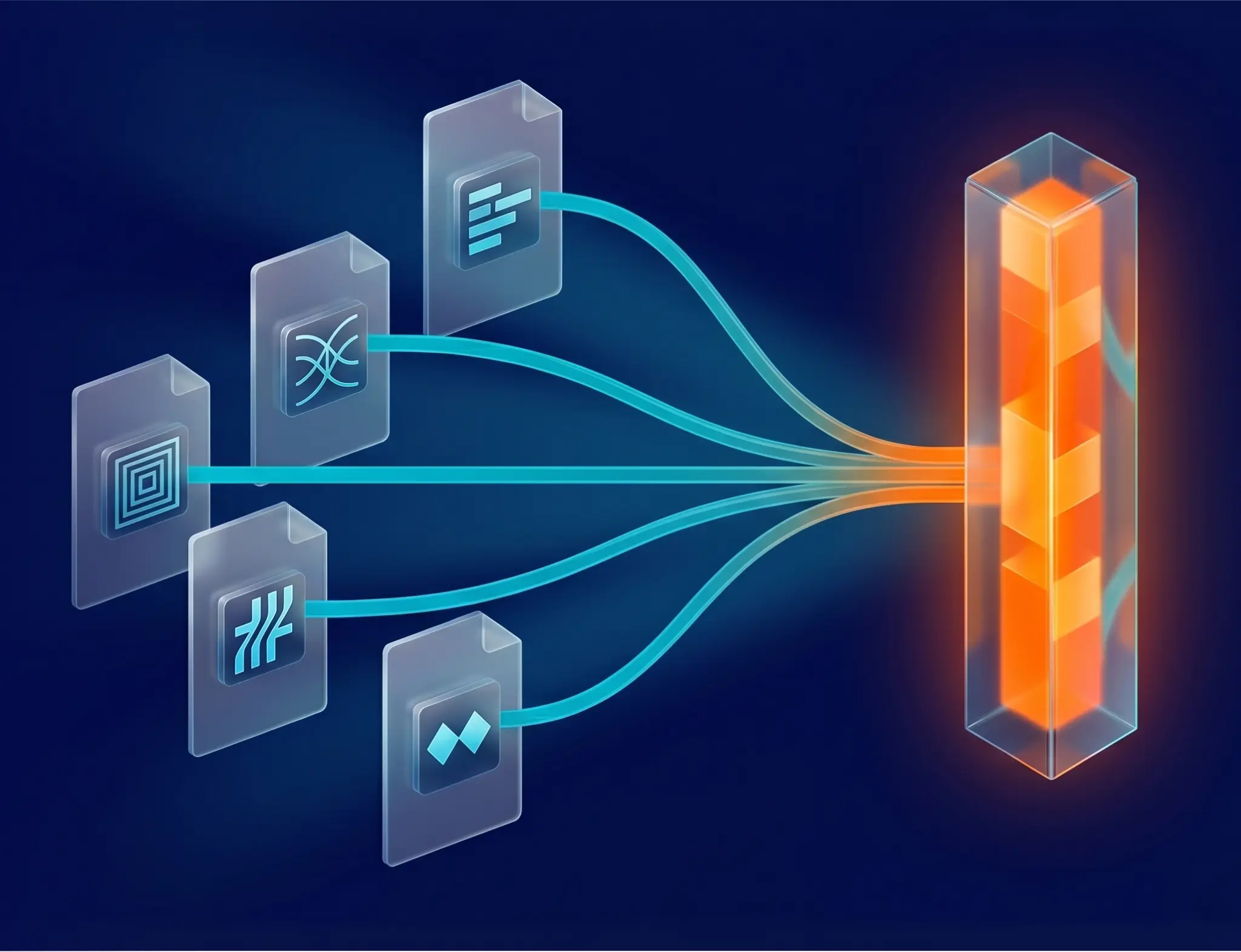

- Collection. Dedicated workers responsible only for fetching raw payloads — HTML, XML, JSON, file attachments. No parsing beyond what’s needed to decide “did we get a full response or not.”

- Normalization. The parsing layer that translates raw payloads into the unified OCDS model. Every parser is isolated per source.

- Storage. The Data Saver layer handles deduplication, versioning, and history. Raw payloads and HTML snapshots are persisted alongside normalized records.

- Delivery. The Tender API (public and internal) that serves clean data to end-user applications, subscriber alerts, and third-party integrations.
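The value of the split is easiest to see in a toy version. The sketch below uses an in-memory queue as a stand-in for the message bus and dictionaries as records; every name is hypothetical. What it illustrates is that a parser failure in the normalization step never loses the raw payload and never stops the other sources.

```python
import json
from queue import Queue

def collect(bus, source, payload):
    """Collection: enqueue the raw payload untouched; no parsing here."""
    bus.put({"source": source, "payload": payload})

def normalize(message):
    """Normalization: turn one raw message into a unified record."""
    data = json.loads(message["payload"])
    return {"source": message["source"], "title": data["title"].strip()}

class Store:
    """Storage: keeps every raw message and deduplicates normalized records."""

    def __init__(self):
        self.raw = []
        self.records = {}

    def save_raw(self, message):
        self.raw.append(message)

    def save_record(self, record):
        self.records.setdefault((record["source"], record["title"]), record)

def deliver(store):
    """Delivery: a read-only view over the stored records."""
    return sorted(r["title"] for r in store.records.values())

def drain(bus, store):
    """Process queued messages; return the sources whose parser failed."""
    failed = []
    while not bus.empty():
        message = bus.get()
        store.save_raw(message)  # raw payload is kept even if parsing fails
        try:
            store.save_record(normalize(message))
        except (ValueError, KeyError):
            failed.append(message["source"])
    return failed
```

When a portal changes its markup, only that source shows up in the failure list; the raw payload is already stored and can be replayed once the parser is fixed.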

Learn more about architecture data pipeline best practices.

Production Stack (For Reference)

This stack is not a recommendation for every project. It shows how one production system was assembled around reliability, not trendiness.

- Scrapers: Python + Scrapy, Poetry for packaging, Pydantic for payload validation, kombu for messaging integration.

- API & business logic: PHP 8 / Symfony services — API Gateway, Auth, Tender, Management, Data Saver.

- Messaging: self-hosted RabbitMQ (“Data Bus”) — not Kafka, not Celery; the simpler primitive wins in ops.

- Storage: PostgreSQL (operational data), MongoDB (OCDS tender store), S3 (raw payloads, HTML snapshots, tender attachments), Redis-stack (vector similarity search for deduplication candidates).

- Search: Sphinx, incrementally indexed — tuned for CPV-hierarchy queries and multilingual full-text.

- Infrastructure: AWS, Terraform + Terragrunt, ArgoCD + Helm for deployments.

- Observability: Sentry (errors), Grafana Loki (logs), Prometheus (metrics).

Fault Tolerance and Monitoring

When an external portal goes offline, the collection service pauses and retries with a backoff rather than crashing the pipeline. Schema-drift alerts, distribution anomalies (2σ deviation from 30-day baseline), and per-scraper success-rate dashboards all feed the same on-call rotation.
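The pause-and-retry behaviour can be sketched as exponential backoff around a fetch callable. This is a generic illustration under assumptions: `fetch` is any zero-argument function that raises `ConnectionError` on failure, and the delays are example values.

```python
import time

def fetch_with_backoff(fetch, attempts=5, base_delay=1.0, max_delay=60.0,
                       sleep=time.sleep):
    """Call `fetch` until it succeeds, doubling the pause after each failure.

    Re-raises the last ConnectionError once `attempts` is exhausted, so the
    caller can mark the source as paused instead of crashing the pipeline.
    """
    for attempt in range(attempts):
        try:
            return fetch()
        except ConnectionError:
            if attempt == attempts - 1:
                raise
            sleep(min(max_delay, base_delay * 2 ** attempt))
```

Passing `sleep` as a parameter keeps the function testable without real waiting.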

Schema Design for Structured Procurement Data

Adopting or adapting OCDS is industry best practice. It forces you to think in terms of the entire contracting lifecycle (planning → tender → award → contract → implementation) rather than isolated documents. Extend it where needed: we added a “Framework Agreement Timeframe” field to model UK public-sector framework contracts properly (see “Framework Agreements as a First-Class Concept” below).

Audit-Ready Data Flow and Traceability

Every aggregated record links back to the original raw payload — HTML snapshot, JSON response, and any attached tender documents (ITT packs, specifications, Q&A) stored in S3 at ingestion time. If a client questions a contract value six months later, we can show the exact bytes the portal served, not a reconstruction.
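A lineage link can be as simple as a content hash plus a storage key recorded next to the normalized record. The sketch below assumes a hypothetical key layout and field names; it does not call S3, it only shows what gets attached to the record.

```python
import hashlib
from datetime import datetime, timezone

def snapshot_key(source, fetched_at, payload):
    """Object-store key for the raw bytes (the layout is an assumption)."""
    digest = hashlib.sha256(payload).hexdigest()
    return f"raw/{source}/{fetched_at:%Y/%m/%d}/{digest}"

def with_lineage(record, source, source_url, fetched_at, payload):
    """Return a copy of `record` that points at the exact bytes it came from."""
    out = dict(record)
    out["lineage"] = {
        "source_url": source_url,
        "fetched_at": fetched_at.isoformat(),
        "raw_key": snapshot_key(source, fetched_at, payload),
        "sha256": hashlib.sha256(payload).hexdigest(),
    }
    return out

fetched = datetime(2024, 1, 15, 3, 0, tzinfo=timezone.utc)
record = with_lineage({"title": "Road repairs"}, "portal-a",
                      "https://portal.example/notice/1", fetched,
                      b"<html>notice</html>")
print(record["lineage"]["raw_key"])
```

Because the hash is computed over the stored bytes, an auditor can verify that the snapshot has not changed since ingestion.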

Centralizing Tender Data for Cross-Role Access

Why Centralization Matters After Aggregation

Once you have 4.3M tender records, the challenge shifts from data engineering to data delivery. Sales teams need real-time alerts, analysts need historical trends, and compliance teams need audit trails. One dataset, three very different access patterns.

Searchable Tender Data Interfaces

A unified database needs a search index built for CPV-hierarchy queries, fuzzy multilingual matching, and near-instant full-text across millions of records. In our UK system, Sphinx handles this — rebuilt incrementally so a search hit on Monday morning reflects a notice ingested overnight.

Centralize via API and GUI, Not Email Alone

Delivery runs through a PHP 8 / Symfony API layer (API Gateway → Auth → Tender → Management), feeding an operator interface rendered in Twig, plus a public Tender API for third-party integrations and an outbound daily-alert feed for 8K–15K subscribers delivered by 5 AM London time. The GUI is the primary product surface — email is the delivery channel of last resort, not the first.

Aligning Centralized Logic with Aggregation Rules

The business logic used to filter data on the frontend must match the normalization rules applied at ingestion. Duplicate filtering logic drifts; centralize it in the API layer.

Automating Tender Data Collection and Standardization

Automated Source Monitoring

A system delivering 8K–15K subscriber alerts by 5 AM London time cannot rely on manual triggers. Schedulers run continuously, tailored to each portal’s release windows — some publish nightly, some hourly, some in unpredictable bursts.

Standardize Structure at Ingestion

Standardization must happen before the data hits the main database. Fields like Publication Date and Deadline are converted to UTC ISO format immediately. Currency, CPV codes, and buyer identifiers go through per-source normalization rules.
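As a small illustration of the date rule, the Python sketch below converts an offset-aware timestamp to UTC ISO 8601 and refuses timestamps with no offset instead of guessing one. The function name and the default format string are assumptions; real portals need per-source formats.

```python
from datetime import datetime, timezone

def to_utc_iso(text, fmt="%Y-%m-%dT%H:%M:%S%z"):
    """Parse a timestamp that carries a UTC offset and return UTC ISO 8601.

    Naive timestamps are rejected: the per-source rules must supply the
    portal's time zone explicitly rather than let the pipeline assume one.
    """
    parsed = datetime.strptime(text, fmt)
    if parsed.tzinfo is None:
        raise ValueError(f"timestamp has no UTC offset: {text!r}")
    return parsed.astimezone(timezone.utc).isoformat()

# A 17:00 deadline published with a +01:00 offset is 16:00 UTC.
print(to_utc_iso("2024-07-15T17:00:00+01:00"))
```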

Compliance Validation During Collection

If a high-value tender is scraped but missing a mandatory buyer name, the system flags it for human review or falls back to Companies House enrichment — rather than pushing corrupted data downstream.
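The gate described above can be sketched as a function that returns a status alongside the record. The status labels, the `buyer_name_enriched` field, and the `lookup_buyer` callback (standing in for the enrichment service) are all hypothetical names.

```python
def validate_buyer(record, lookup_buyer=None):
    """Return (status, record): 'ok', 'enriched', or 'needs_review'.

    `lookup_buyer` is an optional callable standing in for an enrichment
    service; it takes the record and returns a verified name or None.
    """
    if record.get("buyer_name"):
        return "ok", record
    verified = lookup_buyer(record) if lookup_buyer else None
    if verified:
        # Enrichment goes into its own field; the empty original is preserved.
        return "enriched", {**record, "buyer_name_enriched": verified}
    return "needs_review", record

status, _ = validate_buyer({"title": "Hospital refit", "buyer_name": ""})
print(status)
```

Only records with status "ok" or "enriched" would continue downstream; the rest wait in a review queue.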

Scaling Safely

Scaling means realistic request pacing and proxy rotation, not aggressive scraping. Public procurement data is generally of public interest, but national portals vary widely in what they explicitly permit — we review each source’s terms and legal basis individually. The rule isn’t “scrape politely”; it’s “don’t ingest anything you can’t defend in writing if the portal owner asks.”

EU and International Tender Aggregation Considerations

TED, eForms, and National Portal Complexity

The transition to eForms replaced prior TED notice formats — a structural change that broke integrations across the industry and required parser updates in every EU-sourced pipeline. This is the baseline reality of public procurement data aggregation, not an exception.

Framework Agreements as a First-Class Concept

UK public sector — NHS, HealthTrust, STFC — is built around framework agreements: long-running master contracts that spawn individual call-offs over years. A system that treats each call-off as a standalone tender will misreport both market share and buyer activity. OCDS doesn’t model framework timing well out of the box; we extend the standard with a custom “Framework Agreement Timeframe” field so analysts can trace a call-off back to its master framework and see when that framework expires.

Cross-Border Supplier Mapping: Flag, Don’t Merge

A major challenge is that “Siemens AG”, “Siemens Ltd”, and “Siemens Mobility” appear across multiple portals and notices. Our rule is to flag, not merge: we surface these as related records via OCDS extensions, but we never auto-collapse them. Merging distinct legal entities silently — even when the brand is identical — corrupts audit trails and can mis-attribute award values. Entity resolution is a reviewer’s tool, not an ingestion step.
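In code, "flag, don’t merge" means the output has exactly as many supplier records as the input, each carrying pointers to its possible relatives. The sketch below uses a deliberately crude brand key (the first word of the name) purely for illustration; a real matcher would be more careful, and the `related_ids` field name is an assumption.

```python
from collections import defaultdict

def brand_key(name):
    """Crude, hypothetical brand key: the first word of the name, lowercased."""
    parts = name.split()
    return parts[0].lower() if parts else ""

def flag_related(suppliers):
    """Attach `related_ids` to each supplier without merging any records."""
    groups = defaultdict(list)
    for supplier in suppliers:
        groups[brand_key(supplier["name"])].append(supplier["id"])
    return [
        {**s, "related_ids": [i for i in groups[brand_key(s["name"])] if i != s["id"]]}
        for s in suppliers
    ]

suppliers = [
    {"id": 1, "name": "Siemens AG"},
    {"id": 2, "name": "Siemens Ltd"},
    {"id": 3, "name": "Siemens Mobility"},
    {"id": 4, "name": "Other Supplier Ltd"},
]
for row in flag_related(suppliers):
    print(row["name"], row["related_ids"])
```

Award values stay attached to the legal entity that won them; the relationship is a hint for a reviewer, not a rewrite of the data.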

Legal Implications of International Aggregation

Data collection must comply with local data protection laws (GDPR) and database rights, especially when aggregating contract award notices that contain personal data (named signatories, contact points, SME director details).

Build, Buy, or Partner: How to Decide

| Criterion | Build in-house | Buy off-the-shelf feed | Partner |
| --- | --- | --- | --- |
| Source coverage | Full control | Vendor-defined (often TED-only) | Custom, expandable |
| Time to first data | 6–9 months | 1–2 weeks | 2–3 months |
| Below-threshold UK/EU coverage | Possible, expensive | Rarely | Yes |
| Schema changes (eForms, Procurement Act) | Your problem | Vendor’s timeline | Negotiated SLA |
| Maintenance cost at 100+ portals | 2–3 FTE ongoing | Subscription | Fixed engagement |
| Audit-ready lineage | You design it | Vendor-dependent | Built in |

Rule of thumb: build if aggregation is your product; buy if you need TED coverage only; partner if you need deep below-threshold or cross-border coverage without burning your engineering roadmap on scraper maintenance.

Best Practices for Building a Tender Aggregation System

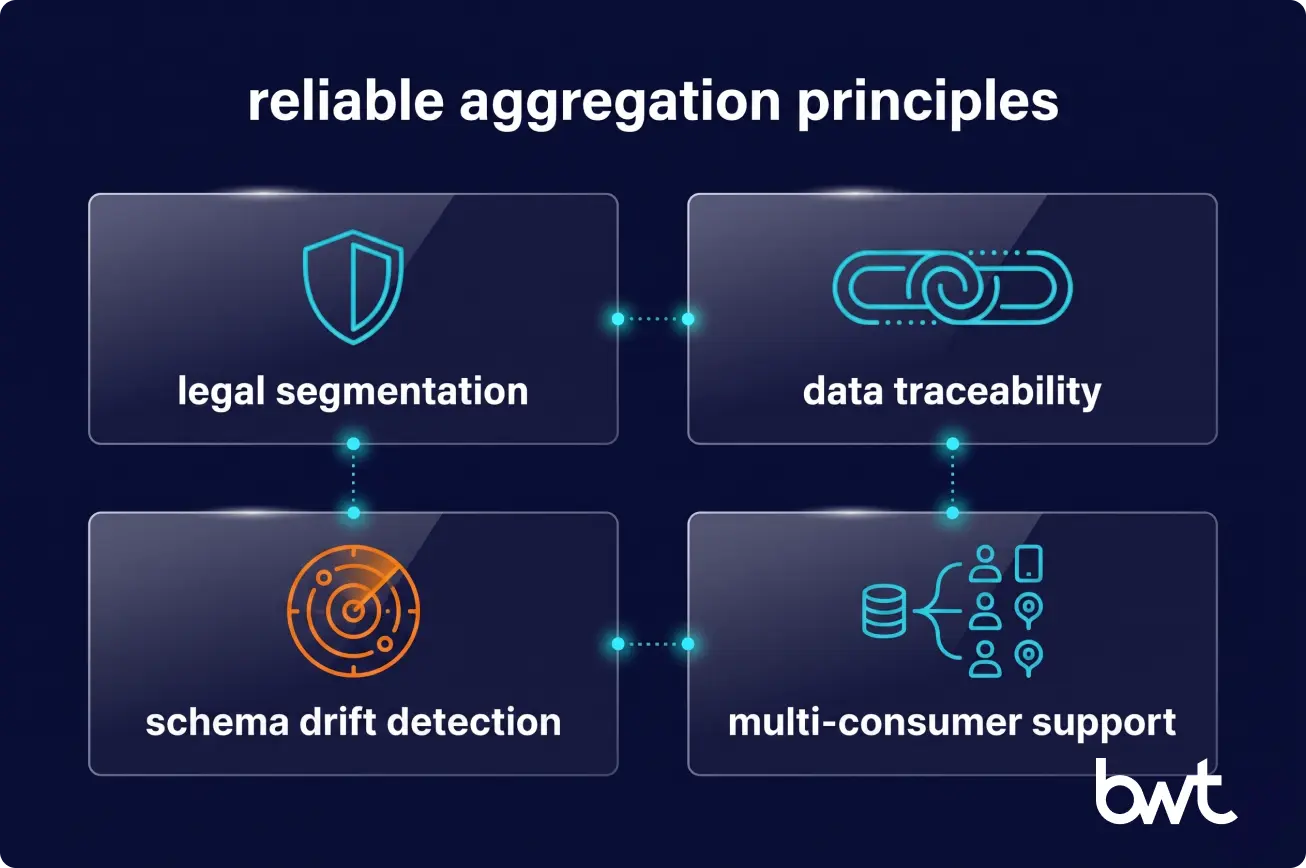

Design for Legal Segmentation from Day One

Keep source tags pristine. When regulatory frameworks change (post-Brexit UK procurement, eForms rollout), you need to cleanly segment historical data from new data without a migration project.

Make Data Lineage Visible Across the Pipeline

If a user flags a bad record, an engineer should be able to click through to the exact raw HTML or JSON payload that generated it — with ingestion timestamp and source URL attached.

Build for Continuous Schema and Portal Change

Schema drift is not an exception; it’s the default. Alerting on distribution anomalies (not just HTTP errors) is the difference between a self-healing pipeline and a quiet fire.

Serve BI, Compliance, and Audit from One Dataset

A well-architected centralized tender system serves multiple masters. Structure your API so BI tools can ingest bulk data for dashboards, while compliance teams can query specific audit trails down to the raw payload.

Why Organizations Partner for Tender Data Aggregation

When Internal Teams Hit Scaling Limits

Most internal teams can successfully scrape 5 to 10 portals. When the requirement scales to 100+ sources with SLA-grade delivery, the maintenance overhead consumes the engineering team, and scraper maintenance rarely competes well against product work in roadmap planning.

In production, our operating rule is simpler than any slogan: a late tender is a lost tender. The SLA target for the daily alert run — 8K–15K subscribers, delivery complete by 5 AM London time — drives every architectural tradeoff upstream, from scraper scheduling to index refresh cadence.

Benefits of Custom Aggregation Infrastructure

Partnering lets you leverage existing architectures (the 4-layer split, Companies House augmentation, framework-agreement extensions) and battle-tested monitoring without paying the learning tax of building from scratch.

What to Expect from a Procurement Data Partner

Expect an SLA on data delivery, proactive fixing of broken scrapers, a clear architectural plan for how your unified tender feed solution will scale, and full data lineage by default. For more on how we approach this, explore our data engineering services.

Ready to stop fighting broken scrapers? Let’s discuss how to build a scalable, automated pipeline for your procurement data.

6–9 months for baseline architecture and the first 20–30 sources. The long tail (maintenance, schema drift, new portals) is perpetual — plan for 2–3 dedicated engineers ongoing, not a one-off project.

For above-threshold EU contracts, yes. For deep UK below-threshold coverage, cross-border supplier intelligence, or custom CPV logic, no: off-the-shelf feeds either stop at TED or lock you into their taxonomy.

Sphinx gives us incremental real-time indexing over tender-shaped data (lots of structured fields, CPV hierarchies, multilingual full-text), tuned to our query patterns. Elasticsearch would work, but the operational cost of rewriting battle-tested ranking logic isn’t justified by the upside.

Schema drift detection. A portal can change one field name and corrupt three months of data silently. Alerting on distribution anomalies (not just HTTP errors) is the difference between a self-healing pipeline and a quiet fire.

We flag, we don’t merge. Related entities (“Siemens AG” vs “Siemens Ltd”) are surfaced via OCDS extensions so analysts can see the relationship, but the records stay distinct. Auto-merging distinct legal entities misattributes award values and corrupts audit trails.
