Introduction

Seven scrapers. Thirty-plus European cities. Reddit threads, TripAdvisor pages, crime indices, transport APIs, none of them sharing a schema or refresh schedule. GroupBWT built the pipeline that turned the noise into structured, confidence-scored city intelligence on a sub-15-minute refresh cycle. The scoring engine only worked because the data underneath was schema-aligned and deduplicated before any LLM saw it.

This guide is for data and engineering leaders whose production AI is gated by the data layer, not the model. AI-ready data pipelines close a gap traditional infrastructure leaves wide open. Schema discipline. Feature engineering. Quality enforcement at the volume ML actually demands.

Data Engineering: From Raw Web to Data Product

We develop and manage custom data solutions, powered by proven experts, to ensure the fastest delivery of structured data from sources of any size and complexity.

We offer:

- Custom Web Scraping & Development

- 15+ Years of Engineering Expertise

- AI-Driven Data Processing & Enrichment

How AI-Ready Pipelines Differ from Traditional Data Pipelines

A traditional pipeline pulls data from a source, reshapes it in transit, and lands the output somewhere a reporting tool can read. Enough for BI. Not for AI.

ML models are brittle in ways SQL queries are not. A reporting pipeline tolerates missing fields; a training pipeline turns them into corrupted weights. Schema drift that a warehouse absorbs silently produces nonsensical feature vectors downstream. Stale inputs are worse than no prediction because they look authoritative. Google’s Rules of Machine Learning devotes a full section to training-serving skew as a recurring source of production problems. The data layer is where ML systems break first.

The EU travel startup behind the city-scoring platform learned this directly. A Reddit comment like “the tacos near La Rambla were insane” had to be tagged as a Barcelona gastronomy signal first. Only after that tag landed could the engine score it against the right rubric. The result then got written to the right table. All before any LLM touched it.

Key Characteristics of AI-Ready Data Pipelines

High data quality and consistency

Schema validation, null handling, and anomaly detection all happen inside the pipeline, not in a QA pass after the fact. The parallel validation pipeline GroupBWT built for an EU food delivery company covers 1,200 geo-zones, deduplicates by listing ID at ingestion, and tags every field with session metadata. Using session-specific cookies and rotating proxies, it delivers a 98.6% field-level match rate against the client’s primary vendor dataset on three reference fields (listing ID, price, availability), with each session completing in under 1.8 hours.

Scalability for large-scale data processing

Pipelines built this way absorb volume growth without re-architecture. The delivery pipeline processes 2 million validated records per session on the same codebase that ran dry tests on two sample zones (London, Paris).

Real-time and batch processing capabilities

Crime indices update quarterly. TripAdvisor reviews arrive daily. Running everything on one cadence wastes compute on static data, misses fresh signals, or both.
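A minimal sketch of per-source cadence, assuming each source records the time of its last successful run. Only the quarterly and daily split comes from the text; the source names and the `sources_due` helper are hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical cadences; only the quarterly/daily split comes from the text.
REFRESH_INTERVALS = {
    "crime_index": timedelta(days=90),
    "tripadvisor_reviews": timedelta(days=1),
}


def sources_due(last_run: dict[str, datetime], now: datetime) -> list[str]:
    """Return the sources whose refresh interval has elapsed."""
    due = []
    for source, interval in REFRESH_INTERVALS.items():
        previous = last_run.get(source)
        # A source that has never run is always due.
        if previous is None or now - previous >= interval:
            due.append(source)
    return due
```

An orchestrator would call something like this on every tick and schedule only the returned sources, so static data is not re-fetched on the fast cadence.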

Data governance, security, and compliance

Lineage rides on every record. Legal answers a regulator without re-ingesting raw sources, and BI rebuilds historical views the same way. Four metadata fields make that possible: source, ingestion timestamp, schema version, and jurisdiction flag. Skip one, and traceability stops scaling.
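One way to carry those four fields is a small immutable structure attached to every record under a reserved key. The sketch below assumes dictionary-shaped records; the `_lineage` key, the class name, and the example values are illustrative choices, not the production schema.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class Lineage:
    source: str            # where the record came from
    ingested_at: datetime  # when it entered the pipeline
    schema_version: str    # schema it was validated against
    jurisdiction: str      # flag values here are hypothetical


def with_lineage(payload: dict[str, Any], lineage: Lineage) -> dict[str, Any]:
    """Attach lineage under a reserved key without mutating the payload."""
    if "_lineage" in payload:
        raise ValueError("payload already carries lineage")
    return {**payload, "_lineage": asdict(lineage)}


def by_jurisdiction(records: list[dict[str, Any]], jurisdiction: str) -> list[dict[str, Any]]:
    """Answer a jurisdiction-scoped question from stored records alone."""
    return [r for r in records if r["_lineage"]["jurisdiction"] == jurisdiction]
```

Because the metadata travels with the record, a query like `by_jurisdiction(records, "EU")` needs no access to the raw sources.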

Support for structured and unstructured data

Reddit threads, tabular CSVs, REST API responses, HTML fragments all land in the same pipeline. The work is unifying them into one schema before processing.

AI-Ready vs AI-Powered Data Pipelines

| | AI-Ready Pipeline | AI-Powered Pipeline |
|---|---|---|
| Definition | Infrastructure prepared to feed AI models reliably | Uses ML components within the pipeline itself |
| When to use | Before training your first model | When the pipeline benefits from intelligent processing |
| Examples | Feature stores, quality validation, schema governance | Intelligent routing, auto-schema mapping, anomaly detection |

AI-ready is the foundation; AI-powered builds on top. You can’t run reliable AI-powered processing without the discipline that the foundation enforces first.

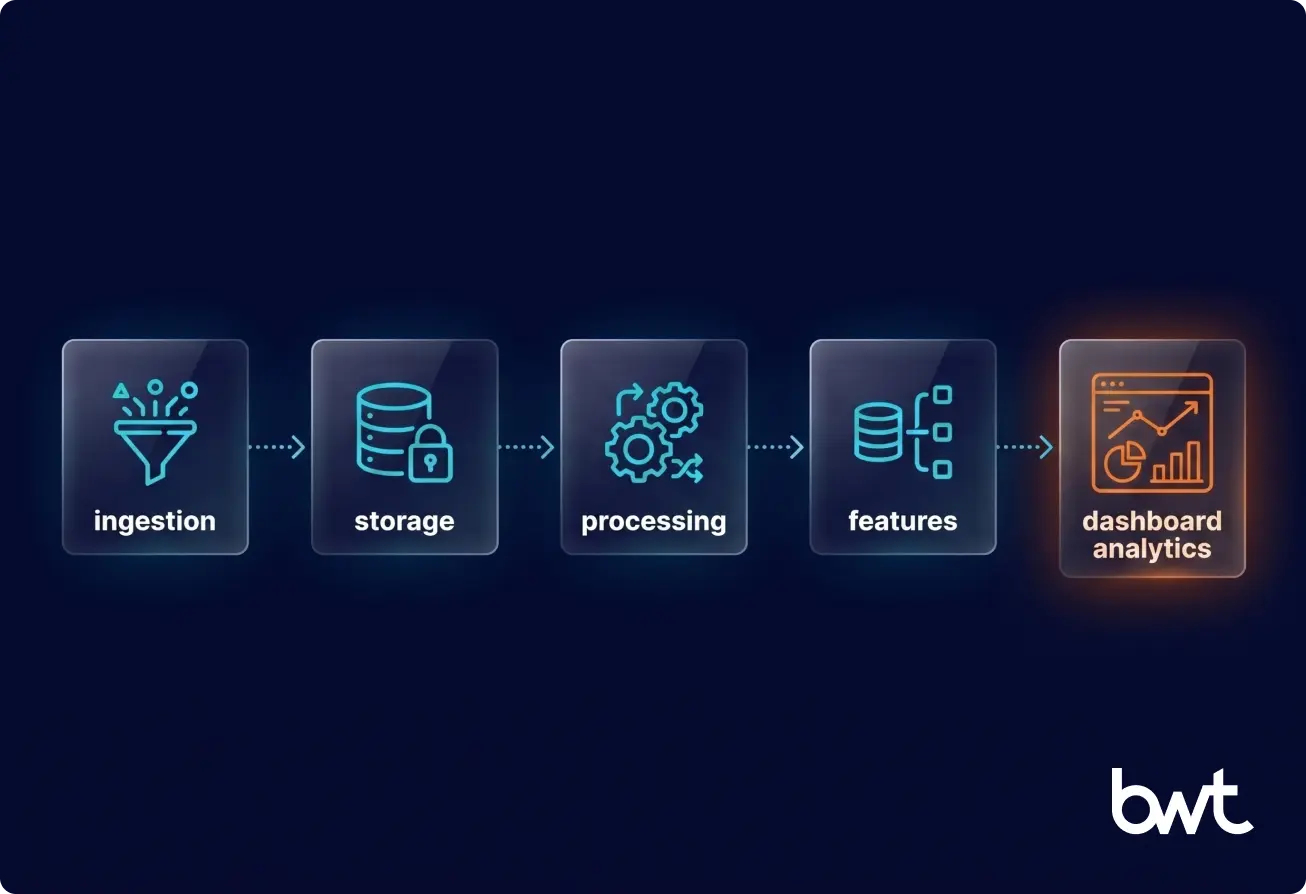

Core Components of an AI-Ready Data Pipeline

Data Ingestion from Multiple Sources

This is where teams underestimate complexity. A source returns clean JSON in testing, then nested HTML in production. A vendor feed silently drops fields after a schema change. The fix is building ingestion logic per source. A generalized connector seems easier upfront but it’s the wrong call: one source breaking cascades through every consumer downstream.
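The sketch below illustrates per-source ingestion with failure isolation: each source has its own parser, and an exception in one is recorded without stopping the others. The two sources, their payload shapes, and the function names are invented for the example.

```python
import json
from typing import Callable


def parse_reviews_api(raw: str) -> list[dict]:
    """Hypothetical source that returns a JSON array of reviews."""
    return [{"text": item["body"], "source": "reviews_api"} for item in json.loads(raw)]


def parse_forum_dump(raw: str) -> list[dict]:
    """Hypothetical source that returns one post per line."""
    return [
        {"text": line.strip(), "source": "forum"}
        for line in raw.splitlines()
        if line.strip()
    ]


PARSERS: dict[str, Callable[[str], list[dict]]] = {
    "reviews_api": parse_reviews_api,
    "forum": parse_forum_dump,
}


def ingest_all(payloads: dict[str, str]) -> tuple[list[dict], dict[str, str]]:
    """Run every parser; collect failures per source instead of raising."""
    records: list[dict] = []
    failures: dict[str, str] = {}
    for source, raw in payloads.items():
        try:
            # The parser returns a full list before anything is appended,
            # so a failing source contributes no partial output.
            records.extend(PARSERS[source](raw))
        except Exception as exc:
            failures[source] = f"{type(exc).__name__}: {exc}"
    return records, failures
```

If `reviews_api` starts returning HTML instead of JSON, its parser fails and is reported, while `forum` records still flow downstream.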

Data Storage: Data Lakes, Warehouses, and Lakehouses

| Storage Type | Best For | AI Trade-off |
|---|---|---|
| Data Lake | Raw, unstructured data; flexible schema | Requires a transformation layer before training |
| Data Warehouse | Structured, query-ready data | Poor fit for unstructured inputs |
| Lakehouse | Raw fidelity + structured query layer | Higher complexity, but most practical for AI |

GroupBWT implemented a three-zone data lake for a global analytics firm: raw held source snapshots, processed held cleaned timestamped fields, analytics fed dashboards and cross-source inference. Asynchronous isolation meant a source-layout change updated only that stream’s logic block.

Data Processing and Transformation (ETL/ELT)

ETL consulting services cover the layer where raw data becomes usable. ETL fits when transformation logic must be versioned alongside the data. ELT fits when raw fidelity for future reprocessing matters more. Production pipelines usually run both, picking per source.

Feature Engineering and Data Preparation

Model performance is made or broken here. GroupBWT’s approach on the data engineering pipeline for an AI travel platform used rule-based heuristics for first-pass filtering before AI classification. Sending every review through an LLM would have cost about $16 per city per refresh. The hybrid design increased scoring coverage by 20–30% per city and dropped per-city compute to $0.16: a 100x reduction driven by filtering, not model choice.

“When you’re processing hundreds of thousands of reviews, the temptation is to throw everything at an LLM. We took the opposite approach, rule-based filtering first, AI only where it adds real value. That’s how you get $0.16 per city, not $16.”

— Alex Yudin, Head of Data Engineering, GroupBWT

Data Serving and Access Layers

ML models, dashboards, and APIs read processed data through the serving layer. A feature store keeps retrieval fast and enforces point-in-time correctness. BI hits a separate analytics layer. Mess up either side, and training-serving skew creeps in. Accuracy degrades quietly in production. Offline validation won’t catch it.

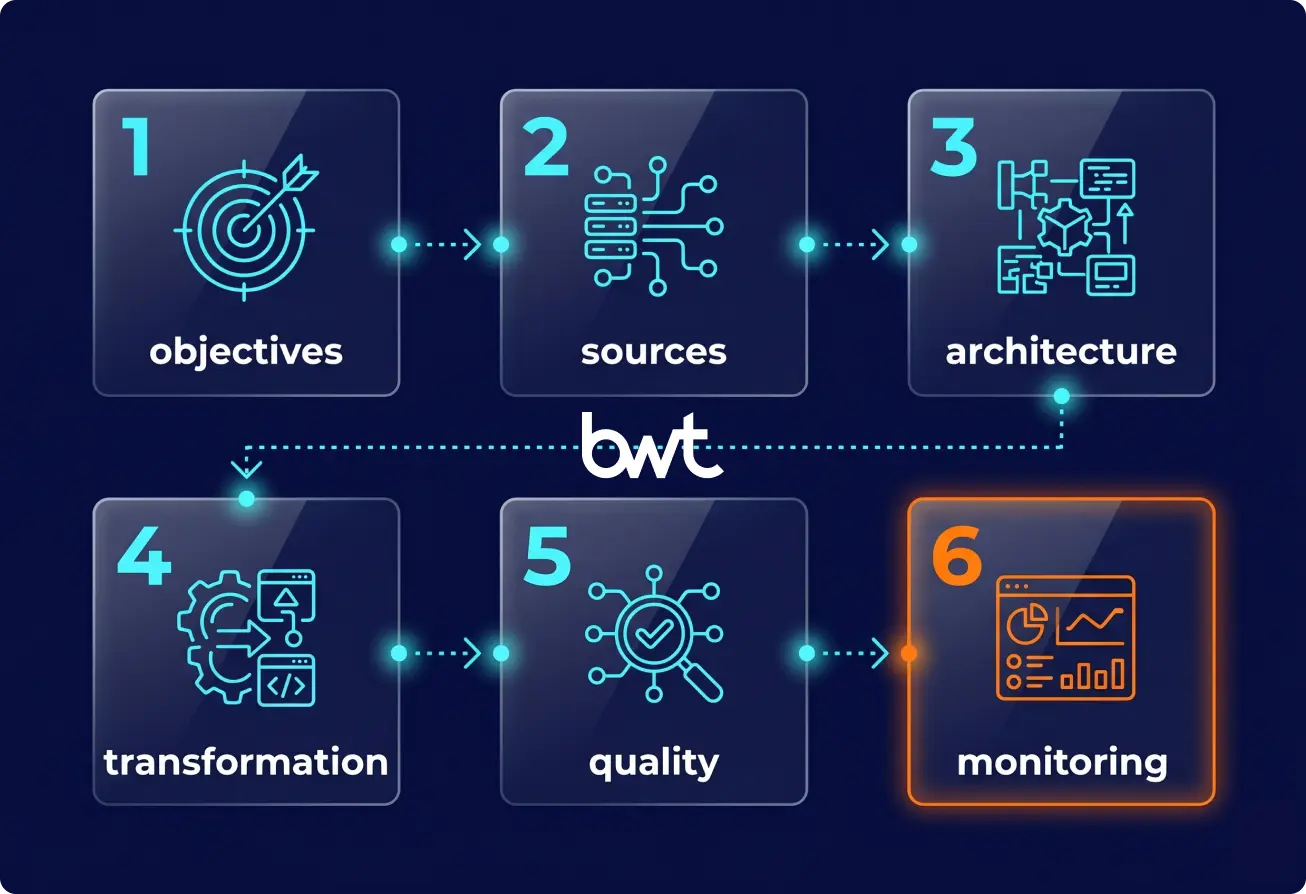

How to Build an AI-Ready Data Pipeline Step by Step

Anyone learning how to build an AI-ready data pipeline has the same first impulse. Pick infrastructure. Step back from that for now and start somewhere else.

Step 1: Define Business Objectives and AI Use Cases

Hold off on connectors and warehouse choices for a moment. Start with what the AI actually needs. Prediction task? 90 days of history or seven? Sub-second latency real, or assumed? A fraud model deciding in milliseconds has different infrastructure needs than a weekly churn job.

Step 2: Identify and Integrate Data Sources

Map every source you’ll pull from. Document the format. Note the update cadence you can rely on. Track how often that source has broken historically and how access actually works in production. The worst surprises come from sources that change without notice. RSS feeds drop articles. Vendors remove fields. Sites swap HTML overnight. Source-specific ingestion logic is the only reliable defense.

Step 3: Design Scalable Data Architecture

Storage and processing decisions come before code. The choice between a lake, a warehouse, and a lakehouse depends on data types and query patterns, not preference. Document schemas, then pin down how raw, processed, and serving zones relate to one another. A typical engagement for data engineering services & solutions spends one to two weeks on this layer before any connector code.

“The teams that rush into implementation without settling the data architecture spend twice as long on the project. Schema decisions made under deadline pressure become the bottlenecks everyone blames the pipeline for six months later.”

— Dmytro Naumenko, CTO, GroupBWT

Step 4: Implement Data Processing and Transformation

Make transformation jobs idempotent. Non-idempotent jobs duplicate or corrupt data on retry, exactly the problem you don’t want when the orchestrator reruns a failed DAG. Use dbt for SQL transformations (lineage and version control built in) and Apache Spark for distributed jobs. Version transformation logic alongside the data.
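One common way to get idempotency is to overwrite a whole output partition rather than append to it. The sketch below uses an in-memory dictionary as a stand-in for a partitioned table; the table shape and function names are assumptions for the example.

```python
# In-memory stand-in for a partitioned table: partition key -> rows.
Table = dict[str, list[dict]]


def transform(raw_rows: list[dict]) -> list[dict]:
    """Pure, deterministic transformation: same input, same output."""
    return [
        {"listing_id": row["listing_id"], "price_cents": round(row["price"] * 100)}
        for row in raw_rows
    ]


def run_job(table: Table, partition: str, raw_rows: list[dict]) -> None:
    """Replace the whole partition, so a retry leaves the same final state."""
    table[partition] = transform(raw_rows)


def run_job_appending(table: Table, partition: str, raw_rows: list[dict]) -> None:
    """Non-idempotent counterpart, shown only for contrast."""
    table.setdefault(partition, []).extend(transform(raw_rows))
```

Running `run_job` twice for the same partition leaves one copy of each row; running `run_job_appending` twice leaves two, which is the duplication a rerun of a failed DAG would cause.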

Step 5: Ensure Data Quality and Validation

Quality checks live inside the pipeline. Schema validates at ingestion. After that, completeness gets tracked per source over time, and any missing required field surfaces the moment it appears. For AI specifically, add distribution checks so feature drift shows up before the model retrains on corrupted data. The NIST AI Risk Management Framework treats both data quality and lineage tracking as core controls in its “Measure” function, not optional add-ons.
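A distribution check can be as simple as the Population Stability Index (PSI) between a baseline sample and the newest batch. PSI is a swapped-in example technique here, not something the article names, and the 0.25 threshold is a commonly cited rule of thumb rather than a figure from GroupBWT.

```python
import math

DRIFT_THRESHOLD = 0.25  # rule-of-thumb value, an assumption for this sketch


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a new sample."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # guard against a constant baseline

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    return sum(
        (a - e) * math.log(a / e)
        for e, a in zip(shares(expected), shares(actual))
    )


def has_drifted(expected: list[float], actual: list[float]) -> bool:
    return psi(expected, actual) > DRIFT_THRESHOLD
```

A retraining job could call `has_drifted` per feature and hold the run when any feature exceeds the threshold.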

Step 6: Deploy Monitoring and Data Observability

When you can’t see inside the pipeline, you can’t trust what comes out of it. Track extraction success rates and field completeness. Watch processing latency and per-source error rates separately. GroupBWT uses Grafana and Prometheus for infra-level alerts; Metabase for business-level visibility. When one scraper went down on the travel platform post-launch, source isolation kept the rest running.
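Field completeness per source is one of the simpler metrics to compute. The sketch below works on plain dictionaries and returns values that could be exported to whatever alerting stack is in use; the record shape (a `source` key on every record) and the function names are assumptions.

```python
from collections import defaultdict


def field_completeness(records: list[dict], fields: list[str]) -> dict[str, dict[str, float]]:
    """Share of non-empty values per field, grouped by record['source']."""
    totals: dict[str, int] = defaultdict(int)
    filled: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for record in records:
        source = record["source"]
        totals[source] += 1
        for name in fields:
            if record.get(name) not in (None, ""):
                filled[source][name] += 1
    return {
        source: {name: filled[source][name] / totals[source] for name in fields}
        for source in totals
    }


def breaches(completeness: dict[str, dict[str, float]], minimum: float) -> list[tuple[str, str, float]]:
    """List (source, field, value) entries that fall below the minimum."""
    return [
        (source, name, value)
        for source, per_field in completeness.items()
        for name, value in per_field.items()
        if value < minimum
    ]
```

Tracking these numbers separately per source is what lets an alert point at the one scraper that degraded instead of at the pipeline as a whole.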

AI-Ready Data Pipeline Architecture

Architecture choices determine whether the system scales and what it costs to maintain. Get this layer wrong, and tool selection won’t fix it.

Batch vs. real-time design

Batch pipelines run on a fixed schedule. Simple. Cheap. Stale by definition. Real-time pipelines stream events through Kafka or Flink with sub-second processing. Production AI usually runs both. Batch handles historical training. Real-time handles inference inputs.

Modern data stack

Ingestion runs through Airbyte or Fivetran. Storage means Snowflake or BigQuery for warehousing, S3 underneath for lake assets. Transformation is dbt territory. Orchestration goes through Airflow or Prefect. Serving sits behind a feature store for ML or an analytics interface for BI. Every layer stays replaceable without disturbing the others. GroupBWT’s data pipeline architecture examples walk through how the layers connect.

Microservices and distributed systems

Once multiple teams share a pipeline, AI-ready data pipelines add service contracts between processing and serving. One team’s query stays stable when another team changes upstream schemas.

Lake vs. warehouse vs. lakehouse

For most AI workloads, the lakehouse wins. Raw data stays available for future reprocessing. Training pipelines and analytics dashboards still get warehouse-level querying through the same layer.

Best Practices for Building AI-Ready Data Pipelines

- Design for scalability from day one. When the EU travel startup launched, it was already running across 30+ cities and 7 sources. The roadmap pointed at significantly more of both. Growth landed without structural rewrites because scale was a design input, not a problem to fix later.

- Implement data quality checks early. Quality issues compound. A bad record slips through at ingestion, corrupts a downstream feature, the model trains on it, predictions go wrong. Each link costs more to debug than catching it at ingestion would have cost.

- Automate workflows and orchestration. Manual data management doesn’t scale. Airflow and Prefect handle scheduling, dependencies, and failure recovery so pipelines run on their own cadence.

- Use metadata and data catalogs. Every field carries provenance. The enterprise data lake GroupBWT built for a global analytics firm tags every record with source_type, region, timestamp, entity, status. Analysts and legal query the same dataset that BI uses, without translation layers between them.

- Ensure security and compliance. For enterprise AI, session-level metadata tagging stops being optional. The same goes for audit logs, encrypted storage, and jurisdiction flags on every record. Keep raw source files by default rather than overwriting them, since forensic analysis and compliance work both depend on the originals.

Common Challenges and How to Overcome Them

The same failure modes show up across industries when teams are building AI-ready data pipelines for technology companies, retailers, banks, or anyone else with serious data volume.

Data silos and fragmentation

Teams own separate stores in incompatible formats. The fix isn’t forcing everyone onto one tool. It’s a schema-aligned integration layer that federates across sources without touching their internal structures.

Poor data quality and inconsistent schemas

Schema drift from upstream is the most common failure mode. A vendor silently removes a field, and downstream models start failing in ways nobody can trace. Field-level change detection that alerts before corrupted data reaches training is the standard fix.
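Field-level change detection can be sketched as a diff between the fields observed in a baseline batch and those in the current one. The function names and the example batches are hypothetical.

```python
def field_types(records: list[dict]) -> dict[str, set[str]]:
    """Map each field name to the set of type names observed for it."""
    observed: dict[str, set[str]] = {}
    for record in records:
        for name, value in record.items():
            observed.setdefault(name, set()).add(type(value).__name__)
    return observed


def diff_schema(baseline: dict[str, set[str]], current: dict[str, set[str]]) -> dict[str, list[str]]:
    """Report fields that disappeared, appeared, or changed type."""
    return {
        "removed": sorted(baseline.keys() - current.keys()),
        "added": sorted(current.keys() - baseline.keys()),
        "retyped": sorted(
            name
            for name in baseline.keys() & current.keys()
            if baseline[name] != current[name]
        ),
    }


def is_breaking(diff: dict[str, list[str]]) -> bool:
    """Treat removals and type changes as blocking; additions as informational."""
    return bool(diff["removed"] or diff["retyped"])
```

Running the diff at ingestion, before anything is written to training tables, is what turns a silent vendor change into an alert.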

High infrastructure costs

Scaling horizontally for volume spikes is expensive and often unnecessary. Per-source scheduling (static sources quarterly, dynamic daily) cuts compute on mixed-cadence pipelines.

Latency and performance issues

Real-time pipelines bring their own headaches. Event ordering breaks. Late-arriving data corrupts windowed aggregations. Production systems achieve “effectively exactly-once” through idempotent consumers, deterministic deduplication keys, and transactional sinks. Most AI applications don’t actually need sub-second latency. They need freshness guarantees. Clarify the real requirement before choosing streaming over a well-tuned batch system.
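The idempotent-consumer part of that pattern can be sketched with a deterministic key derived from the event's identifying fields. The in-memory set and list stand in for a durable key store and a transactional sink, which is an assumption of this example; in production the key record and the sink write would need to commit together.

```python
import hashlib
import json


def dedup_key(event: dict, key_fields: tuple[str, ...]) -> str:
    """Deterministic key: the same logical event always hashes the same."""
    canonical = json.dumps({name: event[name] for name in key_fields}, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class IdempotentConsumer:
    def __init__(self, key_fields: tuple[str, ...]) -> None:
        self._key_fields = key_fields
        self._seen: set[str] = set()   # stand-in for a durable key store
        self.sink: list[dict] = []     # stand-in for a transactional sink

    def handle(self, event: dict) -> bool:
        """Apply the event once; return False when it is a redelivery."""
        key = dedup_key(event, self._key_fields)
        if key in self._seen:
            return False
        self.sink.append(event)
        self._seen.add(key)
        return True
```

With at-least-once delivery from the broker, a redelivered event produces the same key and is skipped, so the sink ends up with each event applied once.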

Coordination costs rise as more teams consume the same pipeline. Feature stores and data contracts handle most of it; catalog tooling closes the gap and makes multi-team scaling tractable.

Tools and Technologies for AI-Ready Data Pipelines

You can swap tooling. The architectural discipline of making the tools work together is what doesn’t transfer.

- Data ingestion: Kafka handles streaming. Airbyte covers 300+ open-source connectors. Fivetran picks up managed SaaS sync.

- Data processing: Apache Spark runs distributed jobs. dbt handles versioned SQL transformation. Apache Flink takes stateful streams.

- Storage: Snowflake and BigQuery cover cloud-native warehousing, with S3 sitting underneath as the lakehouse foundation.

- Orchestration: Apache Airflow runs DAG workflows. Prefect handles dynamic, developer-friendly scheduling.

- Quality and observability: Great Expectations covers assertion checks. Monte Carlo handles pipeline observability. Grafana with Prometheus sits at the infrastructure layer.

A recent GroupBWT production stack ran on Python 3.12, MySQL 8.0, Redis, Celery, Metabase, Airflow, and Snowflake. That set of tools wasn’t a template; it was picked for that project.

Use Cases of AI-Ready Data Pipelines

Each use case maps to a concrete pattern GroupBWT has shipped.

Machine learning model training

What matters is data that stays clean and versioned with point-in-time consistency. On the travel scoring engagement, the training corpus rebuilds every refresh with full source provenance, which means models can retrain on demand without re-ingesting from scratch.

Real-time recommendation systems

This is where sub-second feature serving from a low-latency store earns its keep. The travel platform refreshes 30+ city scoring features in under 15 minutes per cycle. That’s the freshness floor needed to re-rank live.

Predictive analytics and forecasting

Here you need historical feature windows aligned to the model’s inference horizon. The enterprise data lake feeds 90-day trend analyses across regions and entities. Point-in-time correctness is preserved through versioned snapshots so a forecast trained on Tuesday’s view never sees Wednesday’s data leak in.

Fraud and anomaly detection

Streaming pipelines here are built around “effectively exactly-once” delivery. That means idempotent consumers and deterministic deduplication keys; the food delivery pipeline dedups on listing ID at ingestion. Transactional sinks complete the picture. The same blocks generalize from price-monitoring to fraud workloads.

Customer behavior analysis

Review data, session logs, and purchase history: different signals about the same customer, unified into one schema. The Reddit-plus-TripAdvisor unification on the travel platform demonstrates the pattern across structured and unstructured sources at once.

Business Benefits of AI-Ready Data Pipelines

Faster Time-to-Market for AI Solutions

| Metric | Before an AI-ready pipeline | After an AI-ready pipeline |
|---|---|---|
| City refresh time | Manual CSV stitching; cycles in days | 30-city refresh in under 15 minutes |
| Per-city scoring cost | ~$16 if every review went through the LLM | $0.16 after rule-based pre-filtering |
| Source resilience | One failed scraper stalled the whole run | Source isolation: failures don’t block the rest |
| Confidence reporting | No way to flag low-evidence cities | Confidence scores surfaced to end users |

No new scraping budget was needed. The pipeline pulled more value out of the sources already running, and that’s what let the startup launch richer city profiles than originally planned.

Improved Model Accuracy and Reliability

The old saying about garbage in, garbage out applies here literally. GroupBWT’s confidence scoring flagged low-data cities instead of inventing numbers from thin evidence. That protected platform credibility. It also gave founders a clear map of where to invest data-collection effort next.

Better Decision-Making with Clean Data

Replacing fragmented vendor feeds with a direct-ingestion data lake cut change-to-BI sync below 15 minutes and removed 17 hours per week of manual QA at the global analytics firm. Analysts shifted from checking accuracy to investigating what changed and why.

Reduced Operational Costs

“Teams that skip data readiness and wire AI directly into raw infrastructure don’t save time. They pay for it in retraining cycles, debugging sessions, and the longer timeline of building the pipeline they needed at the start, after the fact.”

— Oleg Boyko, CCO, GroupBWT

Benefits of Working with Data Engineering Experts

The argument is operational. On the travel AI platform, rule-based filtering ahead of the LLM cut per-city compute from $16 to $0.16. That saving sat in the pipeline, not the model. The real-time delivery pricing pipeline delivered 2 million validated records per session across 1,200 locations, with a 98.6% match rate, within a sub-2-hour SLA. The enterprise data lake cut change-to-BI sync below 15 minutes and removed 17 hours per week of manual QA. Tooling preference didn’t matter for any of these. Architecture did. Every record came in schema-aligned and deduplicated, with observability instrumentation in place before any consumer touched it.

What to Look for in a Data Partner

Schema contracts before any connector code lands. When one scraper fails, can the other twenty-nine keep running? That’s per-source isolation by default. Quality and observability ship with the pipeline, not bolted on after the first incident. Every record carries its lineage; no exceptions. Versioned, idempotent transformation logic alongside the data. And a runbook your team can actually use without having to re-engage the vendor.

If a vendor leads with the tools they prefer rather than these architectural decisions, that’s the tell. Production-grade AI-ready data pipelines are a function of architecture, not toolchain preferences.

If your data infrastructure isn’t ready for AI, talk to GroupBWT’s data engineering team to understand where the gaps are.

An AI-ready data pipeline is infrastructure built specifically to collect data, process it, validate it, and serve it in a format ML models can consume reliably. Schema consistency stops being optional. Feature engineering becomes a first-class concern, alongside quality validation and confidence scoring. None of that is bolted onto a BI stack as an afterthought. The output isn’t just clean data. It’s model-ready data, versioned and traceable to the source.

A traditional pipeline moves data from source to destination. It transforms data for query and loads the result into a warehouse. ML cannot tolerate the imperfections such pipelines accept: missing fields, schema drift, inconsistent timestamps. AI-ready architecture closes those gaps. Quality gates and a feature store sit at the heart of it. Lineage metadata is baked into every record. Batch and real-time both work side by side. The difference shows up in whether AI initiatives ever reach production.

A basic build connecting three to five stable sources reaches production in two to four weeks. A real-time pipeline carrying streaming plus feature engineering plus multi-team observability typically takes three to six months. Where you land in that range depends on source complexity and how clearly business requirements are defined upfront. The enterprise data lake GroupBWT built reached 98–100% source coverage; the timeline tracked how precisely the client could articulate what each source needed to prove downstream.

Think of a data lake as cheap, flexible storage for raw, unstructured data at any scale. Useful, but a transformation layer is still needed before models can consume anything from it. A lakehouse adds a structured query layer on top (typically through Delta Lake or Apache Iceberg). That gives you raw storage fidelity along with warehouse-level querying in the same place. For most AI workloads the lakehouse is the more practical choice. It preserves originals for reprocessing while exposing processed features through a query interface training pipelines can use directly.
