Introduction

$5.71 billion. That’s where the sentiment analytics market landed in 2025, per Precedence Research. Five years ago, sentiment analysis was a side project someone ran in a spreadsheet. Now it sits at the center of how data analytics shapes social media strategy for brands that can’t afford to guess what their audience actually thinks.

Here’s a number that should make any brand manager uncomfortable: 67% of consumers permanently leave a brand after receiving a response that doesn’t match their emotional tone. Not temporarily. Permanently. Sentiment analysis is what stands between getting the response right and losing customers you’ll never win back.

This guide walks through the full process — from collecting raw social data to building classification pipelines that actually work in production. We’re writing this from the perspective of a team that has built these systems across industries, not from the perspective of someone selling you a SaaS subscription.

Data Engineering: From Raw Web to Data Product

We develop and manage custom data solutions, powered by proven experts, to ensure the fastest delivery of structured data from sources of any size and complexity.

We offer:

- Custom Web Scraping & Development

- 15+ Years of Engineering Expertise

- AI-Driven Data Processing & Enrichment

Why Sentiment Matters for Brands and Businesses

The purpose of sentiment analysis goes beyond knowing what people think. Social media data analytics can tell you a post reached 50,000 people. What it won’t tell you is that 60% of those who commented are furious about shipping delays. Your dashboard shows green. Your customers are leaving. That’s the gap sentiment analysis closes, and most types of traditional analytics miss it completely.

That gap costs real money. Brands actively using sentiment data hold a significant NPS advantage over competitors who rely on engagement metrics alone. And on the consumer side, misreading emotional tone drives permanent churn — not temporary dissatisfaction, but customers who never come back.

Here’s what makes this different from generic analytics advice: sentiment analysis lets you act before a trend becomes a crisis. One of our clients — a Big Four consulting firm — monitors public opinion on government policies across the Middle East, where traditional media is state-controlled and social platforms are the only unfiltered signal. That’s not a marketing use case. It’s intelligence work. The same underlying technology applies whether you’re tracking brand perception after a campaign or detecting a PR crisis three hours before it hits mainstream news.

How Sentiment Analysis Differs from Social Media Analytics

These two get confused constantly. They measure different things.

| Scenario | Social Media Analytics tells you | Sentiment Analysis tells you |
| --- | --- | --- |
| Product launch post gets 50K impressions | “This post reached a lot of people” | “72% of comments are complaints about the price increase” |
| Mentions spike 300% on Tuesday | “Something happened: you’re trending” | “The spike is driven by anger over a leaked policy change, not excitement” |
| Campaign runs across 3 platforms | “Instagram drove the most clicks” | “Instagram clicks are positive engagement, but Twitter mentions are mostly sarcastic” |
| Competitor launches a rival product | “Their post got 2x your engagement” | “Their audience is skeptical: 58% of replies question the product claims” |

Sentiment analysis adds the emotional layer that raw numbers miss entirely. Think of analytics as the thermometer. Sentiment is the diagnosis.

How Sentiment Analysis Works

A survey of sentiment analysis research — particularly Zhang et al.’s 2024 NAACL paper “Sentiment Analysis in the Era of Large Language Models” — shows that the field is moving fast but unevenly. Transformer models hit 94%+ accuracy on broad NLP benchmarks that include tasks like named entity recognition and question answering. But sentiment classification specifically is a harder problem. On the TweetEval dataset — the standard benchmark for social media text — the best LLM scored just 69%, per AIMultiple’s 2026 benchmark. That’s not a contradiction; general language understanding and correctly reading emotional tone are different skills. LLMs are good at understanding text. They’re less reliable at deciding whether that text is positive, negative, or sarcastic. Sentiment analysis is harder than the marketing copy suggests.

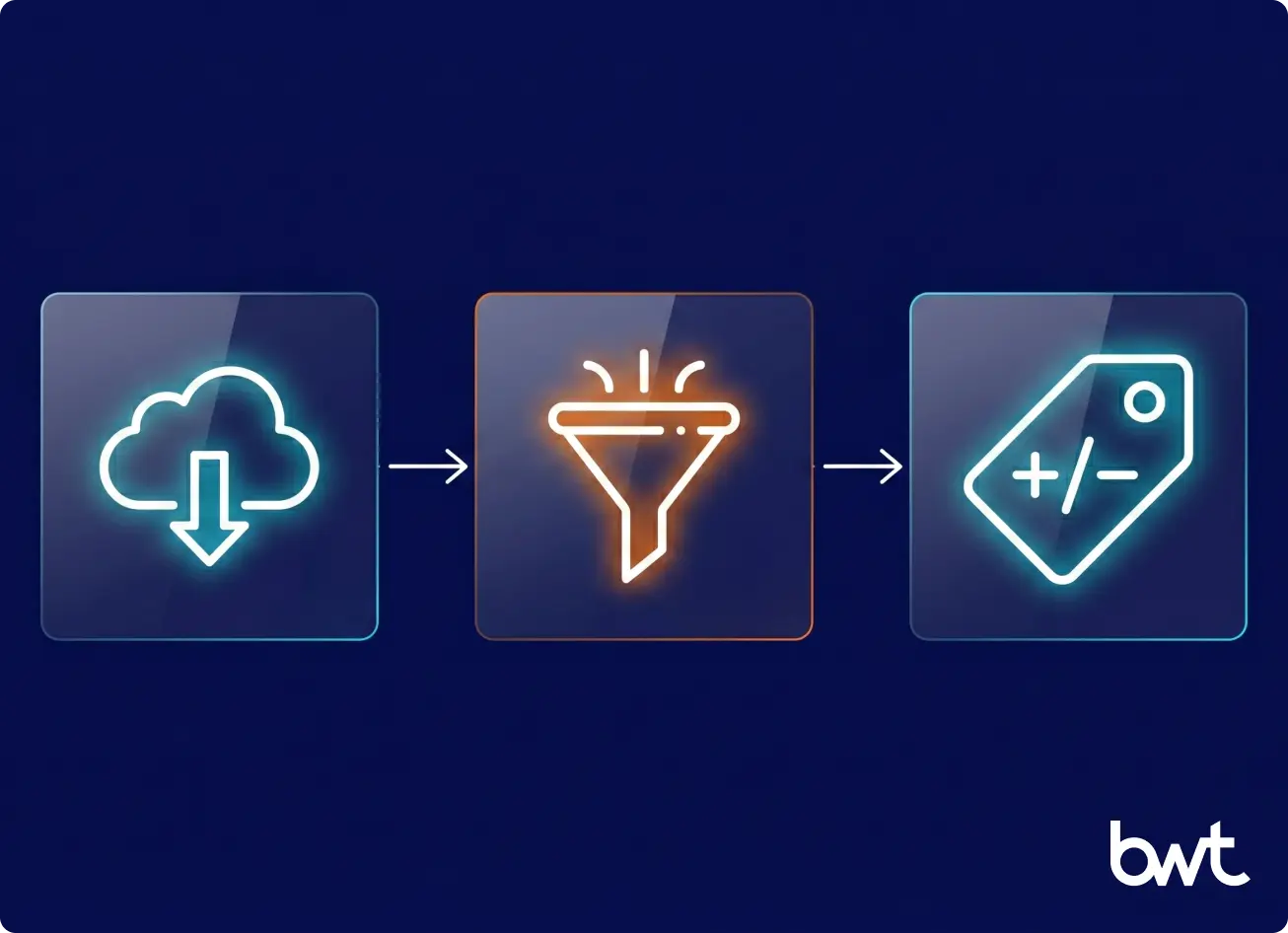

The pipeline follows three core stages: collection, preprocessing, and classification. Each one can break in its own unique way.

Data Collection from Social Platforms

This is where most guides lie to you. They say “connect your social accounts” or “use platform APIs.” Reality is messier.

Here’s what nobody tells you about data analytics on social media at scale: the data access costs alone can kill a project. X’s Enterprise API runs $42,000+ per month as of early 2026. Their Basic tier? $200/month — double what it was in 2024. A newer pay-per-use option caps you at 2 million post reads, per TechCrunch (October 2025). Check current pricing before you budget — X changes these numbers often. Meta locks down public data access. TikTok offers no public sentiment API at all. The 5.66 billion social media users are generating content constantly. Accessing it at the volume you need for real social media data analytics? That’s where the engineering challenge starts — and why social media data scraping becomes a critical capability.

We built a social listening system for a Big Four consulting firm that needed to track public sentiment around government policies across the UAE, Saudi Arabia, and Qatar. Official APIs couldn’t deliver what they needed — coverage across 20+ keyword queries in both English and Arabic, pulling 140,000+ tweets across seven collection sessions. The solution was web scraping for sentiment analysis — building custom data connectors where official APIs either didn’t exist or couldn’t deliver the coverage needed. We also ran proof-of-concept collectors for TikTok (6,000 posts plus 20,000 comments) and Instagram (10,000+ comments).

Performing sentiment analysis means solving the collection problem first.

“Everyone wants to talk about models and accuracy. But in fifteen years of building data collection systems, the number one reason sentiment projects stall is that nobody budgeted for getting the data in the first place.”

— Alex Yudin, Head of Data Engineering at GroupBWT

You need proxy infrastructure, rate limit management, and someone who knows the quirks of each platform’s anti-scraping defenses. Data analytics for social media starts with actually getting the data — and that step alone can take weeks to get right. That’s why many teams turn to dedicated web scraping services rather than building collection infrastructure from scratch.

Text Preprocessing and Cleaning

Social media text is raw in ways that break most models. Hashtags mashed together with no spaces. @mentions that double as sarcasm. URLs crammed mid-sentence. Emoji combos that mean different things on different platforms. Abbreviations your model has never seen. Two languages mixed in the same comment. Feeding this mess directly into a classifier? You’ll get numbers. They just won’t mean anything. This is where data analytics in social media projects either invest in preprocessing or produce garbage insights.
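To make the cleaning step concrete, here is a minimal sketch of a normalizer for a single post. It is an illustration rather than the pipeline we run in production: the regular expressions, the `@user` placeholder, and the `clean_post` name are all assumptions, and it only splits CamelCase hashtags (all-lowercase tags like `#worstupdateever` need a dictionary-based segmenter).

```python
import re

URL_RE = re.compile(r"https?://\S+")
MENTION_RE = re.compile(r"@\w+")
HASHTAG_RE = re.compile(r"#(\w+)")
# Zero-width boundary between a lowercase and an uppercase letter.
CAMEL_RE = re.compile(r"(?<=[a-z])(?=[A-Z])")


def split_hashtag(match: re.Match) -> str:
    """Turn '#WorstUpdateEver' into 'Worst Update Ever'."""
    return CAMEL_RE.sub(" ", match.group(1))


def clean_post(text: str) -> str:
    """Strip URLs, anonymize mentions, split hashtags, collapse whitespace."""
    text = URL_RE.sub(" ", text)
    text = MENTION_RE.sub("@user", text)
    text = HASHTAG_RE.sub(split_hashtag, text)
    return " ".join(text.split())
```

Emoji are deliberately left untouched here, since they often carry the sentiment signal and are better handled by the classifier or a dedicated mapping step.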

A D2C mattress brand we’ve worked with for over five years needed to process 800,000+ reviews across 50+ platforms in five languages — English, German, French, Italian, and Dutch. The preprocessing pipeline uses spaCy with a custom language detection component, Google Cloud Translation for normalization to English (running about $600-700 per quarterly session), and an automated deduplication layer that catches near-identical reviews (anything above 70-80% textual similarity gets merged). That step alone filters out a surprising volume of duplicate content that would skew sentiment scores.
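The near-duplicate merge can be sketched with nothing but the standard library. This is a simplified stand-in, not the client pipeline: it assumes character-level similarity from `difflib.SequenceMatcher` and a 0.8 threshold (the upper end of the 70-80% range above), and the function names are hypothetical.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial edits do not hide duplicates."""
    return " ".join(text.lower().split())


def dedupe(reviews: list[str], threshold: float = 0.8) -> list[str]:
    """Keep the first copy of each review; drop later ones that are too similar."""
    kept: list[str] = []
    for review in reviews:
        candidate = normalize(review)
        is_duplicate = any(
            SequenceMatcher(None, candidate, normalize(existing)).ratio() >= threshold
            for existing in kept
        )
        if not is_duplicate:
            kept.append(review)
    return kept
```

Pairwise comparison like this is quadratic in the number of reviews, so at hundreds of thousands of records you would likely swap in a hashing approach such as MinHash and compare only within candidate buckets.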

Sentiment analysis falls apart without this cleaning stage. Garbage in, garbage out — but the garbage looks different on every platform.

Sentiment Classification Methods

Three types of approaches dominate right now, and which one fits depends on your scale and accuracy needs. Rule-based systems score text against predefined word lists. NLTK’s VADER is the one you’ll see in every tutorial — a 2014 tool that still earns its place because it handles social media quirks (slang, emoji, ALL CAPS) without any training data. Legacy? Sure. But for a quick baseline on English-language posts, it works. Machine learning models need labeled examples to learn from but generalize across domains once trained. Deep learning and NLP models built on transformer architecture read context and nuance at a level the other two can’t touch. They also cost significantly more to run in production — a tradeoff that shapes every pipeline design decision in data analytics in social media.
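For a feel of what “scoring text against predefined word lists” means, here is a toy rule-based scorer. It is not VADER and is far cruder: the word lists, the one-word negation rule, and the function names are all invented for illustration.

```python
POSITIVE = {"love", "great", "good", "excellent", "happy"}
NEGATIVE = {"hate", "terrible", "bad", "broken", "awful"}
NEGATORS = {"not", "never", "no"}


def lexicon_score(text: str) -> int:
    """Sum word polarities; a negator flips only the word right after it."""
    score = 0
    negate = False
    for raw in text.lower().split():
        token = raw.strip(".,!?\"'")
        if token in NEGATORS:
            negate = True
            continue
        polarity = (token in POSITIVE) - (token in NEGATIVE)
        score += -polarity if negate else polarity
        negate = False
    return score


def label(text: str) -> str:
    """Map the numeric score to a three-way label."""
    score = lexicon_score(text)
    return "positive" if score > 0 else "negative" if score < 0 else "neutral"
```

Run it on “Love how this update broke everything” and it answers `positive`, which is exactly the blind spot of word-list methods: they see the word “love” and nothing else.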

Here’s what surprised us in production: the best results come from designing systems that route text intelligently, not from picking one model for everything. One case study that illustrates this: we built a platform that classifies sentiment from social media posts, travel reviews, and forum discussions across nine data sources. The architecture uses five processing stages — and the key design decision was making the first stage cheap. A lightweight filter checks whether each post actually contains an opinion worth classifying. Roughly 80% of collected social media posts aren’t reviews or sentiment-bearing content — they’re noise. The first stage catches that. The 20% that passes moves into a classification layer running on AWS Comprehend, serverless, pennies per request. Only the genuinely ambiguous records — the ones where a mid-tier model isn’t confident — get escalated to an LLM. End result? 5x cheaper than pushing everything through GPT. Accuracy on the records that actually matter to the business stays comparable. This is what social media data analytics looks like when the architecture matches the problem instead of throwing the most expensive model at everything.

The lesson for building sentiment pipelines? Architect the pipeline so each component handles what it’s best at. Cheap filters for volume. Mid-tier models for routine classification. Expensive LLMs only where the nuance justifies the cost.
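The routing idea can be sketched in a few lines. Everything here is a placeholder under stated assumptions: the cue list, the 0.7 confidence cutoff, and the `mid_tier` and `llm` callables are hypothetical stand-ins that you would wire to your own services, and the real platform has five stages rather than three.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical cue words for the cheap first-stage filter.
OPINION_CUES = {"love", "hate", "great", "terrible", "best", "worst", "awful", "recommend"}


@dataclass
class Routed:
    label: str
    stage: str  # which tier produced the label


def has_opinion(text: str) -> bool:
    """Cheap filter: does the post contain any opinion cue at all?"""
    tokens = {t.strip(".,!?\"'").lower() for t in text.split()}
    return bool(tokens & OPINION_CUES)


def route(
    text: str,
    mid_tier: Callable[[str], Tuple[str, float]],  # returns (label, confidence)
    llm: Callable[[str], str],                     # returns a label
    min_confidence: float = 0.7,
) -> Optional[Routed]:
    """Drop noise, accept confident mid-tier labels, escalate the rest."""
    if not has_opinion(text):
        return None  # noise: never reaches a paid model
    label, confidence = mid_tier(text)
    if confidence >= min_confidence:
        return Routed(label, "mid_tier")
    return Routed(llm(text), "llm")
```

The design choice worth copying is the order of the checks: the cheapest test runs on every record, and each more expensive model only sees what the previous tier could not settle.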

Step-by-Step Process to Perform Sentiment Analysis

If you want to know how to perform sentiment analysis from scratch, these six steps cover the full cycle. Most teams jump straight to picking a tool. That’s exactly backwards. Data analytics and social media projects fail when the tooling decision happens before the business question is clear.

Step 1: Define Business Goals and KPIs

What are you actually trying to measure? Brand health over six months? Customer reaction in the 48 hours after a product drop? How your competitors’ audiences feel about their latest move? Each of these goals requires different data sources, a different update frequency, and a different response workflow.

Pick your primary metric before touching any tool. Net Sentiment Score works for broad brand tracking. Aspect-based sentiment (rating individual product attributes separately) works for product teams. Volume of negative mentions with severity weighting works for crisis detection.
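Definitions of Net Sentiment Score vary between vendors. The sketch below assumes one common form: the share of positive mentions minus the share of negative mentions, expressed as a percentage of all classified mentions. The label strings are an assumption too.

```python
from collections import Counter
from typing import Sequence


def net_sentiment_score(labels: Sequence[str]) -> float:
    """Return (positive - negative) / total * 100; 0.0 for an empty input."""
    if not labels:
        return 0.0
    counts = Counter(labels)
    return 100.0 * (counts["positive"] - counts["negative"]) / len(labels)
```

With 6 positive, 3 negative, and 1 neutral mention, the score is 30. Whichever definition you pick, keep it fixed, because the trend only means something if the formula does not change underneath it.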

Step 2: Collect Relevant Social Media Data

Map your platforms before you collect a single post. B2B audiences cluster on LinkedIn and X. Consumer brands? Instagram, TikTok, review sites. Niche communities talk on Reddit and forums your SaaS tool probably doesn’t monitor. Social media and data analytics work together only when the data source matches where your audience actually speaks.

For a cybersecurity client focused on disinformation monitoring, we designed a system to process 900,000 posts per day across four platforms — X, TruthSocial, Gab, and Gettr. Real-time keyword monitoring flagged brand mentions as they appeared. Running sentiment analysis at that velocity isn’t a batch job you run overnight. It demands background processing systems that handle tasks without blocking each other — and knowing how to build a resilient web scraping infrastructure that adapts when platforms change their page structure without warning.
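One way to picture “background processing that doesn’t block” is a bounded queue with a pool of workers. The sketch below uses Python’s `asyncio` purely as an illustration; the source system’s actual stack is not described here, and `classify` is a hypothetical async callable standing in for whatever scoring service you call.

```python
import asyncio
from typing import Awaitable, Callable, Iterable, List, Tuple


async def worker(queue: asyncio.Queue, results: list, classify) -> None:
    """Pull posts forever; a failing post is recorded, not fatal."""
    while True:
        post = await queue.get()
        try:
            results.append((post, await classify(post)))
        except Exception as exc:  # keep the worker alive on bad input
            results.append((post, f"error: {exc}"))
        finally:
            queue.task_done()


async def process_stream(
    posts: Iterable[str],
    classify: Callable[[str], Awaitable[str]],
    n_workers: int = 4,
) -> List[Tuple[str, str]]:
    """Fan posts out to workers; the bounded queue applies back-pressure."""
    queue: asyncio.Queue = asyncio.Queue(maxsize=1000)
    results: List[Tuple[str, str]] = []
    workers = [asyncio.create_task(worker(queue, results, classify)) for _ in range(n_workers)]
    for post in posts:
        await queue.put(post)  # waits when the queue is full
    await queue.join()         # block until every post is processed
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results
```

Results arrive in completion order, not input order, so carry an ID with each post if ordering matters downstream.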

Step 3: Choose a Sentiment Analysis Method

It depends. (Unsatisfying answer, but honest.) Three things decide this: volume of data, how specialized your vocabulary is, and whether owning the pipeline matters for compliance or IP reasons.

If your team needs sentiment scores by Friday and nobody on staff writes Python, a SaaS platform is the obvious move. Brandwatch, Sprout Social, Hootsuite — connect your accounts Tuesday morning, set up keyword tracking over lunch, pull your first report before you leave. $100 to $1,000 a month, depending on volume. Where these tools fall short is vocabulary. A dermatology brand posting about “retinol purging” and getting it classified as negative? That happens. Medical jargon, financial shorthand, industry-specific slang — SaaS models weren’t trained on your niche, and they won’t learn it no matter how long you subscribe.

Python pipelines give you full control. Libraries like NLTK (with its VADER scorer), spaCy, and HuggingFace Transformers let you build a sentiment classifier that fits your exact use case. A basic VADER setup takes minutes and requires no training data — you feed it a sentence, it returns a positive/negative/neutral score. Won’t catch sarcasm. Won’t understand your industry’s slang. But for teams exploring data analytics in social media for the first time, it’s enough to prove whether sentiment scoring helps your use case at all before committing real budget.

When none of that is enough — you’re multilingual, you answer to regulators, your domain vocabulary makes general models useless — that’s custom pipeline territory. Budget shifts to $50K–$150K+ spread over a year or two. Sentiment analysis tools at this tier aren’t products you install. They’re systems someone designs around your specific data, your specific questions, your specific compliance requirements.

Step 4: Train or Configure Your Model

Generic models handle generic language. They choke on domain-specific terms. “Sleep latency” in a mattress review doesn’t mean what a general classifier thinks. Neither does “pressure relief” or “edge support.” When your industry speaks its own language, fine-tuning on domain data isn’t optional — it’s the difference between useful output and expensive noise.

One case study worth mentioning from a travel project: the platform classifies social media posts and reviews about cities across five separate sentiment categories — gastronomy, transportation, safety, attractions, entertainment. One number for the whole city would have been useless. The model had to parse “the food blew my mind but getting across town took two hours” as strongly positive on one axis and strongly negative on another. Social media data analytics only works at this level when the classification matches the question the business is actually asking.
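A rough sketch of the aspect-splitting idea: break the text into clauses, tag each clause with the aspects it mentions, and score clauses separately. The five aspect names come from the project above; the keyword lists, the clause-splitting rule, and the pluggable `score_clause` callable are assumptions for illustration, and a production system would use a trained model for both steps.

```python
import re
from typing import Callable, Dict, List

# Hypothetical keyword lists per aspect.
ASPECT_KEYWORDS = {
    "gastronomy": {"food", "restaurant", "meal", "cuisine"},
    "transportation": {"bus", "metro", "taxi", "traffic", "town"},
    "safety": {"safe", "unsafe", "police", "theft"},
    "attractions": {"museum", "beach", "monument", "tour"},
    "entertainment": {"nightlife", "concert", "bar", "club"},
}
# Split on contrast words and sentence punctuation.
CLAUSE_SPLIT = re.compile(r"\bbut\b|\bhowever\b|[.;!?]", re.IGNORECASE)


def aspect_sentiment(
    text: str, score_clause: Callable[[str], float]
) -> Dict[str, List[float]]:
    """Return one list of clause scores per aspect mentioned in the text."""
    scores: Dict[str, List[float]] = {}
    for clause in CLAUSE_SPLIT.split(text):
        tokens = set(re.findall(r"[a-z']+", clause.lower()))
        for aspect, keywords in ASPECT_KEYWORDS.items():
            if tokens & keywords:
                scores.setdefault(aspect, []).append(score_clause(clause))
    return scores
```

Given the example sentence, the first clause lands under `gastronomy` and the second under `transportation`, so a positive and a negative score never get averaged into a meaningless neutral.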

Step 5: Analyze and Interpret Results

A 65% positive sentiment score. Good or bad? Depends entirely on context. Airlines and telecoms live at lower baselines than electronics brands — 65% might be the best quarter they’ve ever had.

What matters more than any single number is the trend. Build dashboards with clear visualization of how sentiment moves over weeks, not just where it sits today. We use Metabase for monitoring our social media data analytics pipelines — collection volumes, filter rates, errors, sentiment breakdown, all in one view. When negative sentiment spikes on a random Tuesday afternoon, the team traces it to the triggering event within minutes. Not after the news cycle picks it up. Before.
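Trend-based alerting can be as simple as comparing today’s negative share with a trailing baseline. This is a minimal sketch, assuming daily aggregates, a 7-day window, and a z-score cutoff of 3; none of those values come from our dashboards, so tune them on your own history.

```python
from statistics import mean, stdev
from typing import List, Sequence


def negative_spikes(
    daily_negative_share: Sequence[float],
    window: int = 7,
    z_threshold: float = 3.0,
) -> List[int]:
    """Return indexes of days whose negative share jumps above the baseline."""
    spikes = []
    for i in range(window, len(daily_negative_share)):
        baseline = daily_negative_share[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            continue  # perfectly flat baseline: z-score is undefined, skip
        if (daily_negative_share[i] - mu) / sigma >= z_threshold:
            spikes.append(i)
    return spikes
```

Note that a spike inflates the baseline for the following days, which is usually what you want: the alert fires once on the jump instead of every day until the storm passes.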

Step 6: Turn Insights into Action

A dashboard full of sentiment scores that nobody acts on? Waste of money. The value shows up when negative product feedback routes straight to the product team without anyone filing a ticket. When a potential crisis escalates to communications before Twitter finds it. When competitive sentiment data feeds into next quarter’s positioning. The investment pays off at the action layer, not the reporting layer.

Our Big Four consulting client used exactly this approach: when sentiment around a government policy shifted negative across Arabic-language social posts in one region, the team flagged it to their client’s communications division within hours — before mainstream media picked up the story. The social media data was there. The pipeline surfaced the sentiment shift. The team acted on it before it became a crisis.

Tools for Sentiment Analysis

The tooling landscape splits into three tiers, and picking the wrong one wastes either money or time.

“We’ve seen companies spend six months on a custom pipeline when a $200/month SaaS would have solved their problem. We’ve also seen teams outgrow their SaaS tool in three months and wish they’d started building earlier. The answer is always in the data volume and the questions you’re asking.”

— Oleg Boyko, CCO at GroupBWT

No-Code and SaaS Tools

For teams that need answers without building infrastructure, these platforms handle collection through classification in one interface.

| Tool | Best For | Key Strength | Starting Price |
| --- | --- | --- | --- |
| Brandwatch | Enterprise social listening | Broad platform coverage, AI insights | Custom quote |
| Sprout Social | Mid-market social teams | Publishing + analytics + sentiment integrated | ~$249/mo |
| Hootsuite Insights | Multi-platform monitoring | Team collaboration, broad channel support | ~$99/mo |
| Talkwalker | Global brand monitoring | 150+ languages, image recognition | Custom quote |
| MonkeyLearn | Custom text analysis | No-code ML model builder | Free tier available |

SaaS is the fast path for teams that need results without building infrastructure.

Python Libraries and NLP Frameworks

When SaaS tools don’t give you enough control, Python is where custom work happens, including the web scraping pipelines that feed data science work. NLTK’s VADER analyzer handles social media text well: it accounts for slang, emoji patterns, and capitalization. spaCy excels at preprocessing such as tokenization and entity recognition, and it can host a custom language detection component. The mattress brand project uses spaCy in exactly that way, as the backbone for processing reviews across five languages. HuggingFace Transformers gives you access to pre-trained models like DistilBERT, a distilled version of BERT-base that retains most of its accuracy while running significantly faster, which makes it a practical choice for production pipelines where speed and cost matter.

Enterprise AI Platforms

At enterprise scale, data analytics means combining multiple SaaS tools into a unified layer that nobody has to reconcile manually. We built a customer data platform for a European cosmetics enterprise that pulls data from five channels — Snapchat, TikTok, Dash Social, Quid (a consumer intelligence platform), and Facebook/Instagram — into a single CDP built on an enterprise data warehouse designed for full traceability and auditability. That’s advanced sentiment analysis at enterprise scale, where the challenge isn’t any single tool but making them all talk to each other.

If you’re working with a large dataset of social posts, or building your own dataset for analysis, the data engineering required to maintain these pipelines is a project in itself.

Common Challenges in Sentiment Analysis

No sentiment system works perfectly out of the box. Every team we’ve worked with hits at least one of these walls, usually within the first month of production.

“A model that scores 90% in your test environment will drop to 70% the first week it sees real user data. Demo datasets are clean. Production is not. The teams that succeed are the ones who budget for that gap from day one instead of treating it as a surprise.”

— Dmytro Naumenko, CTO at GroupBWT

Sarcasm and Context Understanding

“Love how this update broke everything.” A human reads frustration. Most classifiers read positive sentiment. Sarcasm flips polarity, and it’s everywhere on social media.

The good news: sarcasm detection has improved significantly — accuracy reached 84% in 2026, up from 61% just a few years prior. LLMs are surprisingly good at recognizing irony in isolation. GPT-4o scored 98% on TweetEval’s irony detection tasks per the same AIMultiple benchmark. But recognizing that a sentence is sarcastic and correctly classifying its actual sentiment are two different problems. The same model that catches irony still misreads the resulting emotional tone more often than you’d expect.

Multilingual Data

Operating across languages multiplies complexity fast. XLM-RoBERTa achieves a 90.3% F1 score on cross-lingual sentiment tasks, per research published in Nature. Standard multilingual BERT trails at 84.5%. The gap matters when you’re processing content in Arabic, French, Dutch, and German simultaneously.

Our Big Four consulting client needed bilingual search across English and Arabic — two languages with completely different sentence structures and sentiment markers. The mattress brand processes five European languages quarterly. In both cases, we handle language detection first, translate to a common language for classification, then map results back. AI sentiment processing covers 58 languages in 2026, up from 31, but low-resource languages and code-switched content (mixing languages mid-sentence) still trip up every model we’ve tested.
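The detect, translate, classify, map-back flow looks roughly like this. The three callables are hypothetical injection points, not real library signatures: plug in whichever language detector, translation service, and English classifier you actually use.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Scored:
    original: str   # untouched source text, kept so results map back
    language: str   # detected language code
    label: str      # sentiment label from the English classifier


def classify_multilingual(
    texts: Iterable[str],
    detect_language: Callable[[str], str],
    translate_to_english: Callable[[str, str], str],  # (text, source_lang) -> English
    classify_english: Callable[[str], str],
) -> List[Scored]:
    """Detect language, translate non-English text, classify, keep the original."""
    results = []
    for text in texts:
        lang = detect_language(text)
        english = text if lang == "en" else translate_to_english(text, lang)
        results.append(Scored(original=text, language=lang, label=classify_english(english)))
    return results
```

Keeping the original text and language code next to the label is what makes the “map results back” step trivial, and it lets you audit translation errors later.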

Emoji and Slang Interpretation

Context changes everything. “This product is fire 🔥” is positive. “This update is a dumpster fire 🔥” is very negative. Same emoji, opposite sentiment. The cosmetics brand project we support processes consumer intelligence data from Quid, which includes Unicode text, translated content, and emoji — and the emoji detection pipeline had to be custom-built because off-the-shelf tools treated all fire emoji as equivalent.

Bias and Model Accuracy

Achieving the most accurate sentiment analysis requires accepting an uncomfortable truth: no model is reliable across all tasks. When researchers benchmarked eight LLMs on the TweetEval dataset in 2026, the top-performing model scored 79% overall. But the same tests revealed dramatic inconsistency: one model scored 82% on emotion detection but only 50% on hatefulness detection. Another dropped to 52% on certain task types while performing well on others. The takeaway isn’t that LLMs are bad at sentiment — it’s that you need to test any model on your specific task before trusting it in production.

Model drift is real too. Slang evolves. New memes appear. A model trained six months ago shows measurable accuracy drops on current social content. Reliable sentiment analysis demands ongoing validation, not a one-time setup.
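Testing a model on your own task starts with a labeled sample and per-label scores, because a single accuracy number hides exactly the kind of inconsistency described above. A minimal, dependency-free sketch (the function name and label strings are illustrative):

```python
from collections import Counter
from typing import Dict, Sequence


def per_label_f1(gold: Sequence[str], predicted: Sequence[str]) -> Dict[str, float]:
    """Compute F1 separately for every label seen in gold or predictions."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, predicted):
        if g == p:
            tp[g] += 1
        else:
            fp[p] += 1  # predicted label was wrong
            fn[g] += 1  # true label was missed
    scores = {}
    for label in set(gold) | set(predicted):
        predicted_n = tp[label] + fp[label]
        actual_n = tp[label] + fn[label]
        precision = tp[label] / predicted_n if predicted_n else 0.0
        recall = tp[label] / actual_n if actual_n else 0.0
        total = precision + recall
        scores[label] = 2 * precision * recall / total if total else 0.0
    return scores
```

Re-running the same check on a freshly labeled sample every few weeks is the cheapest drift detector you can build.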

Best Practices for Accurate Sentiment Analysis

Getting a sentiment pipeline running is one thing. Keeping it accurate over months and years is where data analytics in social media gets genuinely hard.

Continuous Model Training

Set retraining schedules. Weekly for fast-moving consumer brands. Monthly for most B2B use cases. Quarterly at minimum. Our travel platform retrains its classifiers weekly as new review data flows in, using Metabase to monitor whether sentiment distributions shift unexpectedly. If they do, that’s a signal the model is drifting or the source data has changed.
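Checking whether sentiment distributions have shifted can be reduced to comparing two label histograms. The sketch below uses total variation distance with a 0.15 threshold; both the metric and the threshold are assumptions chosen for simplicity, not what our monitoring dashboards compute.

```python
from collections import Counter
from typing import Dict, Sequence


def label_distribution(labels: Sequence[str]) -> Dict[str, float]:
    """Convert a list of labels into label -> share of total."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: n / total for label, n in counts.items()}


def total_variation(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Half the sum of absolute differences; 0 = identical, 1 = disjoint."""
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(l, 0.0) - q.get(l, 0.0)) for l in labels)


def drifted(reference: Sequence[str], current: Sequence[str], threshold: float = 0.15) -> bool:
    """Flag the current window if its label mix moved too far from the reference."""
    distance = total_variation(label_distribution(reference), label_distribution(current))
    return distance > threshold
```

A flag here does not tell you whether the model drifted or the audience genuinely changed its mind, so treat it as a prompt for a human to read a sample of recent posts.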

Combining Quantitative and Qualitative Analysis

Numbers tell you what’s happening. Reading actual posts tells you why. Tracking sentiment gives you the scale to spot patterns across thousands of mentions, but the best insights still come from humans reviewing the most emotionally intense examples. We’ve seen cases where a perfectly calibrated model missed a rising trend because the language was too new — human reviewers caught it within hours.

Ethical and Legal Considerations

This is where most companies don’t think carefully enough. Under GDPR, sentiment analysis may qualify as “profiling” — automated processing that evaluates personal characteristics like behavior and preferences. When profiling feeds into decisions that significantly affect individuals (ad targeting, credit scoring, hiring), GDPR imposes strict requirements around consent. The legal specifics depend on your use case, jurisdiction, and data handling practices — this is an area where companies should consult legal counsel rather than rely on general guidance.

Data minimization is a core GDPR principle. Collect only the data you need for the specific analysis, and nothing more. The EU AI Act adds another layer — emotion recognition systems face specific regulatory scrutiny, and some applications are restricted entirely.

Our mattress brand client takes this seriously: a BWT Statement Report gets signed quarterly and submitted to UK oversight bodies (the CMA and Clearcast) verifying review data accuracy. For them, this isn’t analytics. It’s a legal shield.

Real-World Use Cases

Sentiment analysis shows up in nearly every industry. Here are the four most common patterns we see in production.

Brand Monitoring

Nike’s “Dream Crazy” campaign generated $163 million in earned media value and a 31% sales increase. That didn’t happen by accident — sentiment tracking guided the strategy. Industry data consistently shows that sentiment-aware advertising delivers stronger engagement and significantly lower acquisition costs compared to campaigns optimized on reach alone.

Our consulting client used sentiment monitoring to track public perception of government policies across three Middle Eastern countries. In regions where press is controlled, social media is the only reliable gauge of public opinion. That example shows the tool working beyond marketing — it’s governance-grade intelligence.

For brands looking to track competitor sentiment across retail channels, brand monitoring scraping provides the data foundation.

Product Feedback Analysis

Companies using negative sentiment alerts in their product feedback loops consistently report measurable churn reduction and higher save rates on at-risk accounts.

We’ve maintained a digital shelf monitoring platform for over seven years that covers 70+ retailers across dozens of countries, serving FMCG brands like Kimberly-Clark and Panasonic. The Rating & Reviews module collects and monitors sentiment across all those touchpoints. Scraping reviews at that scale requires infrastructure that handles site-specific quirks, anti-bot defenses, and data normalization across dozens of different review formats.

Crisis Detection

Speed saves brands. Sentiment-based crisis detection consistently identifies issues hours earlier than volume-based alert thresholds — in some tests, up to 18 hours sooner.

The disinformation monitoring system we designed for a cybersecurity firm processed 900,000 posts daily across four social platforms. Even at that velocity, one honest caveat: you can’t guarantee 100% parity with a platform’s official feed. Algorithms show different content to different users in different countries. Building for coverage rather than perfection is the realistic approach.

Competitive Intelligence

Sentiment data sharpens competitive positioning. Tracking how audiences react to competitor launches, campaigns, and product changes on social media gives brands a window into market dynamics that surveys and reports can’t match in speed.

Our cosmetics enterprise client tracks competitor sentiment across social channels — Snapchat, TikTok, Instagram, and Facebook — pulling data into a unified consumer intelligence layer. When a competitor’s product launch triggered a wave of negative social media reactions, the client’s marketing team spotted the pattern within days and adjusted their own campaign positioning accordingly. For deeper reading on data-driven competitive intelligence analysis, we’ve written about the methodology separately.

When to Use an AI-Powered Sentiment Analysis Solution

Scaling Beyond Manual Tools

The transition point usually looks like this: someone on the team is spending hours in ChatGPT, copy-pasting social posts, manually prompting for sentiment scores, and assembling results in a spreadsheet. Our Big Four client’s analyst described it bluntly: “I had to do many revisions myself, prompted a couple of times — it’s tiresome, it takes a lot of time sometimes, and it’s annoying.”

When manual processing becomes a bottleneck, sentiment analysis automation isn’t a luxury. It’s overdue.

Integrating Sentiment into Business Intelligence

Sentiment data gets powerful when it connects to other business metrics. Pipe it into your data analytics layer alongside sales figures, support tickets, and campaign performance. Build a customer data platform that unifies social sentiment with transaction data. That’s where you move from “people are unhappy” to “unhappy customers in this segment are churning at 3x the normal rate after this specific product update.”
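Once sentiment sits next to customer records, the segment-level question becomes a simple group-by. A minimal sketch, assuming each customer record is a dict with hypothetical `segment`, `sentiment`, and `churned` fields; in practice this would be a SQL query or a dataframe operation in your warehouse.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def churn_rates(customers: Iterable[dict]) -> Dict[Tuple[str, str], float]:
    """Return churn rate per (segment, sentiment) pair."""
    totals = defaultdict(lambda: [0, 0])  # key -> [customers, churned]
    for customer in customers:
        key = (customer["segment"], customer["sentiment"])
        totals[key][0] += 1
        totals[key][1] += int(customer["churned"])
    return {key: churned / n for key, (n, churned) in totals.items()}
```

Comparing the rate for `("smb", "negative")` against `("smb", "positive")` is what turns “people are unhappy” into a number the retention team can act on.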

Building Custom Sentiment Models

How do you do sentiment analysis at enterprise scale? At some point, you outgrow SaaS. The thresholds vary, but common triggers include needing domain-specific classification, processing in languages your current tool doesn’t cover well, requiring real-time processing at volumes above what APIs can handle cost-effectively, or needing to own the pipeline for compliance reasons. Custom implementations at this level typically cost $50,000-$150,000+ over 12-26 months, versus $100-$1,000+ monthly for SaaS platforms.

Final Thoughts on Implementing Sentiment Analysis

Sentiment analysis is no longer experimental. The market is approaching $6.5 billion. The tools work. The business case is proven — stronger customer retention, measurable churn reduction, crises detected hours earlier than traditional alerts.

What separates companies that get value from sentiment analysis and those that don’t isn’t the technology. It’s the pipeline. Clean data in, well-chosen models in the middle, action at the end. Skip any stage and you’re wasting budget.

If you’re evaluating whether to build or buy, start with a clear picture of your data volume, your language requirements, and your accuracy tolerance. An experienced AI consulting service can help you map the right approach. SaaS handles 80% of use cases well. The remaining 20% — multilingual, domain-specific, high-volume, compliance-sensitive — is where custom engineering earns its cost back.

Sentiment analysis uses natural language processing and machine learning to classify text as positive, negative, or neutral. On social media, it reads the emotional tone in comments, reviews, and public posts to provide signals teams can act on. Standard engagement metrics confirm conversations happen but reveal nothing about emotional direction. Real-time sentiment data enables crisis teams to catch threats earlier, product teams to separate one-off complaints from systemic issues, and marketing to measure whether campaigns land the way they intended.

SaaS platforms like Brandwatch, Sprout Social, and Hootsuite Insights include built-in classification. Setup involves connecting your social accounts and defining keywords or topics to monitor — most platforms generate sentiment reports the same day. MonkeyLearn offers drag-and-drop interfaces for building custom classifiers without writing code. The trade-off is flexibility: no-code tools work well for standard brand monitoring but struggle with domain-specific language or multilingual content.

Analytics tracks quantitative metrics — impressions, clicks, shares, follower growth. Sentiment evaluates the emotional tone behind those interactions. A post with 50,000 impressions but predominantly frustrated comments appears strong by analytics standards but negative by sentiment. The two are complementary, not interchangeable. Effective strategies use both to get a complete picture.

Sarcasm remains the hardest problem. “Love how this update broke everything” reads as positive to most classifiers because the words are positive. Multilingual content adds complexity — models trained on English struggle with Arabic, Hindi, or code-switched text. Emoji interpretation depends on context. And model drift is constant: language evolves, new slang appears, and a classifier trained six months ago shows measurable accuracy drops on current content. In recent benchmarks, even the best-performing LLM scored only 69% on standard social media sentiment classification tasks.

Costs vary significantly by approach. Open-source Python libraries (NLTK, spaCy, Transformers) carry no licensing fees; you pay for engineering time and cloud compute. SaaS platforms run $100 to $1,000+ per month depending on volume and features. Custom enterprise implementations requiring domain-specific models, multilingual support, or compliance-grade pipelines typically cost $50,000 to $150,000+ over 12 to 26 months. The right choice depends on your data volume, language requirements, and how much control you need over the pipeline.
