Whether you need to monitor sanctions risks, track competitors’ pricing, or detect brand crises instantly, reliable news data extraction is the foundation.

News article data extraction only creates business value if the dataset stays correct after the next redesign. At GroupBWT, we design and operate production pipelines that keep core fields stable through HTML drift, paywalls, and shifting compliance rules.

This is the exact pipeline GroupBWT builds and operates for clients who cannot afford silent data loss. If you want us to run it end-to-end, start with our data extraction service and web scraping services, or see how we support content aggregation for multi-source feeds.

“The real frontier isn’t AI or the Cloud—it’s data truth.”

— Alex Yudin, Head of Data Engineering & Web Scraping Lead, GroupBWT

Your output should be a dataset, not a document

If your goal is to extract structured data from news article pages at scale, define a minimum schema and QA gates before you write your first crawler.

| Field | Example | Minimum quality gate |
| --- | --- | --- |
| url | https://… | Unique + canonicalised |
| source | Reuters | Allowlist/denylist + licensing notes |
| published_at | 2026-02-04 10:31 | Parseable datetime |
| headline | … | Non-empty |
| body_text | … | Minimum length + boilerplate removed |
| language | en / de / uk | ISO code + routed correctly |
| entities | Apple, ECB | NER coverage threshold |
| topic | “risk”, “M&A” | Controlled taxonomy |
| sentiment_score (optional) | -0.31 | Bounded range + versioned model |
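As a minimal sketch of how the schema and gates above could be expressed in code, the example below uses a dataclass plus a validator that returns the names of failing fields. The thresholds (`MIN_BODY_CHARS`, the `[-1, 1]` sentiment range), the language set, and the function name `qa_failures` are illustrative assumptions, not GroupBWT's actual implementation.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional

MIN_BODY_CHARS = 200                  # assumed threshold; tune per source
KNOWN_LANGUAGES = {"en", "de", "uk"}  # assumed set of routed languages


@dataclass
class ArticleRecord:
    url: str
    source: str
    published_at: str                 # e.g. "2026-02-04 10:31"
    headline: str
    body_text: str
    language: str
    entities: list[str] = field(default_factory=list)
    topic: Optional[str] = None
    sentiment_score: Optional[float] = None


def qa_failures(record: ArticleRecord, allowed_sources: set[str]) -> list[str]:
    """Return the names of fields that fail their minimum quality gate."""
    failures = []
    if record.source not in allowed_sources:
        failures.append("source")
    try:
        datetime.strptime(record.published_at, "%Y-%m-%d %H:%M")
    except ValueError:
        failures.append("published_at")
    if not record.headline.strip():
        failures.append("headline")
    if len(record.body_text) < MIN_BODY_CHARS:
        failures.append("body_text")
    if record.language not in KNOWN_LANGUAGES:
        failures.append("language")
    score = record.sentiment_score
    if score is not None and not -1.0 <= score <= 1.0:  # assumed bounded range
        failures.append("sentiment_score")
    return failures
```

Returning a list of failing field names, rather than a single pass/fail flag, makes it easier to count failures per field and per source later on.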

Why data extraction from news articles breaks in production

The challenges of news data extraction show up when you go from “it works on my laptop” to “it runs every 10 minutes for 200 sources.”

Failures happen most often because teams optimise for crawl throughput, then discover too late that quality, duplication, and compliance are what break dashboards.

Unstructured content is normal (unstructured data extraction news)

News pages look structured to humans, but the underlying code often changes completely between sections, archives, and updated templates. If you don’t design for variability, you “capture” pages but cannot normalise them into stable fields.

JavaScript, sessions, and anti-bot create false completeness

A successful HTTP 200 does not mean you extracted the article body. Modern publishers often hide content until a user interacts with the page (like scrolling or clicking ‘Show more’), or block automated access entirely.

Language multiplies the QA surface (multilingual news data extraction)

Going multilingual is not “just translation.” It changes tokenisation, entity rules, and dedupe behaviour, so you need per-language routing and quality gates. The payoff is instant alerts when your competitor launches in a new market, even if they only mentioned it in a local German press release.

Duplicates are not cosmetic—they corrupt analytics

Syndication can turn one story into 40 near-copies that skew counts and alerting. Without duplicate content detection in news data, you don’t have signals—you have noise.
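One common way to detect near-copies is word shingling combined with Jaccard similarity. The sketch below is illustrative only: the shingle size, the `0.8` threshold, and the greedy clustering strategy are assumptions, and the pairwise comparison would need an approximate method such as MinHash to scale to large corpora.

```python
import re


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping word n-grams (shingles)."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles the two sets have in common."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def cluster_near_duplicates(articles: dict[str, str], threshold: float = 0.8) -> list[list[str]]:
    """Greedily group article ids whose text is similar to a cluster's first member."""
    clusters: list[tuple[set, list[str]]] = []
    for article_id, text in articles.items():
        signature = shingles(text)
        for representative, members in clusters:
            if jaccard(signature, representative) >= threshold:
                members.append(article_id)
                break
        else:
            # No existing cluster is close enough, so start a new "story".
            clusters.append((signature, [article_id]))
    return [members for _, members in clusters]
```

Each resulting cluster can then be counted as one story in analytics and alerting, with the member URLs kept as provenance.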

Real-time requirements amplify every weakness

Near-real-time pipelines collect failures faster than you can debug them. If you need tight SLAs, build backpressure, replay, and monitoring from day one.

Compliance constraints shape what you can store and publish

Rights, ToS, copyright, and privacy requirements define what you can store, how long, and how you can redistribute. In most real teams, legal review lags behind MVP—so your architecture should make compliance easier to retrofit, not impossible.

Prevent Data Loss: Choose Tools That Handle Anti-Bot and Layout Changes

When extracting data from news websites, pick tools based on what breaks in production: rendering, anti-bot, drift, and dedupe—not what’s trendy on GitHub.

If you need a refresher on fundamentals, see our overview of what web scraping is in data science practice.

Acquisition: reach the article reliably

We typically combine:

- Scrapy for high-throughput crawling and per-domain rate control.

- Playwright (or Selenium) when JavaScript hides the body text.

- Request scheduling + retries + domain-level budgeting.

- Proxy pools and IP rotation, used only where legally appropriate and necessary.
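The scheduling, retry, and domain-level budgeting ideas in the list above can be sketched with the standard library alone. This is a simplified illustration, not how Scrapy implements rate control: `DomainBudget`, `fetch_with_retries`, and the `fetch` callable are hypothetical names, and the clock and sleep functions are injectable only so the logic can be tested without waiting.

```python
import time
from urllib.parse import urlparse


class DomainBudget:
    """Enforces a minimum delay between requests to the same domain."""

    def __init__(self, min_interval_s: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval_s = min_interval_s
        self._clock = clock
        self._sleep = sleep
        self._last_request: dict[str, float] = {}

    def wait_for(self, url: str) -> float:
        """Block until the domain may be requested again; return the delay applied."""
        domain = urlparse(url).netloc
        now = self._clock()
        last = self._last_request.get(domain)
        delay = 0.0
        if last is not None:
            delay = max(0.0, self.min_interval_s - (now - last))
        if delay:
            self._sleep(delay)
        self._last_request[domain] = now + delay
        return delay


def fetch_with_retries(fetch, url, budget, max_attempts=3, base_backoff_s=1.0, sleep=time.sleep):
    """Call fetch(url), retrying with exponential backoff and re-raising the last error."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        budget.wait_for(url)
        try:
            return fetch(url)
        except Exception:  # a real crawler would catch only retryable errors
            if attempt == max_attempts - 1:
                raise
            sleep(base_backoff_s * 2 ** attempt)
```

Keeping the budget per domain rather than global means one slow or strict publisher does not throttle the rest of the crawl.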

If your first target is Google News, our guide on how to scrape Google News will save you time on source discovery and pagination edge cases.

Parsing: deterministic first, with controlled fallbacks

Deterministic parsing (selectors, boilerplate removal) is still the fastest and most testable way to extract core fields like headline, published_at, and canonical URL.

In practice, however, we see production-grade hybrids in news article extraction: selector-based extraction with LLM-assisted fallback when layouts drift, and vision-based parsing for unknown templates (screenshots/PDFs) when HTML is unreliable.

| Approach | Best for | Known risks |
| --- | --- | --- |
| Deterministic (selectors + boilerplate removal) | Core fields at scale | Brittle to drift; needs maintenance |
| Hybrid (rules + controlled LLM fallback) | Body_text recovery when selectors fail | Cost, latency, and hallucination risk; requires QA |
| Vision/Document parsing (OCR + layout models) | Unknown layouts, images, PDFs | Lower precision; heavier infra |
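A "deterministic first, controlled fallback" chain can be sketched as an ordered list of extractors, where the result records which method produced the value. Everything here is illustrative: the regex-based `<h1>` extractor stands in for real selectors (production code would normally use an HTML parser), and any LLM or vision fallback would simply be another callable appended to the list.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

Extractor = Callable[[str], Optional[str]]


@dataclass
class Extraction:
    value: Optional[str]
    method: str  # which extractor produced the value, kept for QA and auditing


def extract_headline_from_h1(html: str) -> Optional[str]:
    """Deterministic rule: take the text of the first <h1> element."""
    match = re.search(r"<h1[^>]*>(.*?)</h1>", html, flags=re.IGNORECASE | re.DOTALL)
    if not match:
        return None
    text = re.sub(r"<[^>]+>", "", match.group(1)).strip()
    return text or None


def extract_with_fallbacks(html: str, extractors: list[tuple[str, Extractor]],
                           min_chars: int = 5) -> Extraction:
    """Try extractors in order and return the first plausible value."""
    for name, extractor in extractors:
        value = extractor(html)
        if value and len(value) >= min_chars:
            return Extraction(value, name)
    # Nothing passed the gate: surface the gap instead of guessing.
    return Extraction(None, "none")
```

Recording the `method` alongside the value lets QA sample fallback-produced records at a higher rate than selector-produced ones.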

Enrichment: Turn Raw Text into Market Alerts and Competitive Intel

Enrichment is where teams overpromise. Entities, topics, novelty, and event detection are useful—if you treat them as probabilistic features and keep a human QA loop for high-stakes alerts.

One specific pitfall is sentiment analysis of news articles: sarcasm, mixed tone, domain jargon, and source bias can turn “sentiment” into a noisy metric without calibration.

TFNP keeps the pipeline stable even when publishers drift

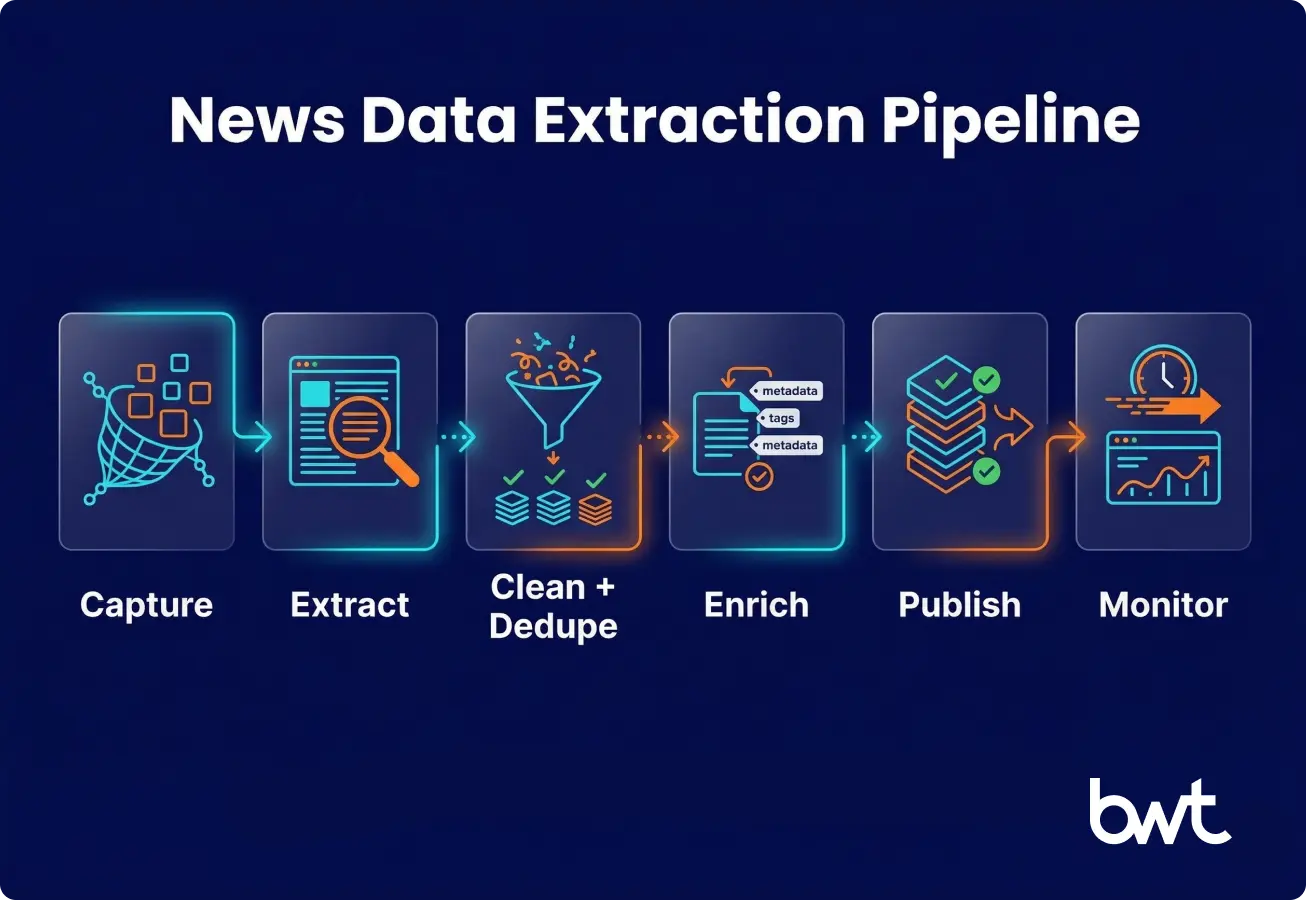

Note: Truth-First News Pipeline (TFNP) is GroupBWT’s reference production model for data extraction from news articles, turning raw pages into governed datasets:

Capture → Extract → Clean + Dedupe → Enrich → Publish → Monitor.

In data extraction from news, stages are introduced progressively based on risk and budget. We run this pipeline for clients when “freshness + correctness” is the product, and missing one publisher for a day is unacceptable. In production, we guarantee replayability: every record can be reprocessed after a parser update.

Stage 1 — Capture: store raw pages so you can replay

Store raw HTML (and rendered output where needed) so you can re-run extraction without re-crawling.

- Timestamped snapshots

- HTTP status, redirects, canonical URLs

- Publisher metadata (section, crawl time, crawl method)
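A replayable capture step can be as simple as writing the raw bytes and a metadata file side by side. The sketch below assumes a local directory layout (`<root>/<url hash>/<timestamp>/`) and hypothetical function names; a production system would more likely target object storage, but the stored fields mirror the list above.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def store_snapshot(root, url: str, final_url: str, status: int, html: str, crawl_method: str) -> Path:
    """Write raw HTML plus capture metadata to a timestamped directory."""
    captured_at = datetime.now(timezone.utc)
    raw = html.encode("utf-8")
    url_key = hashlib.sha256(url.encode("utf-8")).hexdigest()[:16]
    snapshot_dir = Path(root) / url_key / captured_at.strftime("%Y%m%dT%H%M%S%fZ")
    snapshot_dir.mkdir(parents=True, exist_ok=True)
    # Bytes are written as-is so the content hash stays valid on every platform.
    (snapshot_dir / "page.html").write_bytes(raw)
    meta = {
        "url": url,
        "final_url": final_url,        # after redirects
        "status": status,
        "crawl_method": crawl_method,  # e.g. "http" or "rendered"
        "captured_at": captured_at.isoformat(),
        "content_sha256": hashlib.sha256(raw).hexdigest(),
    }
    (snapshot_dir / "meta.json").write_text(json.dumps(meta, indent=2), encoding="utf-8")
    return snapshot_dir


def load_snapshot(snapshot_dir) -> tuple[dict, str]:
    """Read a snapshot back so extraction can be re-run without re-crawling."""
    snapshot_dir = Path(snapshot_dir)
    meta = json.loads((snapshot_dir / "meta.json").read_text(encoding="utf-8"))
    html = (snapshot_dir / "page.html").read_bytes().decode("utf-8")
    return meta, html
```

After a parser update, replay means iterating over stored snapshots and calling the new extractor on the output of `load_snapshot`.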

Stage 2 — Extract: data extraction of news articles into stable fields

Convert raw pages into structured, reusable records.

- Headline/body/author/date extraction

- Paywall detection flags

- Content length + quality heuristics
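The paywall flag and content-length heuristic from the list above can be sketched as a single assessment function. The marker phrases and the 400-character minimum are assumptions chosen for illustration; real values would need tuning per publisher and per language.

```python
# Assumed teaser phrases; real lists are publisher- and language-specific.
PAYWALL_MARKERS = ("subscribe to continue", "sign in to read", "already a subscriber")


def assess_body(body_text: str, min_chars: int = 400) -> dict:
    """Flag extracted bodies that look truncated or paywalled."""
    text = body_text.strip()
    too_short = len(text) < min_chars
    has_marker = any(marker in text.lower() for marker in PAYWALL_MARKERS)
    return {
        "char_count": len(text),
        "too_short": too_short,
        # A marker alone is not enough: full articles can carry subscribe banners.
        "paywall_suspected": too_short and has_marker,
        "usable": not too_short,
    }
```

A check like this also catches the "HTTP 200 but no article body" case, because a successful response with an empty or teaser-length body is marked unusable instead of being stored as a complete record.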

Stage 3 — Clean + Dedupe: protect analytics from duplication

Normalise fields and cluster duplicates into a single “story” entity.

- Canonical URL rules

- Smart content matching (detecting when the same story appears on 40 different sites)

- Context checks (ensuring ‘Apple’ refers to the company, not the fruit)

- Entity overlap checks
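Canonical URL rules are usually the cheapest dedupe layer. The sketch below shows one possible rule set using only `urllib.parse`; stripping `www.`, dropping the listed tracking parameters, and removing trailing slashes are assumptions that do not hold for every publisher, so treat the rules as per-source configuration.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

TRACKING_PREFIXES = ("utm_",)           # assumed tracking parameter prefixes
TRACKING_PARAMS = {"fbclid", "gclid"}   # assumed tracking parameter names


def canonicalise_url(url: str) -> str:
    """Normalise a URL so trivially different links map to one key."""
    parts = urlsplit(url.strip())
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    # Keep the port only when it is not the default for the scheme.
    port = parts.port
    if port and (scheme, port) not in {("http", 80), ("https", 443)}:
        host = f"{host}:{port}"
    path = parts.path.rstrip("/") or "/"
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS and not key.startswith(TRACKING_PREFIXES)
    ]
    query = urlencode(sorted(kept))
    # The fragment is dropped because it does not change the document served.
    return urlunsplit((scheme, host, path, query, ""))
```

URL canonicalisation only catches copies on the same site; syndicated copies on other domains still need content-level matching.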

Stage 4 — Enrich: add entities, topics, and event flags

Add the fields that stakeholders actually query.

- NER and entity linking (tickers, registries)

- Topic tags aligned to your taxonomy

- Event detection with human QA for high-stakes categories (e.g., legal disputes, sanctions lists, or fake news verification)

Stage 5 — Publish: deliver to the systems people use

Publish to the destination that fits your workflow.

- Lakehouse / warehouse for analytics

- OpenSearch/Elasticsearch for discovery

- Alerts (Slack/email/webhooks) for time-sensitive monitoring

In early MVPs, Publish may be as simple as an API dump + cron. The point is consistency, not architectural purity.

Stage 6 — Monitor: catch silent drift before stakeholders do

Monitoring is the difference between “data” and “trust.”

- Extraction success rate per domain

- Empty-field anomaly detection

- Schema drift alerts

- Freshness SLAs per source (with automated alerts and backfill triggers if data is delayed)
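Empty-field anomaly detection can start as a comparison of per-domain empty rates against a baseline. In this sketch, the record shape (dicts with a `domain` key), the function names, and the `0.2` allowed increase are all assumptions for illustration.

```python
from collections import defaultdict


def empty_field_rates(records: list[dict], fields: list[str]) -> dict[str, dict[str, float]]:
    """Compute, per domain, the share of records where each field is empty."""
    totals: dict[str, int] = defaultdict(int)
    empties: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for record in records:
        domain = record["domain"]
        totals[domain] += 1
        for name in fields:
            if not record.get(name):
                empties[domain][name] += 1
    return {
        domain: {name: empties[domain][name] / totals[domain] for name in fields}
        for domain in totals
    }


def drift_alerts(current: dict, baseline: dict, max_increase: float = 0.2) -> list[tuple[str, str, float]]:
    """Return (domain, field, rate) where the empty rate rose beyond the allowed increase."""
    alerts = []
    for domain, rates in current.items():
        for name, rate in rates.items():
            previous = baseline.get(domain, {}).get(name, 0.0)
            if rate - previous > max_increase:
                alerts.append((domain, name, rate))
    return sorted(alerts)
```

Run over a short rolling window per domain, a sudden jump in the empty `body_text` rate is often the first visible sign of a layout change.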

Quick Self-Assessment: Do you need an enterprise pipeline?

- Refresh Rate: Do you need data every 5-10 minutes (vs. once a day)?

- Volume: Are you monitoring 50+ different publishers (vs. just 5)?

- Access: Is more than 30% of your source content behind paywalls or logins?

- Risk: Would missing a critical news update for 24 hours cost you money?

If you answered YES to 2 or more, a simple scraper will likely fail. You need a governed pipeline.

“Recently, our monitoring detected a layout change on a major financial news site within 5-10 minutes —flagging it before a single empty record could pollute the client’s dashboard. That is the difference between ‘scraping’ and ‘data engineering’.”

— Oleg Boyko, COO, GroupBWT

What production looks like: recent GroupBWT examples

These are simplified examples of how we design and operate pipelines under real constraints (budget, ToS ambiguity, changing layouts).

Case: Automated local news collection for a legal firm

In our automated local news collection for a legal firm case, the hardest part was maintaining freshness without amplifying duplication noise across local syndication networks.

Case: Structuring information about mortgage rates by extracting data

In our project structuring information about mortgage rates by extracting data, the main pain point was normalising inconsistent historical archives without silently dropping key fields.

Three patterns we see across NDA projects

- Freshness breaks first: rate limits and JS rendering force scheduling and backoff strategy.

- Dedupe is never “done”: new syndication paths appear after go-live.

- Monitoring pays for itself: drift alerts prevent executives from acting on incomplete dashboards.

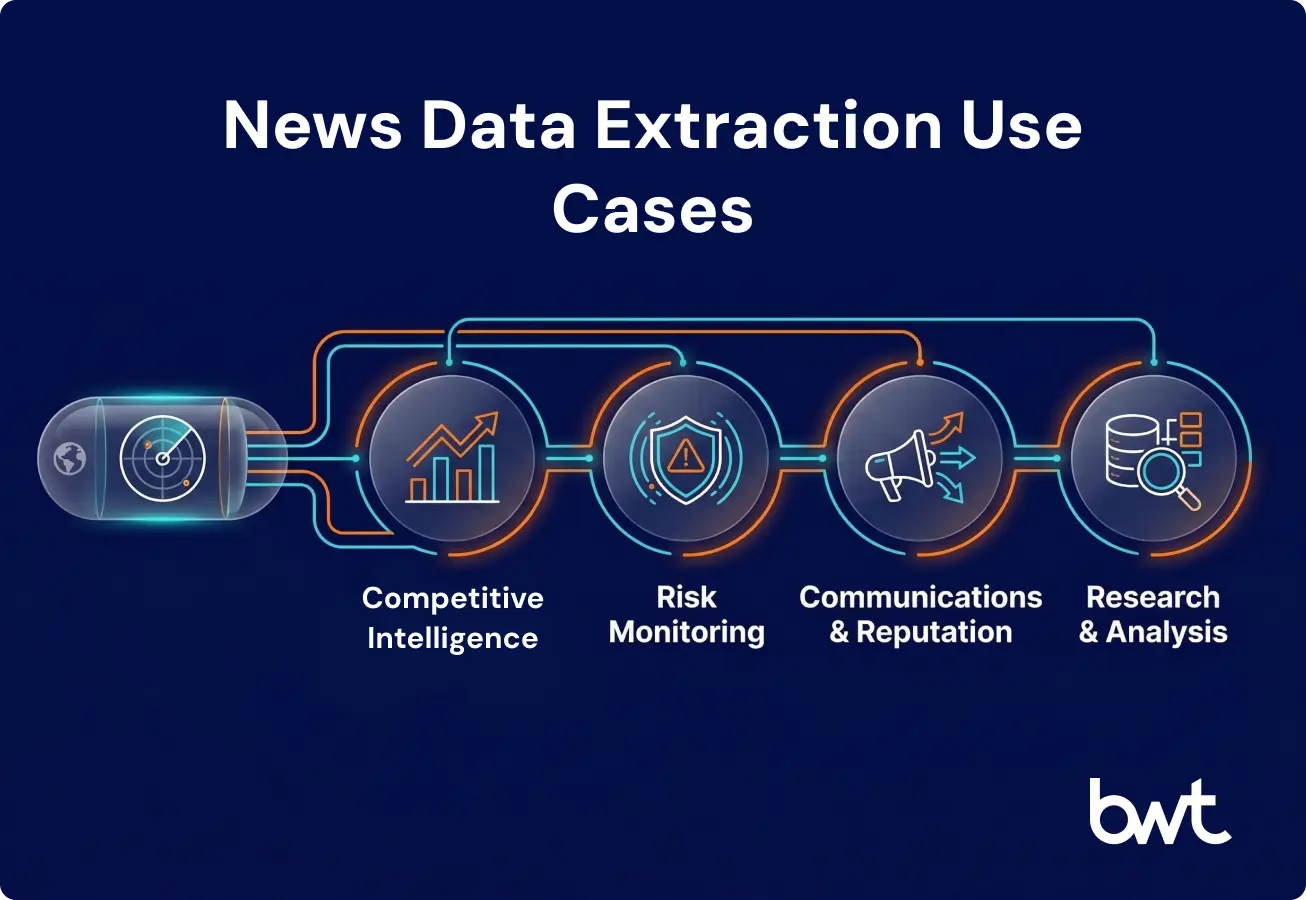

Business use cases and benefits (what to expect, what not to overtrust)

News-driven workflows improve when you measure coverage, freshness, and precision—not when you collect “more pages.”

Common use cases:

- Market and competitive intelligence: launches, partnerships, pricing narratives

- Risk monitoring: sanctions, litigation, supply chain issues

- Comms and reputation: brand mentions and emerging crises

- Research teams: thematic datasets for rates, commodities, regulation

Benefits you can measure:

- Faster decision loops via near-real-time visibility

- Reduced manual research and copy-paste workflows

- Better multilingual coverage with consistent QA gates

- Higher-quality downstream modelling because inputs are stable

Signals to treat carefully:

- Sentiment is noisy in news and needs calibration per domain.

- Novelty depends on window size, corpus choice, and dedupe quality.

- Event detection has a high false-positive rate without a human review lane.

Compliance is a production dependency, not a checkbox

“Do legal first” is ideal, but many teams start with an MVP and involve counsel after value is proven. Design your pipeline so compliance can be tightened over time without rebuilding everything.

Practical Compliance Checklist:

- Data Minimization: We store only headlines, snippets, and entities (no full text storage unless licensed).

- Access Control: We respect robots.txt and implement strict rate limits to avoid server load.

- Audit Trails: We maintain a ToS review log for every source to track changing permissions.

- Redistribution: We integrate licensed feeds or official APIs when data needs to be shared externally.

If your goal is to republish scraped news content, be aware that large-scale scraping and rehosting without added value can also collide with search engine spam policies.

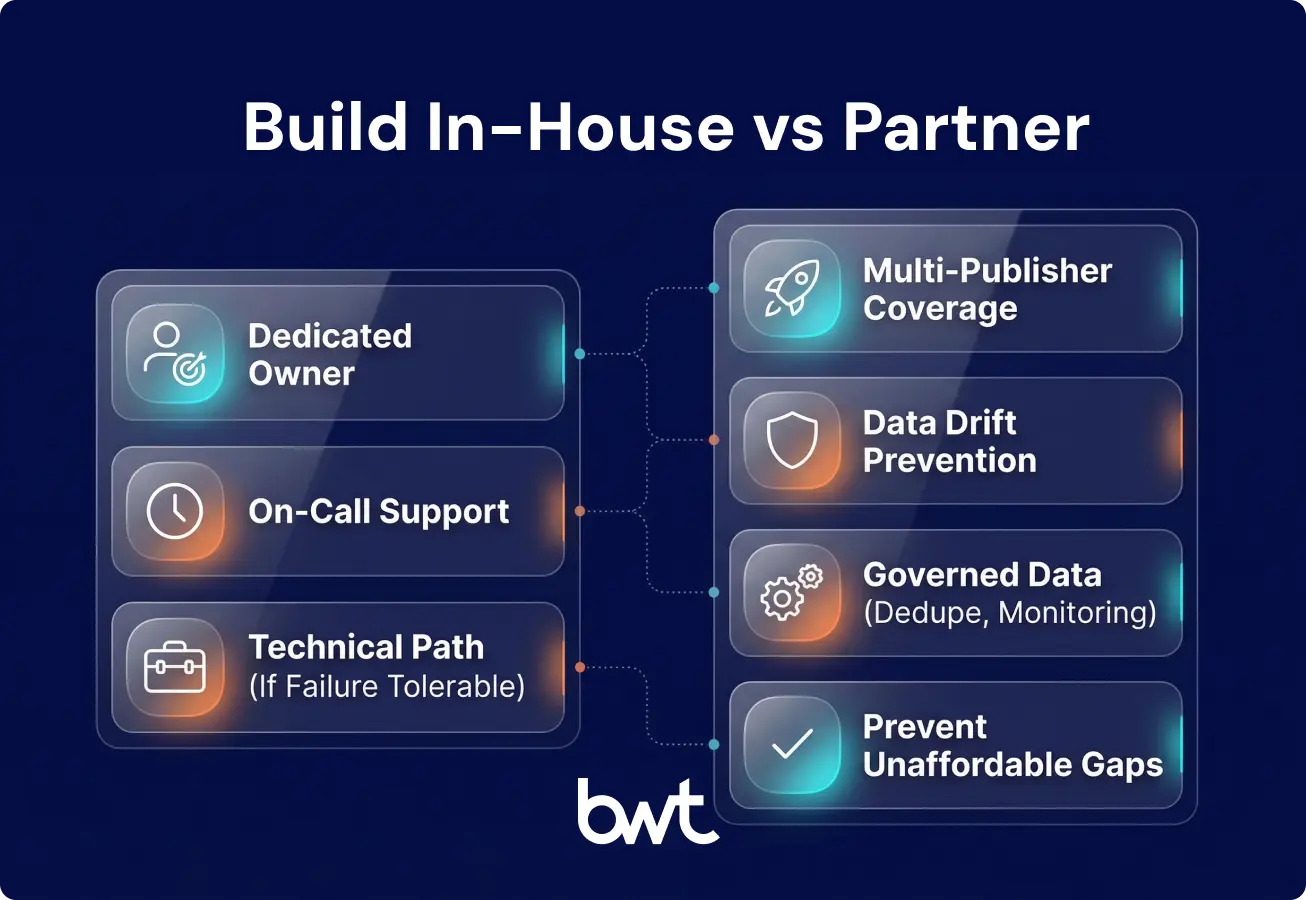

Build vs buy: decide based on the failure you can’t tolerate

If your internal debate is “build or outsource,” stop talking about scrapers and start talking about silent gaps.

A quick decision matrix:

| Your reality | Build in-house | Partner with GroupBWT |
| --- | --- | --- |
| You have an owner + on-call rotation | Yes | Maybe |
| You need coverage across many publishers fast | Hard | Yes |
| You can’t afford drift-induced data loss | Risky | Yes |
| You need governance, dedupe, and monitoring | Time-consuming | Yes |

If you’re evaluating vendors, start with our breakdown of the best data extraction company for web scraping criteria and the questions procurement should ask.

The TFNP launch checklist (from first call to production in 4–6 weeks)

- Define your minimum schema (fields + examples).

- Pick 10 priority sources and document licensing/ToS stance.

- Implement replayable capture (raw snapshots + metadata).

- Build deterministic extraction plus a fallback parser path.

- Add dedupe rules (canonical URL + similarity).

- Add enrichment aligned to the business workflow (NER + topics).

- Publish to a consumer system (BI/search/alerts).

- Add monitoring (empty fields, drift alerts, freshness SLA).

- Run a monthly source health review (web drift is continuous).

If you need a partner to operate the full pipeline, start with content aggregation, web scraping services, or our data extraction service.

FAQ

What is news article data extraction?

It’s the process of collecting news articles and converting them into structured fields (URL, date, source, entities, topic) that can be stored, searched, and monitored.

How do you keep news data extraction reliable on dynamic websites?

Start with replayable capture, add rendering when JavaScript hides content, and implement drift monitoring so you detect missing fields before stakeholders do.

Which tools fit enterprise-scale data extraction of news articles?

Most enterprise stacks combine a crawler (Scrapy), a renderer (Playwright), an extraction layer (selectors + boilerplate removal), orchestration (Airflow/Dagster), and storage/search (lakehouse + OpenSearch/Elasticsearch).

How do you handle compliance in news article data extraction?

Begin with your usage model (internal analytics vs redistribution), minimise stored personal data, document ToS decisions, and use licensed feeds where required.

Which business teams benefit most from news data extraction?

Market intelligence, risk/compliance, communications, and research teams benefit most because structured coverage, dedupe, and monitoring convert news volume into measurable signals.