Client Story

A top-3 telecom operator (part of a major international group, €1B+ in annual revenue) runs an automated pricing engine that adjusts mobile phone prices in near real time based on what competitors charge. When their previous data partner shifted focus away from scraping, GroupBWT was invited to assess the challenge and propose an architecture.

| Industry: | Telecommunications |
|---|---|
| Cooperation: | 2025 |
| Location: | Northern Europe, EU |


"We base our pricing on the market's current price. It's fetched every 30 minutes, 24/7 — so it's quite heavy in that sense." — Product Manager

"We are looking for a partner for whom this is the main business." — Product Manager

Enterprise Price Monitoring: SLA Risk, Data Conflicts, and Availability Logic

This wasn’t a build from scratch. The system was in production. The operator needed someone who could take over a live pipeline without breaking it — and match SLA conditions beyond typical scraping agreements.

The scope: ~1,000 SKUs across six domestic competitors, refreshed every 30 minutes, delivered to the client’s queue every 10 minutes. A 99.9% uptime target. One month from RFP to decision, over the holiday season.
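To put the uptime target in perspective, here is a rough back-of-the-envelope calculation. It assumes a 30-day billing month and that downtime is measured in wall-clock minutes; the source does not state how the SLA was actually measured, and the function name is illustrative.

```python
def downtime_budget_minutes(uptime_target: float, days: int = 30) -> float:
    """Minutes of allowed downtime in a billing month of `days` days (assumed)."""
    total_minutes = days * 24 * 60
    return total_minutes * (1.0 - uptime_target)


# Figures from the scope: 99.9% uptime, one collection cycle every 30 minutes.
budget = downtime_budget_minutes(0.999)
cycles_per_day = (24 * 60) // 30

print(f"Allowed downtime per month: {budget:.1f} min")
print(f"Collection cycles per day:  {cycles_per_day}")
```

Under these assumptions the budget is about 43.2 minutes per month, which is less than two 30-minute collection cycles.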

During discovery, the client described “oscillation events” — price or availability jumping between collection cycles due to scraping artifacts (fields pulled from different pages returning conflicting values). For automated pricing, this triggered false alerts and incorrect price matches. The contractual layer made it sharper: oscillation time counted as SLA downtime, reducing the monthly fee. Any vendor had to answer: if a competitor’s site returns contradictory data, who absorbs that risk?
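One way to make an oscillation event measurable is to flag a value that flips away and back within three consecutive cycles (A, then B, then A). This is only a sketch of that idea; the source does not describe how oscillation was detected or counted, and `count_oscillations` is a hypothetical helper.

```python
from typing import Hashable, Sequence


def count_oscillations(values: Sequence[Hashable]) -> int:
    """Count A -> B -> A flips across three consecutive collection cycles.

    `values` holds one observation per cycle (a price or an availability
    flag) for a single SKU at a single competitor.
    """
    flips = 0
    for prev, cur, nxt in zip(values, values[1:], values[2:]):
        if prev == nxt and cur != prev:
            flips += 1
    return flips


# A one-cycle blip that reverts is counted; a lasting price change is not.
blip = [499, 549, 499, 499]
real_change = [499, 549, 549, 549]
print(count_oscillations(blip), count_oscillations(real_change))
```

A lasting change never returns to the earlier value, so it is not flagged. A real pipeline would likely also check which page each field came from before deciding which value to trust.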

Availability interpretation added complexity. “Available” meant a specific variant (model, color, storage) could ship right now — each competitor expressed it differently. Our proposed approach: two-layer normalization. Layer one preserves raw text as-is. Layer two categorizes and converts to a simple yes/no signal — based on rules agreed with the client, with full traceability to the source.
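The two-layer idea can be sketched as follows. The rule table, category names, and the `None` result for unrecognized text are all assumptions made for illustration; the real rules were agreed with the client and are not given in the source.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical rules: lowercase substring -> category.
# Negative phrases come first so "not in stock" is not read as "in stock".
RULES = [
    ("not in stock", "OUT_OF_STOCK"),
    ("out of stock", "OUT_OF_STOCK"),
    ("sold out", "OUT_OF_STOCK"),
    ("pre-order", "PREORDER"),
    ("in stock", "IN_STOCK"),
]
SHIPPABLE_NOW = {"IN_STOCK"}  # categories that count as "available"


@dataclass(frozen=True)
class Availability:
    raw_text: str              # layer one: source text, untouched
    category: str              # layer two: rule-based category
    available: Optional[bool]  # yes/no signal; None if no rule matched
    source_url: str            # traceability back to the source page


def normalize(raw_text: str, source_url: str) -> Availability:
    lowered = raw_text.strip().lower()
    for needle, category in RULES:
        if needle in lowered:
            return Availability(raw_text, category, category in SHIPPABLE_NOW, source_url)
    return Availability(raw_text, "UNKNOWN", None, source_url)


print(normalize("In stock, ships today", "https://example.com/p/1"))
```

Keeping the raw text next to the derived signal means a disputed yes/no value can be traced back to the exact wording on the competitor's page.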

Competitor Price Scraping: Assessment, Architecture, and Operational Model

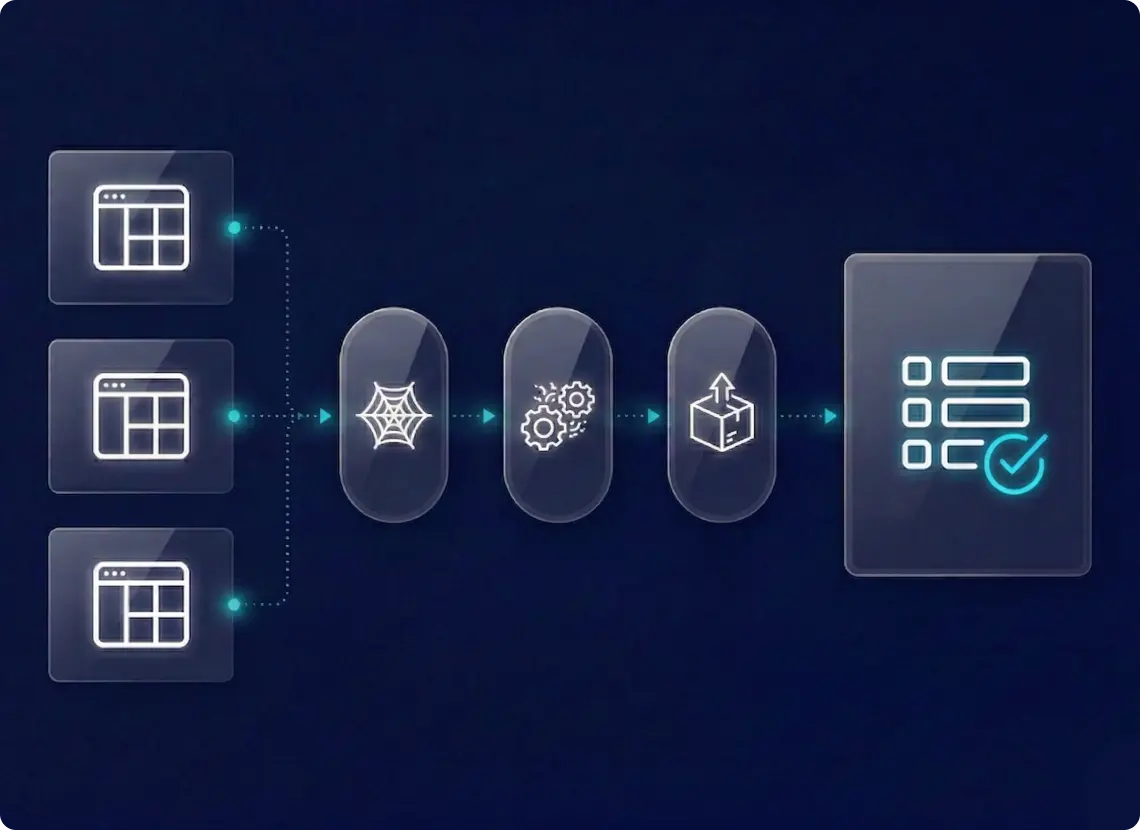

Source Assessment. All six sites were evaluated within two weeks. All expose EAN codes — deterministic matching, no fuzzy logic. Fallback pipeline scoped: extract brand/model/storage/color, generate candidates, score by title similarity and attribute matches, classify as MATCHED or NEEDS_REVIEW.
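A minimal sketch of the scoring and classification steps of such a fallback, assuming brand, model, storage, and color have already been extracted into dictionaries. The equal weighting, the 0.85 threshold, and the use of `difflib` for title similarity are illustrative choices, not the scoped design.

```python
from difflib import SequenceMatcher

ATTRS = ("brand", "model", "storage", "color")
MATCH_THRESHOLD = 0.85  # hypothetical cut-off, to be tuned on real data


def score(target: dict, candidate: dict) -> float:
    """Blend title similarity with the fraction of matching attributes."""
    title_sim = SequenceMatcher(
        None, target["title"].lower(), candidate["title"].lower()
    ).ratio()
    attr_hits = sum(
        1 for a in ATTRS if target.get(a) and target.get(a) == candidate.get(a)
    )
    return 0.5 * title_sim + 0.5 * attr_hits / len(ATTRS)


def classify(target: dict, candidates: list):
    """Return (status, best_candidate); status is MATCHED or NEEDS_REVIEW."""
    if not candidates:
        return "NEEDS_REVIEW", None
    best = max(candidates, key=lambda c: score(target, c))
    status = "MATCHED" if score(target, best) >= MATCH_THRESHOLD else "NEEDS_REVIEW"
    return status, best


target = {"title": "Apple iPhone 15 128GB Black", "brand": "Apple",
          "model": "iPhone 15", "storage": "128GB", "color": "Black"}
other = {"title": "Apple iPhone 15 256GB Blue", "brand": "Apple",
         "model": "iPhone 15", "storage": "256GB", "color": "Blue"}
print(classify(target, [other])[0])
```

In this sketch a candidate that differs in storage and color cannot reach the threshold on title similarity alone, so it goes to review instead of being matched automatically.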

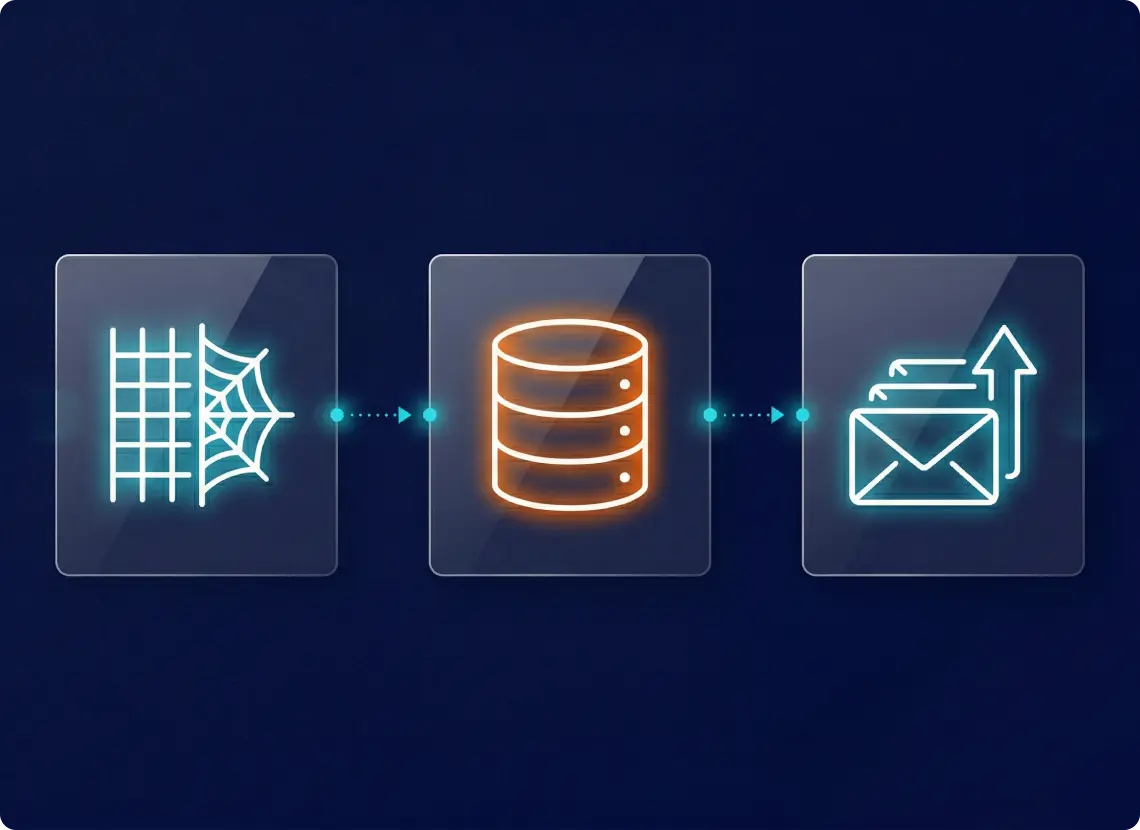

Proposed Architecture. Collection cycle every 30 minutes. Per-competitor spiders query by EAN, cache URLs, extract price, availability, and metadata. Data normalized in PostgreSQL, batch-exported to AWS SQS every 10 minutes. Monitoring via Metabase with proxy cost guardrails: dynamic retry limits and automatic cost-saving mode when unusual blocking is detected.
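The proxy cost guardrail can be pictured as a small state object that tracks the share of blocked requests and lowers the retry limit when blocking looks unusual. The class name, thresholds, and retry counts below are hypothetical; the source names the mechanism but gives no parameters.

```python
from dataclasses import dataclass


@dataclass
class RetryGuardrail:
    normal_retries: int = 3             # assumed retry limit in normal mode
    saving_retries: int = 1             # assumed retry limit in cost-saving mode
    block_rate_threshold: float = 0.30  # assumed share of blocks that is "unusual"
    min_samples: int = 20               # do not judge on too few requests
    requests: int = 0
    blocked: int = 0

    def record(self, was_blocked: bool) -> None:
        """Register the outcome of one proxied request."""
        self.requests += 1
        if was_blocked:
            self.blocked += 1

    @property
    def cost_saving_mode(self) -> bool:
        if self.requests < self.min_samples:
            return False
        return self.blocked / self.requests > self.block_rate_threshold

    def max_retries(self) -> int:
        """Dynamic retry limit: fewer paid retries while blocking is unusual."""
        return self.saving_retries if self.cost_saving_mode else self.normal_retries


guard = RetryGuardrail()
for i in range(20):
    guard.record(was_blocked=(i % 2 == 0))  # simulate a 50% block rate
print(guard.cost_saving_mode, guard.max_retries())
```

A production version would probably use a sliding time window per competitor rather than lifetime counters, so the mode can switch back once blocking subsides.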

Stack: Python · Scrapy · AWS EKS/SQS/RDS · PostgreSQL · RabbitMQ · Temporal · Argo CD · Metabase · Stormproxies + Brightdata.

Scraping as a Service. The client didn’t want incident-based billing. The discussion shifted from scraper development costs to a responsibility model: how much does predictability cost, and which scenarios are in the base package? In enterprise scraping, this is often the axis that decides the deal.

Migration Without Data Gap. The client planned to run the old and new providers in parallel until it was confident in the new data quality, starting with data from even a single competitor before full integration. The current provider sent full data snapshots each cycle, so the new system had to match that format for a clean comparison.
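Because both providers would deliver full snapshots, a per-cycle comparison during parallel operation could be as simple as the sketch below. The snapshot shape (a dict keyed by competitor and EAN) and the report fields are assumptions; the actual snapshot format is not described in the source.

```python
def compare_snapshots(old: dict, new: dict) -> dict:
    """Compare two full snapshots from the same cycle.

    Both are assumed to map (competitor, ean) -> (price, available).
    """
    old_keys, new_keys = set(old), set(new)
    shared = old_keys & new_keys
    mismatched = sorted(k for k in shared if old[k] != new[k])
    return {
        "missing_in_new": sorted(old_keys - new_keys),
        "extra_in_new": sorted(new_keys - old_keys),
        "mismatched": mismatched,
        "agreement": (len(shared) - len(mismatched)) / len(shared) if shared else 0.0,
    }


old_snapshot = {
    ("shop-a", "0000000000001"): (499.0, True),
    ("shop-a", "0000000000002"): (899.0, False),
    ("shop-a", "0000000000003"): (299.0, True),
}
new_snapshot = {
    ("shop-a", "0000000000001"): (499.0, True),
    ("shop-a", "0000000000002"): (879.0, False),
}
report = compare_snapshots(old_snapshot, new_snapshot)
print(report["agreement"], report["missing_in_new"])
```

Starting with one competitor keeps this report small enough to review by hand before more sources are added.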

All six competitor sites expose EAN codes — that eliminated the fuzzy-matching risk. But we also scoped a fallback approach because a competitor can remove EAN from their storefront overnight.

Price Monitoring: What We Delivered

- 2-week source assessment — six sites evaluated for data access, anti-bot risk, EAN, rate-limiting, and proxy requirements

- End-to-end architecture — scraping → normalization → queue delivery with monitoring and SLA tracking

- Tiered support model — options up to 24/7 with clear subscription-vs-hourly boundaries

- Fallback matching — EAN-primary with scoped alternative

- Migration strategy — parallel validation, phased delivery

- Risk analysis — oscillation handling, proxy cost guardrails, clear boundaries between vendor responsibility and source-site issues

Need Competitor Prices Tracked in Near-Real-Time?

Whether you're a telecom operator, retailer, or any business where pricing depends on what competitors charge right now, we design and build the data infrastructure behind it.
