Real estate data aggregation is only “worth it” when it creates a single, auditable property truth your team can act on. If your pipeline can’t explain why a listing disappeared, why the price changed, or why two “identical” homes don’t match, your reporting may look precise while still being unreliable.

This guide is written for product, analytics, and operations leaders at brokerages, PropTech platforms, and investment teams who need decision‑grade property intelligence. Engineers will also find the reference stack and tool suggestions useful, but the article is written for a broad business audience.

Our contrarian take, based on delivery work in the US, UK, and Europe: stop buying “coverage” as the goal. 80% coverage with bulletproof identity beats 95% coverage with silent duplicates. In one audit of a “nationwide MLS access” dataset, roughly 30% of records behaved like duplicate relists, enough to poison comps, days‑on‑market, and “new inventory” metrics.

If you want the simplest definition before going deeper, start with “What does aggregate data mean?”

Who this guide is for (and what problem it solves)

If any of these are true in your team today, the lack of proper aggregation is likely already costing you money:

- Analysts reconcile duplicates and statuses in spreadsheets before every report.

- Relists after reductions show up as “new inventory.”

- A portal field change breaks your feed, and you notice it days later.

- Stakeholders debate “which source is right” instead of making decisions.

Common “band-aids” we see before teams fix this properly:

- “Unique by address” rules that collapse units or miss abbreviations.

- Manual relist checks during “busy weeks” become the norm.

- One-off scripts with no monitoring (so breakages go unnoticed).

Glossary

- Real estate data aggregation is the process of collecting raw property data from many sources, standardising and de‑duplicating it, and delivering it as one consistent dataset.

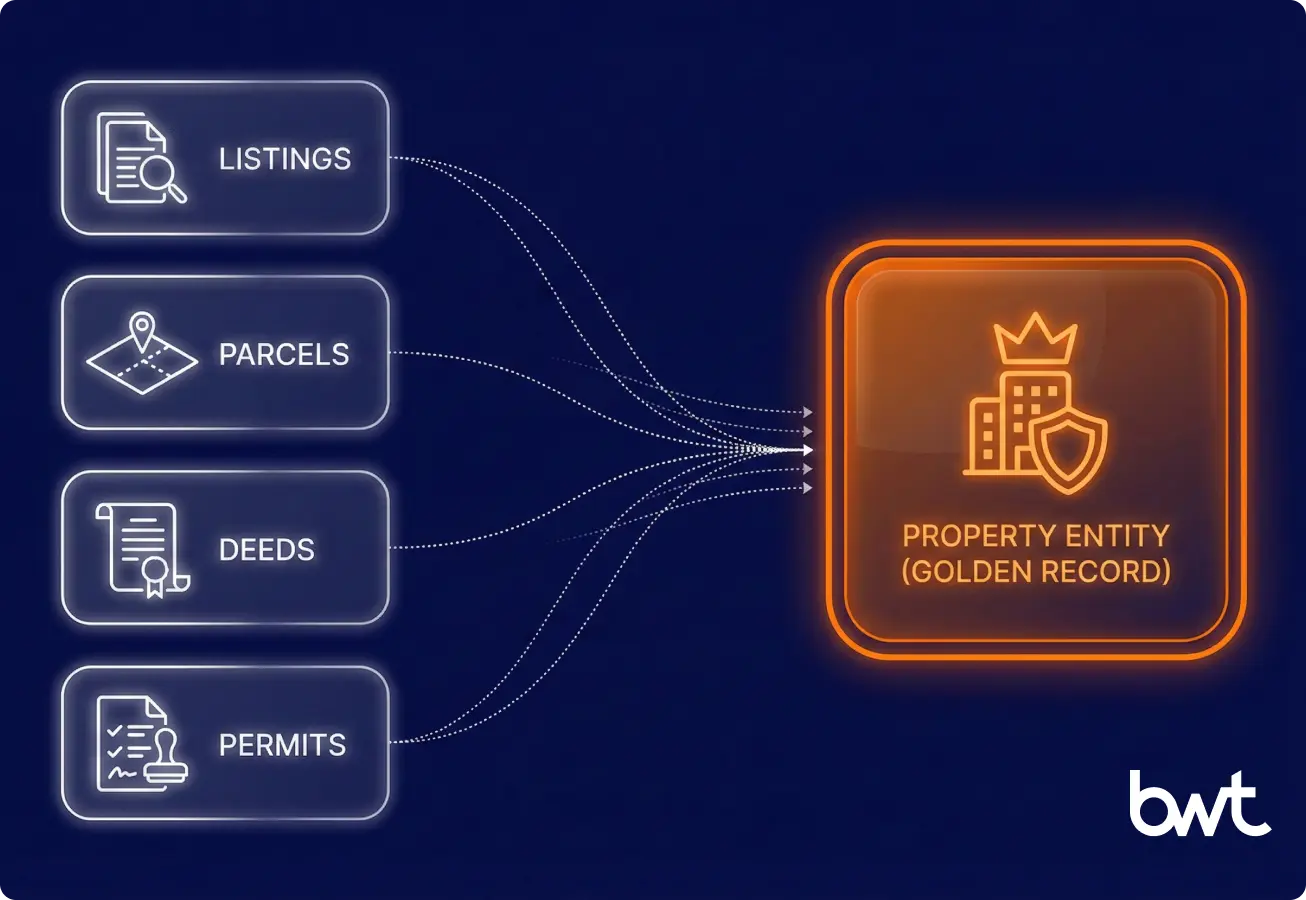

- Entity resolution is matching different records that refer to the same real‑world property (even when IDs, addresses, or names differ).

- Golden record is the current canonical version of a property profile, where each field value is selected via a defined resolution policy (typically: source priority + validation rules + recency windows), supported by confidence scoring and full lineage to the underlying sources. In other words, “best” means “highest-confidence value under your policy,” not simply “most recent.”

- Platform drift is when listing portals, MLS feeds, or public record formats change and break extraction/mapping logic over time.

- Similarity-based matching is comparing attributes (address strings, names, phone numbers, etc.) using distance/similarity metrics (e.g., Levenshtein, Jaccard, cosine similarity) to produce a similarity score.

- Probabilistic matching is estimating the probability that two records refer to the same entity using a statistical model (e.g., Fellegi–Sunter-style approaches). Similarity scores are often used as model features, but probabilistic matching is broader than “string similarity.”

- Property Identity Graph is a system that links listings, parcels, deeds, permits, and agencies to one property entity using rules + similarity signals + probabilistic matching + QA checks (automated data-quality tests).
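To make the difference between similarity signals and probabilistic matching concrete, here is a minimal Fellegi–Sunter-style sketch in Python. The m/u probabilities, the 0.6 street-similarity threshold and the two decision thresholds are hypothetical values for illustration; in practice they are estimated from labelled pairs (or with EM) and tuned on your own data. The normalised Levenshtein similarity is only an input feature here: each field comparison becomes a log-likelihood weight, and the weights are summed.

```python
import math

# Hypothetical (m, u) probabilities per field; estimate them from labelled pairs or via EM in practice.
# m = P(field agrees | same property), u = P(field agrees | different properties)
FIELD_PARAMS = {
    "house_number": (0.95, 0.05),
    "street": (0.90, 0.02),
    "unit": (0.90, 0.10),
}
STREET_SIMILARITY_THRESHOLD = 0.6  # assumption: tune on your own data
UPPER, LOWER = 8.0, 0.0  # hypothetical decision thresholds, in log2 units


def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = cur
    return prev[-1]


def similarity(a: str, b: str) -> float:
    """Normalised Levenshtein similarity in [0, 1]."""
    a, b = a.strip().lower(), b.strip().lower()
    longest = max(len(a), len(b)) or 1
    return 1 - levenshtein(a, b) / longest


def field_agrees(field: str, a: str, b: str) -> bool:
    # The similarity score is a signal; agreement is a thresholded feature fed to the model.
    if field == "street":
        return similarity(a, b) >= STREET_SIMILARITY_THRESHOLD
    return a.strip().lower() == b.strip().lower()


def match_weight(rec_a: dict, rec_b: dict) -> float:
    """Sum of Fellegi-Sunter log2 likelihood-ratio weights over the compared fields."""
    total = 0.0
    for field, (m, u) in FIELD_PARAMS.items():
        if field_agrees(field, rec_a[field], rec_b[field]):
            total += math.log2(m / u)
        else:
            total += math.log2((1 - m) / (1 - u))
    return total


def classify(weight: float) -> str:
    if weight >= UPPER:
        return "match"
    if weight <= LOWER:
        return "non-match"
    return "review"  # the clerical-review band between the two thresholds


a = {"house_number": "12", "street": "King St", "unit": "3"}
b = {"house_number": "12", "street": "King Street", "unit": "3"}
c = {"house_number": "40", "street": "King St", "unit": "7"}
print(classify(match_weight(a, b)))  # match
print(classify(match_weight(a, c)))  # non-match
```

Pairs that land between the two thresholds go to a review queue, which is where the QA checks in the identity-graph definition come in.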

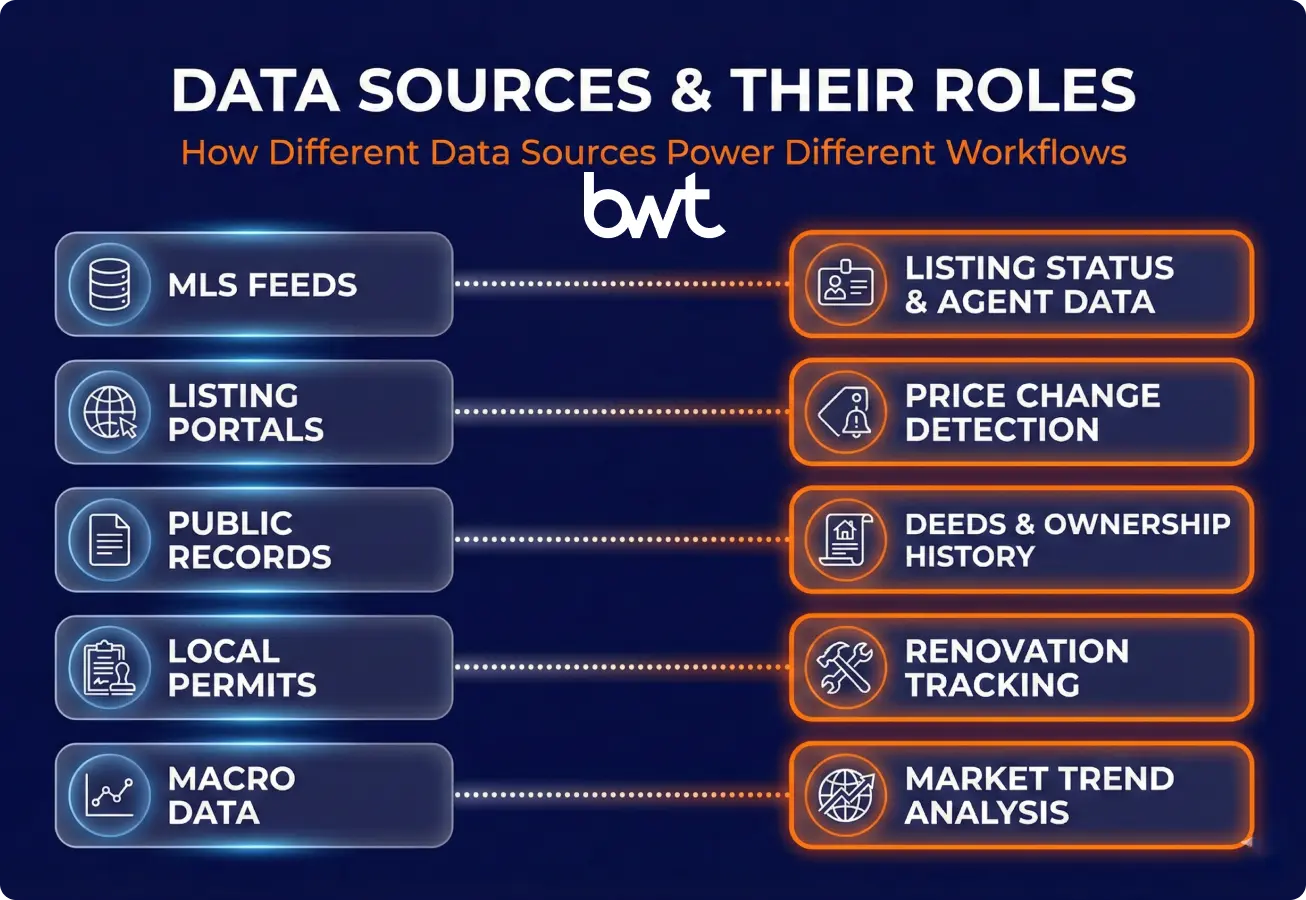

Key data sources in real estate (and what they’re good for)

A reliable pipeline starts with source roles, not source volume. The fastest way to scope real estate data is to be explicit about what each source can prove—and what it can only suggest.


Real Estate Data Source Comparison

| Source | Best For | Main Pitfalls | Typical Refresh |
|---|---|---|---|
| MLS Feeds | Verified listings, agent metadata | Licensing limits, inconsistent field naming | Daily |
| Portals | Market reach, fast price updates | Unstable IDs, duplicate “new” listings | Intra-day |
| Public Records | Deeds, tax, boundaries | High latency (weeks), address mismatches | Weekly+ |
| Permits | Renovation & zoning signals | Poor coverage, hard to join with listings | Monthly |
| IoT/Building | Energy, occupancy (Commercial) | Privacy limits, “operational” vs legal truth | Real-time |
| Macro Data | Rates, demographics, trends | Coarse geography, reporting lags | Weekly+ |

Portals tell you what the market is doing right now, MLS tells you what a licensed feed says happened, and public records tell you what’s legally recorded—but usually later. Treat them as complementary, not interchangeable.

Structured vs unstructured real estate data

Structured data (MLS fields, permit tables) is easier to map, but it still breaks under drift and regional differences.

Unstructured data (descriptions, PDFs, images) is where differentiation lives—renovation details, restrictions, “soft” quality signals—but it’s harder to validate and standardise.

A practical rule that saves teams from bad downstream decisions: treat unstructured fields as evidence, not as canonical truth, unless you can back them with extraction confidence and QA.

In practice, that means:

- store the raw text/PDF/image reference,

- store the extracted value plus confidence,

- keep field-level lineage (what source + what extractor + what date),

- never overwrite a canonical field without leaving an audit trail.
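A minimal sketch of these four rules as a Python data model. The class names, the `raw_ref` storage path and the extractor ID are hypothetical; the point is that every extracted value travels with its confidence and lineage, and a canonical field can only change through a method that appends to an audit trail first.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass(frozen=True)
class Evidence:
    """One extracted value plus where it came from (field-level lineage)."""
    value: Any
    confidence: float      # extractor confidence in [0, 1]
    source: str            # hypothetical source name, e.g. "portal_a"
    extractor: str         # hypothetical extractor ID, e.g. "desc_nlp_v3"
    raw_ref: str           # pointer to the stored raw text/PDF/image
    observed_at: datetime


@dataclass
class CanonicalField:
    """A canonical field that is never overwritten silently."""
    name: str
    current: Optional[Evidence] = None
    history: list = field(default_factory=list)  # audit trail: (changed_at, old, new, reason)

    def update(self, new: Evidence, reason: str) -> None:
        # Record the change in the audit trail before replacing the canonical value.
        self.history.append((datetime.now(timezone.utc), self.current, new, reason))
        self.current = new


roof = CanonicalField("roof_renovated_year")
roof.update(
    Evidence(2019, 0.82, "portal_a", "desc_nlp_v3", "s3://raw/portal_a/123.html",
             datetime(2024, 5, 1, tzinfo=timezone.utc)),
    reason="initial extraction from listing description",
)
print(roof.current.value, len(roof.history))  # 2019 1
```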

Why data aggregation for real estate is now operational

Pricing, inventory, and demand signals move across platforms faster than human checks can keep up. The cost of stale data shows up as missed deals, wrong valuations, and operational waste (people chasing listings that changed yesterday).

Market transparency

If you can’t normalise status meanings and tie events to one property identity, you don’t get “market insight”—you get noisy counts.

Example (same market):

- In the US, Portal A may label a listing “contingent” while Portal B shows “pending,” and the MLS encodes the same state with a different status code (for example, “Active Under Contract”).

- In the UK, different portals may use “under offer” vs “sold subject to contract (SSTC)” for a similar stage.

If you don’t map those into one canonical status per market, you can overcount “active” inventory and misread supply in a submarket. The same happens with reductions: without relist detection, a price cut can look like a brand-new listing.
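One way to implement a canonical status per market is an explicit lookup table that fails loudly on unknown labels. The sketch below is hypothetical: the source names are placeholders, the raw labels mirror the examples above, and real status code lists differ by MLS and portal. Whether two labels (such as “under offer” and SSTC) share one canonical value is a per-market decision you should document.

```python
# Hypothetical per-market mapping: (market, source, raw label) -> canonical status.
STATUS_MAP = {
    ("US", "portal_a", "contingent"): "UNDER_CONTRACT",
    ("US", "portal_b", "pending"): "UNDER_CONTRACT",
    ("US", "mls", "active under contract"): "UNDER_CONTRACT",
    ("US", "portal_a", "for sale"): "ACTIVE",
    ("UK", "portal_c", "under offer"): "UNDER_OFFER",
    ("UK", "portal_d", "sold subject to contract"): "UNDER_OFFER",
    ("UK", "portal_c", "for sale"): "ACTIVE",
}


def canonical_status(market: str, source: str, raw: str) -> str:
    key = (market, source, raw.strip().lower())
    if key not in STATUS_MAP:
        # An unmapped label is a drift signal: alert on it instead of guessing.
        raise ValueError(f"unmapped status {raw!r} from {source} ({market})")
    return STATUS_MAP[key]


raw = [("US", "portal_a", "Contingent"), ("US", "portal_b", "Pending"),
       ("US", "mls", "Active Under Contract")]
print({canonical_status(*r) for r in raw})  # {'UNDER_CONTRACT'}
```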

Pricing accuracy

Pricing intelligence fails when reductions and relists look like “new” inventory. In our delivery work, we’ve seen similar dynamics in high‑volatility pricing environments: one global pricing engine processed 5,000+ daily promo updates with 98% accuracy only because QA and drift monitoring were engineered as first‑class features—not afterthoughts.

Portfolio performance

Risk is rarely visible in one dataset. Aggregating permits, ownership history, vacancy proxies, and listing churn helps asset managers and investors detect “soft” issues earlier (capex risk, liquidity risk, tenant churn risk).

“In aggregation, the hard part isn’t pulling data—it’s guaranteeing that today’s source change becomes a test case, not tomorrow’s outage.”

— Dmytro Naumenko, CTO, GroupBWT

Core use cases of real estate data aggregation

Aggregation becomes valuable when it lands inside real workflows—valuation, acquisitions, leasing, underwriting, and portfolio reporting.

Common use cases include:

- Property valuation and price intelligence: comps, reductions, days on market, and relist detection.

- Market and competitor analysis: supply/demand shifts, agent performance, submarket heatmaps.

- Investment and portfolio optimisation: acquisition pipeline scoring, asset benchmarking, hold/sell signals.

- Tenant behaviour and demand analytics: search trends, viewing activity proxies, churn indicators (where lawful and available).

The benefits of aggregated real estate data solutions show up when each use case has an owner and an action (alert → review → decision), not just a dashboard.

Examples (named cases):

- Content aggregation solutions for real estate agencies: replacing manual portal checks with daily feeds and dedup + change detection, so pricing decisions aren’t delayed by spreadsheet reconciliation.

- Automated listing checks for US real estate agents: automating listing-status checks and surfacing deltas (new/reduced/removed) so agents act on exceptions instead of re-checking everything.
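A minimal sketch of the delta step behind exception-based listing checks. It assumes both daily snapshots are already keyed by a resolved property ID (not a portal listing ID) mapped to asking price; keying by property is what stops a relist with a fresh portal ID from showing up as “new”. The IDs and prices are made up.

```python
def listing_deltas(prev: dict, curr: dict) -> dict:
    """Diff two snapshots of {property_id: asking_price} into exception lists."""
    deltas = {"new": [], "reduced": [], "increased": [], "removed": []}
    for pid, price in curr.items():
        if pid not in prev:
            deltas["new"].append(pid)
        elif price < prev[pid]:
            deltas["reduced"].append(pid)
        elif price > prev[pid]:
            deltas["increased"].append(pid)
    deltas["removed"] = [pid for pid in prev if pid not in curr]
    return deltas


yesterday = {"prop-1": 450_000, "prop-2": 300_000, "prop-3": 520_000}
today = {"prop-1": 445_000, "prop-3": 520_000, "prop-4": 610_000}
print(listing_deltas(yesterday, today))
# {'new': ['prop-4'], 'reduced': ['prop-1'], 'increased': [], 'removed': ['prop-2']}
```

Agents then review only the four lists, not the full inventory.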

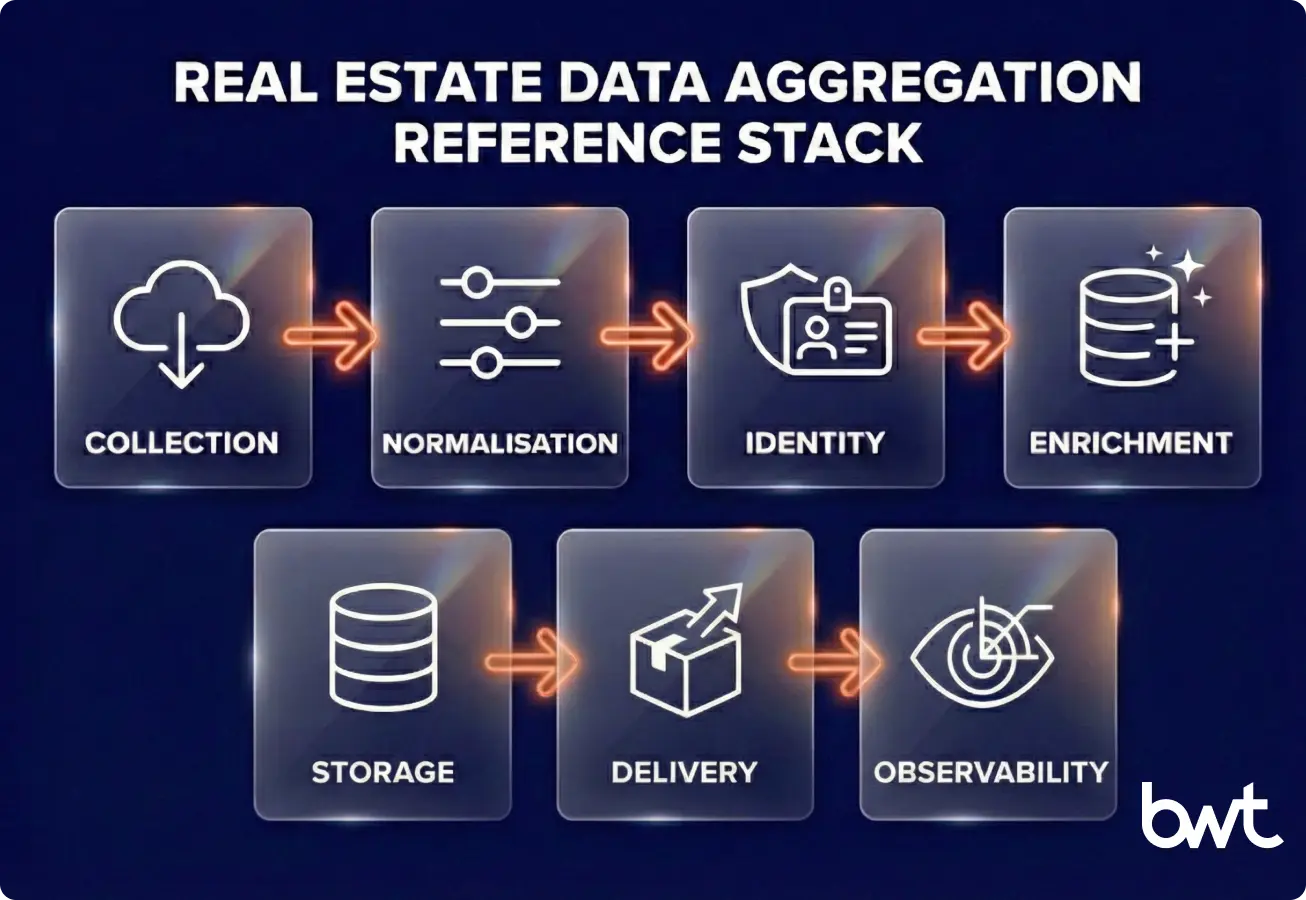

Real estate data aggregation architecture (reference stack + tools)

Judge an aggregation architecture by resilience: can it survive source drift, regional variation, and duplicates without silent data corruption (wrong or incomplete results without alerts)? Below is a reference stack we implement in data‑intensive systems.

Reference stack

| Layer | Key Task | Main Failure | Best Fix | Tooling |
|---|---|---|---|---|
| Ingestion | API/Scraping | Bot blocks / Schema shifts | Change detection / Retries | Airbyte, Fivetran |
| Normalise | Standardisation | Duplicate “ghost” records | Canonical enums / Parsing | dbt, libpostal |
| Identity | Dedup / Linking | Relists as “new” assets | Identity Graph / Scoring | Dedupe.io, Python |
| Enrich | GIS / Risk data | Lost data lineage | Source + Timestamp tagging | Geocoding APIs |
| Warehouse | ELT / Compute | Pipeline lag / Stale data | Incremental partitioning | Snowflake, BigQuery |
| Delivery | BI / API | Logic / Metric drift | Data contracts / SLAs | Looker, FastAPI |
| Monitor | QA / Alerts | Silent data corruption | Anomaly & “Missingness” checks | Great Expectations |

Data collection and ingestion layer

Start by defining what “fresh” means per signal. Prices might need near‑daily updates; ownership history can be weekly; permits could be monthly.
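Per-signal freshness can be written down as configuration and checked on every run. The SLA values below are hypothetical and simply follow the examples in the text; a signal that has never been updated is treated as stale.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness SLAs per signal, following the examples in the text.
FRESHNESS_SLA = {
    "price": timedelta(days=1),
    "status": timedelta(days=1),
    "ownership": timedelta(weeks=1),
    "permits": timedelta(days=31),
}
NEVER = datetime.min.replace(tzinfo=timezone.utc)  # sentinel for "never updated"


def stale_signals(last_updated: dict, now: datetime) -> list:
    """Signals whose last successful update is older than their SLA (or missing)."""
    return sorted(
        signal
        for signal, sla in FRESHNESS_SLA.items()
        if now - last_updated.get(signal, NEVER) > sla
    )


now = datetime(2024, 6, 10, 12, tzinfo=timezone.utc)
last_updated = {
    "price": now - timedelta(hours=30),
    "status": now - timedelta(hours=2),
    "ownership": now - timedelta(days=3),
}
print(stale_signals(last_updated, now))  # ['permits', 'price']
```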

A practical ingestion pattern is multi‑mode ingestion: licensed feeds where possible, API where offered, and extraction only where permitted and necessary. This is also where you log request metadata and “why we trust this record.”

When portals are the only coverage option, web scraping real estate data can be part of the ingestion layer—but only if you design for drift and QA from day one.

If you want a non-real-estate example of the same multi-source playbook (logging, deltas, QA), see how teams aggregate tender data.

Data cleaning, normalisation, and enrichment

Cleaning is not “remove nulls.” Cleaning is building business‑meaningful consistency: address formats, bedroom counts, area units, status enums, and timestamps.

Normalisation should be version‑controlled, with tests, because changes in mapping logic can rewrite your history.
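A small illustration of version-controlled normalisation: the normaliser carries a version string that should be stored with its outputs, and regression tests pin expected results so a mapping change cannot silently rewrite history. The abbreviation table and version string are hypothetical; a production pipeline would typically rely on a dedicated parser such as libpostal rather than a hand-written table.

```python
import re

# Hypothetical version string: bump it on every mapping change and store it with each output row.
NORMALISER_VERSION = "address-v3"

# Illustrative abbreviation table only; production systems typically use a parser such as libpostal.
STREET_SUFFIXES = {"street": "st", "st.": "st", "road": "rd", "rd.": "rd", "avenue": "ave"}


def normalise_address(raw: str) -> str:
    """Lower-case, collapse commas/whitespace, and canonicalise street suffixes."""
    tokens = re.sub(r"[,\s]+", " ", raw.lower()).strip().split(" ")
    return " ".join(STREET_SUFFIXES.get(t, t) for t in tokens)


def test_normalise_address() -> None:
    # Regression cases: if a mapping change alters any of these, review it before release,
    # because re-running the pipeline would rewrite historical records.
    assert normalise_address("12 King Street") == "12 king st"
    assert normalise_address("12  King St.,") == "12 king st"
    assert normalise_address("5 Main Road") == "5 main rd"


test_normalise_address()  # run directly here; pytest would also discover it
```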

Storage, processing, and analytics layer

Choose storage based on how you’ll use the data:

- Analytics-first: data warehouse (Snowflake/Databricks) with BI on top.

- Product-first: warehouse + serving layer + API gateway.

- ML-first: feature-store patterns (a system for storing ML features) with strict lineage (traceability of where each feature came from).

Why ELT (not ETL) is usually the default here: in real estate data aggregation you typically want to land raw, immutable extracts in the warehouse/lake first (for auditability, backfills, and “what did the source say on date X?”), then transform with version-controlled models. ETL often hides raw evidence and makes reprocessing harder when mappings or definitions evolve.
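The “land raw first” principle can be sketched as an append-only log with an as-of lookup. This in-memory class is a hypothetical stand-in for a warehouse table partitioned by extraction time; it only shows why immutable raw extracts make “what did the source say on date X?” a cheap query.

```python
from bisect import bisect_right
from datetime import datetime
from typing import Optional


class RawLanding:
    """Append-only store of raw payloads per (source, source_id), in extraction order."""

    def __init__(self) -> None:
        self._log: dict = {}

    def land(self, source: str, source_id: str, extracted_at: datetime, payload: dict) -> None:
        entries = self._log.setdefault((source, source_id), [])
        if entries and extracted_at < entries[-1][0]:
            raise ValueError("extracts must be landed in time order")
        entries.append((extracted_at, dict(payload)))  # shallow copy of the raw evidence

    def as_of(self, source: str, source_id: str, when: datetime) -> Optional[dict]:
        """What did the source say at `when`? Latest payload extracted at or before it."""
        entries = self._log.get((source, source_id), [])
        idx = bisect_right([ts for ts, _ in entries], when)
        return dict(entries[idx - 1][1]) if idx else None


store = RawLanding()
store.land("portal_a", "A-991", datetime(2024, 5, 1), {"price": 450_000, "status": "for sale"})
store.land("portal_a", "A-991", datetime(2024, 5, 20), {"price": 445_000, "status": "for sale"})
print(store.as_of("portal_a", "A-991", datetime(2024, 5, 10)))  # {'price': 450000, 'status': 'for sale'}
```

Transformations then read from this layer, so a corrected mapping can be replayed over the full history.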

BI, dashboards, and API access

Dashboards are where mistakes become decisions. Build explainability into the UI: show last update time, data confidence, and the contributing sources per property.

“If a dashboard can’t show lineage at the field level, your team will eventually stop trusting it—and then the whole system becomes overhead.”

— Alex Yudin, Head of Data Engineering, GroupBWT

Data sources and integration challenges in real estate (the problems that hit at scale)

The moment your pipeline crosses multiple regions or portals, the same property becomes a moving target.

MLS and listing platform limitations

MLS data can be structured and reliable, but it’s not universal, and licensing constraints shape what you can store and redistribute. Listing portals can expand coverage, but fields may be inconsistent, and identifiers may be unstable.

What “licensing constraints” usually means in practice (varies by MLS / vendor contract):

- Retention: Can you store raw MLS data indefinitely, or must you purge after a period / if access ends?

- Redistribution & display: Is it internal-only, or can you show it to end users/clients (and under what display rules/attribution)?

- Derivative works: Are you allowed to create comps/AVMs/alerts, and can you share outputs externally?

- ML training: Are raw records or derived features allowed for model training, or does it require separate permission?

- Access control & audits: Who can access, and what security/logging is required?

Treat “source limitation” as a product requirement, not a surprise.
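One way to treat licensing terms as product requirements is to capture them as data that code can enforce. The source names, retention period and flags below are hypothetical placeholders; the actual terms come from each MLS or vendor contract and your legal review.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class SourcePolicy:
    """Contract terms for one source, captured as data so code can enforce them."""
    retention_days: Optional[int]  # None means no contractual retention limit
    external_display: bool         # may values be shown to clients or end users?
    ml_training: bool              # may raw records or derived features train models?


# Illustrative values only; real terms come from each MLS/vendor contract.
POLICIES = {
    "mls_x": SourcePolicy(retention_days=365, external_display=False, ml_training=False),
    "public_records": SourcePolicy(retention_days=None, external_display=True, ml_training=True),
}


def allowed(source: str, purpose: str) -> bool:
    """Deny by default: unknown sources and unknown purposes are not allowed."""
    policy = POLICIES.get(source)
    if policy is None:
        return False
    if purpose == "internal_analytics":
        return True
    if purpose == "external_display":
        return policy.external_display
    if purpose == "ml_training":
        return policy.ml_training
    return False


def must_purge(source: str, extracted_on: date, today: date) -> bool:
    """True once a record is older than the source's retention window."""
    policy = POLICIES[source]  # KeyError for unregistered sources: fail loudly
    if policy.retention_days is None:
        return False
    return today - extracted_on > timedelta(days=policy.retention_days)
```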

Data fragmentation across regions and markets

Addresses, administrative boundaries, and property attributes vary by country and even by city. If you don’t model localisation (currency, measurement units, address rules, legal entities), your dataset can look consistent while being semantically wrong.

This is where commercial real estate data aggregation becomes harder: leases, suites, floor plates, and building systems introduce another layer of identity complexity.
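Localisation starts with never storing a bare number. The sketch below (hypothetical class names) keeps the unit and currency next to every value and converts area to square metres with the exact factor (1 ft = 0.3048 m, so 1 sq ft = 0.09290304 m²), using `Decimal` to avoid floating-point drift in money and area arithmetic.

```python
from dataclasses import dataclass
from decimal import Decimal

SQFT_TO_SQM = Decimal("0.09290304")  # exact, since 1 ft = 0.3048 m


@dataclass(frozen=True)
class Area:
    value: Decimal
    unit: str  # "sqft" or "sqm"


@dataclass(frozen=True)
class Money:
    amount: Decimal
    currency: str  # ISO 4217 code such as "USD", "GBP" or "EUR"


def to_sqm(area: Area) -> Decimal:
    if area.unit == "sqm":
        return area.value
    if area.unit == "sqft":
        return area.value * SQFT_TO_SQM
    raise ValueError(f"unknown area unit: {area.unit!r}")


def price_per_sqm(price: Money, area: Area) -> Money:
    """Comparable unit price; the currency stays attached to the result."""
    return Money((price.amount / to_sqm(area)).quantize(Decimal("0.01")), price.currency)


print(to_sqm(Area(Decimal("1000"), "sqft")))  # 92.90304000
print(price_per_sqm(Money(Decimal("450000"), "GBP"), Area(Decimal("100"), "sqm")))
```

Keeping currency on the result means a GBP and a USD price per square metre can never be averaged together by accident.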

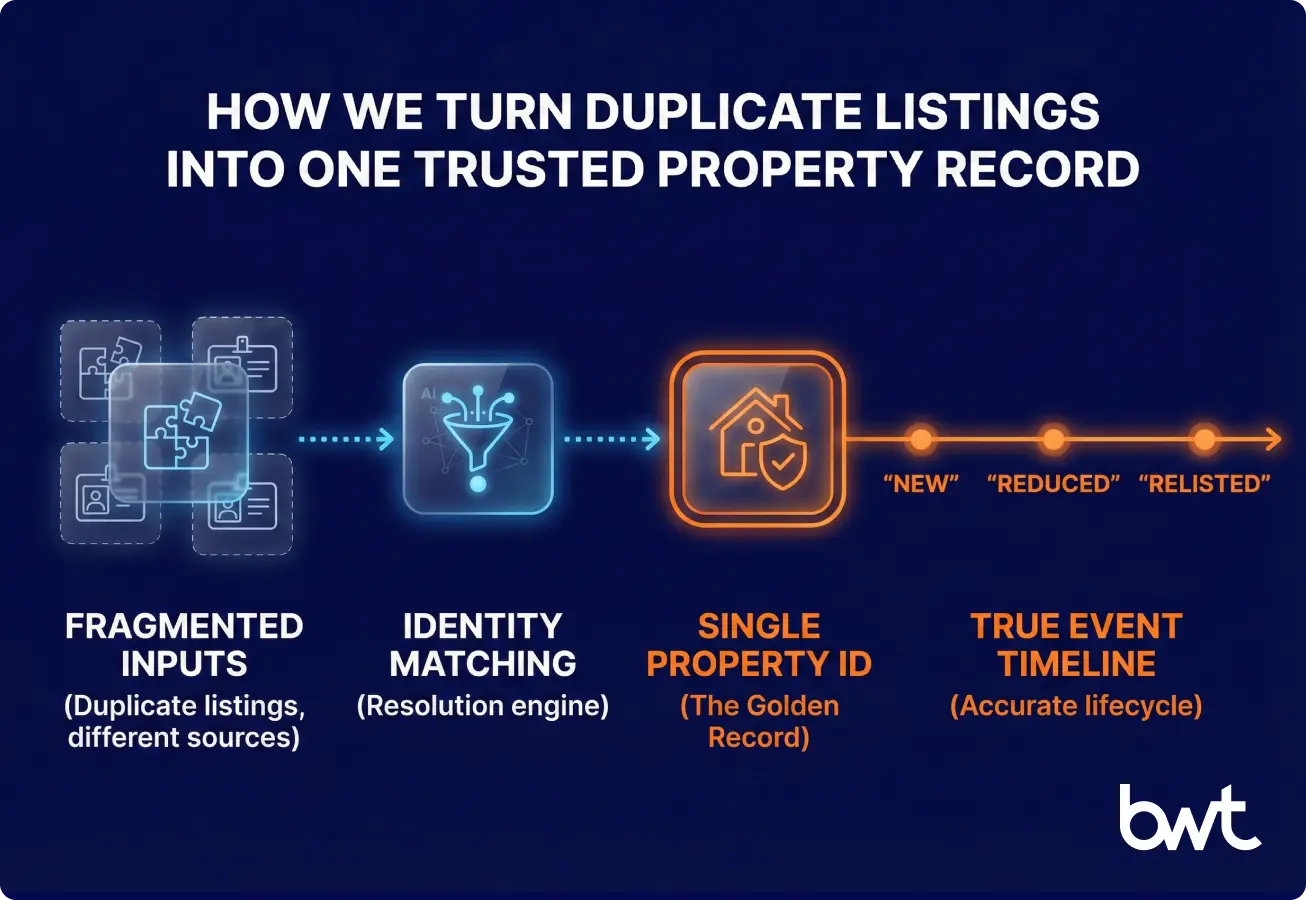

Data quality, duplicates, and inconsistent formats

Duplicates are rarely exact duplicates. They’re near‑duplicates caused by relists, agent changes, portal IDs, unit numbering, or address abbreviations.

Here’s a small example of what dedup has to resolve:

| Source | Address | Unit | Price | Listing ID | Problem |
|---|---|---|---|---|---|
| Portal A | 12 King St | 3 | 450,000 | A‑991 | Uses “St” |
| Portal B | 12 King Street | 3 | 450,000 | B‑188 | Spells out “Street” |
| Portal A | 12 King St | 3 | 445,000 | A‑1044 | New ID (relist) after reduction |

A robust approach doesn’t “pick one.” It builds a golden record with history and change events.
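A minimal sketch of “golden record with history” for the three rows above. It is hypothetical in several ways: the observation dates are invented, grouping uses an exact normalised address-plus-unit key (a real pipeline would add fuzzy scoring on top), and the price policy is simply “latest observation wins”, a simplification of the source-priority, validation and recency policy described in the glossary.

```python
import re
from collections import defaultdict
from datetime import date

# Illustrative suffix table only; a production pipeline would use a proper address parser.
SUFFIXES = {"street": "st", "road": "rd", "avenue": "ave"}


def property_key(address: str, unit: str) -> str:
    """Blocking key: normalised street address plus unit."""
    tokens = re.sub(r"[^\w\s]", "", address.lower()).split()
    return " ".join(SUFFIXES.get(t, t) for t in tokens) + f" #{unit}"


def build_golden_records(rows: list) -> dict:
    groups = defaultdict(list)
    for row in rows:
        groups[property_key(row["address"], row["unit"])].append(row)

    golden = {}
    for key, obs in groups.items():
        obs.sort(key=lambda r: r["seen"])
        events, last_price, id_by_source = [], None, {}
        for r in obs:
            # Price events are tracked per property, so a new portal ID cannot reset the timeline.
            if last_price is None:
                events.append((r["seen"], "new", r["price"]))
            elif r["price"] < last_price:
                events.append((r["seen"], "reduced", r["price"]))
            elif r["price"] > last_price:
                events.append((r["seen"], "increased", r["price"]))
            last_price = r["price"]
            # Same source, different listing ID for the same property: record a relist.
            prev_id = id_by_source.get(r["source"])
            if prev_id is not None and prev_id != r["listing_id"]:
                events.append((r["seen"], "relisted", r["listing_id"]))
            id_by_source[r["source"]] = r["listing_id"]
        golden[key] = {
            "price": obs[-1]["price"],  # simplification: latest observation wins
            "listing_ids": sorted({r["listing_id"] for r in obs}),
            "events": events,
        }
    return golden


rows = [
    {"source": "portal_a", "address": "12 King St", "unit": "3", "price": 450_000,
     "listing_id": "A-991", "seen": date(2024, 5, 1)},
    {"source": "portal_b", "address": "12 King Street", "unit": "3", "price": 450_000,
     "listing_id": "B-188", "seen": date(2024, 5, 2)},
    {"source": "portal_a", "address": "12 King St", "unit": "3", "price": 445_000,
     "listing_id": "A-1044", "seen": date(2024, 5, 20)},
]
for key, record in build_golden_records(rows).items():
    print(key, record["price"], [e[1] for e in record["events"]])
# 12 king st #3 445000 ['new', 'reduced', 'relisted']
```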

Compliance, ethics, and legal considerations

Compliance is a design constraint. If you get it wrong, you can lose data access, expose personal data, or create contractual risk.

- Data licensing and terms of use: confirm rights to collect, store, and redistribute data for your exact use case (internal analytics vs client-facing product).

- Privacy regulations and personal data: minimise PII, apply retention rules, and follow applicable laws (e.g., GDPR/UK GDPR, CCPA where relevant).

- Ethical data collection practice: avoid dark patterns, respect access controls, and document why each data element is necessary.

This guide is not legal advice, but rather an engineering and governance checklist to take into your legal review.

Primary references you’ll typically review with counsel/compliance:

- EU GDPR (Regulation (EU) 2016/679): https://eur-lex.europa.eu/eli/reg/2016/679/oj

- UK GDPR guidance (ICO): https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/

- California CCPA overview (State of California DOJ): https://oag.ca.gov/privacy/ccpa

The role of AI and automation in property data aggregation

AI helps when it reduces manual reconciliation, but it hurts when it introduces untraceable decisions.

Where AI performs well:

- AI-based data matching and de-duplication: probabilistic matching (e.g., Fellegi–Sunter-style models) can use similarity signals (Levenshtein/Jaccard/cosine) to improve recall when addresses and IDs vary.

- NLP for listings, descriptions, and documents: extract features like renovations, amenities, and restrictions from text.

- Predictive analytics for pricing and market trends: build forecasts using clean event streams (reductions, relists, time-on-market).

Where AI needs guardrails:

- Never let a model overwrite a canonical field without storing the raw evidence and confidence.

- Use human review for “high-impact” attributes (ownership, legal restrictions, zoning), because errors become expensive fast.
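Both guardrails can be enforced with a routing step between the model and the canonical record. The field set, threshold and outcome names below are hypothetical; the sketch only shows the order of checks: no stored evidence means no write, high-impact fields always go to a person, and low-confidence values are kept as evidence without touching the canonical value.

```python
from dataclasses import dataclass

HIGH_IMPACT_FIELDS = {"owner", "legal_restrictions", "zoning"}  # per the text; extend as needed
AUTO_ACCEPT_CONFIDENCE = 0.9  # hypothetical threshold; calibrate against your QA samples


@dataclass(frozen=True)
class Proposal:
    field: str
    value: str
    confidence: float
    evidence_ref: str  # pointer to the raw text/document the model read


def route(p: Proposal) -> str:
    """Decide what happens to a model-proposed value before it can touch the canonical record."""
    if not p.evidence_ref:
        return "reject"            # no stored evidence, no overwrite
    if p.field in HIGH_IMPACT_FIELDS:
        return "human_review"      # always reviewed, however confident the model is
    if p.confidence >= AUTO_ACCEPT_CONFIDENCE:
        return "auto_accept"       # written together with evidence and confidence for lineage
    return "keep_as_evidence"      # stored, but the canonical value stays unchanged


print(route(Proposal("zoning", "R2", 0.99, "raw/doc-17.pdf")))  # human_review
```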

How GroupBWT approaches this differently (identity + QA first, coverage second)

Most teams don’t fail because they can’t collect listings. They fail because they can’t prove identity, quality, and lineage when the source inevitably changes.

In practice, our delivery approach focuses on:

- Identity as a product layer (not a one-off dedup script): rules + confidence scoring + event history.

- QA and observability as default: completeness, drift, and “missingness” alarms so issues surface fast.

- Field-level lineage so the business can answer “why do we trust this number?” without engineering support.

If you’re deciding between building and outsourcing, our data aggregation services overview explains what a managed, auditable delivery usually includes (SLAs, QA reporting, and change management).

Build vs buy: choosing a data aggregation for real estate strategy

There isn’t a universal answer; there is a universal decision process. Below is a matrix your team can use in procurement discussions.

Decision matrix (build vs buy)

| Criterion | Buy (platform/data vendor) | Build (custom pipeline) |

| Time to first dataset | Fast | Medium |

| Coverage flexibility | Limited to vendor scope | High |

| Control over dedup logic | Low/medium | High |

| Auditability & lineage | Varies | Can be designed-in |

| Long-term cost | Predictable, can grow | Higher upfront, optimisable |

| Competitive differentiation | Often low | High |

Recommendation (use this decision tree):

- Start with vendors if you need standard MLS coverage within 6 months.

- Build custom if your competitive advantage is matching accuracy, or if you integrate into proprietary underwriting models.

A hybrid is common: buy licensed feeds where they fit, and build custom acquisition + identity where differentiation matters. This is often how organisations aggregate real estate data without betting the company on a single vendor or a brittle scraper.

If you’re comparing providers, start with this shortlist of top data aggregation companies, then pressure-test every vendor on identity, QA, and lineage—not just source count.

If your workflow is the product, custom data aggregation is usually the more realistic long-term path.

Data aggregation for real estate by business model

Different models need different “truth definitions.”

PropTech platforms and marketplaces

You need near-real-time freshness, strong dedup, and user-facing trust cues (timestamps, verification flags). Your pipeline becomes part of the product experience.

Real estate investment and asset management firms

You need an auditable history, stable entities, and scenario analysis. The key output is not listings; it’s risk-adjusted insight and portfolio comparability.

Brokers, agencies, and valuation companies

You need speed plus accuracy. A pipeline that aggregates real estate data into daily feeds can outperform manual checks, especially during volatile weeks.

How to get started with data aggregation for real estate (a pragmatic plan)

If you’re asking “how to aggregate real estate data” without creating a maintenance nightmare, start with scope and governance, then build the smallest reliable loop.

Step 1: define business goals and KPIs

Pick 2–3 of the KPIs below, choosing those that tie directly to decisions:

- Coverage: % of target market captured

- Freshness: median hours since last update

- Accuracy: sampled field accuracy (price/status)

- Dedup rate: % of records linked to a property entity

- Reliability: pipeline uptime + completeness alerts
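Most of these KPIs fall out of the pipeline's own metadata. The sketch below assumes a hypothetical record shape (`property_id` set once identity resolution links the record, `updated_at` as a timezone-aware timestamp) and an externally estimated target-market size for the coverage denominator; accuracy comes from a human-checked sample rather than from the pipeline itself.

```python
from datetime import datetime, timedelta, timezone
from statistics import median


def pipeline_kpis(records: list, target_market_size: int, now: datetime) -> dict:
    """Coverage, freshness and dedup-link rate for one batch of records."""
    hours_since_update = [(now - r["updated_at"]).total_seconds() / 3600 for r in records]
    linked = [r["property_id"] for r in records if r.get("property_id")]
    return {
        "coverage_pct": 100 * len(set(linked)) / target_market_size,
        "freshness_median_hours": median(hours_since_update),
        "dedup_link_rate_pct": 100 * len(linked) / len(records),
    }


def sampled_accuracy(samples: list) -> float:
    """Percentage of (pipeline_value, human_checked_value) pairs that agree."""
    return 100 * sum(p == h for p, h in samples) / len(samples)


now = datetime(2024, 6, 10, 12, tzinfo=timezone.utc)
records = [
    {"property_id": "p1", "updated_at": now - timedelta(hours=2)},
    {"property_id": "p1", "updated_at": now - timedelta(hours=6)},
    {"property_id": "p2", "updated_at": now - timedelta(hours=30)},
    {"property_id": None, "updated_at": now - timedelta(hours=10)},
]
print(pipeline_kpis(records, target_market_size=4, now=now))
# {'coverage_pct': 50.0, 'freshness_median_hours': 8.0, 'dedup_link_rate_pct': 75.0}
```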

Step 2: select data sources and technologies

Match sources to workflows. A valuation workflow may prioritise MLS and sold history; a lead-gen workflow may prioritise active listings and churn.

Also decide where data lives (warehouse vs product DB) and how teams access it (BI vs API).

Step 3: scale from pilot to enterprise-level platform

Pilot doesn’t mean “toy.” Pilot means:

- 1–2 regions

- 2–3 sources

- full QA + monitoring

- clear success criteria

Then scale by repeating the same pattern per region/source, not by rewriting everything.

30‑day launch checklist

- Define 3 business questions you must answer weekly.

- List sources and confirm licensing/usage rights.

- Define canonical property entity fields (10–20 max).

- Implement address standardisation + status enums.

- Build identity rules (exact + fuzzy + confidence).

- Add completeness checks (per source, per region).

- Add drift detection (HTML/API schema change alarms).

- Create a “truth dashboard” (freshness, coverage, errors).

- Run a weekly human QA sample (50–200 records).

- Lock an update cadence and ownership (who responds to alerts).
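The completeness and drift items can start very small. The sketch below compares each batch (run it per source and per region) against a baseline of expected field names and types, and flags required fields whose fill rate drops below a threshold. The baseline, field names and the 95% threshold are hypothetical.

```python
def schema_drift(baseline: dict, batch: list) -> dict:
    """Compare field names/types in a batch with a baseline {field: type_name}."""
    seen: dict = {}
    for rec in batch:
        for k, v in rec.items():
            seen.setdefault(k, set()).add(type(v).__name__)
    return {
        "missing_fields": sorted(set(baseline) - set(seen)),
        "new_fields": sorted(set(seen) - set(baseline)),
        # A type change is any observed type other than the baseline type or None.
        "type_changes": sorted(
            k for k in baseline if k in seen and seen[k] - {baseline[k], "NoneType"}
        ),
    }


def completeness(batch: list, required: list) -> dict:
    """Share of records with a non-null value, per required field."""
    n = len(batch) or 1
    return {f: sum(r.get(f) is not None for r in batch) / n for f in required}


def alerts(baseline: dict, batch: list, required: list, min_completeness: float = 0.95) -> list:
    issues = [f"schema: {k}={v}" for k, v in schema_drift(baseline, batch).items() if v]
    issues += [f"completeness: {f}={c:.0%}"
               for f, c in completeness(batch, required).items() if c < min_completeness]
    return issues


baseline = {"price": "int", "status": "str", "beds": "int"}
batch = [
    {"price": "450,000", "status": "active", "bedrooms": 3},   # price now a string, field renamed
    {"price": "445,000", "status": None, "bedrooms": 2},
]
for issue in alerts(baseline, batch, required=["price", "status"]):
    print(issue)
```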

A simple cost-benefit + ROI estimate

Gross benefit = (hours saved × hourly cost) + (errors avoided × avg loss per error)

Total cost = platform cost + engineering cost

ROI % = ( Gross benefit − Total cost ) / Total cost × 100

(If you want this as a lightweight “calculator,” paste the three lines into a spreadsheet and treat it as a baseline—not a business case.)
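The same estimate as a tiny function, with made-up inputs. The sketch treats the savings terms as gross benefit and subtracts total cost before dividing, which is the conventional ROI definition; all figures are hypothetical.

```python
def roi_pct(hours_saved: float, hourly_cost: float,
            errors_avoided: float, avg_loss_per_error: float,
            platform_cost: float, engineering_cost: float) -> float:
    """ROI % = (gross benefit - total cost) / total cost * 100."""
    gross_benefit = hours_saved * hourly_cost + errors_avoided * avg_loss_per_error
    total_cost = platform_cost + engineering_cost
    return (gross_benefit - total_cost) / total_cost * 100


# Hypothetical year: 1,000 analyst hours at 50/hour and 10 avoided errors at 5,000 each,
# against 30,000 platform cost and 20,000 engineering cost.
print(roi_pct(1_000, 50, 10, 5_000, 30_000, 20_000))  # 100.0
```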

Final checklist: make real estate data aggregation provable

Custom wins when:

- Your matching logic is your advantage,

- You need field-level lineage, or

- You must integrate directly into internal pricing/underwriting systems.

One practical litmus test: if your business can’t clearly explain why a record is trusted, you don’t have real estate aggregate data—you have an export.

“Even strong internal tools fail when listings vanish, layouts shift, and pricing misaligns. The fix is not more scraping—it’s embedding QA and identity logic into the workflow where decisions are made.”

— Oleg Boyko, COO, GroupBWT

FAQ


Why do our “active inventory” numbers disagree across MLS, portals, and internal reports?

Because “status” isn’t a shared standard, and the meanings drift. Use canonical status mapping + status history; otherwise you’ll be comparing numbers that aren’t like-for-like.


How do we stop price reductions from showing up as “new listings”?

Solve it as identity + event history: tie relists to the same property and log events (new / reduced / relisted / removed) so a new portal ID can’t reset the timeline.


What duplicate rate is a red flag for comps and underwriting decisions?

If unresolved near-duplicates stay above ~3–5%, assume comps, DOM, and “new inventory” metrics are already drifting—track it weekly and treat spikes like incidents.


What breaks first when we add a second region or a second portal?

Localisation + drift: address rules, units, and status semantics change, and portal fields/layouts shift—without drift monitoring + regression tests, you’ll ship quiet errors.


How do we aggregate real estate data without a dedicated data engineering team?

Start narrow (one region, few sources) and demand delivery artefacts: a data contract, QA metrics, visible lineage in the UI, and SLAs. You can outsource ops, not ownership.


How often should property data be refreshed?

Set refresh SLAs to the decision cycle: status/pricing daily or near-daily; ownership history weekly (typical). Without SLAs, “fresh” has no owner.


What is the difference between data collection and aggregation in real estate?

Collection pulls records; aggregation standardises, resolves identity, deduplicates, and delivers consistent definitions with explainable lineage.