Choose a web scraping service with confidence: data quality, anti-bot resilience, compliance, integrations, monitoring, pricing, and security—plus a vendor scorecard.

How to Choose a Web Scraping Service: The Vendor Scorecard & Operational Guide

According to the Web Scraping Market Analysis by Mordor Intelligence (published January 2026), the global web scraping market is projected to more than double from USD 1.03 billion in 2025 to USD 2.23 billion by 2031.

A web scraping provider is not a “data downloader.” If you’re deciding how to choose a web scraping service, treat it like an operational capability you’ll depend on when dashboards, pricing, risk, or sales teams are already mid-decision—because that’s when bad data gets expensive.

In practice, you’re buying reliability: QA gates, monitoring, and a recovery loop—not “more pages per hour.” That’s the mental model behind web scraping as a service, and it’s how we recommend evaluating vendors (even if you ultimately build in-house).

This guide shows you how to choose providers using a repeatable scorecard, practical acceptance tests, and realistic delivery estimates (shared as aggregated ranges because our clients’ work is under NDA). Use it as a checklist when choosing a web scraping service partner.

Many teams start without formal QA or monitoring—especially on “quick win” projects. That’s normal. This guide helps you see what to add first, without pretending you need enterprise maturity on day one.

Definitions (don’t memorise—do contract them)

You don’t need to memorise these terms — but you should expect your vendor to define them contractually (in the SOW/MSA and the data contract), because that’s what prevents “CSV outsourcing.”

- A web scraping service is a managed capability that extracts data from websites, validates it, and delivers it to your systems on a schedule with monitoring and support.

- A web scraping solution is the combination of tooling + infrastructure + processes used to scrape, validate, and deliver web data (in-house, vendor, or hybrid).

- A data contract is a written schema + rules that define fields, types, required/optional status, and validity ranges (what “usable” means).

- Selector drift is when a site layout changes, and your extraction logic starts returning missing or wrong fields—so numbers quietly stop matching reality.

- Usable Record Rate (URR) is the % of delivered records that pass QA rules (dedupe, null thresholds, validity checks)—i.e., how much of your feed you can actually trust.

- MTTR (Mean Time to Repair) is the time from a detected breakage to a restored, validated delivery—i.e., how long your dashboard can be wrong before it’s fixed.
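To make the data contract and URR definitions above concrete, here is a minimal Python sketch. The `FieldRule` type, the field names, and the validity rules are illustrative assumptions rather than a standard, and a real contract would also cover the dedupe and null-threshold rules agreed in the SOW.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class FieldRule:
    """One field of a hypothetical data contract."""
    name: str
    required: bool
    is_valid: Callable[[Any], bool]


# Illustrative contract for a pricing feed (field names and rules are assumptions).
PRICING_CONTRACT = [
    FieldRule("url", True, lambda v: isinstance(v, str) and v.startswith("http")),
    FieldRule("price", True, lambda v: isinstance(v, (int, float)) and v > 0),
    FieldRule("currency", True, lambda v: isinstance(v, str) and len(v) == 3),
    FieldRule("promo", False, lambda v: isinstance(v, str)),
]


def record_passes(record: dict, contract: list) -> bool:
    """A record is usable only if every required field is present and valid."""
    for rule in contract:
        value = record.get(rule.name)
        if value is None:
            if rule.required:
                return False
            continue  # optional field may be absent
        if not rule.is_valid(value):
            return False
    return True


def usable_record_rate(records: list, contract: list) -> float:
    """Share of delivered records that pass the contract (0.0 for an empty batch)."""
    if not records:
        return 0.0
    return sum(record_passes(r, contract) for r in records) / len(records)


sample = [
    {"url": "https://example.com/p/1", "price": 19.99, "currency": "EUR"},
    {"url": "https://example.com/p/2", "price": None, "currency": "EUR"},   # missing price
    {"url": "https://example.com/p/3", "price": 5.0, "currency": "EUR", "promo": "2-for-1"},
    {"url": "https://example.com/p/4", "price": -1, "currency": "EUR"},     # invalid price
]
print(f"URR: {usable_record_rate(sample, PRICING_CONTRACT):.0%}")
```

In this toy batch two of four records pass, so URR is 50%. The point is that the pass/fail rule lives in one written place that both you and the vendor can run.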

The 5 procurement questions that prevent expensive rework

If you’re hiring a web scraping company, don’t start with “How many pages can you scrape?” Start with these:

- What does “good data” mean (accuracy, completeness, dedupe rules, URR targets with benchmark context)?

- What breaks most often (JS rendering, layout drift/selector drift, blocks) and how do you recover (MTTR + replay)?

- What’s your compliance stance (Terms of Service (ToS) approach, GDPR/CCPA boundaries, retention, audit logs)?

- How will data be delivered (API/DB/BI), validated, and versioned (data contract)?

- Who owns operations (monitoring, alerts, on-call, change management)?

“Across NDA delivery programmes, selector drift (when site changes make your numbers quietly stop matching reality) causes more production incidents than ‘hard blocks’. If a vendor can’t show drift MTTR targets and a replay path, they’re selling extraction — not reliability.”

— Dmytro Naumenko, CTO, GroupBWT

Why choosing the right web scraping service matters

Business risks of poor-quality scraping

Poor scraping rarely fails with a clean error. It fails quietly and then shows up in decisions:

- Missing records that skew dashboards and forecasts

- Duplicates that inflate KPIs and create false growth signals

- Wrong values (currency, unit size, variant, availability) pulled from the wrong element

- Compliance exposure because collection boundaries and retention weren’t defined

Impact on data accuracy, compliance, and decision-making

Every decision inherits your data’s failure modes. If you can’t audit where a number came from, you can’t defend it to Finance, Legal, or leadership.

That’s why choosing a web scraping company is a data governance decision, not a one-off technical purchase.

Cost of rebuilding scraping pipelines later

“Quick wins” become core workflows fast. When the first version ships without monitoring, replay, and clear contracts, you pay later in:

- Emergency fixes after site changes

- Retroactive backfills because history wasn’t captured

- Manual QA because quality gates were never defined

Rule of thumb (aggregated, NDA): for business-critical datasets, the second rebuild often costs 1.5–3× the initial “cheap” implementation over the first 6–12 months, because you’re adding operations and reprocessing historical gaps under pressure.

Define your web scraping goals before choosing a provider

Before evaluating vendors, write a one-page Data Brief. It turns vendor demos into a measurable evaluation instead of a sales pitch—and it’s the fastest way to align Product, Data, and Procurement.

What data do you need (price, reviews, leads, news, sentiment)

| Use case | Typical fields | Hidden complexity | Who consumes it |
| --- | --- | --- | --- |
| Pricing intelligence | price, currency, promo, availability | variants, bundles, geo pricing | BI + pricing ops |
| Reviews & ratings | rating, text, author, date | pagination, anti-bot, dedupe | CX + product |
| Lead generation | company, role, email, URL | PII boundaries, consent, enrichment | sales ops |
| News monitoring | headline, date, entities, link | duplication, source drift | risk + comms |
| Sentiment signals | sentiment, topic, entities | sarcasm/context, bias, language mix | research |

Example Data Brief fields (copy/paste)

- Sources (domains/URLs), geo, languages

- Entities/fields + required vs optional

- Update frequency + “freshness window” (e.g., must be <3 hours old for pricing/availability, but 24–72 hours can be fine for long-tail enrichment)

- Expected volume (pages/day, records/day)

- Delivery target (API, S3/Blob, DB table, BI connector)

- QA rules (null thresholds, ranges, duplicates, canonical URLs)

- Compliance constraints (PII, retention, audit logs)

Data freshness and update frequency requirements

Freshness is a system constraint, not a preference. Define tiers per dataset:

- Near real-time (seconds/minutes): alerts, trading, risk

- Frequent (hourly): pricing, availability

- Daily/weekly: catalog enrichment, long-tail coverage
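One way to turn the freshness tiers above into a testable rule is a maximum-age check per tier. This is a sketch only: the tier names and age limits below are assumptions (the 3-hour and 72-hour values echo the Data Brief example), and `captured_at` is a hypothetical capture timestamp attached to each record.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Maximum acceptable age per tier. Illustrative values; set them per dataset.
MAX_AGE = {
    "near_real_time": timedelta(minutes=5),
    "frequent": timedelta(hours=3),
    "daily_weekly": timedelta(hours=72),
}


def is_fresh(captured_at: datetime, tier: str, now: Optional[datetime] = None) -> bool:
    """True if the record's capture time is within the tier's freshness window."""
    now = now or datetime.now(timezone.utc)
    return now - captured_at <= MAX_AGE[tier]


def stale_share(capture_times: list, tier: str, now: Optional[datetime] = None) -> float:
    """Fraction of records in a delivery that are older than the tier allows."""
    if not capture_times:
        return 0.0
    stale = sum(not is_fresh(t, tier, now) for t in capture_times)
    return stale / len(capture_times)


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
times = [now - timedelta(hours=1), now - timedelta(hours=2), now - timedelta(hours=5)]
print(stale_share(times, "frequent", now))
```

A check like this belongs in the acceptance test for each delivery, so a late batch is reported as late rather than silently loaded.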

Scale: hundreds vs millions of pages

Scale changes crawl strategy, proxy budget, storage, retries, and QA sampling. Ask how the provider handles:

- Throughput under load (queueing + backpressure handling, i.e., slowing down safely so you don’t crash the pipeline or trigger bans/cost spikes)

- Cost controls when volume spikes

- Sampling strategy (how you QA 1M records without manual checks)
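For the sampling question, a common starting point is the standard sample-size formula for estimating a proportion, with a finite population correction. The sketch below assumes a simple random sample, a 95% confidence level (`z = 1.96`), and the most conservative expected pass rate of 50%; none of these parameters come from a specific vendor.

```python
import math


def qa_sample_size(population: int, margin: float = 0.01,
                   z: float = 1.96, expected_rate: float = 0.5) -> int:
    """Records to sample so the measured pass rate is within +/- margin.

    Uses n0 = z^2 * p * (1 - p) / margin^2, then corrects for a finite population.
    """
    n0 = (z ** 2) * expected_rate * (1 - expected_rate) / margin ** 2
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


print(qa_sample_size(1_000_000))               # +/- 1 percentage point
print(qa_sample_size(1_000_000, margin=0.02))  # +/- 2 percentage points
```

Under these assumptions, roughly 9,500 randomly chosen records are enough to estimate the pass rate of a 1M-record feed to within one percentage point. The estimate only holds if the sample is random, not the first N rows of the file.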

Structured vs unstructured data needs

Structured sources (JSON-LD, stable DOM) are cheaper. Unstructured sources drive cost and risk.

Call out if you need:

- JavaScript rendering (React/Next.js)

- Infinite scroll / client-side pagination

- PDFs/images (OCR)

- Mixed-language pages and locale-specific formatting

If your scope includes extracting meaning from text (entities, sentiment, classification) or working heavily with PDFs/images, you’re moving into AI data scraping territory, so your acceptance tests should cover model error modes (hallucinations, bias, language drift), not just selectors.

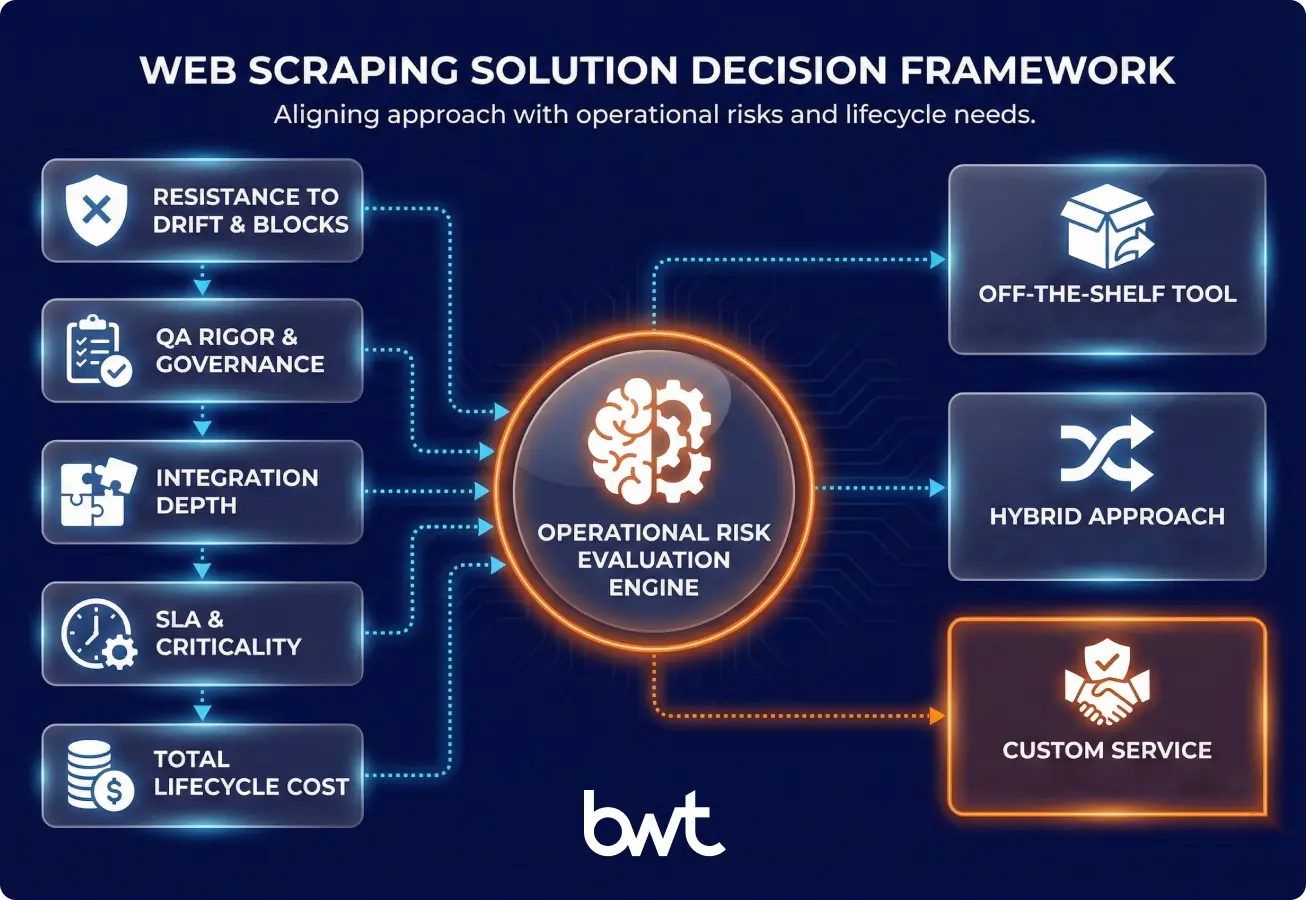

Custom web scraping services vs off-the-shelf tools

The quickest way to decide is to match the tool type to your failure modes (drift, blocks, QA, integrations).

When ready-made scraping tools are enough

Off-the-shelf tools are fine when:

- Sources are stable and low-defence

- You only need a few fields

- Downtime is acceptable

- You have an internal owner who can maintain selectors and retries

When custom web scraping is the only viable option

Custom is usually required when:

- Sites are JS-heavy or change layouts often

- You need strict QA + dedupe and a data contract

- You require SLAs, monitoring, replay, and alerting

- You need direct integration into your systems (not manual CSV handling)

Long-term scalability and flexibility comparison

| Option | Best for | Trade-off |
| --- | --- | --- |
| Off-the-shelf tool | small scope, fast start | brittle under drift |
| Custom service | enterprise reliability | requires engineering discipline |
| Hybrid | speed + control | needs clean interfaces/contracts |

Key criteria to evaluate a web scraping service provider

To make vendor selection repeatable, score vendors on evidence, not claims.

The TRUTH Scorecard (a vendor test we use)

TRUTH is a vendor evaluation framework we use internally to prevent “CSV outsourcing.”

You don’t have to use our framework — but any serious vendor evaluation should cover these five dimensions:

- T — Truth (Data quality): URR, QA rules, dedupe, auditability

- R — Resilience: drift handling, MTTR, replay, change management

- U — Utility: delivery into your stack (API/DB/BI), schema/versioning

- T — Trust (Compliance + security): GDPR/CCPA posture, controls, logs

- H — Hands (Operations): monitoring, alerts, on-call ownership

Scoring: 0–5 per dimension. Weigh what matters to your use case.
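The 0–5 scoring with weights can be reduced to a weighted average so that vendors are compared on one number. The weights and vendor scores below are made-up examples, not benchmarks; choose weights that reflect your own use case.

```python
DIMENSIONS = ("truth", "resilience", "utility", "trust", "hands")


def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of the five TRUTH dimensions, on the same 0-5 scale."""
    for dim in DIMENSIONS:
        if not 0 <= scores[dim] <= 5:
            raise ValueError(f"{dim} score must be between 0 and 5")
    total_weight = sum(weights[dim] for dim in DIMENSIONS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[dim] * weights[dim] for dim in DIMENSIONS) / total_weight


# Hypothetical weights for a pricing feed where quality and recovery matter most.
weights = {"truth": 3, "resilience": 3, "utility": 2, "trust": 1, "hands": 1}
vendor_a = {"truth": 4, "resilience": 2, "utility": 5, "trust": 3, "hands": 3}
vendor_b = {"truth": 4, "resilience": 4, "utility": 3, "trust": 4, "hands": 4}

print(round(weighted_score(vendor_a, weights), 2))
print(round(weighted_score(vendor_b, weights), 2))
```

With these example inputs Vendor A scores 3.4 and Vendor B scores 3.8: the stronger integration story of Vendor A does not offset its weaker resilience once resilience carries more weight.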

If you’re still building a shortlist, use any market list as a starting point—but don’t skip the proof. Here’s our overview of top web scraping companies, and the scorecard below is how we’d validate any shortlist.

Vendor scorecard (copy/paste)

| Dimension | What “good” looks like | What to request |
| --- | --- | --- |
| Truth | QA rules + URR target + dedupe logic + audit trail | Sample dataset + QA report format |
| Resilience | Drift playbook + MTTR target + replay loop | Incident example + runbook excerpt |
| Utility | API/DB outputs + schema versioning + change notifications | API docs + example schema + changelog |
| Trust | Compliance boundaries + retention + audit logs + security controls | Compliance memo + retention policy + security overview |
| Hands | Monitoring dashboards + alerts + on-call ownership | Screenshot + escalation SLA + on-call process |

“When we price projects, we assume operations are a first-class cost. In aggregated NDA programmes, monitoring + QA + change management can consume 30–50% of ongoing effort (monthly engineering time after launch, not the initial build) — and that’s exactly what keeps data usable.”

— Alex Yudin, Head of Data Engineering & Web Scraping Lead, GroupBWT

Data quality and accuracy guarantees

Ask for definitions, not adjectives—because “accurate” can mean anything unless you specify measurement.

Minimum acceptance questions (with useful context)

- Is “accuracy” measured field-level (each value) or record-level (the whole record passes)?

– Why it matters: field-level accuracy can look great while record-level usability collapses if a required field is missing.

- What is the URR target?

– 98%+ is a common target for business-critical datasets (pricing, risk).

– ~95% can be acceptable for exploratory research.

– 99.5%+ often requires manual review or heavier automation, which is expensive at scale.

- What’s the completeness expectation per source (and what’s an acceptable “partial delivery” threshold)?

- How is dedupe done (URL canonicalisation, content similarity, entity keys)?
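As an illustration of the first dedupe option, URL canonicalisation, here is a small sketch using Python's standard `urllib.parse`. The list of tracking parameters and the trailing-slash rule are assumptions; real sources often need per-domain rules, and content-similarity or entity-key dedupe is a separate step.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Parameters treated as tracking noise (an assumption; extend per source).
TRACKING_PARAMS = {"gclid", "fbclid"}
TRACKING_PREFIXES = ("utm_",)


def canonical_url(url: str) -> str:
    """Normalise a URL so trivially different links map to the same key."""
    parts = urlsplit(url.strip())
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS and not key.startswith(TRACKING_PREFIXES)
    ]
    path = parts.path.rstrip("/") or "/"
    # Lowercase scheme and host, sort the remaining query, drop the fragment.
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(sorted(query)), "")
    )


def dedupe_by_url(records: list) -> list:
    """Keep the first record seen for each canonical URL."""
    seen = set()
    unique = []
    for record in records:
        key = canonical_url(record["url"])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


rows = [
    {"url": "HTTPS://Shop.Example.com/item/42/?utm_source=x&b=2&a=1#reviews"},
    {"url": "https://shop.example.com/item/42?a=1&b=2&gclid=abc"},
]
print(canonical_url(rows[0]["url"]))
print(len(dedupe_by_url(rows)))
```

Both example rows collapse to `https://shop.example.com/item/42?a=1&b=2`, so only one record survives. Ask the vendor to document their equivalent of these rules, because they decide what counts as a duplicate in your KPIs.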

Micro-case (why field-level vs record-level matters)

Example scenario: a vendor delivers 1M pricing records/day. Field-level “price” accuracy looks fine, but 8% of records have missing availability after a layout change. Your dashboard still updates, yet pricing ops start discounting items that are actually out of stock. The fix isn’t “scrape faster”—it’s treating missing availability as a hard fail in the data contract, alerting on URR drop, and replaying raw captures after selectors are repaired.
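The "alert on URR drop" step in this scenario can be sketched as a comparison of today's URR against both an absolute target and a trailing baseline. The 98% target follows the business-critical guideline above; the 2-point drop threshold and the function name are assumptions for illustration.

```python
from statistics import mean


def urr_alert(history: list, today: float,
              target: float = 0.98, max_drop: float = 0.02) -> list:
    """Return the reasons (if any) why today's URR should page someone."""
    reasons = []
    if today < target:
        reasons.append(f"URR {today:.1%} is below target {target:.1%}")
    if history:
        baseline = mean(history)
        if baseline - today > max_drop:
            reasons.append(f"URR dropped {baseline - today:.1%} versus trailing baseline")
    return reasons


recent = [0.990, 0.991, 0.989]

# 8% of records fail the contract because availability is missing.
for reason in urr_alert(recent, 0.92):
    print(reason)

# A normal day produces no alert.
print(urr_alert(recent, 0.985))
```

If missing availability is a hard fail in the contract, the layout change in the micro-case pushes URR to 92% at best, which trips both conditions on the first affected delivery instead of surfacing weeks later in pricing decisions.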

Example QA checks (adapt to your dataset)

- Null-rate by field: drift often shows up as null spikes (<1–3% for required fields is a common starting guardrail)

- Range/format checks: prevents wrong currency/units (price > 0, ISO date)

- Duplicate rate: prevents inflated KPIs (<0.5–2% depending on source)

- Outlier detection: catches wrong selectors (z-score / IQR rules; for skewed distributions like prices/ratings, percentile bands such as 1st–99th are often more robust)

- Freshness window: ensures timeliness (<X hours since source update, tied to your use case tier)
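Three of the checks above (null rate, duplicate rate, and percentile-band outliers) can be sketched with the standard library alone. The field names and the sample data are hypothetical, and the band is computed from a historical baseline rather than from the batch being tested, since a band fitted to the batch itself would always flag its own tails.

```python
from statistics import quantiles


def null_rate(records: list, field: str) -> float:
    """Share of records where the field is missing or None."""
    if not records:
        return 0.0
    return sum(r.get(field) is None for r in records) / len(records)


def duplicate_rate(records: list, key_fields: tuple) -> float:
    """Share of records that repeat an already-seen key."""
    if not records:
        return 0.0
    unique = {tuple(r.get(f) for f in key_fields) for r in records}
    return 1 - len(unique) / len(records)


def percentile_band(baseline: list, low_pct: int = 1, high_pct: int = 99) -> tuple:
    """Lower and upper cut-offs from historical values (needs at least 2 points)."""
    cuts = quantiles(baseline, n=100)  # 99 cut points; cuts[0] is the 1st percentile
    return cuts[low_pct - 1], cuts[high_pct - 1]


def outside_band(values: list, band: tuple) -> list:
    low, high = band
    return [v for v in values if v < low or v > high]


baseline_prices = [10.0 + i * 0.1 for i in range(200)]  # made-up history
batch = [
    {"sku": "A1", "price": 12.5, "availability": "in_stock"},
    {"sku": "A2", "price": 15.0, "availability": None},
    {"sku": "A2", "price": 15.0, "availability": None},      # duplicate key
    {"sku": "A3", "price": 1500.0, "availability": "in_stock"},  # likely wrong selector
]

print(null_rate(batch, "availability"))
print(duplicate_rate(batch, ("sku",)))
print(outside_band([r["price"] for r in batch], percentile_band(baseline_prices)))
```

Compare each result against the guardrail you agreed for that dataset, and treat a breach on a required field as a failed delivery rather than a warning.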

Ability to handle dynamic and JavaScript-heavy websites

A serious provider can explain:

- When they use HTTP extraction vs headless rendering

- How they reduce render cost (selective rendering, caching)

- How they detect partial loads and missing blocks

Practical test: give them your hardest JS page and ask for a working sample + QA report, not a promise.

Anti-bot, CAPTCHA, and IP-blocking resilience

Blocking is normal at scale. The key is detection + recovery + transparency, and staying within lawful/ethical boundaries.

Ask how they:

- Detect block signals (challenge pages, anomalies, latency spikes)

- Adjust pacing and retries without causing load spikes

- Report “partial delivery” vs “missing data” clearly

Note: if your use case requires access that conflicts with a site’s ToS or local regulation, the right answer may be partnerships, licensed data, or official APIs — not escalation of evasion.

Scalability and performance under load

Ask for:

- Queue architecture and backpressure handling (how the system slows down safely under load)

- Per-domain budgets and throttling policy

- Backfill strategy (how to replay missed runs safely)

Data delivery formats and integrations (API, DB, BI tools)

Delivery format is a deal-breaker. Confirm:

- Outputs: JSON/CSV/Parquet as needed

- API endpoints and/or direct DB writes

- Compatibility with your BI tooling (Power BI, Looker, Tableau)

- Schema versioning and change notifications

Legal, ethical, and compliance considerations

Choosing a web scraping service without a documented legal posture is a hidden risk you’re inheriting.

What “professional” looks like:

- Documented ToS-awareness and access approach

- GDPR/CCPA alignment where applicable (PII minimisation, retention, access controls)

- Audit logs: what was collected, when, and from where

- Ethical crawling: rate limiting, respect for service stability

If your stakeholders want deeper primers you can forward internally, start here: Is web scraping legal and GDPR web scraping.

Helpful sources:

- GDPR (EU)

- CCPA (California)

- Robots Exclusion Protocol (RFC 9309)

General information only; not legal advice.

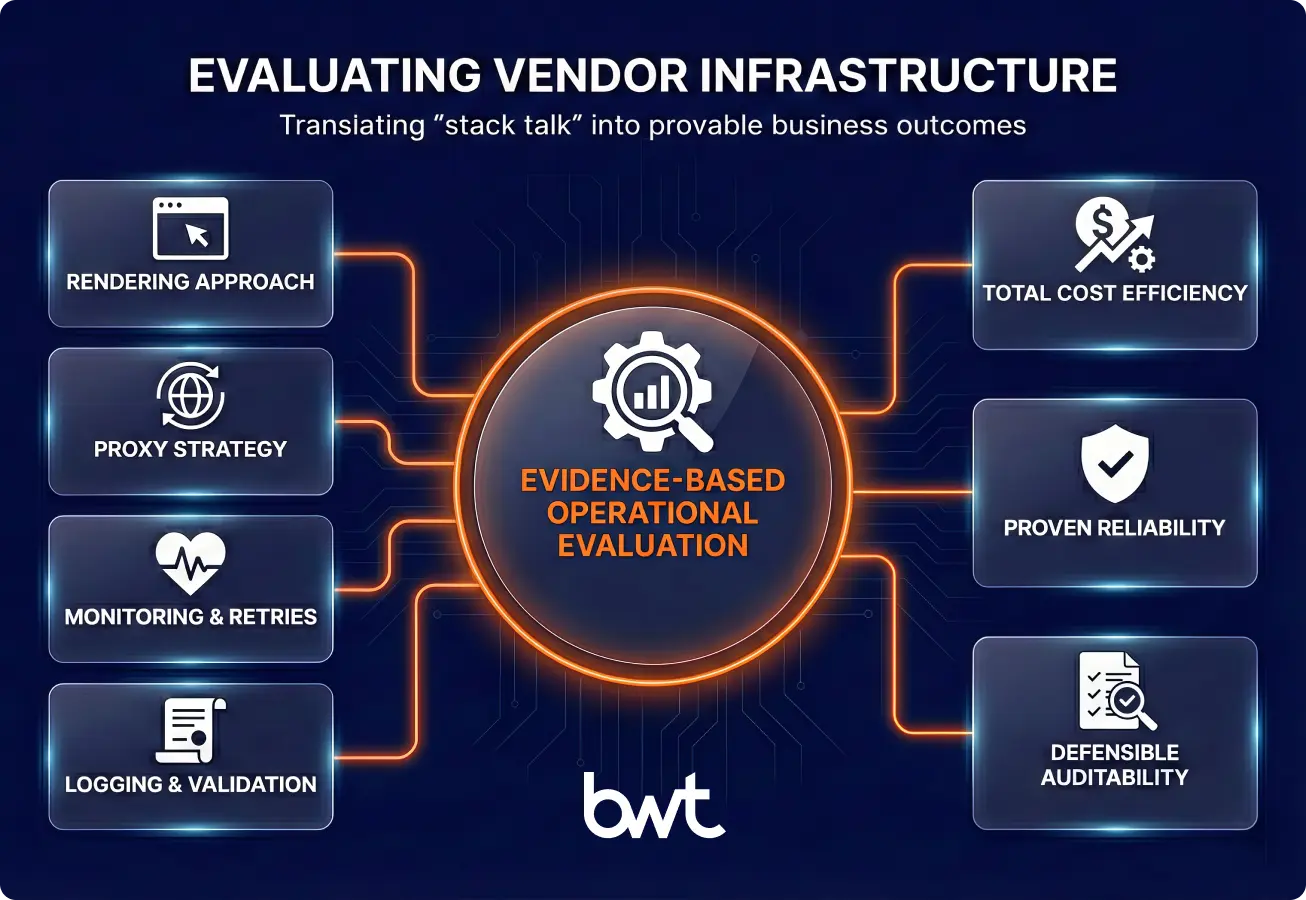

Infrastructure and technology stack capabilities

A vendor’s stack only matters insofar as it changes your cost, reliability, and auditability. Ask them to map components to outcomes.

Ask these questions (plain-language, procurement-friendly):

- Cost drivers: “What % of this dataset requires headless rendering, and how does that change cost?”

- Failure modes: “In the last 30 days, what caused the most incidents: drift, blocks, upstream outages?”

- Proof: “Show a real incident timeline: detection → fix → replay → validation.”
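If a vendor shares incident timelines, MTTR and the incident mix are easy to verify yourself. The sketch below assumes each incident is logged with a cause, a `detected` timestamp, and a `validated` timestamp (the moment a restored delivery passed QA); the incident data is invented.

```python
from collections import Counter
from datetime import datetime, timedelta

# Hypothetical incident log for one month.
incidents = [
    {"cause": "drift", "detected": datetime(2025, 3, 1, 8, 0), "validated": datetime(2025, 3, 1, 14, 0)},
    {"cause": "block", "detected": datetime(2025, 3, 9, 10, 0), "validated": datetime(2025, 3, 9, 12, 0)},
    {"cause": "drift", "detected": datetime(2025, 3, 20, 9, 0), "validated": datetime(2025, 3, 20, 13, 0)},
]


def mean_time_to_repair(log: list) -> timedelta:
    """Average time from detection to a restored, validated delivery."""
    durations = [i["validated"] - i["detected"] for i in log]
    return sum(durations, timedelta()) / len(durations)


def incidents_by_cause(log: list) -> Counter:
    return Counter(i["cause"] for i in log)


print(mean_time_to_repair(incidents))
print(incidents_by_cause(incidents).most_common(1))
```

Measuring to the validated delivery, not to the code fix, matches the MTTR definition used in this guide and keeps the replay step inside the clock.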

Headless browsers vs HTTP-based scraping

- HTTP-first is cheaper and faster on stable pages.

- Headless is required for JS rendering, complex flows, and some defences.

- The best answer is usually hybrid with fallbacks.

Proxy management and geo-distribution

Ask how they:

- Control location and ISP targeting (when legitimate business needs require it)

- Budget traffic and avoid proxy burn

- Handle region-specific content variations and locale formatting

Monitoring, retries, and failure recovery

These are non-negotiables for production:

- Retries with exponential backoff

- Error categorisation (block vs drift vs upstream outage)

- Replayable raw capture for reprocessing

- Alerting that routes to owners (vendor + your stakeholder)
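The first two items can be sketched in a few lines. This is an illustration under assumptions, not a vendor's implementation: `RetryableError`, the status-code mapping in `categorise`, and the delay parameters are all hypothetical, and the `fetch` callable stands in for whatever client the pipeline uses.

```python
import random
import time


class RetryableError(Exception):
    """Raised by the caller's fetch function for failures worth retrying."""


def categorise(status: int, missing_required: int) -> str:
    """Rough triage heuristic (an assumption): response -> incident category."""
    if status in (403, 429):
        return "block"
    if status >= 500:
        return "upstream_outage"
    if status == 200 and missing_required > 0:
        return "drift"  # page loaded, but required fields were not extracted
    return "ok" if status == 200 else "other"


def fetch_with_backoff(fetch, max_attempts=5, base=1.0, cap=60.0,
                       sleep=time.sleep, jitter=None):
    """Call fetch(), retrying on RetryableError with capped exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    # Default jitter picks a random wait up to the computed delay, so many
    # workers do not retry in lockstep.
    jitter = jitter or (lambda delay: random.uniform(0, delay))
    for attempt in range(max_attempts):
        try:
            return fetch()
        except RetryableError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: let alerting see the failure
            delay = min(cap, base * 2 ** attempt)  # 1s, 2s, 4s, ... up to cap
            sleep(jitter(delay))


# Demo with a fake fetch that fails twice; sleep is replaced so nothing waits.
attempts = {"count": 0}


def flaky_fetch():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RetryableError("temporary failure")
    return "ok"


waits = []
print(fetch_with_backoff(flaky_fetch, sleep=waits.append, jitter=lambda d: d))
print(waits)
```

Retries only help with transient failures. A response categorised as drift should go to a selector fix and a replay of raw captures, not to more retries.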

Logging, versioning, and data validation

Ask for:

- Data contracts (types, required/optional, ranges)

- Versioning for schema changes

- Validation rules (null thresholds, outlier detection)

- Change log: what changed, when, and why
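A schema change notification can be as simple as a diff between two contract versions that separates breaking from non-breaking changes. The sketch assumes a schema is represented as a mapping of field name to type name, and that removed or retyped fields count as breaking; both are illustrative conventions.

```python
def diff_schemas(old: dict, new: dict) -> dict:
    """Compare two schema versions given as {field_name: type_name}."""
    added = sorted(set(new) - set(old))
    removed = sorted(set(old) - set(new))
    retyped = sorted(f for f in set(old) & set(new) if old[f] != new[f])
    return {
        "added": added,
        "removed": removed,
        "retyped": retyped,
        # Consumers can usually ignore new fields, but not missing or retyped ones.
        "breaking": bool(removed or retyped),
    }


v1 = {"price": "float", "currency": "str", "availability": "str"}
v2 = {"price": "str", "currency": "str", "promo": "str"}

print(diff_schemas(v1, v2))
```

A breaking diff is a reasonable trigger for a version bump and an advance notice to consumers, while an additive change can ship with a changelog entry.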

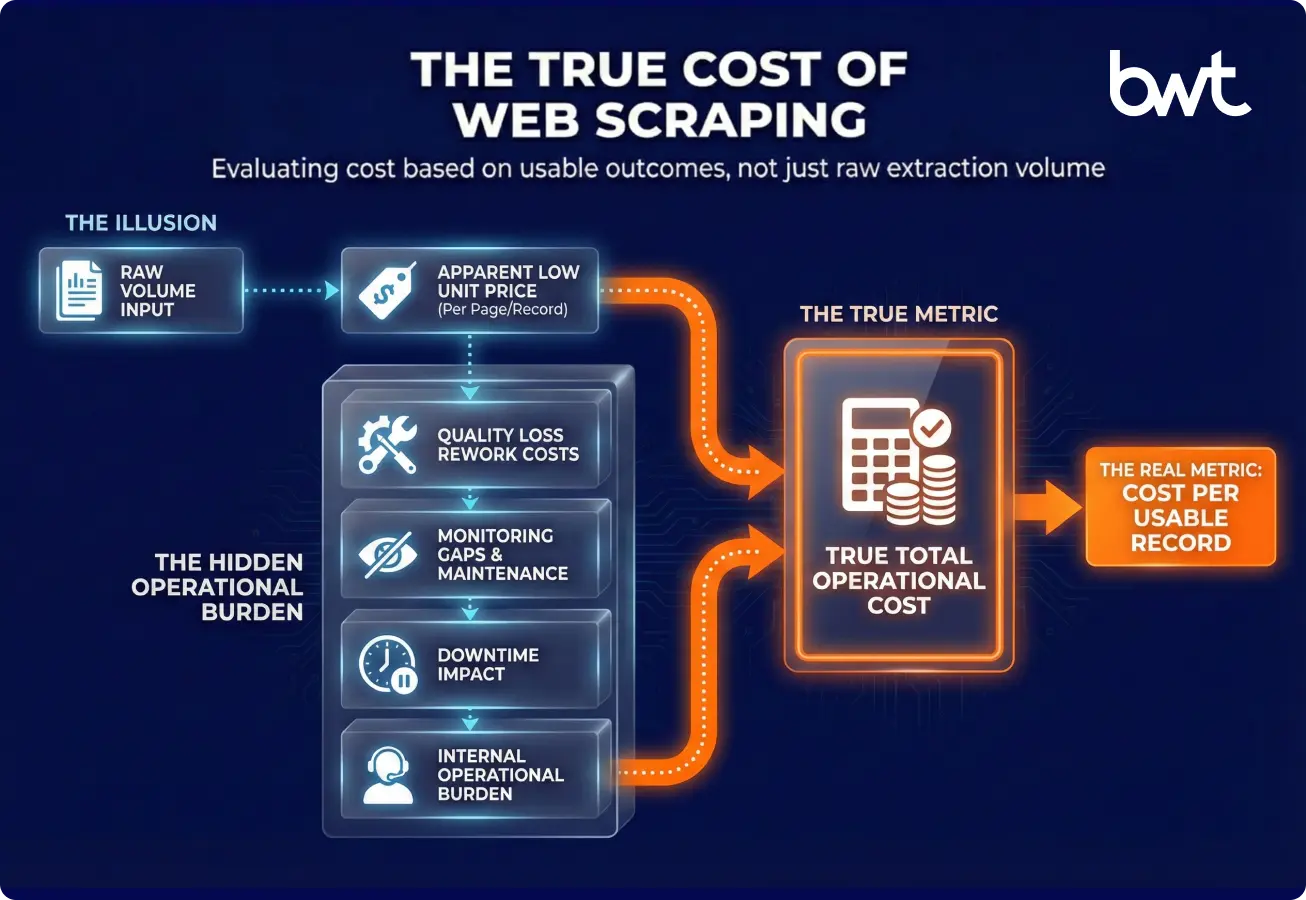

Pricing models: what you’re really paying for

Choosing a web scraping vendor on the cheapest quote usually backfires because most cost sits in resilience and operations.

Per-page, per-record, and subscription-based pricing

| Model | Best for | Watch-outs |
| --- | --- | --- |
| Per page | predictable crawling | ignores data value |
| Per record | outcome-aligned | needs strict record definitions |
| Subscription | ongoing programmes | scope creep if contracts are vague |

Hidden costs of “cheap” scraping services

Common hidden costs:

- Manual QA because the data is messy

- Frequent breakages with slow fix cycles

- “Extra fees” for JS rendering or complex sources

- Missing monitoring (you become the monitor)

Cost predictability for long-term projects (a simple estimator)

Ask for a cost model that includes:

- Proxy and rendering assumptions

- Maintenance expectations (drift frequency + MTTR targets)

- Backfill/replay pricing and retention

Quick TCO sanity check (micro-example): You ingest 1,000,000 records/month.

- Vendor A: $0.0014/record, URR 80% → you pay $1,400 for 800,000 usable records → $0.00175 per usable record

- Vendor B: $0.00165/record, URR 99% → you pay $1,650 for 990,000 usable records → $0.00167 per usable record

Even though Vendor B is “more expensive per record,” it can be cheaper per usable record—and it reduces the downstream cost of bad data (manual QA, reruns, stakeholder firefighting).
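The same arithmetic as a reusable check, using the two vendors from the micro-example. The function name is an assumption, and the calculation ignores downstream costs such as manual QA, which would widen the gap further.

```python
def cost_per_usable_record(price_per_record: float, urr: float, records: int) -> float:
    """Total spend divided by the number of records that pass QA."""
    if not 0 < urr <= 1:
        raise ValueError("urr must be in (0, 1]")
    total_cost = price_per_record * records
    usable_records = records * urr
    return total_cost / usable_records


monthly_records = 1_000_000
vendor_a = cost_per_usable_record(0.0014, 0.80, monthly_records)
vendor_b = cost_per_usable_record(0.00165, 0.99, monthly_records)

print(f"Vendor A: ${vendor_a:.5f} per usable record")
print(f"Vendor B: ${vendor_b:.5f} per usable record")
```

Because the record count cancels out, the comparison reduces to price divided by URR, which makes it easy to rerun whenever a quote or a measured URR changes.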

Security and data ownership

Security questions are straightforward—which is why you should ask them early, in writing, before you’re deep in a proof of concept.

Checklist (ask for written answers + evidence):

- Who owns the extracted data and derived datasets?

- Storage location, encryption at rest/in transit, key management

- Access control (RBAC), audit logs, and retention policies

- Secure transfer methods (SFTP, VPN, signed URLs, private APIs)

- Security proof: SOC 2/ISO 27001 (if available) or completed security questionnaire + pen test summary (where applicable)

If a vendor can’t answer these clearly (or refuses to provide any evidence), treat it as a procurement risk—not a “later” topic.

Red flags when choosing a web scraping service

“If you wonder how to choose a web scraping solution, watch for this: if a vendor can’t demo alerting, replay, and a usable-record SLA before contract signature, you’re not buying a service; you’re buying future firefighting. We treat web data like a production data product from day one.”

— Oleg Boyko, COO, GroupBWT

Conclusion: How to choose the best web scraping service

If you remember one rule: choose the provider who proves monitoring, QA, and recovery before they show you a dashboard. That’s what keeps web data trustworthy when websites change.

Quick procurement recap

- Contract “usable data” (data contract + URR target + QA report format).

- Demand resilience (drift MTTR target + replay loop + incident evidence).

- Confirm integration (API/DB/BI delivery + schema versioning + change notifications).

- Validate compliance + security (ToS posture, GDPR/CCPA boundaries, retention, audit logs, RBAC).

- Compare TCO by CPUR (cost per usable record) and include operations explicitly.

Involve Security + the Data Owner in the final review. This is the shortest path to choosing the right web scraping partner without surprises.

If you’re still not sure how to choose a web scraping solution, remember one rule: choose the provider who shows you monitoring, QA, and recovery — before they show you a dashboard.

A strong solution can prove data truth, recover from drift, and integrate safely into your workflows.

FAQ: Choosing Web Scraping Services

Is custom web scraping worth the investment?

Custom is worth it when scraping becomes operational: pricing, risk monitoring, or anything tied to revenue decisions. The ROI comes from reliability features—QA gates, replay, dedupe, and alerting—more than from “getting data once.” If the dataset influences decisions weekly or daily, custom usually reduces total cost by avoiding rebuilds and manual QA. If it’s a one-off research pull, a tool may be enough.

How long does it take to launch a scraping project?

Timelines depend on source complexity and delivery requirements. Stable, low-defence sources can produce an initial sample fast, while JS-heavy or frequently changing sources need more time for resilience and testing. As an aggregated NDA benchmark, many teams see a first validated sample in days to 2 weeks, and production hardening in 2–6 weeks. Ask vendors to map the timeline to phases: scope → sample → QA rules → integration → monitoring.

Can web scraping services scale with business growth?

Scaling isn’t only “more threads.” It’s budgeting proxy usage, controlling crawl depth, preventing duplicates, and keeping data contracts stable as volume grows. A scalable service has queueing, backpressure handling, and monitoring that catches drift early. The best vendors can show how they handle spikes without unpredictable cost explosions. Ask for a capacity plan tied to freshness tiers and per-domain limits.

How do providers ensure data accuracy?

Accuracy requires a shared definition: field-level validation, completeness thresholds, and dedupe rules. Strong providers combine automated checks (null/outlier thresholds), sampling, and human review for edge cases. They should also support replay, so you can reprocess raw captures when rules change. Request a sample QA report format and an escalation path when URR drops.

What happens if a source blocks scraping?

Blocking is expected in production, so the question is detection, transparency, and recovery. Good providers track block signals, adjust pacing, and communicate clearly when data is delayed vs missing. They should also offer lawful alternatives when access becomes high risk: official APIs, partnerships, or removing the source from scope. Your contract should specify reporting, MTTR expectations, and what “partial delivery” looks like.