Most travel products help people book. This AI travel research platform helps people decide—especially when the decision is emotional and time-sensitive: “Will I enjoy the atmosphere of this city, and is it shifting right now?”

We built an AI-powered travel insights platform that turns publicly available, permitted travel discussions plus baseline indicators into auditable signals (scores, trend deltas, evidence references) with an explicit confidence posture. If you’re building an AI platform for travel, the insight-first pattern below keeps destination pages explainable, current, and defensible.

This delivery approach is typical in our travel industry data scraping engagements: multi-source collection, governed transformation, and outputs that are safe to publish under real operational constraints.

NDA note: Client name and some implementation details are intentionally withheld. The constraints, decisions, and delivery patterns are real.

At a glance: what this AI travel research platform proves

- Problem: “Atmosphere” shifts faster than editorial cycles, while free-form GenAI summaries create hallucination and compliance risk.

- Solution: A governed pipeline: collection → cleaning → classification → scoring → trends → confidence → controlled generation.

- Output: Comparable category scores, trend deltas, evidence references, and confidence labels—ready for pages, dashboards, and internal decisioning.

- Business goal: Support partner conversion without overstating certainty.

The Artificial Intelligence (AI) in Travel Market Report 2026 from The Business Research Company puts the sector’s growth at roughly 34% this year alone, with market value rising from nearly $166 billion in 2025 to $222.4 billion in 2026.

The main driver is the pivot toward hyper-personalisation, for example AI concierges such as Expedia’s Romie that learn a traveller’s preferences over time, and it is why the market is projected to exceed $710 billion by 2030. That is a substantial change in how people plan and experience travel.

Who needs this pattern—and what we deliberately did not build

This fits OTAs, travel media groups, and destination organisations that need decision-grade destination understanding without scaling editorial headcount. It also fits product teams building an AI platform for travel, where “destination understanding” (not booking logistics) is the differentiator.

We are not selling a ready-made booking or itinerary SaaS. We implement delivery patterns and pipelines your team can own, measure, and govern.

AI data-driven destination research starts with measurable objects, not generic copy

User-generated content (UGC) reflects lived experience, but it is noisy: slang, sarcasm, spam, coordinated promo bursts, and “trend noise.” We treat it as a signal to analyse, not a paragraph to paraphrase.

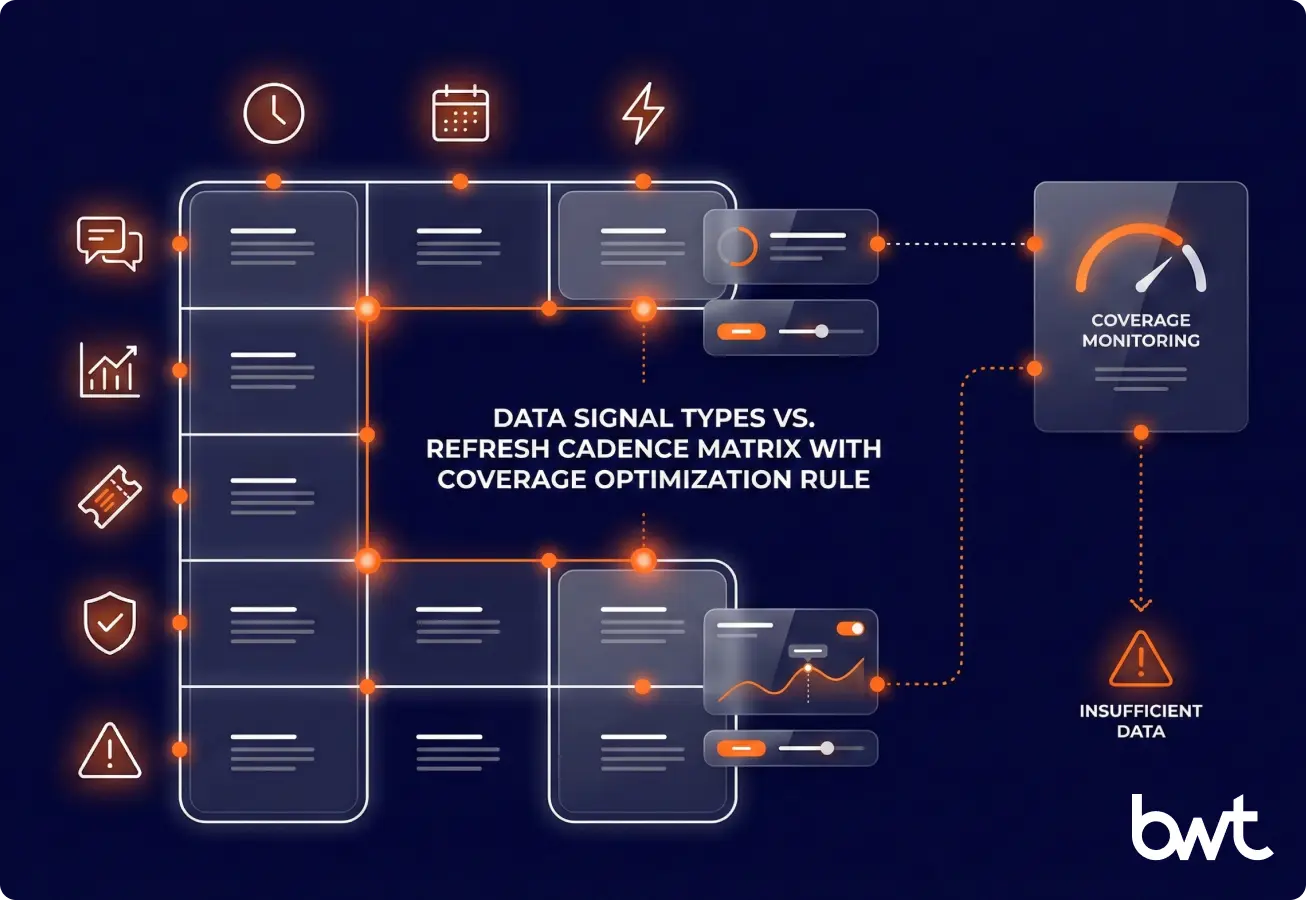

“In travel UGC, no trend ships unless it’s backed by at least two independent source types inside a defined time window; otherwise, the correct output is ‘insufficient data’, not prettier copy.”

— Alex Yudin, Head of Data Engineering & Web Scraping Lead, GroupBWT
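The two-source-type rule in the quote above can be expressed as a gate that runs before any trend is published. This is a minimal sketch, assuming each evidence item carries a hypothetical `source_type` label and a timestamp; the 30-day window and the source-type names are illustrative, not the client’s actual settings.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Evidence:
    source_type: str      # hypothetical labels, e.g. "forum", "review_site", "social"
    observed_at: datetime


def trend_gate(
    evidence: list[Evidence],
    window_end: datetime,
    window: timedelta = timedelta(days=30),  # illustrative window length (assumption)
    min_source_types: int = 2,
) -> str:
    """Return 'publishable' only if enough independent source types fall inside the window."""
    window_start = window_end - window
    in_window = [e for e in evidence if window_start <= e.observed_at <= window_end]
    source_types = {e.source_type for e in in_window}
    if len(source_types) < min_source_types:
        return "insufficient data"
    return "publishable"
```

Note that many items from a single source type still fail the gate: volume alone does not count as independence.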

Definitions

- Insight Object: A versioned record that contains: category, score, trend delta, confidence label, and evidence references (links or short excerpts) with timestamps.

- Trend delta: The change versus a defined historical window.

- Confidence: A rule-based label derived from coverage, consistency, and volatility.

- Coverage: Volume plus source diversity across the selected time window.
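A minimal sketch of how coverage and a trend delta might be computed for one category. It assumes per-item sentiment scores in [-1, 1] and a `source` field; reporting coverage as a (volume, distinct sources) pair and using a mean-based delta are illustrative choices, not the production formulas.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass(frozen=True)
class Item:
    source: str       # platform the item came from
    sentiment: float  # assumed per-item sentiment in [-1.0, 1.0]


def coverage(items: list[Item]) -> tuple[int, int]:
    """Coverage for one time window as (volume, number of distinct sources)."""
    return len(items), len({i.source for i in items})


def trend_delta(current: list[Item], prior: list[Item]) -> Optional[float]:
    """Change in mean sentiment versus the prior window; None when either window is empty."""
    if not current or not prior:
        return None
    return mean(i.sentiment for i in current) - mean(i.sentiment for i in prior)
```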

Insight Object schema (what every claim must carry)

| Field | Meaning | Why it exists |
| --- | --- | --- |
| Category | One of the stable atmosphere buckets | Keeps analysis structured |
| Score | Comparable category score | Enables fast decisions |
| Trend delta | Change vs a prior window | Explains “what changed” |
| Confidence | High / Medium / Low / Insufficient data | Prevents over-claiming |
| Evidence references | Links or short excerpts + timestamps | Makes claims auditable with lower quoting risk |
| Version ID | Scoring/generation version | Enables rollback & governance |
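The schema above maps naturally onto an immutable record. This is a sketch, assuming Python dataclasses; the field names are hypothetical, and the two checks in `__post_init__` are our assumptions about how the “every claim carries evidence” contract could be enforced at construction time.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class Category(Enum):
    GASTRONOMY = "Gastronomy"
    TRANSPORT = "Transport"
    SAFETY = "Safety"
    ATTRACTIONS = "Attractions"
    LEISURE_EVENTS = "Leisure & Events"


class Confidence(Enum):
    HIGH = "High"
    MEDIUM = "Medium"
    LOW = "Low"
    INSUFFICIENT = "Insufficient data"


@dataclass(frozen=True)
class EvidenceRef:
    url: str
    captured_at: datetime
    excerpt: Optional[str] = None  # short, permission-aware excerpt; a link alone is preferred


@dataclass(frozen=True)
class InsightObject:
    category: Category
    score: Optional[float]        # None when confidence is INSUFFICIENT
    trend_delta: Optional[float]
    confidence: Confidence
    evidence: tuple[EvidenceRef, ...]
    version_id: str               # scoring/generation version, used for rollback

    def __post_init__(self) -> None:
        # Assumed rule: a scored claim must carry at least one evidence reference.
        if self.confidence is not Confidence.INSUFFICIENT and not self.evidence:
            raise ValueError("a scored claim needs at least one evidence reference")
        # Assumed rule: "insufficient data" objects never carry a score.
        if self.confidence is Confidence.INSUFFICIENT and self.score is not None:
            raise ValueError("insufficient-data objects must not carry a score")
```

Freezing the record means a change in scoring produces a new object with a new `version_id` rather than silently mutating a published claim.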

What this AI travel research platform produces (and why it’s safer than free-form text)

The fastest way to lose trust isn’t a wrong score—it’s a confident score with no evidence posture.

“In our AI travel research platform, the LLM is a renderer, not a researcher. The model can only write from versioned Insight Objects + a confidence ladder, and it must refuse when coverage, consistency, or volatility falls below threshold—because a quiet drift is more dangerous than a loud outage.”

— Dmytro Naumenko, CTO, GroupBWT

The five atmosphere categories (kept stable across the product)

- Gastronomy

- Transport

- Safety

- Attractions

- Leisure & Events

Practical scoring logic (high level)

| Component | What we measure | Failure mode if skipped |
| --- | --- | --- |
| Relevance | Share of items truly about the category | Noise corrupts scores |
| Sentiment distribution | +/0/- plus volatility (and polarisation where relevant) | Averages become misleading |
| Trend window | Explicit “current vs prior” time window | “Now” becomes “always” |
| Confidence rules | Coverage + consistency gates | You publish persuasive nonsense |
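The relevance and sentiment-distribution components can be sketched as follows. This assumes an upstream relevance flag and per-mention sentiment in [-1, 1]; the ±0.1 neutral band and the `2 * min(pos, neg)` polarisation measure are illustrative assumptions, not the scoring used in production.

```python
from dataclasses import dataclass
from statistics import pstdev
from typing import Optional


@dataclass(frozen=True)
class Mention:
    relevant: bool    # output of an upstream relevance classifier (assumed)
    sentiment: float  # assumed per-mention sentiment in [-1.0, 1.0]


@dataclass(frozen=True)
class CategoryStats:
    relevance_share: float
    positive: float
    neutral: float
    negative: float
    volatility: float    # population std-dev of sentiment among relevant mentions
    polarisation: float  # 1.0 when mentions split evenly between positive and negative


def category_stats(mentions: list[Mention], neutral_band: float = 0.1) -> Optional[CategoryStats]:
    relevant = [m.sentiment for m in mentions if m.relevant]
    if not relevant:
        return None  # nothing to score; downstream confidence becomes "insufficient data"
    n = len(relevant)
    pos = sum(s > neutral_band for s in relevant) / n
    neg = sum(s < -neutral_band for s in relevant) / n
    neu = sum(abs(s) <= neutral_band for s in relevant) / n
    return CategoryStats(
        relevance_share=n / len(mentions),
        positive=pos,
        neutral=neu,
        negative=neg,
        volatility=pstdev(relevant),
        polarisation=2 * min(pos, neg),
    )
```

With relevant sentiments of 0.8, -0.6 and 0.0, the mean is close to zero and looks “neutral”, while the distribution shows a split audience; that is the failure mode the table attributes to plain averages.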

AI-powered destination analysis is only credible when confidence is visible

Trends are actionable only when every movement has (1) a time window, (2) a minimum evidence threshold, and (3) a confidence label. If one is missing, you’re doing narrative, not analysis.
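The three conditions above can be checked mechanically before a trend reaches a page. A minimal sketch, assuming a hypothetical `TrendClaim` record; the minimum of 20 evidence items is a placeholder threshold, not a recommended value.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

CONFIDENCE_LABELS = {"High", "Medium", "Low", "Insufficient data"}


@dataclass(frozen=True)
class TrendClaim:
    delta: float
    window_start: Optional[date]
    window_end: Optional[date]
    evidence_count: int
    confidence: Optional[str]


def missing_requirements(claim: TrendClaim, min_evidence: int = 20) -> list[str]:
    """List which of the three requirements a trend claim fails; an empty list means actionable."""
    problems = []
    if claim.window_start is None or claim.window_end is None or claim.window_start >= claim.window_end:
        problems.append("time window")
    if claim.evidence_count < min_evidence:  # placeholder threshold (assumption)
        problems.append("evidence threshold")
    if claim.confidence not in CONFIDENCE_LABELS:
        problems.append("confidence label")
    return problems
```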

Why a travel intelligence platform must be governed end-to-end

A destination product fails in production when governance is bolted on later. We designed the sourcing and pipeline layer to degrade safely instead of silently drifting.

- Collection policy: We design a compliance-first, multi-source portfolio using data collection solutions and source redundancy so a single platform change doesn’t collapse coverage.

- Extraction + normalisation: We standardise content and metadata into a stable schema using our data extraction services, then validate it (schema checks, language checks, city mapping).

- Aggregation: We unify multi-source signals with data aggregation services so scores remain comparable over time.

- End-to-end feeds: When teams need “sourcing → enrichment → delivery” as a single supply chain, we implement monitorable, auditable end-to-end content aggregation solutions.

When APIs and licensed feeds are limited, we use compliant web scraping services with rate limits, ToS checks, and operational blocklists.
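Rate limits, robots checks, and blocklists can be combined into a single gate that every request passes through. This is a sketch using the standard library’s `urllib.robotparser`; the `FetchGate` class, the 2-second default interval, and the fail-closed rule for hosts without a stored robots.txt are our assumptions. Terms-of-service review remains a human step and is not modelled here.

```python
import time
import urllib.robotparser
from typing import Callable
from urllib.parse import urlparse


class FetchGate:
    """Per-host gate: operational blocklist, robots.txt rules, and a minimum gap between requests."""

    def __init__(
        self,
        user_agent: str,
        robots_txt_by_host: dict[str, str],
        blocked_hosts: set[str],
        min_interval_s: float = 2.0,  # illustrative default (assumption)
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.user_agent = user_agent
        self.blocked_hosts = blocked_hosts
        self.min_interval_s = min_interval_s
        self._clock = clock
        self._next_free: dict[str, float] = {}
        self._robots: dict[str, urllib.robotparser.RobotFileParser] = {}
        for host, text in robots_txt_by_host.items():
            parser = urllib.robotparser.RobotFileParser()
            parser.parse(text.splitlines())
            self._robots[host] = parser

    def allowed(self, url: str) -> bool:
        host = urlparse(url).netloc
        if host in self.blocked_hosts:
            return False
        robots = self._robots.get(host)
        if robots is None:
            return False  # fail closed: no robots.txt on record for this host
        return robots.can_fetch(self.user_agent, url)

    def reserve_slot(self, url: str) -> float:
        """Reserve the next request slot for the URL's host and return seconds to wait first."""
        host = urlparse(url).netloc
        robots = self._robots.get(host)
        crawl_delay = robots.crawl_delay(self.user_agent) if robots else None
        # Honour a site's Crawl-delay when it is stricter than our own minimum.
        interval = max(self.min_interval_s, float(crawl_delay or 0))
        now = self._clock()
        start = max(now, self._next_free.get(host, now))
        self._next_free[host] = start + interval
        return start - now
```

Returning a wait time instead of sleeping keeps the gate testable and lets an async scheduler decide how to wait.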

Compliance boundaries we enforce (because “public” is not the same as “permitted”)

- Ingest only publicly available or licensed sources, and honour site terms, robots controls, and takedown requests.

- Minimise stored text (prefer links + timestamps; keep excerpts short and permission-aware).

- Apply retention controls and governance workflows aligned to applicable privacy regimes.
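Text minimisation and retention can be applied at the moment evidence is stored. A minimal sketch, assuming a hypothetical 160-character excerpt cap and a 180-day retention period; real values depend on source licences and the privacy regimes that apply.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

MAX_EXCERPT_CHARS = 160                  # hypothetical cap; tune per source licence
DEFAULT_RETENTION = timedelta(days=180)  # hypothetical; set per applicable privacy regime


@dataclass(frozen=True)
class StoredEvidence:
    url: str
    captured_at: datetime
    excerpt: Optional[str]
    delete_after: datetime


def to_stored_evidence(
    url: str,
    text: str,
    captured_at: datetime,
    excerpt_permitted: bool,
    retention: timedelta = DEFAULT_RETENTION,
) -> StoredEvidence:
    """Keep the link and timestamp; keep a short excerpt only where the source permits it."""
    excerpt = None
    if excerpt_permitted and text:
        excerpt = text.strip()[:MAX_EXCERPT_CHARS]
    return StoredEvidence(url, captured_at, excerpt, captured_at + retention)


def purge_expired(records: list[StoredEvidence], now: datetime) -> list[StoredEvidence]:
    """Drop evidence whose retention period has ended."""
    return [r for r in records if r.delete_after > now]
```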

Refresh policy is a product decision, not an engineering detail

Different signals update at different rates; refreshing everything daily is often the fastest way to pay more for worse signal quality. We version refresh policies and monitor coverage so pages can output “insufficient data” rather than guessing.
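A versioned refresh policy can be a small, explicit object rather than a cron schedule buried in infrastructure. This sketch assumes hypothetical signal names, intervals, a minimum of 30 usable items, and a “stale after twice the interval” rule; all of these are placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class RefreshPolicy:
    version: str
    intervals: dict[str, timedelta]  # signal name -> how often to recompute

    def is_due(self, signal: str, last_refreshed: datetime, now: datetime) -> bool:
        return now - last_refreshed >= self.intervals[signal]

    def page_state(
        self,
        signal: str,
        last_refreshed: datetime,
        now: datetime,
        usable_items: int,
        min_items: int = 30,  # placeholder coverage floor (assumption)
    ) -> str:
        # Too little coverage, or data older than twice its interval, degrades the page.
        if usable_items < min_items or now - last_refreshed > 2 * self.intervals[signal]:
            return "insufficient data"
        return "ok"
```

Because the policy carries a `version`, a change such as “refresh events daily instead of weekly” can be reviewed and rolled back like any scoring change.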

The NLP pipeline is the travel intelligence engine behind the product

NLP works in production when it’s observable, repeatable, and boring (in the best way). This reliability work maps directly to our NLP solutions practice and is often supported by broader machine learning (ML) consulting services when teams need governance, evaluation, and iteration loops.

Production-oriented pipeline (7 steps)

- Collect text + metadata (time, source type, city mapping).

- Deduplicate and normalise.

- Detect language.

- Filter noise (spam, promos, irrelevant chatter, bot bursts).

- Assign categories (multi-label).

- Score sentiment per category.

- Aggregate into score + trend delta + confidence + evidence references.
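The seven steps above can be sketched end to end in a few dozen lines. Everything model-like here is a toy stand-in and an assumption: language is taken from metadata supplied by an upstream detector, categories come from keyword rules, sentiment from a tiny lexicon, and the noise filter only catches promo phrases (bot bursts are handled separately). Step 6 reuses post-level polarity for every category a post touches, whereas a real system would score each category separately. Step 7 stops at the raw aggregates that feed scoring and confidence.

```python
import hashlib
import re
from collections import defaultdict
from dataclasses import dataclass

# Illustrative stand-ins for trained models: keyword rules and a toy lexicon.
CATEGORY_KEYWORDS = {
    "Gastronomy": {"food", "restaurant", "cafe", "dinner"},
    "Transport": {"metro", "bus", "taxi", "tram"},
    "Safety": {"safe", "unsafe", "pickpocket", "police"},
}
POSITIVE = {"great", "safe", "delicious", "easy", "friendly"}
NEGATIVE = {"awful", "unsafe", "crowded", "dirty", "late"}
PROMO_PATTERNS = re.compile(r"(promo code|discount|click here|dm me)", re.IGNORECASE)


@dataclass
class Post:
    text: str
    source_type: str
    city: str
    lang: str  # assumed to come from an upstream language detector


def normalise(text: str) -> str:
    return re.sub(r"\s+", " ", text.lower()).strip()


def run_pipeline(posts: list[Post], target_lang: str = "en") -> dict[str, dict]:
    seen: set[str] = set()
    per_category: dict[str, list[int]] = defaultdict(list)
    sources: dict[str, set[str]] = defaultdict(set)
    for post in posts:                               # 1. collect text + metadata
        text = normalise(post.text)                  # 2. normalise ...
        digest = hashlib.sha256(text.encode()).hexdigest()
        if digest in seen:                           #    ... and deduplicate
            continue
        seen.add(digest)
        if post.lang != target_lang:                 # 3. language gate
            continue
        if PROMO_PATTERNS.search(text):              # 4. noise filter (promo phrases only here)
            continue
        words = set(re.findall(r"[a-z]+", text))
        categories = [c for c, kw in CATEGORY_KEYWORDS.items() if words & kw]  # 5. multi-label
        polarity = len(words & POSITIVE) - len(words & NEGATIVE)               # 6. toy sentiment
        for category in categories:
            per_category[category].append((polarity > 0) - (polarity < 0))
            sources[category].add(post.source_type)
    result = {}
    for category, labels in per_category.items():    # 7. aggregate raw inputs for scoring
        result[category] = {
            "volume": len(labels),
            "net_sentiment": sum(labels) / len(labels),
            "source_types": len(sources[category]),
        }
    return result
```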

GenAI is used for controlled narration, not for “finding the truth”

We do not let the model browse, invent, or fill gaps. In this AI-powered travel insights platform, the generation layer is deliberately constrained: the model writes only from curated Insight Objects and must follow confidence-gated language rules.

This delivery pattern sits within our AI development services and is commonly implemented through a dedicated generative AI development services track when teams need repeatable, governance-first content generation.
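The constrained generation layer described above starts with controlling what the model is allowed to see. This sketch assembles the only context a model call would receive and skips the call entirely when nothing usable exists; the payload shape, the rule wording, and the `build_generation_request` name are hypothetical, and the model call itself is out of scope.

```python
import json
from typing import Optional

# Confidence level -> phrase the model must use (mirrors the language ladder; assumed wording).
ALLOWED_PHRASES = {
    "High": "Consistently reported",
    "Medium": "Often mentioned recently",
    "Low": "Some signals suggest",
}


def build_generation_request(city: str, insight_objects: list[dict]) -> Optional[str]:
    """Return the model's entire context, or None to render 'insufficient data' without a model call."""
    usable = [o for o in insight_objects if o.get("confidence") in ALLOWED_PHRASES]
    if not usable:
        return None
    rules = [
        "Write only from INSIGHT_OBJECTS; do not add facts, places, or numbers.",
        "Open each claim with the phrase mapped to its confidence level in PHRASES.",
        "Cite the version_id of every object you use.",
        "Say nothing about categories that are not listed.",
    ]
    payload = {"city": city, "rules": rules, "phrases": ALLOWED_PHRASES, "insight_objects": usable}
    return json.dumps(payload, ensure_ascii=False, indent=2)
```

Dropping “Insufficient data” objects before the call means the model cannot narrate them at all; the page renders the fixed “insufficient data” text for those categories instead.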

Page template: ETT (Essentials / Trends / Tips)

- Essentials: Stable characteristics anchored in baselines and long-term aggregates

- Trends: Recent movement with explicit time windows and confidence labels

- Tips: Actionable guidance grounded in repeated patterns, not anecdotes

Confidence-gated language ladder (prevents overclaiming)

| Confidence level | Allowed phrasing | Disallowed phrasing |
| --- | --- | --- |
| High | “Consistently reported…” | “Always / guaranteed…” |
| Medium | “Often mentioned recently…” | “Definitively shifted…” |
| Low | “Some signals suggest…” | “Clearly improving/worsening…” |
| Insufficient data | “Insufficient data to assess…” | Any narrative conclusion |
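The ladder can also run as a post-generation guardrail that rejects sentences before publication. This is a sketch under two assumptions: the allowed phrasings act as required sentence openers, and phrasing banned at a higher level stays banned at every lower level. The regexes are illustrative and far from exhaustive.

```python
import re

LEVELS = ["High", "Medium", "Low", "Insufficient data"]
OPENERS = {
    "High": "Consistently reported",
    "Medium": "Often mentioned recently",
    "Low": "Some signals suggest",
    "Insufficient data": "Insufficient data to assess",
}
# Patterns introduced at each level; they remain banned at every lower level.
BANNED = {
    "High": [r"\balways\b", r"\bguaranteed\b"],
    "Medium": [r"\bdefinitively\b"],
    "Low": [r"\bclearly\b"],
}


def banned_patterns(confidence: str) -> list[str]:
    patterns: list[str] = []
    for level in LEVELS[: LEVELS.index(confidence) + 1]:
        patterns.extend(BANNED.get(level, []))
    return patterns


def check_sentence(sentence: str, confidence: str) -> list[str]:
    """Return rule violations for one generated sentence; an empty list means it may ship."""
    problems = []
    if not sentence.startswith(OPENERS[confidence]):
        problems.append(f"must open with '{OPENERS[confidence]}'")
    for pattern in banned_patterns(confidence):
        if re.search(pattern, sentence, flags=re.IGNORECASE):
            problems.append(f"disallowed phrasing: {pattern}")
    # "Insufficient data" allows the bare statement only, with no narrative conclusion.
    if confidence == "Insufficient data" and not re.fullmatch(r"Insufficient data to assess [A-Za-z &]+\.", sentence):
        problems.append("no narrative conclusion allowed")
    return problems
```

A blocked sentence counts toward the “% outputs blocked by rules” metric rather than being silently rewritten.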

From insights to action: the AI travel coordination platform layer

Insight is valuable only when it changes a decision. We treat partner conversion as a UX constraint—not a reason to weaken evidence rules.

Two principles kept trust intact:

- The scoring layer is governed by evidence and confidence, not by conversion pressure.

- When signals are negative or thin, the page degrades gracefully (weakened language or “insufficient data”) instead of smoothing the edges.

Monitoring and acceptance criteria prevent “confident nonsense” at scale

A system is only trustworthy if leaders can govern it without reading logs.

What we monitor (business + pipeline health)

| Area | What we track | Why it matters |
| --- | --- | --- |
| Business outcomes | Engagement and partner click-through (high level) | Proves utility |
| Coverage & freshness | Ingested / usable / scored volumes | Prevents silent staleness |
| Confidence posture | Low/insufficient confidence incidence | Flags risk & gaps |
| Noise & fraud | Spam/bot burst indicators | Protects score integrity |
| Generation guardrails | % outputs blocked by rules | Prevents hallucinations |
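The pipeline-health rows of the table can be reduced to a handful of ratios with explicit thresholds, so leaders see a breach flag rather than raw logs. A sketch, assuming hypothetical `RunStats` counters per refresh run; the threshold values in any deployment would be set during acceptance, not taken from this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunStats:
    ingested: int
    usable: int                       # after dedup, language, and noise filters
    scored: int                       # items that reached a category score
    objects_total: int                # Insight Objects produced in this run
    objects_low_or_insufficient: int
    generations_total: int
    generations_blocked: int          # outputs rejected by guardrail rules


def ratio(part: int, whole: int) -> float:
    return part / whole if whole else 0.0


def health_report(s: RunStats, thresholds: dict[str, float]) -> dict[str, tuple[float, bool]]:
    """Map each metric to (value, breached?)."""
    metrics = {
        "usable_share": ratio(s.usable, s.ingested),
        "scored_share": ratio(s.scored, s.usable),
        "low_confidence_share": ratio(s.objects_low_or_insufficient, s.objects_total),
        "blocked_generation_share": ratio(s.generations_blocked, s.generations_total),
    }
    # Coverage metrics breach below their floor; risk metrics breach above their ceiling.
    floors = {"usable_share", "scored_share"}
    return {
        name: (value, value < thresholds[name] if name in floors else value > thresholds[name])
        for name, value in metrics.items()
    }
```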

“Model choice is rarely the hard part; the hard part is the governance contract. We start with Buyer‑Ready Acceptance Criteria—evidence references, time windows, visible confidence, monitoring, and freeze controls—because if you can’t audit a destination claim, you can’t scale it without manufacturing ‘confidence’ you didn’t earn.”

— Oleg Boyko, COO, GroupBWT

Buyer-ready acceptance criteria (12 checks)

- Evidence references exist for every score/trend claim.

- Every trend has a time window and a minimum evidence threshold.

- Confidence labels are visible and tied to explicit rules.

- The system can say “insufficient data” (and stop there).

- Scoring logic is versioned with rollback capability.

- There is a “freeze city / freeze category” control for anomalies.

- Noise filtering is explicit (spam, promos, irrelevant mentions, bot bursts).

- Multi-label categorisation is supported.

- Monitoring covers business KPIs and pipeline health.

- Unit economics are measurable (cost per refresh / per volume processed).

- Generation writes only from curated Insight Objects.

- Partner constraints are designed into UX without fake certainty.
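Two of the checks above, versioned scoring with rollback and the freeze control, need very little machinery. A minimal in-memory sketch with a hypothetical `ScoringRegistry`; a production version would persist this state and log who froze what and why.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScoringRegistry:
    """Tracks the active scoring version and any frozen (city, category) pairs."""
    versions: list[str] = field(default_factory=list)
    freezes: dict[tuple[str, Optional[str]], str] = field(default_factory=dict)  # key -> reason

    @property
    def active(self) -> str:
        return self.versions[-1]

    def deploy(self, version_id: str) -> None:
        self.versions.append(version_id)

    def rollback(self) -> str:
        if len(self.versions) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.versions.pop()
        return self.active

    def freeze(self, city: str, category: Optional[str], reason: str) -> None:
        """category=None freezes every category for the city."""
        self.freezes[(city, category)] = reason

    def is_frozen(self, city: str, category: str) -> bool:
        # A frozen pair keeps serving its last published object; no new scores are published.
        return (city, None) in self.freezes or (city, category) in self.freezes
```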

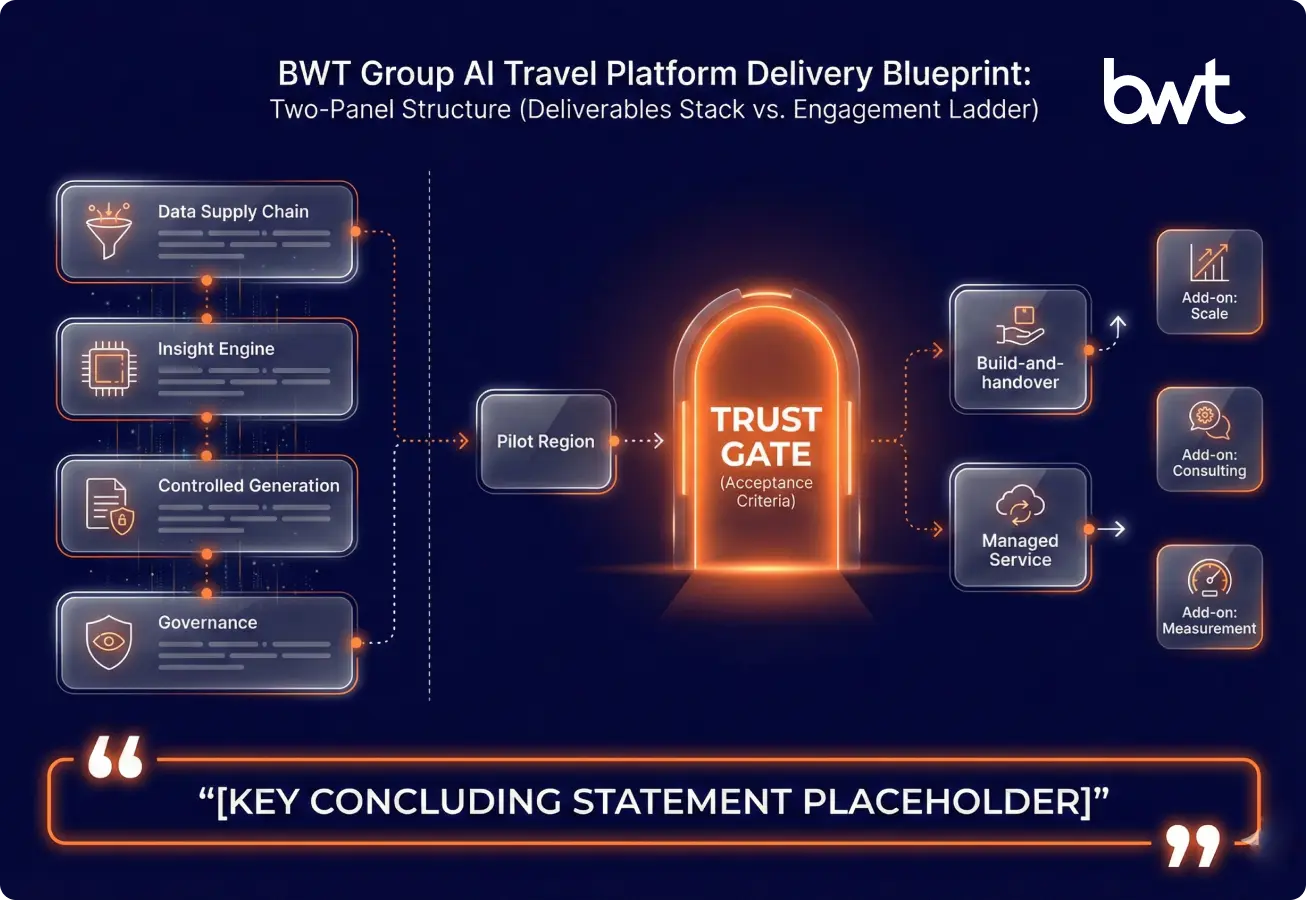

What GroupBWT delivered (and how to engage)

We don’t “add AI” on top of city pages. We build a governed supply chain that makes destination understanding safe to scale.

- Scale and freshness patterns (where volume requires it) are supported by our big data implementation practice.

- End-to-end delivery as an AI-powered travel insights platform: scoring, controlled generation, monitoring, and runbooks.

- Advisory support through our AI consulting services, and measurement design through data science consulting.

If you’re evaluating AI-powered travel platform development, we can deliver this as build-and-handover or as a managed service, depending on governance and refresh requirements. For teams that need an AI platform for travel but must prove trust before launch, we start with a pilot region and explicit acceptance criteria.

Bottom line

This case shows how an AI travel research platform can quantify “city atmosphere” responsibly: analyse UGC as a signal, compute versioned Insight Objects with confidence posture, and generate narratives only from curated objects.

A travel intelligence platform built this way reduces hallucination risk, supports partner conversion, and stays governable as sources and trends change. An AI-powered travel insights platform is only as credible as its evidence references, confidence rules, and monitoring—insist on those artefacts before you scale.

Next step

Browse our work to compare architectures, governance approaches, and engagement models.

For a travel example focused on measurable efficiency outcomes, see how a travel company optimised its Google Ads spending with our help.

FAQ

What makes this different from an AI trip planner or a generic “LLM city summary”?

Trip planners generate itineraries. This pattern produces decision-grade destination understanding: category scores, trend deltas, and a visible confidence posture tied to evidence references. If the evidence is thin, the correct output is “insufficient data,” not a polished paragraph.

What is an “Insight Object” in plain English?

In an AI travel research platform, an Insight Object is the structured record a page is allowed to use: category → score → time window → confidence label → evidence references (with timestamps) → version ID. If a sentence can’t be tied back to a versioned object, it shouldn’t be published.

How do you prevent spam, promos, and bot bursts from distorting “atmosphere”?

We treat noise filtering as a first-class pipeline step: deduplication, relevance checks, and explicit detection of spam/promo/bot patterns happen before scoring. We also monitor anomalies (sudden volume spikes, repetitive phrasing, source health drops) and can freeze a city/category when signals look manipulated.
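The two anomaly signals named above, sudden volume spikes and repetitive phrasing, can be sketched as simple statistical checks feeding a freeze decision. The 3-sigma threshold, the 7-day minimum baseline, the doubling fallback for a flat baseline, and the 20% repetition ceiling are all illustrative assumptions.

```python
from collections import Counter
from statistics import mean, pstdev


def volume_spike(daily_counts: list[int], z_threshold: float = 3.0) -> bool:
    """Flag the latest day if it sits z_threshold std-devs above the trailing baseline."""
    if len(daily_counts) < 8:
        return False  # fewer than 7 baseline days: not enough history to judge
    history, today = daily_counts[:-1], daily_counts[-1]
    mu, sigma = mean(history), pstdev(history)
    if sigma == 0:
        return today > 2 * mu  # flat baseline: simple doubling rule (assumption)
    return (today - mu) / sigma >= z_threshold


def repetitive_phrasing(texts: list[str], max_share: float = 0.2) -> bool:
    """Flag when one whitespace- and case-normalised text exceeds max_share of the batch."""
    if not texts:
        return False
    counts = Counter(" ".join(t.lower().split()) for t in texts)
    top = counts.most_common(1)[0][1]
    return top / len(texts) > max_share


def should_freeze(daily_counts: list[int], recent_texts: list[str]) -> bool:
    return volume_spike(daily_counts) or repetitive_phrasing(recent_texts)
```

Exact-match repetition is the crudest signal; near-duplicate detection (for example, shingling) would catch lightly edited promo copy as well.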

How do you reduce GenAI hallucinations in city narratives?

We don’t ask a model to “figure out the truth.” We constrain generation to curated Insight Objects and enforce confidence-gated language, so claim strength matches evidence strength. When confidence is low, language becomes conditional—or stops at “insufficient data.”