Introduction

Mixpanel installed. GA connected. A Looker dashboard from a hackathon three months back — opened twice, bookmarked by nobody. And every Monday, the product call still comes down to whoever argues loudest in Slack.

That’s a decision-making problem wearing a data costume.

Only 24% of companies act on the data they collect, according to DemandSage. The other 76% pay for dashboards and decide by gut anyway. The gap isn’t a lack of access to numbers — it’s the habit of using them before the runway burns down.

Data analytics for startups solves a narrower problem than most founders assume. The real work is building the muscle — and the infrastructure — that converts raw metrics into evidence you can act on this week. Tooling has never been cheaper. What actually kills teams: not knowing what to measure, investing in infrastructure too late (or too early), and accumulating technical debt that compounds faster than MRR.

This guide covers metrics, infrastructure, tool selection, and the traps that swallow otherwise sharp teams. A practical roadmap for building a data strategy for startup growth — pre-seed through Series B.

Data Engineering: From Raw Web to Data Product

We develop and manage custom data solutions, powered by proven experts, to ensure the fastest delivery of structured data from sources of any size and complexity.

We offer:

- Custom Web Scraping & Development

- 15+ Years of Engineering Expertise

- AI-Driven Data Processing & Enrichment

Why Data Analytics Is Critical for Startups

You’re operating with limited resources, compressed timelines, and decisions that carry consequences way out of proportion to the information you had when you made them. That’s the startup condition. Data analytics for startups gives you a structured way to reduce that uncertainty — and direct resources toward activities that actually generate returns instead of activities that feel productive.

Deloitte’s 2026 State of AI in the Enterprise report puts a number on the gap: 66% of organizations report productivity gains from analytics, but only 20% turned those gains into revenue growth. Collecting data is table stakes. The differentiator is acting on it with discipline and speed, before competitors do.

Making Data-Driven Product Decisions

Product decisions at early-stage companies default to founder instinct. Intuition matters — but it’s not enough on its own, and most teams learn that the hard way.

In our delivery work, we see the same pattern repeat. A startup tracks its onboarding funnel. Discovers 60% of trial users bail at step three. Not because the product lacks value — the setup flow just confuses people. Without analytics, the team burns months shipping shiny new features while the actual bottleneck sits there in plain sight. Invisible. Expensive.

Tools like Mixpanel or PostHog surface that friction in days. Not months. Days. Startups using MVP development services often instrument analytics from the first build, specifically to catch these blind spots before they calcify into permanent user behavior.

Reducing Burn Through Smarter Metrics

Cash runway is oxygen. You either measure how it’s being consumed or you’re flying blind until it runs out.

Tracking unit economics, namely Customer Acquisition Cost (CAC), Customer Lifetime Value (LTV), and the LTV-to-CAC ratio, shows you exactly what each customer costs versus what they’ll generate. No guessing. No “we think channel X is working.”

What you should monitor:

- Monthly Burn Rate — total cash out per month, no exceptions

- CAC by Channel — what it actually costs to acquire a customer from each source (not what the platform tells you)

- LTV/CAC Ratio — generally, below 3:1 signals a problem (though context matters — business model, stage, payback period, and gross margin all factor in)

- Payback Period — time to recover acquisition costs

- Churn Rate — customers lost per period, tracked cohort by cohort

Consistent monitoring lets you kill unprofitable channels early, double down on what works, and extend runway without raising additional capital. Most teams we work with discover at least one channel that’s burning cash for leads that churn within 60 days. Finding it is a spreadsheet exercise. Acting on it is a discipline exercise.
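The unit-economics metrics listed above can be sketched in a few lines of Python. This is a minimal illustration, assuming a simplified LTV formula (monthly gross profit per account divided by monthly churn); the `ChannelStats` fields, channel names, and all numbers are hypothetical and exist only to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class ChannelStats:
    """Hypothetical monthly inputs for one acquisition channel."""
    spend: float          # total acquisition spend for the month
    new_customers: int    # customers acquired from this channel
    arpa: float           # average monthly revenue per account
    gross_margin: float   # fraction between 0 and 1
    monthly_churn: float  # fraction of customers lost per month


def cac(s: ChannelStats) -> float:
    """Customer Acquisition Cost: spend divided by customers acquired."""
    return s.spend / s.new_customers


def ltv(s: ChannelStats) -> float:
    """Simplified LTV: monthly gross profit per account times expected lifetime (1 / churn)."""
    return s.arpa * s.gross_margin / s.monthly_churn


def ltv_to_cac(s: ChannelStats) -> float:
    return ltv(s) / cac(s)


def payback_months(s: ChannelStats) -> float:
    """Months of gross profit needed to recover the acquisition cost."""
    return cac(s) / (s.arpa * s.gross_margin)


# Illustrative numbers only, not taken from any real engagement.
channels = {
    "content": ChannelStats(12_000, 40, 100.0, 0.8, 0.04),
    "paid_social": ChannelStats(9_000, 30, 100.0, 0.8, 0.20),
}

for name, stats in channels.items():
    # The 3:1 threshold mirrors the rule of thumb above; context still matters.
    flag = "review" if ltv_to_cac(stats) < 3 else "ok"
    print(f"{name}: CAC={cac(stats):.0f} LTV={ltv(stats):.0f} "
          f"LTV/CAC={ltv_to_cac(stats):.1f} payback={payback_months(stats):.1f}mo [{flag}]")
```

Both channels have the same CAC in this example; the difference only shows up once churn is factored into LTV, which is why CAC alone can make a weak channel look healthy.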

Iterating Faster Than the Competition

The existential reason to invest in analytics early comes down to one word: speed. A competitor who wields better data iterates at twice your pace. They catch problems faster, patch them within days, and stack those marginal gains week after week until the distance between you becomes uncatchable. Six months of that kind of compounding creates a moat no pitch deck can bridge. The startup that falls behind on data didn’t lack good ideas — somebody else just proved theirs quicker and shipped before the window closed.

Teams that grasp the importance of data analytics in startups, in 2026 and beyond, tend to wire every product call, every campaign tweak, every pricing experiment into a tight feedback loop. Decisions that used to marinate for weeks happen in days. That kind of tempo is structural: once you have it, competitors find it brutally hard to replicate.

A side benefit worth mentioning: investors notice. MoM revenue growth, Net Revenue Retention (NRR), activation rates, engagement depth — these face scrutiny in every pitch. Teams that track them with discipline signal operational maturity. That reduces perceived risk more than any pitch deck ever will. But building analytics to impress investors is building the wrong thing for the wrong reason. Build it to move faster. The fundraising outcomes follow.

What Data Analytics Means for Early-Stage Companies

So what does data analysis for startups actually look like in practice — before you get anywhere near tools and frameworks? The answer might catch you off guard, because the challenges here bear almost zero resemblance to enterprise analytics.

Startup-Specific Analytics Challenges

Startup data analysis presents problems no enterprise playbook was designed to address:

- Low data volumes. Hundreds of users, not millions. Reaching statistical significance takes patience and creative test design — or you make wrong calls on insufficient evidence.

- Metrics that shift constantly. Every pivot changes what you should be measuring. The dashboard you built last month might be tracking a feature that no longer exists.

- No dedicated data team. Your product engineer doubles as analyst, dashboard builder, and data janitor. That’s normal. But it’s also a liability if nobody owns the tracking plan.

- Tool sprawl. Six platforms, none talking to each other, data silos growing quietly in every direction.

- Privacy compliance from day one. GDPR, CCPA, sector-specific rules — they all apply to you right now, regardless of headcount or revenue. Putting this off until “later” is how startups end up scrambling before a Series A audit.
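To make the low-volume problem concrete, here is a rough sketch of how many users an A/B test needs per variant. It assumes the standard normal-approximation formula for comparing two conversion rates; the function name and the example rates are illustrative, not figures from this article.

```python
from math import ceil
from statistics import NormalDist


def users_per_variant(baseline: float, target: float,
                      alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate sample size per variant for a two-sided two-proportion test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = baseline * (1 - baseline) + target * (1 - target)
    return ceil((z_alpha + z_beta) ** 2 * variance / (target - baseline) ** 2)


# Detecting a small lift needs far more users than detecting a large one.
small_lift = users_per_variant(0.10, 0.12)  # 10% -> 12% conversion
large_lift = users_per_variant(0.10, 0.20)  # 10% -> 20% conversion
print(small_lift, large_lift)
```

With only hundreds of users, small lifts are effectively undetectable, which is one reason early teams often test bolder changes or lean on qualitative evidence.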

Industry surveys keep surfacing a strange paradox: companies rate their own analytics strategy as strong, yet freely admit that infrastructure, data quality, and talent all lag behind. For startups, the disconnect runs even deeper. Closing it early creates one of the few structural advantages a small team can actually build.

Analytics vs. Data Science: When Each Matters

These terms get conflated constantly. They shouldn’t be. Analytics — dashboards, reporting, descriptive statistics — delivers value from day one. Works for non-technical teams. Costs almost nothing at the free tier. Data science — predictive models, machine learning, statistical modeling — requires more data volume, more technical depth, and more time to pay off.

Most early-stage startups see the highest ROI per dollar from analytics. Data science becomes relevant once you have clean pipelines, meaningful volume, and concrete use cases for prediction — typically around Series A or later. A data science consulting firm can assess whether you’re actually ready for that shift or just want to look sophisticated in pitch decks.

The Data Debt Warning: Startups often collect data ‘any which way’ for a year, thinking they’ll fix it when they hire a Data Scientist. This creates compounding data debt, the kind that’s expensive to unwind and easy to underestimate. When you eventually move toward machine learning (ML) consulting solutions, you’ll discover your historical data is too ‘noisy’ to be useful. Quality at the analytics stage isn’t just for current dashboards; it’s the ‘clean fuel’ required for the AI-driven growth you’ll need to survive in 2026.

MVP vs Scalable Data Infrastructure

Quick analytics setup or scalable infrastructure from the start? Neither extreme. The only answer that works in practice is a phased approach. Start scrappy — GA4, Mixpanel free tier, SQL against production. Then transition to a more robust stack — potentially through custom software development — as product-market fit starts to crystallize.

One principle you absolutely cannot skip: keep your event taxonomy clean and your naming conventions consistent from the very first tracked event. This costs almost nothing upfront. Ignoring it costs weeks of engineering time later when you’re trying to reconcile five different event names for the same user action.

“I have reviewed codebases where the same user action was tracked as signup, user_signup, sign_up, and create_account — all by the same team, over 14 months. Cleaning that up took longer than building the original tracking. Don’t fall for the ’10-minute naming convention’ myth. In reality, Event Drift — when your product evolves faster than your documentation — kills more analytics stacks than bad tooling. You don’t just need a naming convention; you need a Tracking Plan. It’s a living document that serves as the single source of truth between product and engineering. Without it, your Mixpanel will inevitably devolve into a graveyard of user_signup, Sign_Up, and signup_complete.”

— Dmytro Naumenko, CTO at GroupBWT

Case study — Real Estate Tech (Pre-Seed, 2025)

The problem: real-time mortgage affordability scores across multiple loan types for tens of thousands of MLS listings — on a near-zero budget.

The solution: deliberately minimal MVP stack — daily scraping for rate data, caching layer for API limits, calculator engine per loan type.

The result: 30,000+ properties scored in real time, infrastructure under $50/month — a 90%+ reduction from initial cloud estimates.

No warehouse, no data team: just smart architecture choices. The engagement continues in 2026 across new markets.


Core Types of Data Analytics for Startups

Want to understand how important data analytics is in startups? Map each analytics category to the business outcome it protects or grows:

| Analytics Type | Key Metrics | What Breaks Without It |
| --- | --- | --- |
| Product | DAU/MAU, retention curves, feature adoption | You ship features nobody uses. Churn stays invisible until it’s terminal |
| Marketing | CAC, conversion rates, channel attribution | You burn cash on channels that look good on last-click but deliver churning leads |
| Financial | MRR, ARR, burn rate, LTV, gross margin | You run out of runway without understanding why |
| Operational | Support response, deploy frequency | Inefficiency compounds silently until it’s 30% of your burn |

Product analytics matters most at the early stage. Cohort-based retention curves reveal what’s actually happening beneath the surface-level numbers. See that curve flattening at 30, 60, 90 days? Good — something sticks. A cliff after week one? Hold off on scaling acquisition and fix the leaky bucket first. PostHog, being open-source and self-hosted, gives early teams complete ownership over their event data — no vendor lock-in, no surprise pricing jumps.
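A cohort retention table like the one described above can be computed with plain Python. This sketch assumes monthly signup cohorts and an "unbounded" retention definition (a user counts as retained at day N if they were active N or more days after signup); the function name, input shapes, and sample data are all hypothetical.

```python
from collections import defaultdict
from datetime import date


def retention_by_cohort(signups, activity, checkpoints=(7, 30)):
    """signups: {user_id: signup date}; activity: iterable of (user_id, activity date)."""
    # Track the latest day offset (relative to signup) at which each user was seen.
    last_seen_offset = defaultdict(int)
    for user_id, day in activity:
        if user_id in signups:
            offset = (day - signups[user_id]).days
            last_seen_offset[user_id] = max(last_seen_offset[user_id], offset)

    # Group users into monthly signup cohorts.
    cohorts = defaultdict(list)
    for user_id, signup_day in signups.items():
        cohorts[(signup_day.year, signup_day.month)].append(user_id)

    # Share of each cohort still active at or after each checkpoint.
    return {
        cohort: {n: sum(last_seen_offset[u] >= n for u in users) / len(users)
                 for n in checkpoints}
        for cohort, users in sorted(cohorts.items())
    }


signups = {
    "a": date(2026, 1, 1), "b": date(2026, 1, 5),
    "c": date(2026, 1, 10), "d": date(2026, 2, 1),
}
activity = [
    ("a", date(2026, 1, 9)), ("a", date(2026, 2, 5)),
    ("b", date(2026, 1, 6)), ("c", date(2026, 1, 20)),
    ("d", date(2026, 2, 10)),
]
table = retention_by_cohort(signups, activity)
for cohort, row in table.items():
    print(cohort, row)
```

Product analytics tools compute this for you; the point of the sketch is that the definition of "retained" is a choice you should write down, because different definitions produce different curves.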

Marketing analytics connects spend to actual outcomes. Multi-touch attribution in GA4 spreads credit across the entire customer journey, which tends to expose channels that only appeared effective because they happened to capture the final click. When you need a broader lens that pulls marketing and product data into one decision layer, big data analytics as a service for business intelligence can bridge that gap.
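The gap between last-click and multi-touch credit is easy to see with a toy example. The sketch below uses a simple linear (equal-split) model purely for illustration; it is not a description of how GA4 assigns credit, and the journeys and channel names are made up.

```python
from collections import Counter


def last_click(journeys):
    """Give all revenue to the final touchpoint of each journey."""
    credit = Counter()
    for touches, revenue in journeys:
        credit[touches[-1]] += revenue
    return credit


def linear(journeys):
    """Split revenue equally across every touchpoint in the journey."""
    credit = Counter()
    for touches, revenue in journeys:
        share = revenue / len(touches)
        for channel in touches:
            credit[channel] += share
    return credit


# Each journey: (ordered list of touchpoints, revenue from the conversion).
journeys = [
    (["content", "email", "paid_search"], 300),
    (["content", "paid_search"], 200),
    (["paid_search"], 100),
]

print("last click:", dict(last_click(journeys)))
print("linear:    ", dict(linear(journeys)))
```

Under last-click, paid search receives all the credit; under the linear split, content and email show up as contributors. Neither model is "true", but comparing them shows which channels only look strong because they capture the final click.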

Financial analytics for B2B SaaS revolves around MRR, ARR, expansion revenue, and churn. ChartMogul or Baremetrics give you real-time revenue visibility. Operational analytics — deployment velocity, incident response, support resolution — is less glamorous than growth metrics but directly impacts burn rate. And reliable data collection solutions underpin all four categories. Without clean input, no analytics layer produces output you can trust.

Data Strategy for Startups That Actually Scales

Building a data strategy for a startup requires phased execution — a governed supply chain for decisions, assembled one layer at a time. Dump everything into a single “big-bang” deployment and you’ll burn cash on tools nobody understands. Stay on spreadsheets too long and you’ll steer the company on stale hunches.

The data analytics strategies that work best for startups share a common trait: they match infrastructure complexity to the company’s actual stage. Below is a 5-step framework for assembling a data-driven growth engine that won’t collapse under its own weight.

Step 1: Define Business Objectives — Not Metrics

Most founders start with a list of 50 things they could track. Here’s the problem with that: more data points create more noise, and noise drowns out the signal you actually need. Focus instead on evidence that supports your top 3 strategic bets for the quarter.

A sharper framing looks like this: “We need to identify why 60% of users drop off at the payment screen” — rather than trying to capture everything every user does across every surface of the product.

The Rule: Track fewer metrics, but ensure they have an owner. If a KPI doesn’t have a specific action attached to its movement, delete it.

Step 2: Establish a Data Contract (The Taxonomy)

This is where 90% of startups fail. They treat event naming as a creative exercise for developers. It isn’t. It’s an engineering contract.

Before writing a single line of instrumentation code, you must define your Tracking Plan. This is the single source of truth that prevents user_signup from becoming User_Signed_Up three months later.

“The tracking plan is not documentation — it’s infrastructure. Teams that skip it spend 3x as much time debugging dashboards as building them. And when they finally want to run ML models at Series A, the data is too inconsistent to use.”

— Dmytro Naumenko, CTO, GroupBWT
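One way to treat the tracking plan as an engineering contract is to check events against it in code, for example in CI or at the point of instrumentation. The sketch below is a minimal illustration: the plan structure, event names, owners, and required properties are all hypothetical, and a real plan would typically live in a shared file rather than a Python dict.

```python
import re

# Hypothetical tracking plan: every event has an owner and required properties.
TRACKING_PLAN = {
    "user_signed_up": {"owner": "growth", "required": {"user_id", "plan"}},
    "checkout_completed": {"owner": "payments", "required": {"user_id", "amount_cents"}},
}

SNAKE_CASE = re.compile(r"^[a-z]+(_[a-z]+)*$")


def validate_event(name: str, properties: dict) -> list:
    """Return a list of problems; an empty list means the event matches the plan."""
    problems = []
    if not SNAKE_CASE.match(name):
        problems.append(f"'{name}' is not snake_case")
    spec = TRACKING_PLAN.get(name)
    if spec is None:
        problems.append(f"'{name}' is not in the tracking plan")
        return problems
    missing = spec["required"] - set(properties)
    if missing:
        problems.append(f"'{name}' is missing properties: {sorted(missing)}")
    return problems


print(validate_event("user_signed_up", {"user_id": "u1", "plan": "free"}))
print(validate_event("User_Signed_Up", {"user_id": "u1"}))
print(validate_event("checkout_completed", {"user_id": "u1"}))
```

Rejecting unknown names outright is the design choice that matters here: a new event has to be added to the plan, with an owner, before it can be tracked.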

When critical data sits in third-party platforms, behind APIs, or in unstructured sources, a professional data extraction services company can standardize that collection instead of burning your engineers’ time on brittle integrations that break every time the source updates its DOM.

For web-based sources specifically, bespoke web scraping pipelines reliably structure data into your analytics workflow.

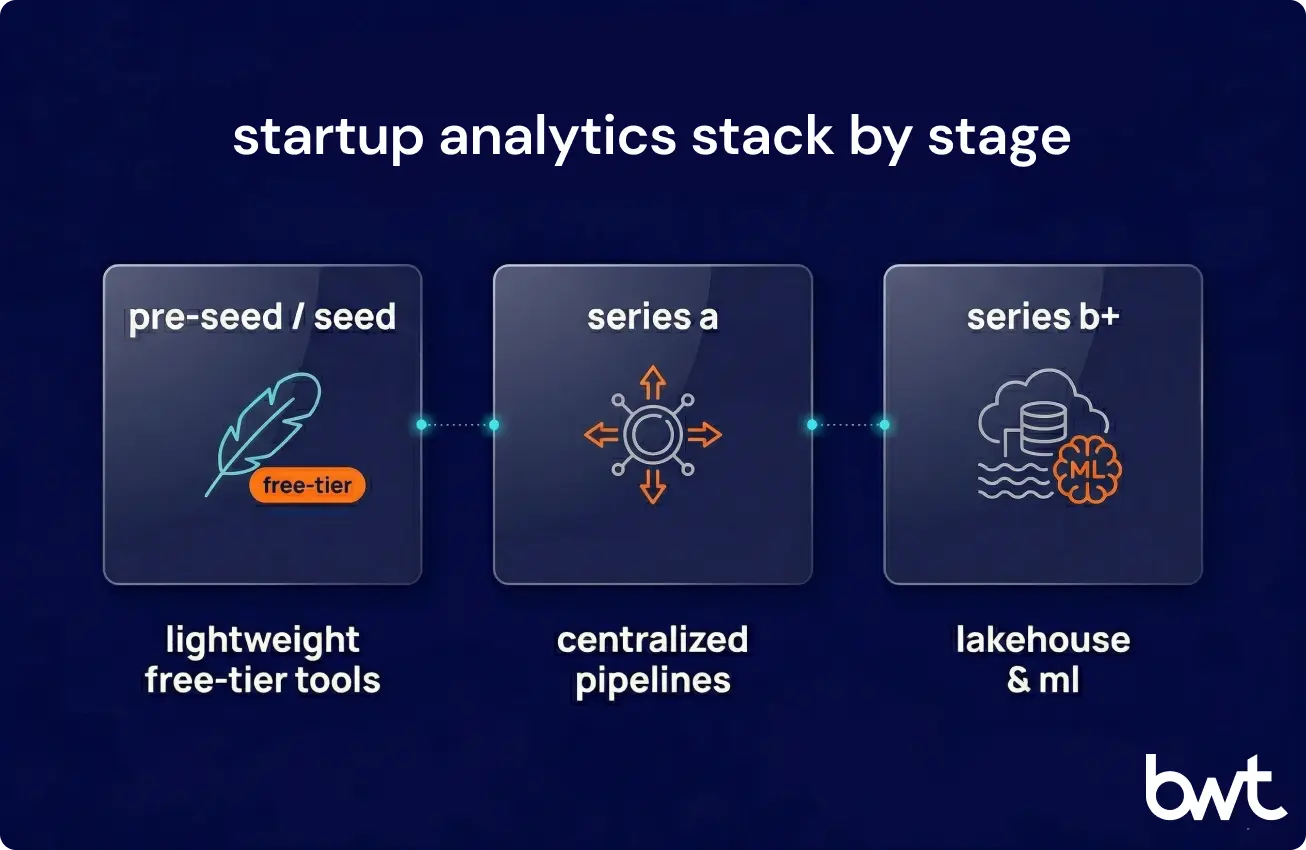

Step 3: Choose Tools Based on Your Stage — Not Your Ambition

Match your stack to your burn rate. Investing in Snowflake at the Pre-Seed stage isn’t “planning for growth” — it’s premature optimization that drains resources you don’t have yet.

- Pre-Seed / Seed: Use PostHog or Mixpanel (Free Tiers) + Metabase. This is enough to find Product-Market Fit.

- Series A: Centralize with Segment or RudderStack. Move to a Modern Data Stack (ELT) with dbt and BigQuery.

- Series B+: This is when you scale to a Lakehouse architecture and invest in custom ML pipelines.

Not sure where to start? That’s exactly where big data analytics consulting shortcuts months of expensive trial and error. The wrong architecture choice at Series A costs 6–9 months of rework at Series B.

Step 4: Implement Decision-Driven Dashboards

A dashboard full of numbers and no clear question behind them wastes everyone’s time. The ones that earn their place on a team’s screen answer something specific — and they drive action within 48 hours.

The best startup dashboards cover four non-negotiable zones:

- Executive: MRR, Burn, Runway. (The oxygen levels).

- Product: Activation funnel and retention cohorts. (The stickiness).

- Marketing: CAC by channel and LTV/CAC ratio. (The efficiency).

- Engineering: Deploy frequency and system health. (The velocity).

The Acid Test: If a chart doesn’t drive a decision within 48 hours, it’s noise. Consider removing it.

Step 5: Engineer for Compliance from Day Zero

Privacy is not a “checkbox for later.” GDPR and CCPA are architectural constraints.

If you’re building in 2026, you must decide: do you trust a 3rd-party vendor with your raw event data, or do you engineer a self-hosted stack like PostHog to maintain total ownership? The latter requires more engineering effort but eliminates the legal liability of “data leakage” during your first major due diligence.

Engineering Reality: Self-hosting PostHog for total data ownership is the gold standard, but for a Pre-Seed team, it’s a heavy ‘engineering tax’. If you can’t justify the infrastructure overhead yet, pivot to a Lean Compliance Strategy: focus on Zero-Party Data (asking users directly) and strictly minimize PII (Personally Identifiable Information) collection at the source. It’s significantly cheaper to engineer a compliant flow now than to perform a ‘data lobotomy’ on your warehouse six months before a Series A audit.

“Compliance is architecture. If you don’t build privacy into the data flow now, you’ll pay for it in 10x migration costs when the auditors arrive.”

— Oleg Boyko, COO at GroupBWT.

Data Infrastructure for Growing Startups

Past product-market fit, the lightweight tools hit their ceiling. This section covers the infrastructure layer that supports data analytics for startups operating at production scale — first warehouse through production-grade pipelines.

Data Collection and Tracking Setup

Good analytics starts with good collection. Set up a Customer Data Platform — Segment or RudderStack — to centralize event tracking across web, mobile, and backend. Define your event taxonomy before writing instrumentation code. Naming conventions. Property standards. Tracking plans with owners.

In practice, a data engineering company can get this foundation right from day one. That avoids the painful rework that catches teams off guard six months later — when they discover three different engineers tracked the same user action with three different event names and two different timestamp formats.

Data Warehousing and Storage

Cloud warehouses like Snowflake, BigQuery, and Amazon Redshift handle scalable storage alongside serious querying horsepower. If you’re picking your first warehouse, BigQuery’s pay-per-query model keeps risk low — you only spend money when you actually run queries, which means zero idle compute eating through your budget.

According to Fortune Business Insights, the global data analytics market is on track to hit roughly $496 billion by 2034 — a sign that tooling will only keep getting better and more accessible. When your data spans multiple formats — logs, documents, media files, structured events — it’s worth evaluating whether a data lake consulting approach, a pure warehouse, or a lakehouse hybrid makes the most sense for your workload. Choosing the wrong storage architecture at this stage tends to create migration headaches that drag on for years.

ELT and Modern Data Pipelines

In the volatile environment of an early-stage company, your questions change every week. ELT (Extract, Load, Transform) is the architecture best equipped to handle that volatility, and it is better suited to how cloud warehouses actually operate. For a detailed comparison, see our guide on ETL and data warehousing integration.

| Characteristics | ETL (Traditional Approach) | ELT (Modern Cloud-Native Approach) |
| --- | --- | --- |
| Flexibility | Low (Must know schema upfront) | High (Load now, transform later) |
| Speed to Insight | Slow (Builds pipelines first) | Fast (Queries raw data immediately) |
| Cost of Error | High (Requires pipeline rebuild) | Low (Just update a SQL query in dbt) |
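The "load now, transform later" idea can be shown in miniature with Python’s built-in `sqlite3` module standing in for a cloud warehouse. This is only a sketch under that assumption: the table, view, and event names are hypothetical, and in a real stack the transform would usually be a dbt model rather than a hand-written view.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Extract + Load: land the raw events untouched, inconsistent names and all.
conn.execute("CREATE TABLE raw_events (user_id TEXT, event TEXT, ts TEXT)")
conn.executemany(
    "INSERT INTO raw_events VALUES (?, ?, ?)",
    [
        ("u1", "signup", "2026-01-05T09:00:00Z"),
        ("u2", "Sign_Up", "2026-01-05T11:30:00Z"),
        ("u3", "user_signup", "2026-01-06T08:15:00Z"),
        ("u1", "page_view", "2026-01-06T08:20:00Z"),
    ],
)

# Transform: done afterwards, in SQL, on top of the raw table.
conn.execute(
    """
    CREATE VIEW daily_signups AS
    SELECT substr(ts, 1, 10) AS day, COUNT(DISTINCT user_id) AS signups
    FROM raw_events
    WHERE lower(event) IN ('signup', 'sign_up', 'user_signup')
    GROUP BY substr(ts, 1, 10)
    """
)

rows = conn.execute("SELECT day, signups FROM daily_signups ORDER BY day").fetchall()
print(rows)
```

If a fourth spelling of the signup event turns up next month, the fix is a one-line change to the view; the raw table never has to be reloaded.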

Real-Time vs Batch Processing

| Dimension | Real-Time | Batch |
| --- | --- | --- |
| Latency | Milliseconds to seconds | Minutes to hours |
| Where it matters | Fraud detection, live dashboards, and personalization | Reporting, model training, and warehouse loads |
| Tools | Kafka, Flink, AWS Kinesis | Airflow, dbt, cron |
| Cost | Higher, and stays higher | Lower, more predictable |

For most startups, batch processing (hourly or daily runs) delivers sufficient freshness at a fraction of the cost. Don’t build real-time because it sounds impressive. Build it because a product feature genuinely demands it. Scaling to big data analytics happens gradually, with each infrastructure layer building on the last. A well-designed big data pipeline architecture lets you grow incrementally, adding capacity and complexity as the business warrants it, without tearing down what already works.

Case study — B2B Sales Intelligence (2025–2026)

- The problem: scaling data collection from one source to enterprise-grade coverage.

- The solution: isolated databases per source (so one slow feed never blocks others), shared delivery library (new-source onboarding went from weeks to days), automated monitoring replacing manual status reports.

- The result: 14M records via manual file delivery at seed → 340M+ records (477GB+) across 10+ sources via automated pipelines.

This kind of big data implementation engagement scales because the foundation was engineered for growth from the start.

Big Data Analytics for Startups: Scaling Beyond the Basics

At some point, the scrappy stack that got you through the seed stage starts groaning under the weight of growing data volumes, more complex queries, and cross-functional demands. That inflection point is where data analytics for startup teams becomes a genuine infrastructure challenge — and where the architecture decisions you made (or avoided) earlier either pay dividends or haunt you.

Data analytics for startups at this stage means juggling multiple data sources, managing data quality at scale, and keeping query performance acceptable as table sizes balloon. The tooling exists — what separates teams that scale smoothly from those that stall is typically governance, pipeline reliability, and a clear ownership model for each data asset.

For broader big data and analytics services, aligning tool selection with infrastructure prevents expensive mid-course corrections.

Best Data Analytics Tools for Startups

Picking the right tools early saves months of rework later. The best choices for an early-stage company balance capability with cost and — this part gets overlooked — how quickly you can pull a useful insight from them on day one.

Product Analytics

PostHog stands out for early teams because it’s open-source and self-hosted, meaning you own your data end-to-end and your bill doesn’t spike as tracked users grow. Amplitude goes deeper on behavioral analysis — session replays, complex funnels, sophisticated cohort breakdowns — though pricing can creep up once you pass certain usage thresholds. Mixpanel offers clean funnels and a generous free tier. Heap auto-captures everything without manual instrumentation; the tradeoff is noisier data that can be harder to wrangle.

Business Intelligence

Metabase deserves a serious look if you’re pre-Series A — it’s open-source, free when self-hosted, and surprisingly capable for SQL-based dashboarding. Looker fits better once multiple teams need governed metrics definitions and a shared semantic layer; think Series B and beyond. Sigma Computing has a spreadsheet-like interface that finance teams gravitate toward. Tableau remains the visualization heavyweight, though licensing costs put it out of reach for most seed-stage budgets.

AI-Powered Analytics

ThoughtSpot lets non-technical users query data using plain English. Pecan AI handles no-code predictive modeling. MindsDB creates AI-powered tables that live directly inside your existing database. All three are worth evaluating once you hit Series A and your data foundation is solid enough to support them.

Common Mistakes Startups Make with Data Analytics

Tracking Too Many Metrics

When everything is a priority, nothing is. Target 5–7 metrics (a North Star plus supporting metrics) that tie directly to outcomes. Red flags: dashboards with 20+ charts nobody opens. Team members quoting different numbers for the same question. No clear owner for any metric. Weekly reporting that takes longer than the meeting itself.

Ignoring Data Quality

Bad data produces bad decisions. That’s worse, significantly worse, than having no data at all. Gartner’s March 2026 predictions report estimates organizations will abandon 60% of AI projects due to insufficient data quality. Duplicate events, missing user IDs, inconsistent naming, timezone mismatches: these aren’t minor annoyances. They’re decision-corrupting liabilities. Set up automated validation checks so these errors are caught before they bake into your dashboards.
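Automated validation does not have to start with a dedicated tool. The sketch below checks a batch of events for three of the problems named above: duplicate events, missing user IDs, and timestamps without a timezone. The event shape (`event_id`, `user_id`, `ts`) is a hypothetical schema chosen for the example.

```python
from collections import Counter
from datetime import datetime


def quality_report(events):
    """events: list of dicts with hypothetical keys event_id, user_id, ts (ISO 8601)."""
    id_counts = Counter(e["event_id"] for e in events)
    return {
        # The same event_id delivered more than once.
        "duplicate_event_ids": sorted(i for i, n in id_counts.items() if n > 1),
        # Events that cannot be tied to a user.
        "missing_user_id": sorted({e["event_id"] for e in events if not e.get("user_id")}),
        # Timestamps with no UTC offset, a common source of timezone mismatches.
        "naive_timestamps": sorted(
            {e["event_id"] for e in events
             if datetime.fromisoformat(e["ts"]).tzinfo is None}
        ),
    }


events = [
    {"event_id": "e1", "user_id": "u1", "ts": "2026-01-05T09:00:00+00:00"},
    {"event_id": "e1", "user_id": "u1", "ts": "2026-01-05T09:00:00+00:00"},
    {"event_id": "e2", "user_id": None, "ts": "2026-01-05T10:00:00+00:00"},
    {"event_id": "e3", "user_id": "u2", "ts": "2026-01-05T11:00:00"},
]
report = quality_report(events)
print(report)
```

Run on a schedule against each day’s load, a report like this turns data quality from an occasional audit into a failing check someone has to look at.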

“The founders who scale fastest are the ones who ask ‘what decision does this metric change?’ before they build a single dashboard. I have seen teams track 40 metrics and act on zero. I have seen others track five and use them to cut CAC in half. The difference is never the tool — it is the question behind the number.”

— Eugene Yushenko, CEO at GroupBWT.

Delaying Infrastructure Investment

Too many startups postpone data infrastructure until the technical debt becomes suffocating. Don’t. The right time to invest in data analytics for startups is right after initial product-market fit — the Seed to Series A window. The longer you delay, the steeper the migration cost — technical debt in data infrastructure compounds quickly.

Data Science for Startups: When to Level Up

Basic analytics covers most early-stage needs. But at a certain scale, data science for startups shifts from a nice-to-have to the competitive edge that separates companies still growing from companies stuck on a plateau. Recognizing the right moment to jump from descriptive to predictive, and building reliable data pipelines that feed those models, is central to how startup companies scale with data analytics over time.

Predictive Analytics and Segmentation

Once you’ve accumulated twelve-plus months of historical data, predictive modeling starts unlocking real value. Time-series forecasting tools like Prophet and ARIMA can project MRR trends with surprising accuracy. Classification models pinpoint users who are drifting toward churn — giving your retention team a window to intervene before those accounts quietly go dark.

Clustering algorithms, meanwhile, carve your user base into distinct segments: high-value power users, price-sensitive trialists, enterprise prospects who behave differently from everyone else. Those segments feed personalized onboarding flows, sharper campaign targeting, and even dynamic pricing experiments. Getting all of this working requires a warehouse, a transformation layer like dbt, and reverse ETL to push insights back into your CRM, email platform, and support tools — so the people on your team who actually talk to customers can act on model outputs without waiting for a weekly report.

AI-Driven Product Features

The most advanced play: embedding machine learning (ML) directly into the product. Recommendation engines. Intelligent search. Anomaly detection. Conversational data queries. For startups evaluating custom AI development services or AI consulting, the most practical entry point is often exploring data science as a service options for startups: specialized providers that ship models and pipelines without you having to hire a full data science team.

Case study — Travel-Tech Platform (2025)

- The problem: a four-person founding team with zero NLP expertise needed AI-powered city ratings from public data and social media sentiment—a use case requiring natural language processing services they couldn’t build in-house.

- The solution: a two-stage classification pipeline. A lightweight “is this a review?” filter removed ~80% of the content as irrelevant before the expensive NLP analysis ran. That cut processing costs 5x.

- The result: 30+ cities rated across AI-powered categories, entire infrastructure under $1,100/month. Platform continues scaling in 2026.
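The two-stage pattern from this case study can be sketched as a cheap filter placed in front of an expensive step. Everything below is a hypothetical stand-in: the keyword list, the placeholder sentiment function, and the sample documents are illustrative and do not reproduce the production pipeline.

```python
REVIEW_HINTS = ("visited", "stayed", "recommend")  # hypothetical keyword list


def looks_like_review(text: str) -> bool:
    """Stage 1: cheap filter. A real system might use a small classifier instead."""
    lowered = text.lower()
    return any(hint in lowered for hint in REVIEW_HINTS)


def expensive_sentiment(text: str) -> str:
    """Stage 2: placeholder for a costly NLP model or API call."""
    return "positive" if "loved" in text.lower() else "negative"


def rate_documents(docs):
    """Run the expensive stage only on documents that pass the cheap filter."""
    expensive_calls = 0
    ratings = []
    for doc in docs:
        if not looks_like_review(doc):
            continue
        expensive_calls += 1
        ratings.append((doc, expensive_sentiment(doc)))
    return ratings, expensive_calls


docs = [
    "We visited Lisbon in May and loved the food.",
    "Stayed three nights in Porto; noisy streets, would not return.",
    "Flight schedule update for Monday",
    "Win a free trip, click here",
    "City council meeting minutes",
    "Weather forecast: rain expected",
    "New metro line opens next year",
    "Hotel job openings in Madrid",
    "Top 10 packing tips",
    "Currency exchange rates today",
]

ratings, expensive_calls = rate_documents(docs)
print(f"expensive calls: {expensive_calls} of {len(docs)} "
      f"({len(docs) / expensive_calls:.0f}x fewer)")
```

The arithmetic matches the case study: if the first stage discards about 80% of the input, the expensive stage runs on the remaining 20%, which is a 1 / 0.2 = 5x reduction in calls.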

Startups that demonstrate data maturity early — clean pipelines, governed access, reproducible models — tend to land enterprise deals and attract acquisition interest more easily. Data infrastructure has increasingly become a due diligence checkbox: something acquirers and investors expect to see in place, rather than a point of differentiation on its own.

Benefits of Data Analytics for Startups

Investing in analytics produces compounding returns. Not abstract returns. Measurable ones you can point to in board meetings.

Faster product iteration

Teams running analytics-driven prioritization ship 2–3x more high-impact features per quarter than teams stuck in roadmap debates. Retention curves and usage data replace opinion cycles. The highest-leverage fix ships in days, not weeks.

Measurable retention lifts

Cohort analysis — tracking retention across user groups over time — routinely surfaces 15–30% improvement opportunities hiding in activation funnels. That’s the single most valuable form of data analysis for startup product teams.

Lower burn rate

We watched one startup slash its monthly CAC by 40% after their analytics surfaced something nobody expected: the second-highest-spend acquisition channel was delivering leads that churned at 6x the rate of organic sign-ups. That kind of insight stays completely invisible without proper tracking. Real money saved — the kind that actually extends runway.

Stronger fundraising outcomes

Startups with mature analytics consistently see shorter due diligence and better term sheets. Investors pay a premium for teams that deeply understand unit economics and growth mechanics.

Partnering with a Data Analytics Provider

Not every startup should build its data infrastructure in-house. At a certain point, the build-versus-buy math tips decisively toward external expertise — and ignoring that math is how teams lose 6+ months of engineering velocity on plumbing instead of product.

A senior data engineer in the US earns $150,000–$200,000/year loaded, plus a 2–3-month ramp-up before the first pipeline ships. A specialized partner delivers production-ready infrastructure in 6–8 weeks at a fraction of that cost. (Full disclosure: this is what we do at GroupBWT. The case studies in this article are drawn from our client work.)

“When we run the numbers with founders, the comparison is straightforward: $18,000–$25,000 for a six-week external buildout versus $60,000–$80,000 in loaded engineering cost for the same result built in-house over four months — plus the opportunity cost of four months without shipping product features. Most choose to outsource the plumbing and keep their engineers on what differentiates the company.”

— Oleg Boyko, COO at GroupBWT.

An experienced partner handles architecture design, real-time data warehouse setup, pipeline automation, and dashboard implementation: production-ready systems, deployed in weeks. Beyond the initial build, the right partner maps a multi-year analytics roadmap: infrastructure scaling, team hiring sequence, and the transition from descriptive to predictive analytics.

Ready to build your data stack? Talk to our data engineering team → or explore how our clients use analytics to scale →

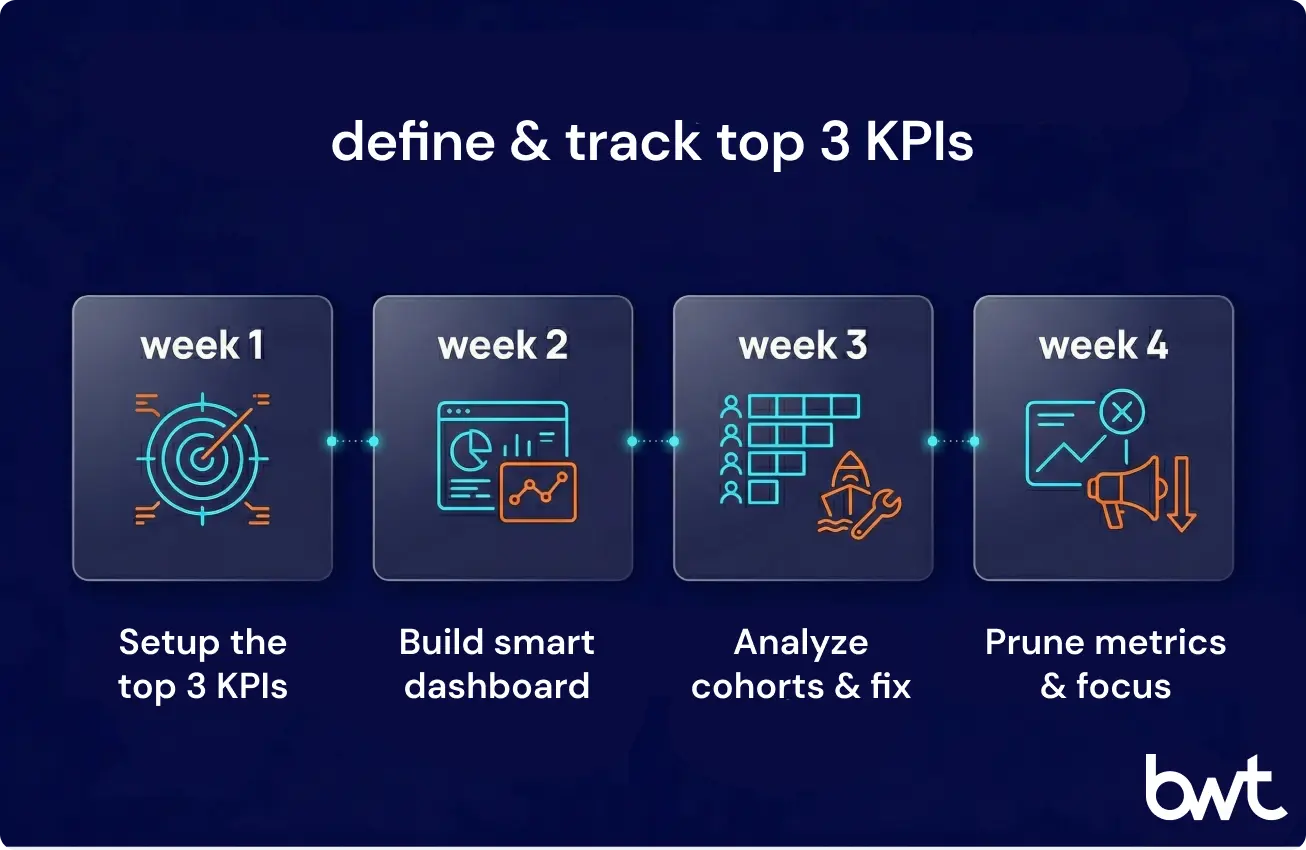

Conclusion: 30-Day Starter Checklist

Most startup data analytics initiatives don’t fail because of wrong tool choices. The real killer is that teams never develop the habits that turn data into action. Here’s a 30-day plan to avoid that trap:

Week 1: Pick your top 3 KPIs. Get them instrumented in a free tool — PostHog, Mixpanel’s free tier, or GA4. Before you track a single event, write down your naming convention and stick to it.

Week 2: Build one dashboard that answers a specific question your team debates every week. Give it 48 hours. If nobody opens it, tear it apart and redesign around a sharper question.

Week 3: Run a cohort analysis on day-7 retention. Find the steepest activation drop-off. Ship one targeted fix.

Week 4: Kill the metrics nobody looked at. Double down on the ones that drove a decision.

You have a structural advantage over enterprises here. Smaller teams. Shorter feedback loops. Less inertia. The data-to-decision gap can close in weeks, not quarters. The prerequisite is discipline. And honesty about what the numbers actually say, even when they contradict the hypothesis you’ve been pitching for six months.

Through Series A — yes. PostHog and Mixpanel have point-and-click event setup. Metabase builds dashboards without SQL. Google Sheets handles ad-hoc analysis for months. The bottleneck isn’t technical skill. It’s discipline. Someone needs to own the tracking plan, review dashboards weekly, and kill metrics nobody uses. Engineers become necessary when you’re joining data across three or more systems or blowing past free-tier event limits.

Tracking vanity metrics. Sign-up totals, page views, follower counts. They look great in pitch decks. They make zero operational decisions. A better approach: for every metric on the dashboard, write down what action you’d take if that number shifted 20% in either direction. If you can’t name a concrete action, that metric is taking up space you could use for something useful. Delete it.

A properly instrumented activation funnel surfaces its first useful insight within 7–10 days — usually a drop-off point nobody predicted. Patching that single friction point tends to lift conversion 15–30%, based on what we’ve observed across engagements. The compounding effect matters even more: teams that review their analytics every week and ship at least one data-informed improvement per sprint typically see measurable revenue impact within 60–90 days.

Depends on what you’re building. If your product is a data product — BI tool, analytics platform, data marketplace — build in-house. That capability is your moat. If analytics supports the product (say, a SaaS that needs dashboards, pipelines, and data governance), outsource the initial build and bring it in-house once the foundation stabilizes and your team reaches 2–3 dedicated data people. The scenario you want to avoid at all costs: a half-built internal system that chews through engineering time for months and still needs a ground-up rebuild when requirements inevitably shift.

ETL processes data before loading it into the warehouse, so you need to know your questions up front. ELT loads raw data first, then transforms it within the warehouse using tools like dbt. For startups, ELT is almost always the stronger choice: more flexible (rerun transforms when requirements shift), more auditable (everything in version-controlled SQL), and better suited to how BigQuery and Snowflake actually operate. In short, you don’t need to predict every question before you start collecting data.
