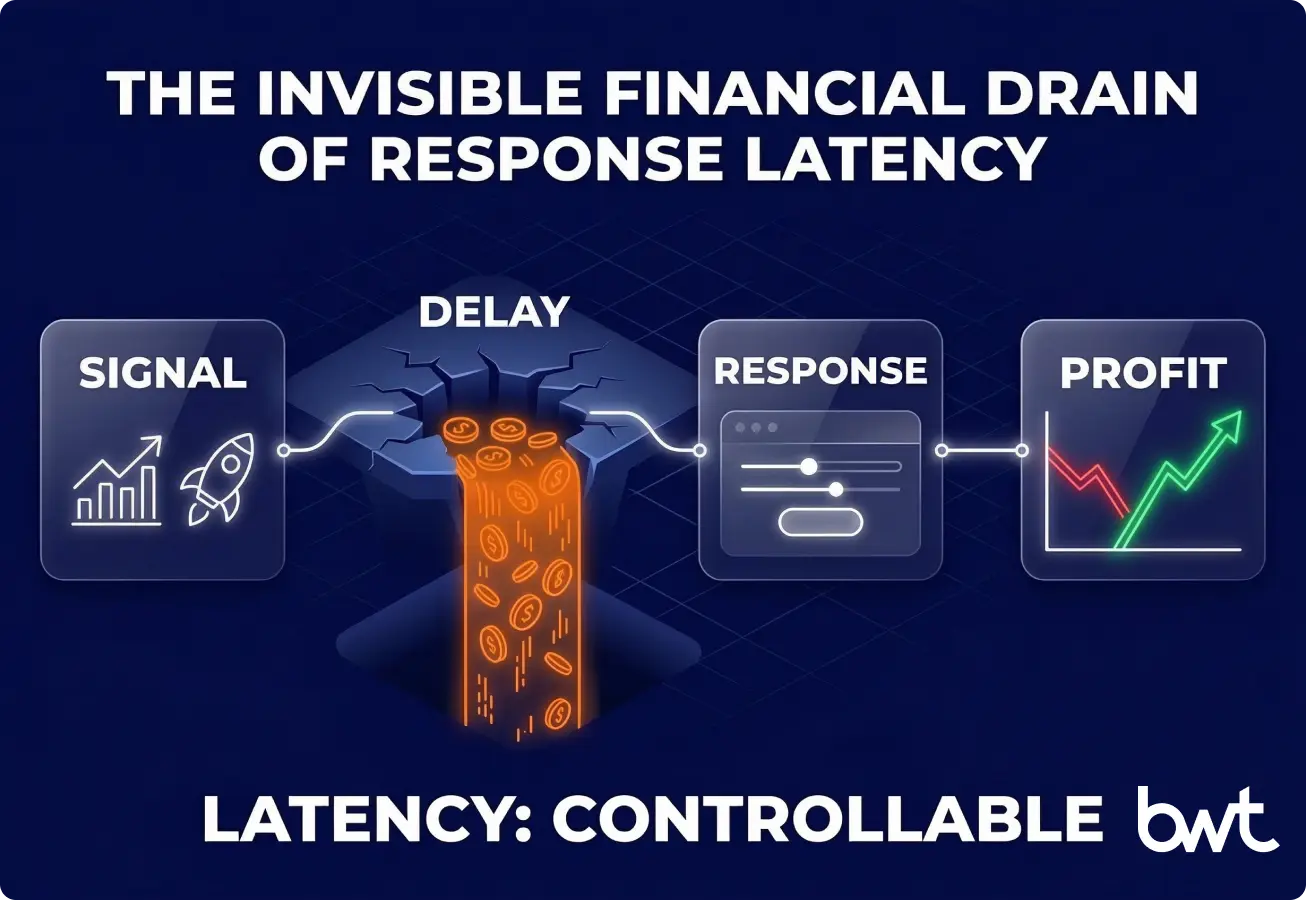

Ecommerce data aggregation only pays off when it reduces the time between a market change and a margin-safe action.

If it can’t ship actions safely, it becomes a reporting project that looks “data-driven” while your P&L keeps bleeding through promo lag, stockout misses, and reactive discounting.

This guide explains how we move from “collecting rows” to “automating decisions” with a 3‑Gate Safety Protocol and an operating contract (Latency Ledger) that teams can actually run. Results vary by category, systems, and readiness—but the control pattern is consistent.

Glossary

Data aggregation for ecommerce is the discipline of collecting retail signals (prices, promos, stock, assortment, reviews), matching the same products across sources, and normalising fields so downstream systems can act without creating margin leaks.

Decision latency is the elapsed time between a market event (price change, promo launch, stockout) and your approved response going live.

A match confidence score is a probability (0–1) that two listings from different sources represent the same product/SKU/variant.

A safety gate is a mandatory check that must pass before a decision is allowed to update price/promo/content; failed items go to an exception queue with evidence.

Learn the mechanics in our step‑by‑step guide on how to aggregate data.

The “Decision Latency Tax” shows up as a margin loss you can actually measure

When you react late, you either miss full‑margin hours or you discount after the market has already moved back.

Two common patterns:

1) The stockout opportunity (margin you didn’t take)

A competitor goes out of stock at 10:00 AM, but you don’t detect it until the next day.

Business outcome: you kept discounting against a “ghost competitor” for ~24 hours.

2) The promo lag (discounting you didn’t need)

A competitor launches a localized 20% coupon; you match it 48 hours later—right as their promo ends.

Business outcome: you gave away margin after the threat was already gone.

A mini Decision Latency Calculator (copy into a sheet)

This won’t be perfect—but it forces the right conversation with finance.

| Input | What to use | Why a CMO cares |
| --- | --- | --- |
| A. Impacted orders/day | Your top-SKU or top-cluster volume | Converts “data issues” into revenue risk |
| B. Contribution margin/order | Post-shipping, post-fees | Moves from vanity metrics to P&L |
| C. Hours late (avg) | 24, 48, etc. | This is the controllable variable |
| D. Events/month | Stockouts + promos + price moves | Frequency makes latency compound |

Estimated monthly latency cost ≈ (A × B) × (C / 24) × D

Use it as a baseline, then refine with real elasticity once you have clean data.
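For teams that prefer a script to a sheet, the same formula can be sketched in a few lines of Python. The function name and the example inputs are illustrative assumptions, not figures from a real retailer.

```python
def monthly_latency_cost(orders_per_day, margin_per_order, hours_late, events_per_month):
    """Estimated monthly latency cost = (A x B) x (C / 24) x D."""
    inputs = {
        "orders_per_day": orders_per_day,
        "margin_per_order": margin_per_order,
        "hours_late": hours_late,
        "events_per_month": events_per_month,
    }
    for name, value in inputs.items():
        if value < 0:
            raise ValueError(f"{name} must be non-negative, got {value}")

    daily_margin_at_risk = orders_per_day * margin_per_order  # A x B
    days_late_per_event = hours_late / 24                     # C / 24
    return daily_margin_at_risk * days_late_per_event * events_per_month  # x D


# Illustrative inputs only; replace them with your own numbers.
example = monthly_latency_cost(
    orders_per_day=200, margin_per_order=12.0, hours_late=36, events_per_month=4
)
print(f"Estimated monthly latency cost: {example:,.2f}")  # 14,400.00
```

Halving the average lag (C) halves the estimate, which is why hours late is the input worth negotiating first.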

The 3‑Gate Safety Protocol is margin insurance, not “extra QA”

Automation fails in one of three ways: bad data, bad cost, or bad deltas.

That’s why the protocol has three explicit gates—each one tied to a P&L failure mode.

If any gate fails, the system must raise an alert and route the item to an exception queue with source evidence (URL, timestamp, parser version, match ID) instead of pushing an action.
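The fail-closed routing described above could look roughly like the sketch below. All class and function names (`Evidence`, `Candidate`, `ExceptionQueue`, `run_gates`) are hypothetical, the queue is an in-memory list, and alerting is left out; a production system would use a durable queue and a real notification channel.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Evidence:
    url: str
    observed_at: str       # ISO-8601 timestamp of the source snapshot
    parser_version: str
    match_id: str


@dataclass(frozen=True)
class Candidate:
    sku: str
    proposed_price: float
    evidence: Evidence


# A gate returns None when it passes, or a short failure reason when it fails.
Gate = Callable[[Candidate], Optional[str]]


@dataclass
class ExceptionQueue:
    items: List[dict] = field(default_factory=list)

    def route(self, candidate: Candidate, gate_name: str, reason: str) -> None:
        # Keep the evidence next to the failure so a reviewer can verify it.
        self.items.append({
            "sku": candidate.sku,
            "gate": gate_name,
            "reason": reason,
            "evidence": candidate.evidence,
        })


def run_gates(candidate: Candidate, gates: List[Tuple[str, Gate]],
              queue: ExceptionQueue) -> bool:
    """Return True only if every gate passes; otherwise queue the item and block."""
    for gate_name, gate in gates:
        reason = gate(candidate)
        if reason is not None:
            queue.route(candidate, gate_name, reason)
            return False  # fail closed: no action is pushed
    return True


def price_is_positive(candidate: Candidate) -> Optional[str]:
    # Placeholder gate for the demo; real gates check freshness, margin, and deltas.
    return None if candidate.proposed_price > 0 else "non-positive price"


queue = ExceptionQueue()
bad = Candidate("SKU-1", -5.0, Evidence(
    "https://example.com/p/1", "2026-01-01T10:00:00Z", "parser-v3", "match-42"))
approved = run_gates(bad, [("validity", price_is_positive)], queue)
print(approved, queue.items[0]["reason"])  # False non-positive price
```

Stopping at the first failed gate keeps the exception record unambiguous: one item, one gate, one reason.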

The 3 safety gates (what each one checks, and why it matters)

| Safety Gate | What It Checks & Key Thresholds | If It Fails |
| --- | --- | --- |
| Gate 1: Data Validity & Freshness | Freshness SLAs (≤ 1 hr for top sellers), null-rate spikes (> 2× baseline), duplicate rows, outlier prices (> 3σ), parser drift, promo-field consistency | Block action, raise incident, re-parse from raw snapshot. Prevents wrong moves from stale or broken data |
| Gate 2: Cost & Margin Floor | Landed-cost availability, fee assumptions, margin floor enforcement (e.g., ≥ 18%), MAP compliance, shipping-threshold logic | Block action, route to finance/pricing owner. Prevents accidental loss leaders and margin erosion at scale |
| Gate 3: Delta Limits & Policy Constraints | Max price delta (≤ 5%/change, ≤ 10%/day), match-confidence threshold (> 0.98), inventory-aware brakes, competitor-OOS context, segment-level approval rules | Degrade to human-in-the-loop, log reasoning. Prevents price shocks, brand damage, and overreaction to noisy signals |

CMO note: Ask your team one question: “Which gate prevents a bad move from hitting revenue this week?”

If they can’t answer in one sentence, the system isn’t safe enough to automate.
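To make the Gate 2 and Gate 3 thresholds concrete, here is a minimal sketch using the example values from the table (18% margin floor, 5% per change, 10% per day, confidence above 0.98). Two assumptions are mine: margin is computed as a share of the proposed price with fees ignored, and the daily limit is measured against the price at the start of the day. The `PriceProposal` type and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

MARGIN_FLOOR = 0.18           # Gate 2: example floor from the table
MAX_DELTA_PER_CHANGE = 0.05   # Gate 3: max move per price change
MAX_DELTA_PER_DAY = 0.10      # Gate 3: max net move within a day
MIN_MATCH_CONFIDENCE = 0.98   # Gate 3: confidence must be strictly above this


@dataclass(frozen=True)
class PriceProposal:
    current_price: float
    proposed_price: float
    day_open_price: float            # price at the start of the day (assumption)
    landed_cost: Optional[float]     # None when cost data is unavailable
    match_confidence: float


def gate2_margin_floor(p: PriceProposal) -> Optional[str]:
    """Return None on pass, or a reason string on failure."""
    if p.landed_cost is None:
        return "landed cost unavailable"
    if p.proposed_price <= 0:
        return "non-positive proposed price"
    margin = (p.proposed_price - p.landed_cost) / p.proposed_price
    if margin < MARGIN_FLOOR:
        return f"margin {margin:.1%} is below the {MARGIN_FLOOR:.0%} floor"
    return None


def gate3_delta_limits(p: PriceProposal) -> Optional[str]:
    """Return None on pass, or a reason string on failure."""
    if p.match_confidence <= MIN_MATCH_CONFIDENCE:
        return "match confidence is not above threshold"
    per_change = abs(p.proposed_price - p.current_price) / p.current_price
    if per_change > MAX_DELTA_PER_CHANGE:
        return f"delta {per_change:.1%} per change exceeds limit"
    per_day = abs(p.proposed_price - p.day_open_price) / p.day_open_price
    if per_day > MAX_DELTA_PER_DAY:
        return f"delta {per_day:.1%} per day exceeds limit"
    return None


proposal = PriceProposal(current_price=100.0, proposed_price=97.0,
                         day_open_price=100.0, landed_cost=75.0,
                         match_confidence=0.99)
print(gate2_margin_floor(proposal), gate3_delta_limits(proposal))  # None None
```

Missing landed cost fails Gate 2 rather than being skipped, which matches the table's “landed-cost availability” check: no cost, no automated move.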

At GroupBWT, we treat this as a control loop (collection → truth → action) delivered as custom pipelines or data aggregation services.

Signals should exist because they trigger a decision, not because they’re easy to collect

Good ecommerce data aggregation starts with a workflow you’re willing to own, then works backwards to the minimum signals required.

“Crawl everything” looks thorough—and burns budget while creating exception noise.

| Signal | What it drives | Common pitfall | Business impact if you get it wrong |
| --- | --- | --- | --- |
| Price & promos | Repricing exceptions, promo QA | Mixing “was” vs “sale” price | Undercut yourself or miss violations |
| Availability | Stock alerts, buy-box defence | Cached “in stock” false positives | Waste ad spend; chase phantom stockouts |
| Assortment | Gap analysis, variant coverage | Taxonomy mismatch across sources | You “find gaps” that are just mapping errors |
| Reviews | CVR drivers, demand shifts | Sentiment without context | You react to noise (outliers) instead of identifying and fixing repeated issues that impact the bottom line |
| Content quality | Listing QA at scale | Comparing different templates | False positives; teams stop trusting alerts |

If review-driven alerts matter, treat reviews as a text dataset—not a star-rating feed. See web scraping for sentiment analysis.

If listing quality and attribute completeness are your bottlenecks, you need a pipeline built for complex product data rather than simple price feeds. Learn more about end-to-end content aggregation solutions.

SLAs only work when they are written as revenue contracts

An SLA is not a buzzword; it’s the only way to stop “freshness” from becoming a debate.

Definition: A Service Level Agreement (SLA) is the target you set for data freshness and incident response (e.g., “top-seller price snapshots ≤ 1 hour old; parser breaks triaged within 2 hours”).

Typical sources:

- Marketplaces (Amazon, eBay, Walmart): prices, sellers, buy-box, stock, promos

- Competitor DTC stores: pricing, bundles, shipping thresholds, content standards

- Review platforms: volume shifts, recurring issues, feature requests

- Price comparison sites: category baselines

- Supplier/distributor catalogues: cost, lead times, substitutions, discontinuations

If you need broad marketplace and store coverage with clear ownership and compliance, start with a disciplined collection plan—this is the baseline behind our ecommerce data scraping services.

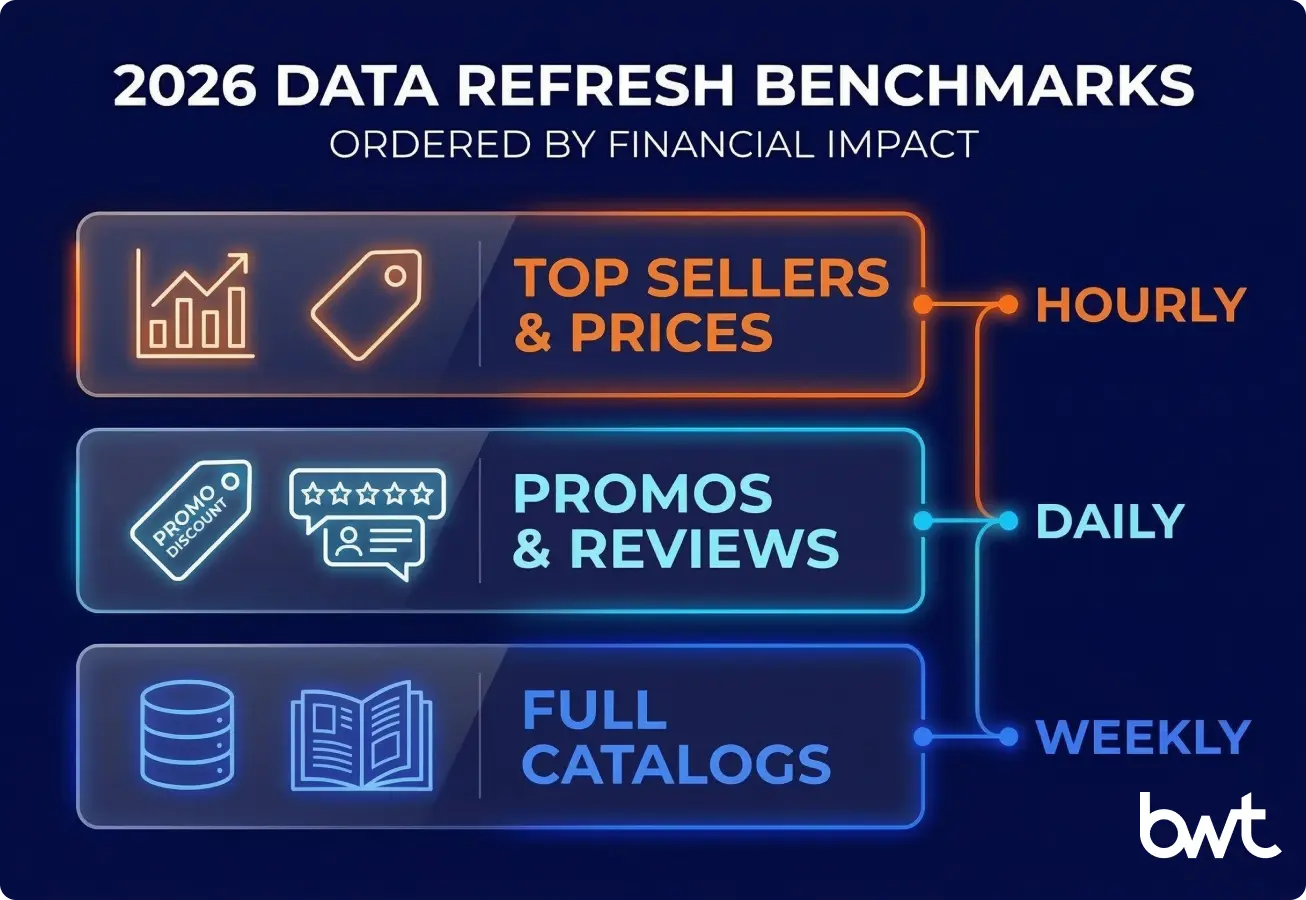

Baseline refresh guidance (2026)

Use this as a starting point, then tune by workflow ROI.

| Workflow decision | Min refresh or resolution target | Why it matters (money impact) |
| --- | --- | --- |
| Top-seller price collection | Hourly | Late detection forces reactive discounting |
| Repricing exceptions (human review) | 4 hours to resolve | Hourly collection is useless if exceptions sit for days |
| Promo monitoring | Daily | Catching day 1 prevents week-long leakage |
| Review trend alerts | Daily | Early signals beat post-mortems |
| Supplier catalogue deltas | Weekly–monthly | Change velocity is lower; accuracy beats speed |
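One way to make this guidance enforceable is to treat each refresh cadence as a maximum snapshot age and check it before data is used. This is a sketch under that assumption; the workflow keys and the `is_stale` helper are hypothetical, and the supplier entry uses the stricter (weekly) end of the range.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Maximum acceptable snapshot age per workflow, mirroring the guidance above.
MAX_SNAPSHOT_AGE = {
    "top_seller_price": timedelta(hours=1),
    "promo_monitoring": timedelta(days=1),
    "review_trends": timedelta(days=1),
    "supplier_catalogue": timedelta(weeks=1),  # weekly end of "weekly to monthly"
}


def is_stale(workflow: str, snapshot_time: datetime,
             now: Optional[datetime] = None) -> bool:
    """True when the snapshot is older than the workflow's freshness target."""
    now = now or datetime.now(timezone.utc)
    return (now - snapshot_time) > MAX_SNAPSHOT_AGE[workflow]


now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
print(is_stale("top_seller_price", now - timedelta(minutes=90), now))  # True
print(is_stale("promo_monitoring", now - timedelta(hours=6), now))     # False
```

Passing `now` explicitly keeps the check deterministic in tests and lets you replay historical incidents.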

The Latency Ledger turns “data requests” into an operating contract

When every workflow has an owner, a latency target, and guardrails, you stop arguing about data and start improving outcomes.

| Workflow | Target latency | KPI + non-negotiable guardrails |
| --- | --- | --- |
| Top-seller repricing (automated) | 1–2 hours | Gross margin %, buy-box; hard margin floor; max daily delta; inventory-aware rules |
| Repricing exceptions (human-in-the-loop) | 4 hours | Approval thresholds; kill switch; audit trail for every override |
| Promo monitoring | 24 hours | Promo compliance; block actions if match confidence < threshold |
| Stockout alerts | 8 hours | Stockout rate; start with top SKUs; dedupe by seller + fulfilment type |
| Content QA tickets | 72 hours | CVR + completeness; template-aware rules; false-positive rate target |
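A ledger like this is easier to enforce when it lives as data rather than a slide. The sketch below encodes three of the rows and measures how far a decision overran its target. The owner names are placeholders I made up, and the repricing target uses the upper bound (2 hours) of the 1–2 hour range.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Tuple


@dataclass(frozen=True)
class LedgerEntry:
    workflow: str
    owner: str                    # placeholder role names, not from the article
    target_latency: timedelta
    guardrails: Tuple[str, ...]


LEDGER = {
    "top_seller_repricing": LedgerEntry(
        "top_seller_repricing", "pricing-lead", timedelta(hours=2),
        ("hard margin floor", "max daily delta", "inventory-aware rules")),
    "promo_monitoring": LedgerEntry(
        "promo_monitoring", "promo-owner", timedelta(hours=24),
        ("block actions if match confidence < threshold",)),
    "stockout_alerts": LedgerEntry(
        "stockout_alerts", "category-manager", timedelta(hours=8),
        ("dedupe by seller + fulfilment type",)),
}


def latency_overrun(entry: LedgerEntry, event_at: datetime,
                    action_live_at: datetime) -> timedelta:
    """How far decision latency exceeded the target (zero if within target)."""
    latency = action_live_at - event_at
    return max(latency - entry.target_latency, timedelta(0))


overrun = latency_overrun(
    LEDGER["top_seller_repricing"],
    event_at=datetime(2026, 1, 15, 10, 0),
    action_live_at=datetime(2026, 1, 15, 13, 30),
)
print(overrun)  # 1:30:00
```

Reporting overrun per workflow, next to the named owner, is what turns the table into a contract someone can be held to.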

“My marketing rule: if a signal doesn’t have an owner, a KPI, and a next action, it’s not intelligence—it’s trivia. That’s the difference between market monitoring and revenue control.”

— Olesia Holovko, CMO, GroupBWT

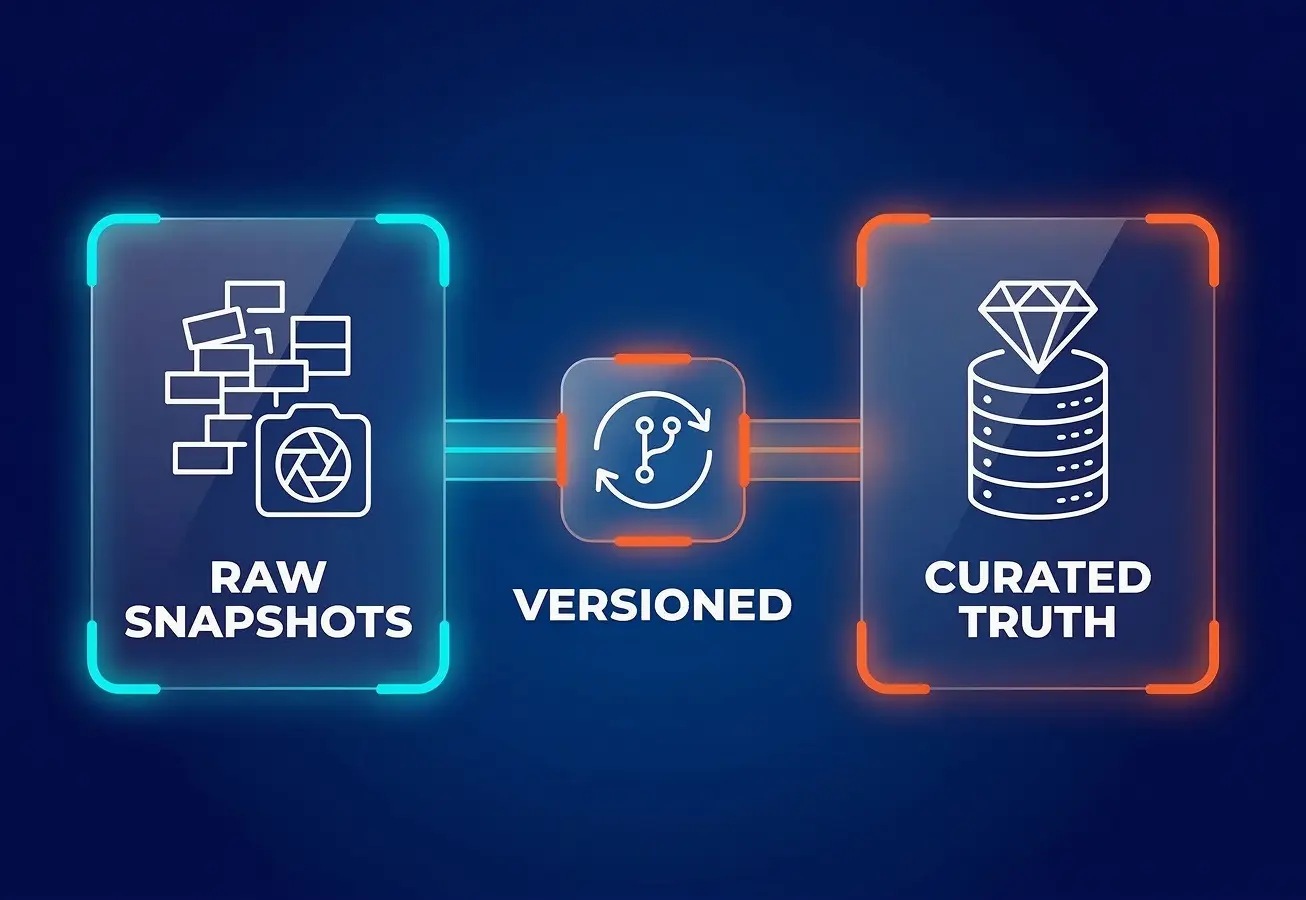

Architecture should separate collection, product truth, and actions so that changes are reversible

A scalable platform is a pipeline with controls—not a scraper with storage.

We implement this separation as a repeatable data aggregation framework so teams can audit, roll back, and reprocess when sources change.

Pipeline layers that survive real-world volatility:

- Collection layer (get snapshots): Prefer official APIs and partner feeds; add compliant crawling only where needed. Design for retries, rate limits, and source-specific parsing.

- Match + normalise (create product truth): Recognise the same product across sources even when naming, language, and pack sizes differ. Store match confidence + audit log.

- Warehouse layer (store raw + curated): Keep raw snapshots immutable so you can re-run parsing later without re-collecting. Publish curated business-ready tables keyed by source + timestamp.

- Decision layer (ship actions): Push curated data into BI and operational systems (pricing engine, ERP, PIM). Alert on anomalies (price swings, freshness breaches, match-rate drops) before they become margin leaks.
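The value of immutable raw snapshots shows up when a parser improves: curated tables can be rebuilt without re-collecting. Here is a minimal in-memory sketch of that idea. `RawStore`, `parse_v1`, and `parse_v2` are hypothetical, and the snapshot body is JSON for brevity where real bodies would be HTML or API payloads.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class RawSnapshot:
    source: str
    fetched_at: str   # ISO-8601 collection timestamp
    body: str         # raw payload exactly as collected

    @property
    def snapshot_id(self) -> str:
        raw = f"{self.source}|{self.fetched_at}|{self.body}".encode("utf-8")
        return hashlib.sha256(raw).hexdigest()[:16]


class RawStore:
    """Append-only store: an existing snapshot is never overwritten."""

    def __init__(self) -> None:
        self._items: Dict[str, RawSnapshot] = {}

    def put(self, snap: RawSnapshot) -> str:
        self._items.setdefault(snap.snapshot_id, snap)
        return snap.snapshot_id

    def all(self) -> List[RawSnapshot]:
        return list(self._items.values())


def parse_v1(snap: RawSnapshot) -> dict:
    return {"price": json.loads(snap.body)["price"]}


def parse_v2(snap: RawSnapshot) -> dict:
    # Newer parser also extracts the "was" price from the same raw payload.
    data = json.loads(snap.body)
    return {"price": data["price"], "was_price": data.get("was_price")}


def build_curated(store: RawStore, parser: Callable[[RawSnapshot], dict],
                  parser_version: str) -> List[dict]:
    """Curated rows keyed by source + timestamp, tagged with the parser version."""
    return [
        {"source": s.source, "fetched_at": s.fetched_at,
         "snapshot_id": s.snapshot_id, "parser_version": parser_version,
         **parser(s)}
        for s in store.all()
    ]


store = RawStore()
store.put(RawSnapshot("shop-a", "2026-01-15T10:00:00Z",
                      '{"price": 89.0, "was_price": 99.0}'))
curated_v1 = build_curated(store, parse_v1, "v1")
curated_v2 = build_curated(store, parse_v2, "v2")  # re-run, no re-collection
print(curated_v2[0]["was_price"])  # 99.0
```

Tagging every curated row with `parser_version` is what makes a rollback or a side-by-side comparison possible later.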

Parser-change monitoring is the practice of detecting when a marketplace layout or API field changes so that extraction doesn’t silently degrade.
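Two inexpensive drift signals, both taken from the Gate 1 thresholds, are a null-rate spike (more than 2× baseline) and a price more than 3σ from recent history. The sketch below shows one possible implementation; the function names, sample rows, and baseline values are illustrative assumptions.

```python
from statistics import mean, stdev
from typing import Dict, List, Sequence


def null_rate(rows: Sequence[dict], field: str) -> float:
    """Share of rows where `field` is missing or empty."""
    if not rows:
        return 1.0  # an empty batch is treated as fully missing
    missing = sum(1 for row in rows if row.get(field) in (None, ""))
    return missing / len(rows)


def null_rate_spikes(rows: Sequence[dict], baseline: Dict[str, float],
                     factor: float = 2.0) -> List[str]:
    """Fields whose null rate exceeds `factor` times their baseline rate."""
    return [f for f, base in baseline.items()
            if null_rate(rows, f) > factor * base]


def is_price_outlier(history: Sequence[float], new_price: float,
                     sigmas: float = 3.0) -> bool:
    """True when new_price is more than `sigmas` std devs from the history mean."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu, sd = mean(history), stdev(history)
    if sd == 0:
        return new_price != mu
    return abs(new_price - mu) > sigmas * sd


batch = [
    {"price": 19.99, "promo": "10% off"},
    {"price": None, "promo": ""},
    {"price": None, "promo": ""},
    {"price": 21.50, "promo": ""},
]
baseline = {"price": 0.05, "promo": 0.60}  # illustrative historical null rates
print(null_rate_spikes(batch, baseline))               # ['price']
print(is_price_outlier([100, 101, 99, 100, 102], 10))  # True
```

In the example, the promo field is empty in most rows but stays within twice its baseline, so only the price field is flagged. Comparing against a per-field baseline avoids alerting on fields that are normally sparse.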

“Engineer aggregation like a payment system: assume upstream fields will break, version everything, and make failure visible. Silent drift is more expensive than downtime because it corrupts decisions.”

— Dmytro Naumenko, CTO at GroupBWT

Matching is probabilistic, so automation needs confidence thresholds and brakes

Wrong matches create automation-speed mistakes that look like “mysterious margin leaks.”

Treat matching as a probability score—not a checkbox.

Operational guardrails that hold up in production:

- Prefer GTIN/UPC/EAN where coverage is strong, but don’t assume it’s universal.

- Store match confidence and route low-confidence items to a review queue.

- Require an audit trail: match ID, confidence, source timestamp, and decision rule.

- Freeze automation when match-rate drops, null-rate spikes, or freshness breaches occur.

- Re-audit a “golden set” of verified pairs weekly to catch drift.
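The routing and golden-set guardrails above can be sketched as follows. I assume the golden set holds human-verified pairs labelled as true or false matches, each with the matcher's current confidence; the 0.98 threshold comes from the article, while the function names and sample pairs are hypothetical.

```python
from typing import List, Optional, Tuple

AUTO_THRESHOLD = 0.98  # automate only strictly above this confidence


def route_match(confidence: float, threshold: float = AUTO_THRESHOLD) -> str:
    """Send high-confidence matches to automation, everything else to review."""
    return "auto" if confidence > threshold else "review"


def golden_set_audit(pairs: List[Tuple[float, bool]],
                     threshold: float = AUTO_THRESHOLD) -> dict:
    """Precision and recall of the auto-approved set on verified pairs.

    Each pair is (matcher_confidence, is_true_match).
    """
    tp = sum(1 for c, truth in pairs if c > threshold and truth)
    fp = sum(1 for c, truth in pairs if c > threshold and not truth)
    fn = sum(1 for c, truth in pairs if c <= threshold and truth)
    precision: Optional[float] = tp / (tp + fp) if (tp + fp) else None
    recall: Optional[float] = tp / (tp + fn) if (tp + fn) else None
    return {"precision": precision, "recall": recall, "auto_approved": tp + fp}


# Illustrative golden set, not real audit data.
golden = [(0.995, True), (0.99, True), (0.985, False), (0.97, True), (0.60, False)]
report = golden_set_audit(golden)
print(route_match(0.97), report["auto_approved"])  # review 3
```

A falling precision on the golden set is a drift signal in its own right, and a reasonable trigger for freezing automation until the matcher is re-checked. Recall tells you how much work is landing in the review queue that could have been automated.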

Build vs buy should be decided by differentiation, not engineering ego

Most ecommerce data aggregation programs win with a hybrid path: prove value fast, then own the product-truth layer where differentiation compounds.

| Option | Best for | Where it breaks | What GroupBWT typically recommends |
| --- | --- | --- | --- |
| SaaS | Fast pilot in standard categories | Limited custom matching; shallow ERP/PIM integration; opaque QA | Pilot quickly, but keep your long-term “truth layer” portable |
| Custom build | Differentiated workflows + control | Engineering + on-call + source changes | Own matching + audit + guardrails; automate confidently at scale |
| Outsource ops | Wide coverage without a scraping team | Less control; dependency risk | Use an SLA-backed partner for collection, while you own the decision rules |

For more on collection mechanics and trade-offs, read ecommerce data scraping.

When this approach doesn’t fit, fix ownership and cost data first

If you can’t act, aggregation will only create better reports—not better outcomes.

Expect poor ROI when:

- You have no owner who can actually change price/promo/content.

- Your pricing system can’t deploy changes more than once a day.

- Your catalogue has no identifiers (GTIN/UPC/EAN) and no process for human review.

- Legal/compliance constraints prevent collecting the signals you need.

- You don’t have reliable cost / landed cost data to enforce margin floors with confidence.

In those cases, start by fixing process and tooling (ownership, PIM hygiene, pricing rules) before scaling collection.

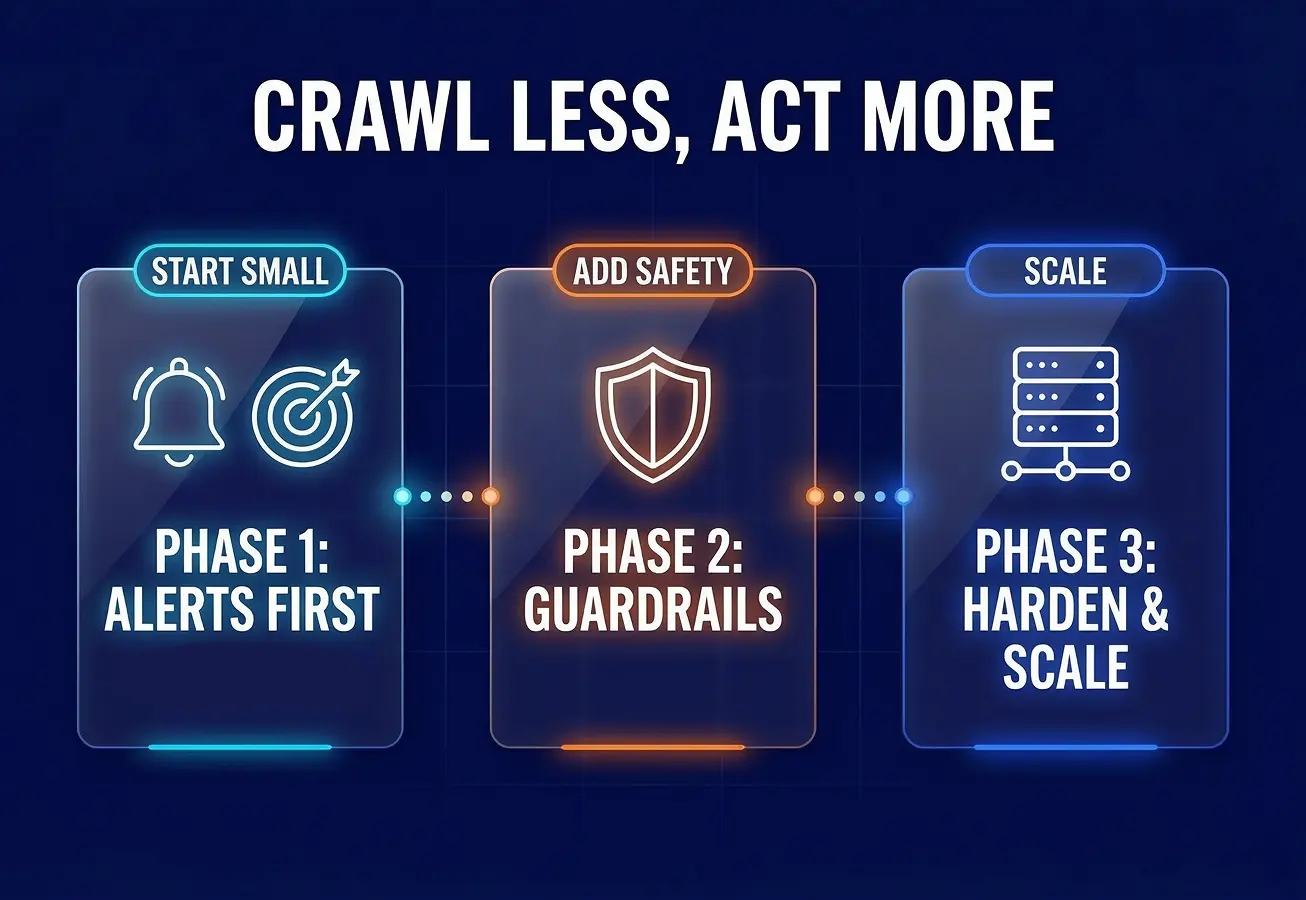

A 30–60–90 rollout proves value without overbuilding

The minimum viable system is one category + one workflow + one KPI.

Days 1–30: prove value safely

- Pick 1 category + 1 workflow (repricing exceptions or promo monitoring).

- Define an owner, KPI, and target latency (use the Latency Ledger).

- Implement collection → match → curated dataset + basic QA metrics (freshness, match-rate, null-rate).

- Ship alerts first, not automatic actions.

Days 31–60: integrate with guardrails

- Integrate curated feeds into pricing/PIM/ERP as a controlled input (feature flag + audit trail).

- Add guardrails: margin floor, max daily change, inventory-aware rules, and approval thresholds.

- Log every decision (“why this fired”) so teams can debug outcomes.

Days 61–90: harden, then scale what moves the KPI

- Expand SKUs/sources only where the KPI lift is measurable.

- Add freshness + parser-change monitoring to SLA dashboards.

- Run weekly rule reviews (adjust rules, not just code).

Case study: 50k SKUs, 85% faster price matching, +2.3% gross margin recovery

This is an anonymised, composite case based on common patterns we see across ecommerce engagements. Individual results vary by category, margin structure, channel mix, and operational readiness.

Client profile

- Consumer Electronics retailer

- ~50,000 SKUs across 6 categories

- 3 primary marketplaces monitored + 12 DTC competitors

- Prior process: daily exports + spreadsheets + manual approvals

Starting point (baseline)

- Price/promo detection to action: 24–48 hours

- Promo mismatch detection: ~5–7 days (often found after promo ended)

- Exception queue: unowned, >1,200 items/week, high false positives

Intervention

- Implemented daily promo snapshots + hourly top-seller price snapshots

- Added matching with confidence scoring; auto-actions only when confidence > 0.98

- Rolled out the 3‑Gate Safety Protocol (freshness → cost/margin → delta limits)

- Assigned one promo owner with a 24h triage SLA and evidence-rich alerts (URL + timestamp + rule “why”)

Measured outcomes (Q1)

- Price-matching lag reduced by ~85% (from 24–48h to ~2–6h on top SKUs). Note: this 2–6h latency covers the full cycle: hourly collection, automated data processing, and the mandatory safety gate/approval delay before the price update hits the storefront.

- Promo mismatch detection latency: ~5–7 days → <24 hours

- “Is this real?” escalations down ~40% after adding source evidence + audit trail

- Gross margin recovery: +2.3% GM in the monitored categories (primarily by avoiding late, unnecessary discounting)

What we did not automate (on purpose)

- Low-confidence matches (≤0.98)

- Categories with unreliable landed cost inputs

- Large price deltas beyond policy limits without approval

Compliance is a reliability requirement, not a legal footnote

If you can’t prove a compliant path to data, you can’t safely use it for automated commercial decisions.

This is not legal advice; involve counsel for jurisdiction-specific guidance.

Practical compliance checklist:

- Prefer official APIs when available (lower ToS and stability risk).

- Respect robots.txt under the Robots Exclusion Protocol (RFC 9309).

- Don’t bypass authentication, access controls, or anti-bot measures.

- Minimise personal data: reviews can contain usernames/personal data (GDPR/CCPA risk).

- Keep immutable logs for lineage: source, timestamp, parser version, match version, decision rule.

- Define retention and deletion policies for raw snapshots and derived datasets.

Primary sources to start from:

- RFC 9309 (IETF) for robots.txt

- GDPR: Regulation (EU) 2016/679

- CCPA/CPRA (if applicable)

- EU DSA: Regulation (EU) 2022/2065 (relevant in marketplace/platform contexts)

Conclusion: close the loop so market change becomes an internal action cycle

If you’re planning data aggregation for ecommerce, start with one workflow, one owner, and one SLA—then add gates and automation only where the KPI lift is measurable.

That’s how you cut decision latency without creating a bigger, faster margin leak.

Practical takeaway: launch checklist (copy/paste)

- 1 category + 1 workflow + 1 KPI selected

- Owner named + SLA written (freshness + incident response)

- Decision Latency Calculator completed with real numbers (use the mini version above)

- Match confidence scoring + exception queue defined

- Guardrails implemented (margin floors, max deltas, kill switch)

- Audit trail enabled end-to-end (source → match → rule → action)

- Weekly review cadence scheduled (rules, not just code)

GroupBWT helps teams design, build, and operate pipelines that stay stable when sources change—without overpromising accuracy or cutting compliance corners.

Copy the checklist and run the mini calculator first. If you want, share your inputs (category, current lag, margin floor, and exception volume), and we’ll sanity-check whether your current plan is safe to automate—and what should stay human-in-the-loop.

FAQ

- How much revenue am I losing to the “decision latency tax” each month?

  Estimate it using impacted orders/day × contribution margin/order × (hours late / 24) × events/month, then refine with elasticity once your data is clean.

- What guardrails are non-negotiable for safely automating pricing?

  Freshness gates, match-confidence thresholds, hard margin floors, max daily deltas, inventory-aware brakes, a kill switch, and an audit trail.

- Should I build a custom pipeline or invest in a SaaS solution for ecommerce data aggregation?

  Use SaaS to prove value fast, but plan to own the product-truth layer if matching, integrations, and auditability are strategic.

- What are the 2026 legal and compliance benchmarks for retail data collection?

  Prefer official APIs, respect RFC 9309 (robots.txt), don’t bypass access controls, treat reviews/usernames as personal data where applicable (GDPR/CCPA), and keep immutable lineage logs.

- How do I get high-precision product matching without claiming “99% accuracy”?

  Separate precision from recall and automate only above a high confidence threshold (e.g., >0.98); route everything else to a review queue and audit drift with a golden set.