According to BCG, more than 80% of companies are deploying Gen AI in their engineering processes. Yet only systemic, end-to-end integration of AI development solutions across the entire lifecycle, from architecture and data pipelines to testing, deployment, and governance, drives real transformation.

We’ve validated this thesis in dozens of industries, including insurance, travel tech, fintech, and e-commerce. In this article, we show how systemic integration works, where it falters, and what it takes to succeed, based on our practice as a generative AI development company.

Why Software Engineering for AI-Enabled Systems Matters

AI-enabled engineering means that algorithms take part in decisions that used to require senior engineers, and that they identify security vulnerabilities before code is released.

Just as importantly, it means building applications specifically designed to host, serve, and scale AI models in production.

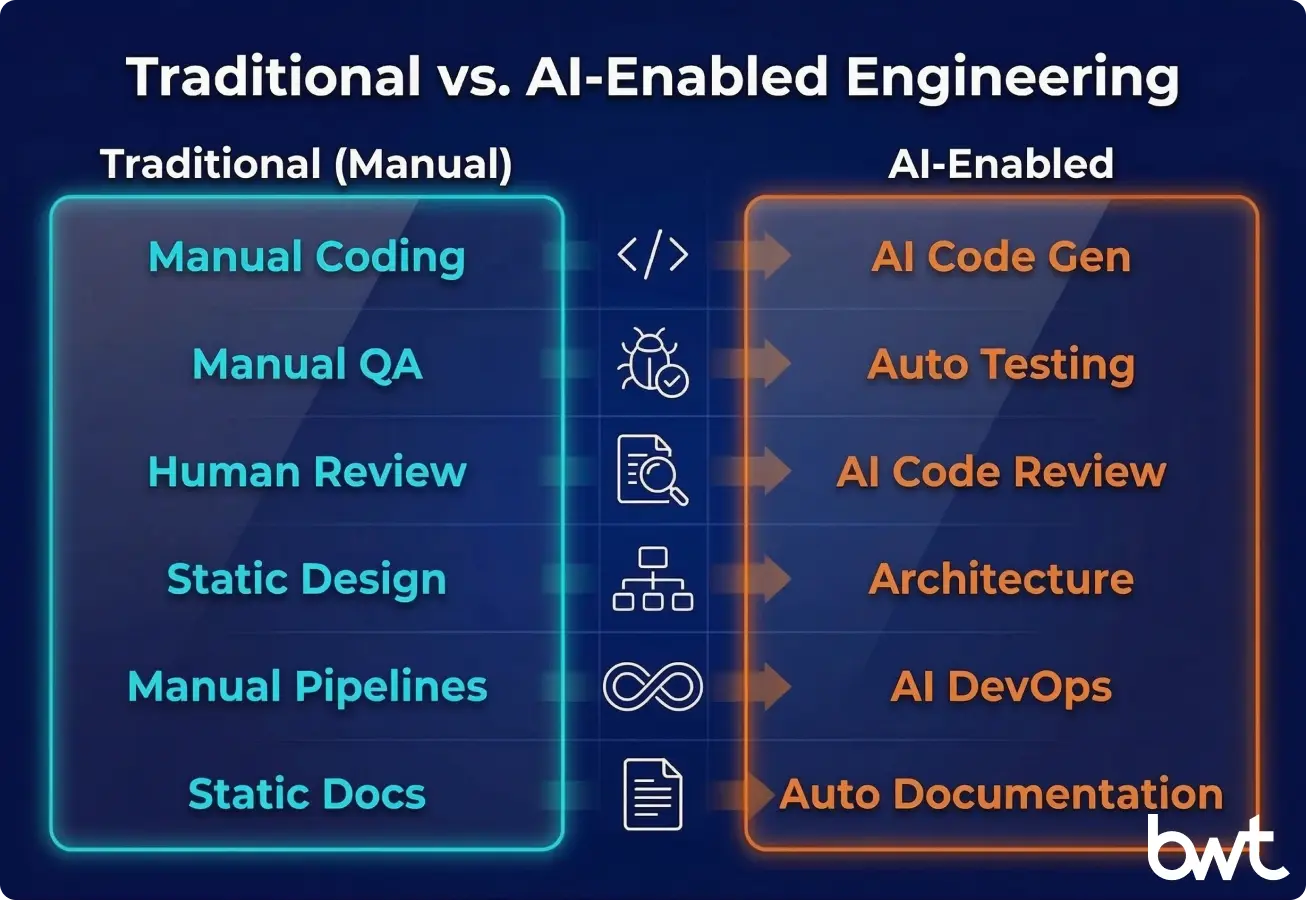

Traditional software development follows a sequential chain: requirements (what the software should do), design, code, test, deploy (make live).

In this process, each stage depends on human decision-making and manual review. The AI-enabled approach doesn’t remove people; it makes every stage smarter.

From Traditional Development to AI-Enabled Software Engineering

| Aspect | Traditional Development | AI Engineering |
| --- | --- | --- |
| Code generation | Developer writes from scratch or copies boilerplate; ~40 lines/hour for complex logic | LLM drafts initial code from a prompt; developer reviews and refines, cutting first-pass time by 30–50% |
| Testing | QA writes test cases manually; coverage gaps are found late in production | AI generates test suites from code diffs, flags untested edge cases before merge |
| Code review | 2–3 peers review asynchronously; turnaround measured in hours or days | AI pre-review catches style violations, security issues, and logic errors in minutes; human review focuses on architecture |
| Architecture | Senior architect makes decisions based on experience and whiteboard sessions | AI scans codebase patterns, suggests components, and flags anti-patterns; the architect decides faster with data |
| DevOps | Static CI/CD scripts; failures diagnosed manually from logs | Self-healing pipelines with predictive failure detection; auto-rollback on anomaly |
| Documentation | Written after the fact (if at all); outdated within weeks | Auto-generated from code context and PR descriptions; updated on every commit |

Organizations embedding AI throughout product development see significant productivity and quality gains—but only with systematic integration, not isolated tool adoption.

AI-Enabled Engineering Transformation at Scale

In any such project, tool-only efforts fall short because true transformation touches everything from culture to code.

Why Tool Rollouts Fail — and What Works Instead

For one trusted team member on an enterprise data migration, watching migration logs catch issues he used to hunt for by hand was the turning point. The data engineering services migration succeeded only because we paired the technical work (automated data reconciliation between old and new systems) with team retraining and new workflows. The technology itself was table stakes; change management was the real differentiator, and it took hold once trusted team members visibly shifted their perspective.

“I’ve seen teams with perfectly good CI/CD pipelines reject AI-generated code — not because the output was wrong, but because nobody rebuilt their review process to handle it. You can’t push AI outputs through a workflow designed for a three-person peer-review cycle. The process has to change before the tooling pays off.”

— Oleg Boyko, COO at GroupBWT

AI Governance as a Daily Practice

For a major European insurance group, we built a market intelligence system where AI governance wasn’t an afterthought — it was a core feature. The dashboard tracked LLM and search API consumption in real time, giving teams visibility into what the system was doing and what it cost — a textbook example of data governance consulting services applied to AI workloads. The LLM pipeline used a two-tier filtering approach: a lightweight prompt scored each headline’s relevance on a 10-point scale, and only articles with a score above 7 were moved to full analysis. This approach cut token costs by 40% while delivering analytics to 2,000+ employees across the organization.

This case directly illustrates our core thesis: it wasn’t the LLM that created the value — it was the full system around it. Filtering logic, cost monitoring, governance dashboards, and a delivery pipeline that reached thousands of end users. The model was one component of many.
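The two-tier filter described above can be sketched roughly as follows. This is a minimal illustration, not the production system: the names `two_tier_filter`, `keyword_score`, and `full_analysis` are hypothetical, the two LLM calls are replaced by offline stand-ins, and the 1 to 10 range is an assumed reading of the "10-point scale". Only the threshold (scores above 7 proceed) comes from the text.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

RELEVANCE_THRESHOLD = 7  # per the text: only scores above 7 reach full analysis


@dataclass
class Article:
    headline: str
    body: str


def two_tier_filter(
    articles: Iterable[Article],
    score_headline: Callable[[str], int],
    analyze: Callable[[Article], dict],
    threshold: int = RELEVANCE_THRESHOLD,
):
    """Score every headline cheaply; run the expensive analysis only on survivors."""
    analyzed = []
    usage = {"scored": 0, "analyzed": 0}  # counters a cost dashboard could read
    for article in articles:
        score = score_headline(article.headline)
        usage["scored"] += 1
        if not 1 <= score <= 10:  # assumed scale bounds
            raise ValueError(f"score {score} is outside the 10-point scale")
        if score > threshold:
            analyzed.append(analyze(article))
            usage["analyzed"] += 1
    return analyzed, usage


# Hypothetical stand-ins for the two LLM calls, so the sketch runs offline.
def keyword_score(headline: str) -> int:
    return 9 if "insurance" in headline.lower() else 3


def full_analysis(article: Article) -> dict:
    return {"headline": article.headline, "length": len(article.body)}


articles = [
    Article("Insurance regulator updates solvency rules", "long body text"),
    Article("Local bakery wins award", "short"),
]
results, usage = two_tier_filter(articles, keyword_score, full_analysis)
```

The `usage` counters are the piece that connects filtering to governance: the ratio of analyzed to scored items is one simple signal a consumption dashboard could surface.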

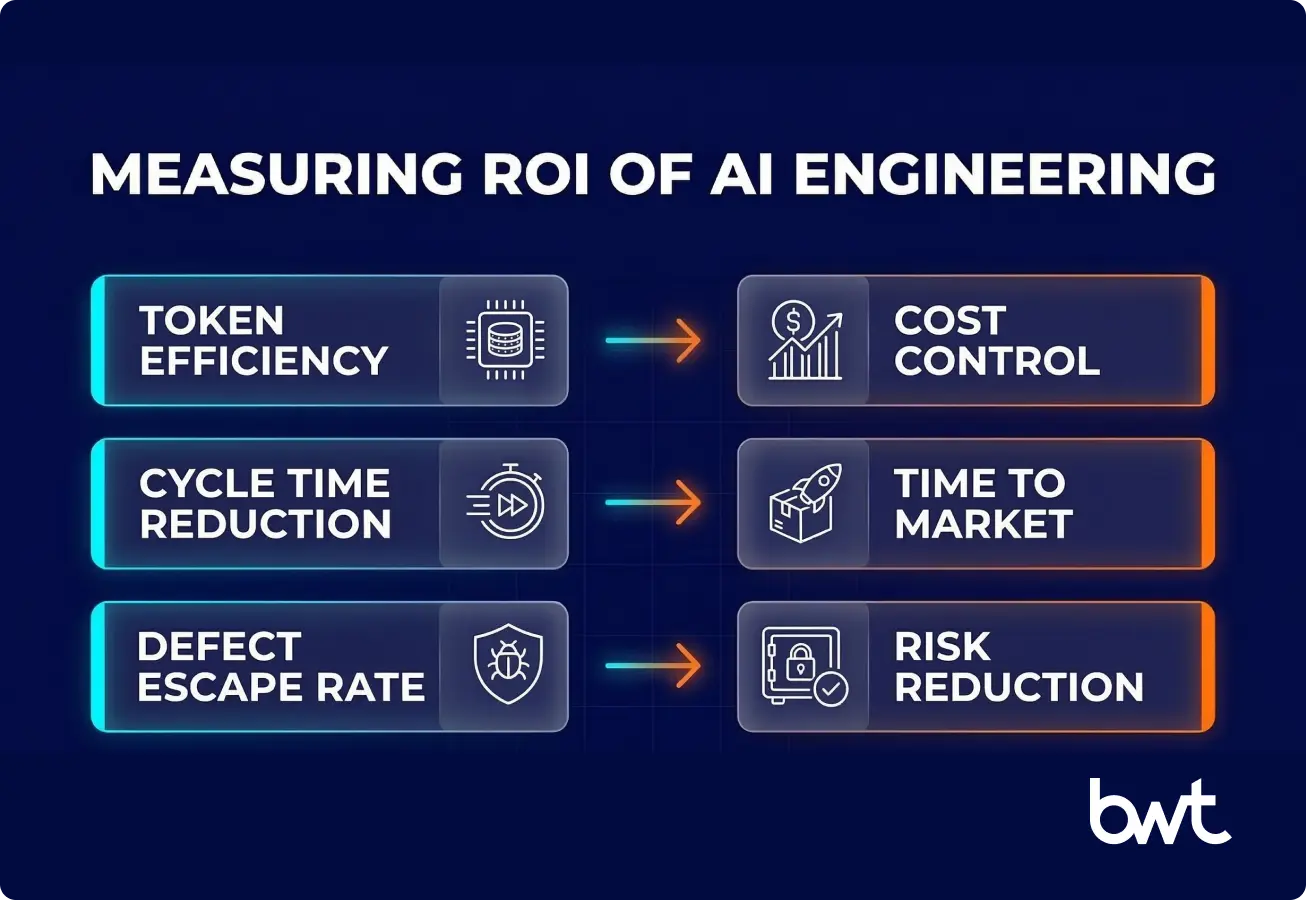

Measuring ROI of AI-Accelerated Engineering

Roughly 50% of CIOs cannot quantify GenAI’s impact on engineering. Without data analytics services that make that impact visible, these budgets are the first to be cut. To bridge the gap between technical progress and executive value, we anchor each engineering KPI to a business outcome that matters to CFOs. For example, pairing ‘defect escape rate’ with the average cost of a critical production bug translates quality improvements directly into dollar savings. One example: at a recent client, reducing escaped defects by 12 per quarter cut estimated rework costs by $180,000 annually, based on the organization’s cost-per-bug benchmarks.
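The arithmetic behind that example can be made explicit. The sketch below uses only the two figures quoted above (12 fewer escaped defects per quarter, $180,000 saved per year); the per-defect cost it derives is implied by those figures and is not a number stated by the client. Function names are illustrative.

```python
QUARTERS_PER_YEAR = 4


def annual_defects_avoided(avoided_per_quarter: int) -> int:
    """Scale a quarterly reduction in escaped defects to a full year."""
    return avoided_per_quarter * QUARTERS_PER_YEAR


def implied_cost_per_defect(annual_savings_usd: float, avoided_per_quarter: int) -> float:
    """Back out the cost-per-bug benchmark implied by a savings claim."""
    return annual_savings_usd / annual_defects_avoided(avoided_per_quarter)


def annual_savings(avoided_per_quarter: int, cost_per_defect_usd: float) -> float:
    """Forward direction: turn a defect reduction into a dollar figure."""
    return annual_defects_avoided(avoided_per_quarter) * cost_per_defect_usd


cost = implied_cost_per_defect(180_000, 12)
print(cost)  # 3750.0, i.e. 180,000 / (12 * 4)
```

Running the calculation in both directions is a quick sanity check before a figure goes into a CFO-facing report.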

Four metrics we track across every engagement:

- Token cost efficiency: cost per useful output (a result that actually solves the task) compared with total API spend. This reveals whether your models are working or just burning credits.

- Cycle time reduction: the time needed to finish equivalent tasks before and after AI implementation. This measures true speedup, not just a perception of faster delivery.

- Defect escape rate: bugs found in production compared with those caught by automated QA before release. This tells you whether quality improvements are real or only appear to be.

- Developer satisfaction: surveys and retention data. Teams that feel augmented (not replaced) ship better code and stay longer.
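The three quantitative metrics in the list above can be computed from a handful of counters. The sketch below is one possible formulation under stated assumptions: the `PeriodStats` fields are hypothetical, escape rate is taken as production defects divided by all defects found, and the sample numbers are invented purely to exercise the functions.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    api_spend_usd: float
    useful_outputs: int        # outputs a reviewer accepted as solving the task
    median_cycle_hours: float  # time to finish a comparable task
    defects_in_production: int
    defects_caught_by_qa: int


def cost_per_useful_output(s: PeriodStats) -> float:
    if s.useful_outputs == 0:
        return float("inf")  # all spend, no value
    return s.api_spend_usd / s.useful_outputs


def cycle_time_reduction(before: PeriodStats, after: PeriodStats) -> float:
    """Fractional speedup on equivalent tasks (0.25 means 25% faster)."""
    return (before.median_cycle_hours - after.median_cycle_hours) / before.median_cycle_hours


def defect_escape_rate(s: PeriodStats) -> float:
    """Share of all detected defects that reached production."""
    total = s.defects_in_production + s.defects_caught_by_qa
    return s.defects_in_production / total if total else 0.0


# Illustrative numbers only, not client data.
before = PeriodStats(1000.0, 200, 40.0, 10, 30)
after = PeriodStats(1200.0, 400, 30.0, 5, 45)

report = {
    "cost_per_useful_output": cost_per_useful_output(after),
    "cycle_time_reduction": cycle_time_reduction(before, after),
    "escape_rate_before": defect_escape_rate(before),
    "escape_rate_after": defect_escape_rate(after),
}
```

Developer satisfaction is left out on purpose: it comes from surveys and retention data rather than pipeline counters.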

“Half of CIOs can’t quantify AI’s impact — and I’ve watched that uncertainty kill budgets. The fix isn’t more dashboards. It’s framing AI results in the language your CFO already uses: cost avoidance per release cycle, defect costs saved before production, and time-to-revenue for new features. If you can’t tie AI to a line item they already track, you’ll lose the budget to something they can measure.”

— Olesia Holovko, CMO at GroupBWT

Agentic AI-Enabled Product Engineering

Agentic AI acts proactively: it works with some autonomy instead of only responding to prompts. The system receives a goal, breaks it into subtasks, executes them, self-corrects on failure, and delivers results.

A standalone tool can’t do this. Only an engineered system with error-handling, retry logic, and isolation built into its architecture can.
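To make "error-handling, retry logic, and isolation" concrete, here is a minimal sketch of an executor for already-planned subtasks. It assumes the planning step (goal to subtasks) has happened elsewhere, treats a plain retry as the simplest form of self-correction, and uses hypothetical names throughout (`run_subtasks`, `SubtaskResult`, `make_flaky`).

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class SubtaskResult:
    name: str
    ok: bool = False
    attempts: int = 0
    output: Any = None
    error: str = ""


def run_subtasks(
    subtasks: List[Tuple[str, Callable[[], Any]]],
    max_attempts: int = 3,
) -> List[SubtaskResult]:
    """Run each subtask with retries; a failure is recorded, not propagated."""
    results = []
    for name, action in subtasks:
        result = SubtaskResult(name)
        for attempt in range(1, max_attempts + 1):
            result.attempts = attempt
            try:
                result.output = action()
                result.ok, result.error = True, ""
                break
            except Exception as exc:  # isolation: one bad subtask must not crash the run
                result.error = f"{type(exc).__name__}: {exc}"
        results.append(result)
    return results


def make_flaky(failures: int) -> Callable[[], str]:
    """Build an action that fails a fixed number of times, then succeeds."""
    state = {"left": failures}

    def action() -> str:
        if state["left"] > 0:
            state["left"] -= 1
            raise RuntimeError("transient failure")
        return "done"

    return action


def always_fails() -> str:
    raise ValueError("bad input")


results = run_subtasks([
    ("fetch", make_flaky(2)),
    ("parse", always_fails),
    ("report", lambda: "summary"),
])
```

In this sketch later subtasks still run after an earlier one fails. A real system would likely track dependencies between subtasks and skip or reroute the ones whose inputs are missing.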

AI-Enabled Engineering Assistants: Case Studies

The three cases below cover different stages of the software lifecycle, and each one shows that systemic integration matters more than any single tool.

AI-Powered NLP Modernization

Duplicate-detection accuracy jumped by 35% overnight after we delivered an upgrade to a sleep-tech e-commerce platform. We replaced a 3-year-old string-comparison system with semantic search powered by Natural Language Processing (NLP).

The old system matched reviews by fuzzy text similarity. The new one understands meaning, catching ‘masked’ duplicates that basic string matching missed, such as the same review posted in different languages. Cloud translation API integration was cost-optimized so the system can scale and expand to new markets without budget blowouts. This is intelligent engineering at its most practical: replacing a brittle legacy system with one that improves as data grows.
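The difference between the two matching strategies can be shown in a few lines. This is a toy sketch, not the platform's implementation: `difflib.SequenceMatcher` stands in for the legacy fuzzy matcher, the vectors in `TOY_EMBEDDINGS` are hand-made placeholders where a real system would call an embedding model, and the 0.9 threshold is an arbitrary assumption.

```python
import math
from difflib import SequenceMatcher


def string_similarity(a: str, b: str) -> float:
    """Character-level similarity, a stand-in for fuzzy string matching."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def cosine_similarity(u, v) -> float:
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def is_semantic_duplicate(a: str, b: str, embed, threshold: float = 0.9) -> bool:
    """Two reviews count as duplicates when their embeddings point the same way."""
    return cosine_similarity(embed(a), embed(b)) >= threshold


EN = "Great mattress, I sleep much better"
DE = "Tolle Matratze, ich schlafe viel besser"
OTHER = "Delivery took three weeks"

# Hand-made placeholder vectors; a real system would call an embedding model.
TOY_EMBEDDINGS = {
    EN: [0.90, 0.10, 0.40],
    DE: [0.88, 0.12, 0.41],
    OTHER: [0.10, 0.90, 0.20],
}


def toy_embed(text: str):
    return TOY_EMBEDDINGS[text]


cross_language_string_score = string_similarity(EN, DE)
cross_language_match = is_semantic_duplicate(EN, DE, toy_embed)
unrelated_match = is_semantic_duplicate(EN, OTHER, toy_embed)
```

The translated pair scores low on character overlap yet lands close together in embedding space, which is the property that lets a semantic matcher catch cross-language duplicates.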

Two-Stage LLM Pipeline for Cost-Efficient AI

For an AI travel discovery platform, we engineered an NLP/LLM pipeline that collects user-generated content from 9 sources and runs a two-stage classification. Stage one: a cheap filter discards irrelevant content (akin to a bouncer at the door). Stage two: only pre-qualified content is subjected to expensive categorization and sentiment analysis. The result: up to 60% savings on AI API costs. The pipeline tracks each piece of content through a state machine — every item has a status code showing exactly where it is in the processing chain. This engineering discipline for AI workloads is what separates a prototype from a production system.
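A state machine of the kind described above might look like the sketch below. The status names, the `ALLOWED` transition table, and the `process` helper are all hypothetical; the source only states that each item carries a status code showing where it sits in the processing chain.

```python
from enum import Enum


class Status(Enum):
    COLLECTED = "collected"
    REJECTED = "rejected"    # discarded by the cheap stage-one filter
    QUALIFIED = "qualified"  # passed stage one, awaiting expensive analysis
    ANALYZED = "analyzed"


# Legal transitions; anything else indicates a pipeline bug.
ALLOWED = {
    Status.COLLECTED: {Status.REJECTED, Status.QUALIFIED},
    Status.QUALIFIED: {Status.ANALYZED},
    Status.REJECTED: set(),
    Status.ANALYZED: set(),
}


class ContentItem:
    def __init__(self, item_id: str, text: str):
        self.item_id = item_id
        self.text = text
        self.status = Status.COLLECTED
        self.history = [Status.COLLECTED]

    def advance(self, new_status: Status) -> None:
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"{self.status.value} -> {new_status.value} is not allowed")
        self.status = new_status
        self.history.append(new_status)


def process(item: ContentItem, is_relevant, categorize):
    """Stage one decides cheaply; stage two runs only on qualified items."""
    if not is_relevant(item.text):
        item.advance(Status.REJECTED)
        return None
    item.advance(Status.QUALIFIED)
    result = categorize(item.text)
    item.advance(Status.ANALYZED)
    return result


# Offline stand-ins for the cheap filter and the expensive LLM call.
kept = ContentItem("r1", "The hotel pool was spotless")
dropped = ContentItem("r2", "Buy followers now")
kept_result = process(kept, lambda t: "hotel" in t, lambda t: {"sentiment": "positive"})
dropped_result = process(dropped, lambda t: "hotel" in t, lambda t: {"sentiment": "n/a"})
```

Because every transition is checked and recorded, a stuck or double-processed item shows up as an explicit error or an odd history rather than as silent extra API spend.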

AI-Assisted Validation at Telecom Scale

For a European telecom operator planning a €7 billion infrastructure rollout, the core engineering challenge was not the network hardware; it was the address data. Deploying fiber to 22 million locations means knowing exactly which buildings exist, where they are, and whether existing records are still accurate. A single bad address can send crews and equipment to the wrong street, racking up unexpected costs and damaging customer trust.

We built an AI-powered validation pipeline that cross-referenced multiple geospatial data sources, flagged inconsistencies that human reviewers would have missed, and prioritized deployment zones based on data certainty scores. The system detected thousands of duplicate and misclassified addresses, each one a potential trigger for costly deployment errors or negative headlines. The engineering challenge here was to build the validation logic, confidence scoring, and exception routing into a single pipeline, rather than just run a one-off model in a spreadsheet.
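Validation logic, confidence scoring, and exception routing in one pass can be sketched as below. This is a deliberately simple stand-in: certainty is assumed to be the share of sources that agree after basic normalization, the 0.75 cutoff is arbitrary, and the addresses are invented. The real pipeline's scoring method is not described in the source.

```python
from collections import Counter
from typing import Dict, List, Tuple


def normalize(address: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(address.lower().split())


def certainty_score(values: List[str]) -> Tuple[str, float]:
    """Return the majority value and the fraction of sources that agree with it."""
    if not values:
        raise ValueError("at least one source value is required")
    winner, votes = Counter(normalize(v) for v in values).most_common(1)[0]
    return winner, votes / len(values)


def route(addresses: Dict[str, List[str]], min_certainty: float = 0.75):
    """Split records into accepted ones and exceptions for human review."""
    accepted, exceptions = {}, {}
    for address_id, values in addresses.items():
        winner, score = certainty_score(values)
        target = accepted if score >= min_certainty else exceptions
        target[address_id] = (winner, score)
    return accepted, exceptions


# Invented records: one value per hypothetical data source.
records = {
    "a1": ["12 Main St", "12 main st", "12  Main St"],
    "a2": ["5 Oak Ave", "5 Oak Avenue", "7 Oak Ave"],
}
accepted, exceptions = route(records)
```

Routing by score is what lets deployment zones be prioritized by data certainty: high-certainty records proceed automatically, and reviewers only see the disagreements.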

Benefits of AI-Accelerated Engineering

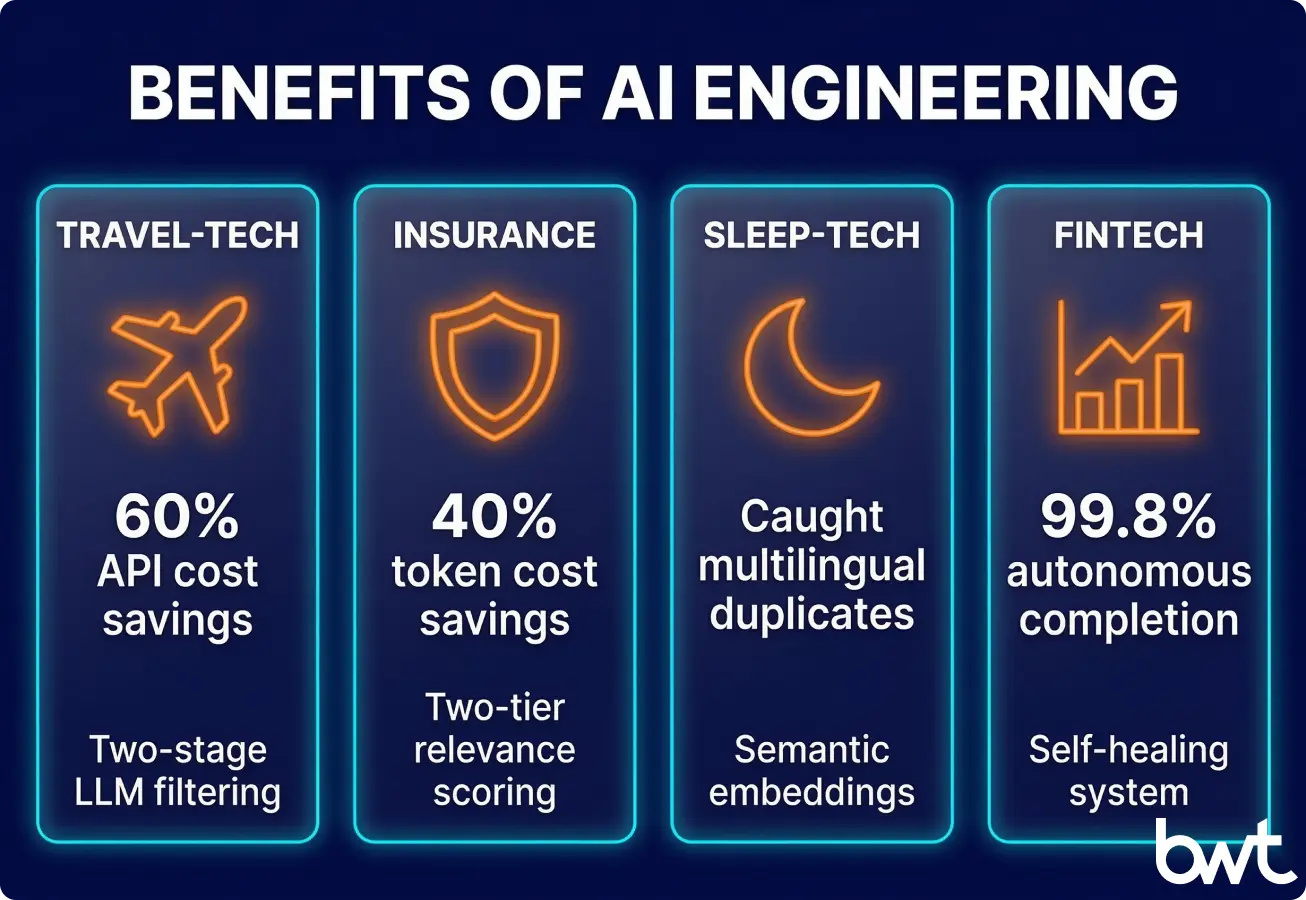

Concrete results from our portfolio:

| Project | Result | How |
| --- | --- | --- |
| Travel-tech platform | 60% savings on API costs | Two-stage LLM filtering: cheap pre-filter before expensive analysis |
| Insurance market intelligence | 40% savings on token costs | Two-tier relevance scoring with governance dashboard |
| Sleep-tech NLP modernization | Caught multilingual duplicates that the legacy system missed | Semantic embeddings replacing 3-year-old string matching |
| Financial data automation | 99.8% task completion, fully autonomous | Self-healing system with automatic failover and audit trails |
| Telecom infrastructure rollout | 22M addresses validated, costly deployment errors prevented | Geospatial cross-referencing with confidence scoring |
| Enterprise data migration | 12-year legacy SQL Server → Lakehouse, zero data loss | Automated reconciliation between old and new systems + team retraining |

Organizations on modern tech stacks with mature DevOps see GenAI velocity gains nearly 50% higher than those on legacy systems. AI-accelerated engineering delivers returns, but only when the models are embedded in well-engineered systems, not dropped into broken processes. In each case above, the model was a single component. The engineered system around it was the product.

Difficulties and Constraints of AI-Enabled Engineering

Six blockers show up in nearly every engagement: the “toil paradox” (AI automates the fun work but leaves testing and security as bottlenecks), stalled adoption, unclear ROI, accumulated tech debt, rapid tool churn, and plain organizational resistance. Three deserve closer attention.

Security and Intellectual Property Risks

Models trained on public code can introduce copyrighted patterns, leak proprietary data through prompts, or generate plausible but insecure code. One of our longest-running engagements, a 5-year identity verification platform spanning multiple major product releases, demonstrates what mature security looks like: ML at the core, but with security-by-design architecture and strict compliance boundaries from day one. There are no shortcuts here.

Model Dependability and Trust

Some 40% of engineers cite hallucinations and a lack of trust in model output among their top three challenges. Our two-tier filtering approach exists precisely for this reason: raw model output never reaches production decisions without a validation layer. The cheap first pass catches obvious errors; the second pass catches subtle ones. Neither runs without supervision in critical paths.

“The mistake most teams make is treating AI validation as a testing problem. It’s an architecture problem. You need observability, fallback modes, and state tracking baked into the pipeline itself — so when a model hallucinates at 2 a.m., the system degrades gracefully instead of shipping garbage downstream.”

— Alex Yudin, Head of Data Engineering at GroupBWT.

Automation Without Oversight

One of our fintech engagements is an instructive example. Replacing a legacy transaction core under strict financial regulation required 36 months of phased work — swapping critical systems while they remained in production. Automated orchestration handled the technical sequencing, but every regulatory checkpoint required human assessment. The lesson: in regulated environments, the question isn’t “can we automate this?” but “should we automate this?” The answer we arrived at: automate the sequencing while keeping humans in the loop at every compliance gate. The project shipped on time with zero regulatory findings — precisely because we didn’t try to automate the judgment calls.
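The pattern "automate the sequencing, keep humans at every compliance gate" can be expressed as a small orchestrator. The sketch below is illustrative only: the `Phase` structure, the `approve` callback, and the phase names are hypothetical and do not describe the client's system.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Phase:
    name: str
    run: Callable[[], None]
    compliance_gate: bool = False  # True means a human must sign off first


def run_phases(phases: List[Phase], approve: Callable[[str], bool]) -> List[str]:
    """Execute phases in order; stop at the first gate a human declines."""
    log = []
    for phase in phases:
        if phase.compliance_gate and not approve(phase.name):
            log.append(f"halted before {phase.name}: approval withheld")
            break
        phase.run()
        log.append(f"completed {phase.name}")
    return log


executed = []
phases = [
    Phase("replicate ledger data", lambda: executed.append("replicate")),
    Phase("switch payment routing", lambda: executed.append("switch"), compliance_gate=True),
    Phase("decommission legacy core", lambda: executed.append("decommission")),
]

# A reviewer who declines: automation stops cleanly at the gate.
log = run_phases(phases, approve=lambda name: False)
```

The orchestrator never decides whether a gate should pass. It only guarantees that nothing after the gate runs until someone accountable has said yes.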

Is AI-Enabled Engineering Right for Your Organization?

Before you jump to the checklist, pause for a quick self-check: Where does your team currently stall—on deployment frequency or on data quality? Identifying your real friction point will make the next steps more useful.

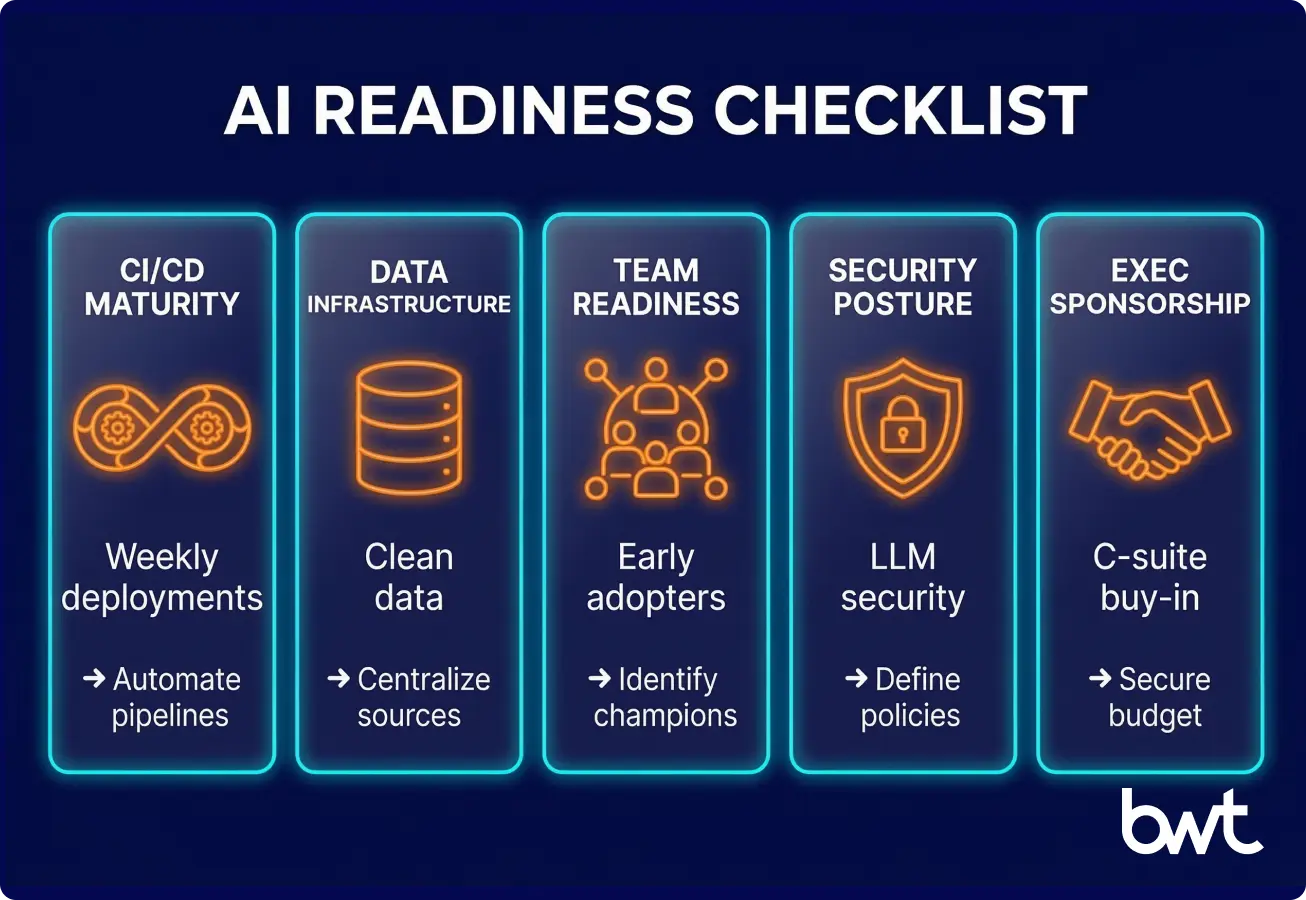

Readiness Checklist

- CI/CD maturity. AI tools accelerate existing pipelines; they don’t create them. Action: if you deploy less than weekly, fix that before investing in automation.

- Data infrastructure. Automation amplifies what exists, including problems in AI training data. Action: clean one data source fully and run a pilot workflow on it before committing to a larger rollout.

- Team readiness. Only 20% of enterprises hit 75%+ adoption. Action: find 3–5 early adopters and build the pilot around them. Adoption spreads peer-to-peer, not top-down.

- Security posture. LLM workloads introduce risks that traditional AppSec doesn’t cover — prompt injection, training data poisoning, insecure code generation, and model output manipulation (see OWASP LLM Top 10 for the full taxonomy). Action: run a dedicated risk assessment. Most security systems weren’t built for LLM-generated code.

- Executive sponsorship. Without C-suite backing, transformation stalls at the pilot phase. Action: frame the business case as cycle time reduction and defect cost avoidance — not “adoption metrics.”

Working with an Engineering Partner

Building AI-enabled software engineering capabilities in-house can feel daunting when you think of it as a single 12- to 18-month push. Instead, we guide clients through three progressive horizons. The first is pilot: launch a focused initiative with clear objectives and measurable impact. Next comes scale: expand the successful pilot to other teams and workflows. The last is embed: build AI adoption into the organization’s DNA, with continuous improvement and capability building at every level.

Partnering with AI consulting services accelerates this trajectory, but only if your partner staffs cross-departmental teams (AI/ML, data engineering, DevOps, QA, domain experts) rather than parachuting in isolated ML consultants. 85% of CIOs expect automation to reshape engineering roles, and 43% cite entirely new skill requirements. The organizations building these blended teams now gain a structural advantage. Our most impactful engagements follow this path across 2 to 5 years: starting with focused pilots that deliver real ROI, then scaling through a dedicated enablement function.

Summary

We opened with a gap: more than 80% of companies use Gen AI in engineering, yet most achieve only single-digit improvements. We argued that the gap exists because most organizations treat AI as an add-on tool rather than an engineering transformation. And we showed, through the cases above, that the systemic integration of AI across the entire lifecycle delivers 40–60% cost reductions, 99.8% autonomous task completion, and capabilities that legacy approaches simply can’t match.

A standalone rollout of coding tools yields roughly 30% of potential value. A holistic engineering transformation unlocks more than a 2x increase in output. The difference isn’t the model; it’s the engineering around it.

FAQ

- What is AI-enabled engineering, and how does it differ from traditional software development?

AI-enabled engineering means artificial intelligence isn’t just sitting inside your code editor autocompleting brackets. It’s woven into testing, deployment, monitoring, the whole thing. Traditional development? Every stage depends on a person doing the work by hand: architecture, code, review, release. Humans all the way down.

The AI-enabled approach hands off chunks of that grind to models. But (and this is the part people skip) you don’t need to jump straight into agentic workflows and multi-layer pipelines on day one. Start with a single API call and a well-crafted prompt. You’ll be surprised how far that gets you. The fancy patterns can wait until you actually hit a wall.

- What are the biggest benefits for engineering teams?

You ship faster. That’s the headline. Teams that do this carefully see improvements of 16–30% in time-to-market. Code quality goes up, too, because even a basic LLM check catches bugs that human eyes miss — especially at 3 AM when everybody’s running on caffeine and stubbornness.

Now, about money. Yes, you can save a lot. But you don’t necessarily need a two-tier model filtering setup to get there. One model works fine for starters. Sure, your token bill will be higher — but you’ll save dozens of hours not maintaining request routing logic between different APIs. Sometimes simplicity is the optimization. Funny how that works.

Teams actually enjoy their jobs more when automation handles the repetitive stuff. That’s not a footnote — it matters.

- How does the code delivery pipeline change?

The textbook “agentic” approach goes like this: the system receives a goal, breaks it into subtasks, executes them, checks its own work, and fixes mistakes. Sounds fantastic for a conference talk. In practice? It’s a black box that’s brutal to debug and even harder to explain to a client who just wants to know why their feature is late.

The simpler alternative: a linear script with clearly defined steps. The model handles one specific operation — generating code, running a refactor, writing a test — and a human decides what happens next. Human-in-the-loop instead of full autonomy. Predictable, controllable, explainable.

Could you build a system with 99.8% autonomous task completion? Probably. But ask yourself honestly: do you need that right now, at this stage of your project?

- What about security?

The risks aren’t theoretical. The OWASP LLM Top 10 lays them out pretty clearly: prompt injection, training data poisoning, sensitive information leaking through prompts — teams deal with this stuff daily.

Generated code that looks perfectly fine, passes all syntax checks… and fails silently in production. Or worse — it runs, but does something subtly wrong. That’s the kind of bug that eats your weekend.

Automated validation layers are great in theory. But if you’re a small team, start with manual code review. I mean it. Every single line the model produces should pass through a developer’s actual eyes. It’s slower, obviously. But you’ll know exactly what’s going into your product. Add automated checks later, once the volume of generated code exceeds what your team can review by hand.

- What’s the fastest way to prove ROI to leadership?

Keep it dead simple. Pick one workflow — just one — where you have definite metrics: task execution time, defect count, cost per sprint. Run a 4–6 week pilot with a small team.

Track everything from day one. Hours saved, defects caught earlier, and token costs. Then show your CFO the numbers in language they already think in: “we saved X hours per release” or “cost per defect dropped by Y%.”

One concrete pilot with hard data beats a 50-slide strategy deck every single time. And believe me — I’ve sat through enough of those decks to know.