Likes and retweets confirm that something happened, but the reason behind the engagement stays hidden. Sentiment analysis on social media reads the emotional tone in comments, reviews, and public posts, then converts that tone into a signal that teams can act on.

Brands that actively monitor sentiment tend to catch reputational threats significantly earlier than those relying on standard analytics alone. Across 15+ sentiment and NLP deployments in industries from cosmetics to financial services, GroupBWT has built the data pipelines, infrastructure, and classification systems that power web scraping for sentiment analysis in production. This guide distills that experience into a practical playbook.

Why Sentiment Matters for Brands and Businesses

Organizations that perform social media sentiment analysis report measurably higher customer retention, and their product teams iterate based on actual user feedback rather than assumptions. Early detection of reputational threats follows as a natural consequence. But what does this look like in practice? The following case illustrates the difference between having sentiment data and not having it.

Problem → Solution → Result: A European cosmetics brand needed visibility into customer reactions across 13+ retail partners. Manual review tracking took weeks. GroupBWT designed a customer data platform that pulled reviews from third-party services alongside the brand’s own properties. Each review was matched to the correct product through GTIN/EAN codes, and Net Sentiment Score per SKU served as the primary KPI — when that score dropped below a defined threshold, the system flagged it for immediate review. Messaging adjustments happened before negative trends could solidify.

Consider a post that generates 10,000 interactions. Those interactions could represent a strong endorsement or a wave of frustration. Engagement volume, on its own, cannot make that distinction.

How Sentiment Analysis Differs from Social Media Analytics

| Dimension | Social Media Analytics | Sentiment Analysis |
| --- | --- | --- |
| What it measures | Impressions, reach, clicks, share counts | Emotional tone behind each interaction (positive / negative / neutral) |
| What it answers | “What happened?” | “How do people feel about what happened?” |
| Spike in mentions | Shows the spike | Reveals whether the spike is excitement, confusion, or backlash |
| Decision impact | Informs channel strategy | Informs crisis response, product changes, messaging |

Sentiment analysis gives social media teams access to the emotional layer that engagement metrics miss. As platforms grow noisier, mention volume becomes a less reliable indicator than emotional direction.

How Social Media Sentiment Analysis Works

Three components power every sentiment system: data collection, text preprocessing, and classification. Effective sentiment analysis of social media data depends on getting each of these stages right. The section below covers the technical foundation of each component. The step-by-step implementation guide follows.

Data Collection from Social Platforms

APIs — Most platforms offer programmatic access. Reddit provides OAuth-based endpoints for traversing posts and comments. X (formerly Twitter) has tiered API access with persistent rate limits. Third-party review aggregation APIs are used to pull product ratings from retail networks, sometimes across dozens of locales simultaneously.

Technical note: Each platform demands its own API integration work. OAuth authentication, pagination logic, rate-limit management — none of this is standardized, so teams should budget time for per-platform implementation.
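The per-platform work described in the note usually reduces to the same loop: follow a pagination cursor and back off when the platform pushes back. The sketch below is a minimal illustration under stated assumptions, not any platform's real client. `fetch_page` is a hypothetical callable you would write per platform (it returns a list of items plus the next cursor, or `None` when done), and `RateLimited` is a hypothetical exception standing in for a rate-limit response.

```python
import time
from typing import Callable, Iterator, List, Optional, Tuple


class RateLimited(Exception):
    """Hypothetical signal that the platform rejected the call (rate limit hit)."""


Page = Tuple[List[dict], Optional[str]]


def collect_all(
    fetch_page: Callable[[Optional[str]], Page],
    max_retries: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[dict]:
    """Yield every item across pages, retrying rate-limited calls with backoff."""
    cursor: Optional[str] = None
    while True:
        for attempt in range(max_retries + 1):
            try:
                items, cursor = fetch_page(cursor)
                break
            except RateLimited:
                if attempt == max_retries:
                    raise  # give up after the last allowed retry
                sleep(base_delay * 2 ** attempt)  # exponential backoff
        yield from items
        if cursor is None:  # no further pages
            return
```

Passing `sleep` as a parameter keeps the function testable without real waiting; only `fetch_page` changes from one platform to the next.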

Web scraping — When APIs fall short (and they often do), scraping fills the gap. For a global management consultancy, GroupBWT scraped data from social media, one of the most reliable methods for complete data coverage without enterprise-tier API access. At peak cycles, this infrastructure processed hundreds of thousands of posts per month.

“APIs are never the whole story. Every platform we’ve integrated — Reddit, X, Trustpilot, regional review sites — eventually hits a wall: rate limits tighten, endpoints deprecate, data fields vanish between versions. We’ve learned to run scraping and API access side by side from the start. That way, when one channel goes sideways — and it will — the pipeline doesn’t go down with it.”

— Alex Yudin, Head of Data Engineering, GroupBWT

Social listening tools — Brandwatch, Sprout Social, and Hootsuite Insights bundle monitoring, processing, and visualization of brand data into a single interface. These work well for teams that need results quickly without custom infrastructure. Scale, however, changes the equation: when GroupBWT built a sports engagement index spanning 200+ accounts across 15 countries, the project required web scraping infrastructure that went beyond what off-the-shelf tools could handle.

Text Preprocessing and Cleaning

A typical social media post might contain misspellings, slang, two or three emojis, a handful of hashtags, and words from more than one language. Classification models cannot process text that messy in its raw state. The preprocessing stage addresses this through tokenization (splitting text into individual words or phrases), removal of stop words (common filler words like “the” and “is”), and normalization of abbreviations and casing so that “GREAT” and “great” register the same way.
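The three preprocessing steps can be sketched in a few lines of standard-library Python. This is a simplified illustration: the stop-word set and the abbreviation map are tiny hypothetical samples, and the regex tokenizer silently drops emoji, which a production pipeline would map rather than discard.

```python
import re

STOP_WORDS = {"the", "is", "a", "an", "and", "to", "of"}  # illustrative subset only
ABBREVIATIONS = {"gr8": "great", "thx": "thanks", "u": "you"}  # hypothetical mapping

TOKEN_RE = re.compile(r"#?\w+")  # words, optionally prefixed by a hashtag sign


def preprocess(text: str) -> list[str]:
    """Lowercase, tokenize, expand abbreviations, then drop stop words."""
    tokens = TOKEN_RE.findall(text.lower())  # casing normalization + tokenization
    tokens = [ABBREVIATIONS.get(t, t) for t in tokens]  # abbreviation normalization
    return [t for t in tokens if t not in STOP_WORDS]  # stop-word removal


print(preprocess("The battery is GREAT, thx!"))
# ['battery', 'great', 'thanks']
```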

In practice, this gets complicated when languages mix. One GroupBWT pipeline for multilingual product reviews followed the sequence: tokenization → language detection → translation → vectorization. The language detection step proved critical — you cannot apply a monolingual English model to German text and expect reliable results.

A high-impact tip: filtering at the collection stage saves significant resources downstream. In one engagement for a top-four consulting firm, pre-collection filters reduced storage by approximately 40%.

Sentiment Classification Methods

Three broad approaches, each with different tradeoffs:

Rule-based — Predefined dictionaries of positive/negative words. Fast and transparent, but breaks under sarcasm and domain-specific language.

Machine learning + LLM hybrids — In one GroupBWT project analyzing 150K+ company profiles on a major B2B software review platform, we deployed a two-stage architecture: a high-speed relevance classifier filtered incoming data first, then an LLM analyzed only the records that passed the gate. Result: over 90% accuracy at 5–10x lower processing cost.

Transformer and LLM models — DistilBERT (explained in Step 3) handles high-volume classification at a fraction of BERT’s compute cost. For non-English sentiment, language-specific models — among the established options, BETO for Spanish, chinese-bert-wwm for Mandarin, AraBERT for Arabic — outperform the translate-then-classify shortcut. In GroupBWT’s internal testing, generative LLMs like GPT-4o, Claude, and Gemini showed stronger results on sarcasm and implied sentiment, though accuracy varies by domain. Because LLM cost per query is considerably higher, most production pipelines route straightforward classification through transformer models and reserve LLMs for ambiguous cases.

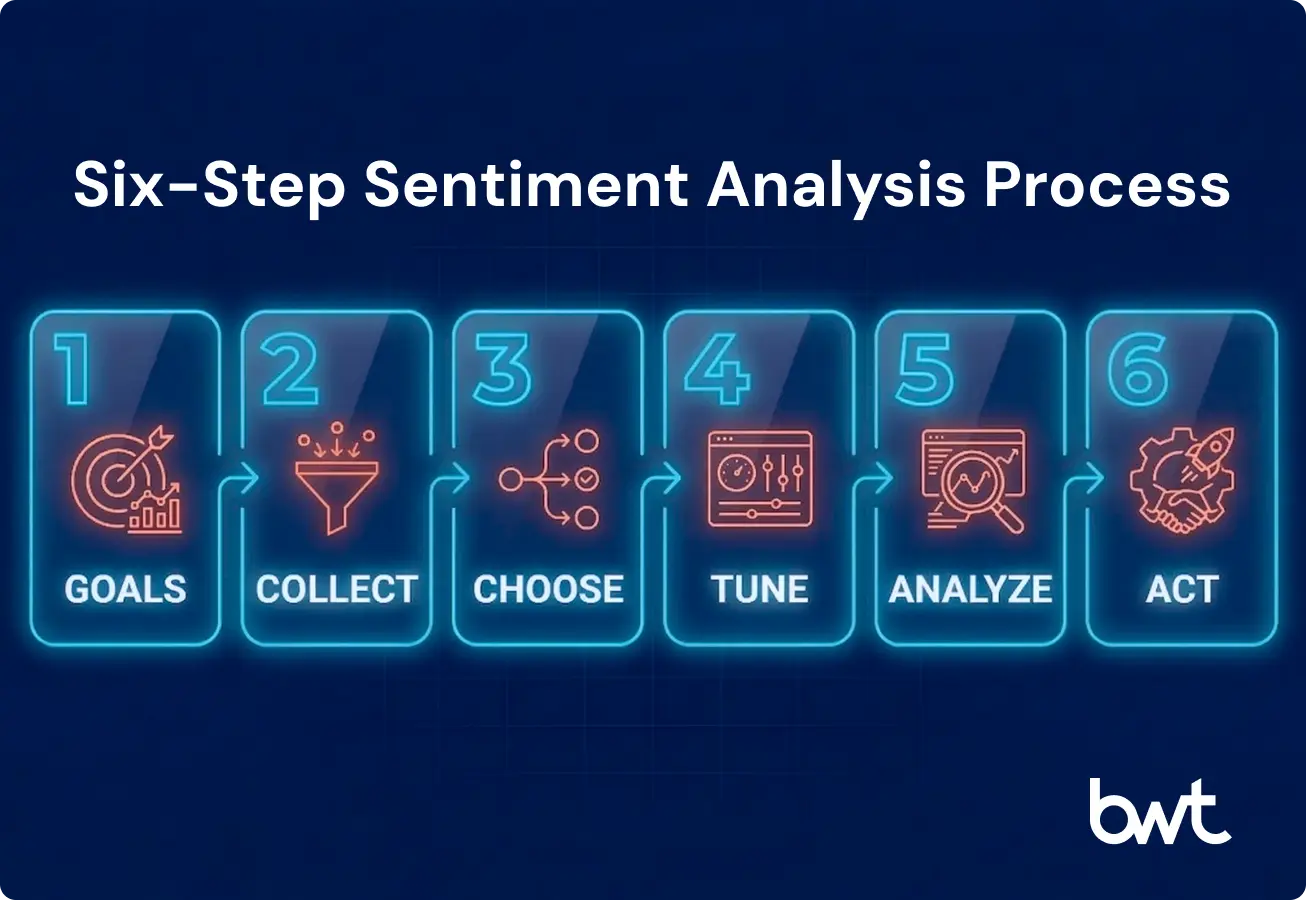

How to Do Social Media Sentiment Analysis: Step by Step

Each step below draws on production pipeline architecture from GroupBWT engagements.

Step 1: Define Business Goals and KPIs

Before touching any data, scope the objective. Are you tracking brand perception after a campaign? Monitoring product feedback across markets? Detecting early signs of a PR crisis?

KPIs should tie directly to business outcomes. Net Sentiment Score, calculated as positive mentions minus negative mentions, divided by total mentions and expressed as a percentage, gives a single trackable number. Sentiment trends over time and sentiment segmented by product or region add depth. Vague goals like “understand our audience” do not give engineering teams enough to build against.
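As a worked example of the KPI, the sketch below computes Net Sentiment Score from a list of per-mention labels and checks it against an alert threshold. The label names and the threshold value are assumptions chosen for illustration; the formula is (positive minus negative) over total mentions, as a percentage.

```python
from collections import Counter


def net_sentiment_score(labels: list[str]) -> float:
    """NSS = (positive - negative) / total mentions, as a percentage."""
    if not labels:
        raise ValueError("no mentions to score")
    counts = Counter(labels)
    return 100.0 * (counts["positive"] - counts["negative"]) / len(labels)


def needs_review(labels: list[str], threshold: float) -> bool:
    """Flag the entity (for example, one SKU) when NSS falls below the threshold."""
    return net_sentiment_score(labels) < threshold


mentions = ["positive"] * 6 + ["negative"] * 3 + ["neutral"]
print(net_sentiment_score(mentions))  # 30.0
print(needs_review(mentions, threshold=40.0))  # True
```

Neutral mentions count toward the denominator, so a flood of neutral posts pulls the score toward zero without changing its sign.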

“The most expensive mistake I’ve seen teams make isn’t choosing the wrong model — it’s starting data collection without a clear KPI framework. We had a client who spent four months building a multilingual scraping pipeline before realizing they needed sentiment segmented by product line, not by market. The architecture had to be partially rebuilt. Fifteen minutes of upfront alignment on what the dashboard needs to show would have saved eight weeks of rework.”

— Oleg Boyko, COO, GroupBWT

Step 2: Collect Relevant Social Media Data

Platform choice varies by market. In the Middle East, Snapchat and X dominate public conversation — not Instagram. In China, Weibo and Douyin replace the Western stack entirely.

As a social media sentiment analysis example: GroupBWT built a UGC collection pipeline for a Tripadvisor scraping project, pulling data from Reddit and X. Each platform uses different authentication, pagination, and data structures, so normalization had to happen before anything could merge into a shared format.

Technical note: Multi-platform projects benefit from normalizing all source data into a single schema before analysis begins. In GroupBWT’s experience, teams that skip this step encounter compounding format mismatches downstream, which can double the integration effort.
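One way to picture the single-schema step is a small shared record type plus one adapter per source. The sketch below is illustrative only: the `Post` type is hypothetical, and the raw payload shapes are simplified assumptions about what each source returns, not a full description of either platform's API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Post:
    """Hypothetical shared schema that every source is normalized into."""
    source: str
    post_id: str
    text: str
    created_at: datetime


def from_reddit(raw: dict) -> Post:
    # Assumed payload shape: {"id", "body", "created_utc" (epoch seconds)}
    created = datetime.fromtimestamp(raw["created_utc"], tz=timezone.utc)
    return Post("reddit", str(raw["id"]), raw["body"], created)


def from_x(raw: dict) -> Post:
    # Assumed payload shape: {"id", "text", "created_at" (ISO 8601 with offset)}
    created = datetime.fromisoformat(raw["created_at"])
    return Post("x", str(raw["id"]), raw["text"], created)


posts = [
    from_reddit({"id": "abc", "body": "Great hotel", "created_utc": 1714564800}),
    from_x({"id": 42, "text": "Terrible queue", "created_at": "2024-05-01T12:00:00+00:00"}),
]
```

Everything downstream (preprocessing, classification, dashboards) then depends only on `Post`, so adding a source means writing one more adapter.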

Step 3: Choose a Sentiment Analysis Method

The right approach depends on data volume, language requirements, and available budget.

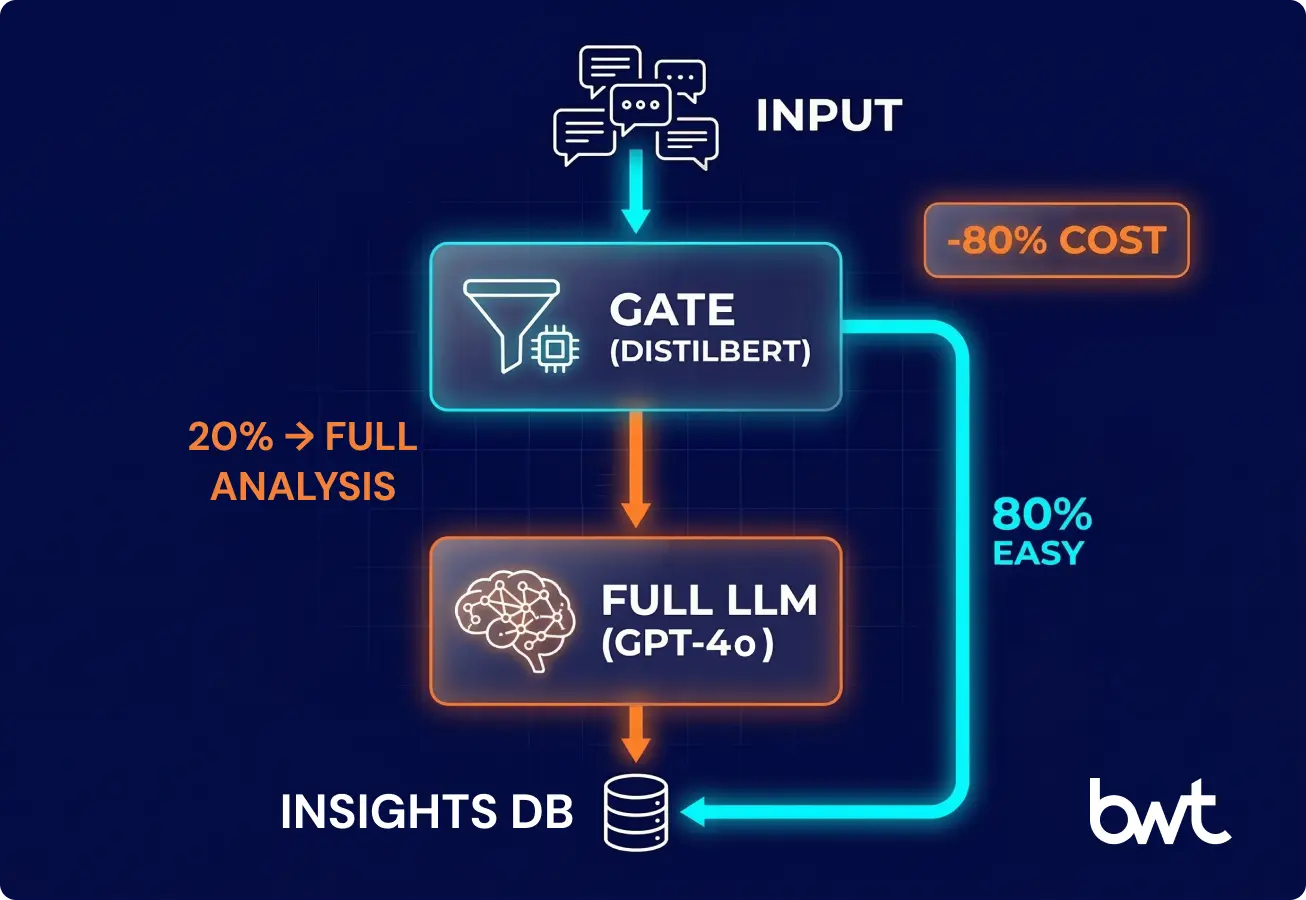

The GroupBWT Efficiency Standard — a two-stage classification architecture:

- Stage 1 — Relevance gate. DistilBERT or a comparable lightweight classifier screens each incoming post. DistilBERT is a compressed variant of the original BERT model, optimized to run faster at lower compute cost. Its job at this stage is binary: determine whether a post is relevant to the analysis scope or whether it is noise. Posts that fail the relevance check are discarded before they consume further processing resources.

- Stage 2 — Deep analysis. Posts that pass the gate, particularly ambiguous or high-value records, are routed to a full LLM such as GPT-4o. At this stage the model evaluates sarcasm, contextual tone, and implied sentiment that a simpler classifier would miss.

GroupBWT has deployed this two-stage architecture across multiple client engagements. In each case, NLP processing costs dropped by up to 80%, and classification accuracy remained at production-grade levels.
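The routing logic of the two stages can be sketched independently of any specific model. In the example below, both classifiers are passed in as plain callables; `keyword_gate` and `stub_llm` are hypothetical stand-ins for a DistilBERT-style relevance classifier and an LLM call, and "Acme" is an invented brand name.

```python
from typing import Callable, Iterable


def two_stage_classify(
    posts: Iterable[str],
    is_relevant: Callable[[str], bool],
    deep_classify: Callable[[str], str],
) -> dict:
    """Stage 1 discards noise; only surviving posts reach the costly stage 2."""
    labels = {}
    discarded = 0
    for post in posts:
        if not is_relevant(post):  # Stage 1: cheap relevance gate
            discarded += 1
            continue
        labels[post] = deep_classify(post)  # Stage 2: expensive deep analysis
    return {"labels": labels, "discarded": discarded}


# Hypothetical stand-ins so the sketch runs without any model or API key.
def keyword_gate(post: str) -> bool:
    return "acme" in post.lower()


def stub_llm(post: str) -> str:
    return "negative" if "broke" in post.lower() else "positive"


result = two_stage_classify(
    ["Acme update broke my app", "Love my new Acme phone", "Weather is nice today"],
    keyword_gate,
    stub_llm,
)
print(result["discarded"])  # 1
```

The cost saving comes from the ratio of discarded to total posts: every post the gate rejects is one expensive call that never happens.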

Step 4: Train or Configure Your Model

Pre-trained models deliver acceptable accuracy for general English content. Limitations surface when data includes domain-specific vocabulary, multiple languages, or niche platform conventions. At that point, customization is not an optimization — it is a prerequisite for reliable output.

Problem → Solution → Result: A sleep-technology brand’s reviews contained terms like “sleep latency,” “pressure relief,” and “edge support” that general-purpose models misclassified. GroupBWT fine-tuned the NLP models on domain-specific training data, after which the classifier handled specialized vocabulary accurately.

Two configuration principles:

- Lock down output format. When using LLMs for classification, constrain responses to a fixed JSON schema. This prevents the model from generating freeform text that breaks your database or downstream systems.

- Match model cost to task complexity. Use lightweight transformer models for clear-cut positive/negative cases. Reserve expensive LLM tokens for ambiguous or sarcastic content. This reduces inference costs by 3–5x.
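The first principle can be enforced with a strict parser between the LLM and the database. The sketch below assumes a hypothetical two-field schema (`label`, `confidence`); any response that is not valid JSON or deviates from the schema is rejected rather than stored.

```python
import json

ALLOWED_LABELS = {"positive", "negative", "neutral"}


def parse_llm_label(raw_response: str) -> dict:
    """Accept only {"label": <allowed>, "confidence": <0..1>}; reject anything else."""
    try:
        data = json.loads(raw_response)
    except json.JSONDecodeError as exc:
        raise ValueError("response is not valid JSON") from exc
    if not isinstance(data, dict) or set(data) != {"label", "confidence"}:
        raise ValueError("response does not match the expected fields")
    if data["label"] not in ALLOWED_LABELS:
        raise ValueError(f"unknown label: {data['label']!r}")
    conf = data["confidence"]
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(conf, bool) or not isinstance(conf, (int, float)) or not 0 <= conf <= 1:
        raise ValueError("confidence must be a number between 0 and 1")
    return {"label": data["label"], "confidence": float(conf)}


print(parse_llm_label('{"label": "negative", "confidence": 0.9}'))
# {'label': 'negative', 'confidence': 0.9}
```

A rejected response can be retried or routed to manual review; the point is that freeform text never reaches downstream systems.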

Step 5: Analyze and Interpret Results

A raw sentiment score in isolation has limited operational value. Running sentiment analysis on social media data at scale demands that scores be broken down into categories granular enough to inform specific business decisions.

For a city recommendation engine, our team categorized sentiment across five dimensions: gastronomy, transportation, safety, attractions, and events. A city could score positively on gastronomy and events while registering negative sentiment on transportation. If the system returned only one aggregate score per city, this kind of nuance would be lost, and the recommendations would suffer as a result.
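The effect of aggregating too early is easy to show with numbers. The sketch below averages sentiment per (entity, dimension) pair; the city name and scores are invented sample data, and the score range of -1 to 1 is an assumption.

```python
from collections import defaultdict


def sentiment_by_dimension(records):
    """records: iterable of (entity, dimension, score), score assumed in [-1, 1]."""
    buckets = defaultdict(list)
    for entity, dimension, score in records:
        buckets[(entity, dimension)].append(score)
    return {key: sum(scores) / len(scores) for key, scores in buckets.items()}


# Invented sample data for illustration.
records = [
    ("Lisbon", "gastronomy", 1.0),
    ("Lisbon", "gastronomy", 0.5),
    ("Lisbon", "transportation", -0.5),
    ("Lisbon", "transportation", -1.0),
]

print(sentiment_by_dimension(records))
# {('Lisbon', 'gastronomy'): 0.75, ('Lisbon', 'transportation'): -0.75}

overall = sum(score for _, _, score in records) / len(records)
print(overall)  # 0.0
```

The single aggregate lands at exactly zero, which reads as "no signal" even though both dimensions carry a strong one.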

Metabase, Tableau, and similar dashboard tools serve as the visualization layer on top of the pipeline — monitoring processing volumes, flagging failing stages, and surfacing anomalous results by source or region.

Step 6: Turn Insights into Action

Sentiment data that remains in a dashboard without triggering operational response generates no return on investment.

Problem → Solution → Result: In a tourism project, GroupBWT mapped sentiment clusters to customer journey stages: transportation, accommodation, entertainment, dining. Negative sentiment around transportation did not just flag a general problem — it pointed to the exact stage where the experience broke down and what operational fix was needed. That precision is the difference between data collection and business intelligence.

Advanced social media sentiment monitoring requires closed feedback loops: a sentiment drop triggers an alert, the alert initiates investigation, investigation leads to corrective action, and subsequent sentiment data measures whether the fix worked.

Tools for Social Media Sentiment Analysis

No-Code and SaaS Tools

For teams without data engineering resources, SaaS platforms offer the fastest path to sentiment analysis for social media:

| Tool | Best For | Key Strength |
| --- | --- | --- |
| Brandwatch | Enterprise social listening | Comprehensive platform coverage, AI-powered insights |
| Sprout Social | Mid-market social teams | Integrated publishing + analytics + sentiment |
| Hootsuite Insights | Multi-platform monitoring | Broad channel support, team collaboration |
| Talkwalker | Global brand monitoring | 150+ languages, image recognition |
| MonkeyLearn | Custom text analysis | No-code ML model builder |

These platforms handle collection through visualization in one interface, which is why non-technical teams gravitate toward them. The tradeoff is flexibility: SaaS tools constrain data export, model customization, and integration with external systems.

Python Libraries and NLP Frameworks

NLTK — The foundational NLP toolkit. Its VADER analyzer handles social media text well out of the box, including slang, emoji patterns, and capitalization. Best for prototyping.

spaCy — Production-grade NLP with fast tokenization and pre-trained multilingual models. Built for pipelines where speed matters.

Transformers (Hugging Face) — Thousands of pre-trained models including domain-specific variants for Arabic, Spanish, Mandarin, and more. This is where the most accurate models for social media sentiment analysis live: fine-tuned models trained on platform-specific data.

Choosing Your Stack: A Quick Comparison

| Feature | No-Code (SaaS) | Custom Python Pipeline | Enterprise AI Platform |
| --- | --- | --- | --- |
| Speed to Value | Days | Weeks–Months | Months |
| Flexibility | Low (templates) | High (full control) | High (modular) |
| Cost Structure | $100–$1K+/mo subscription | Engineering time (internal) | $50K–$150K+ project investment |
| Multilingual Support | Varies by vendor | Full (with effort) | Full (built-in) |
| Sarcasm / Nuance | Basic | High (via LLM routing) | Highest |
| Best For | Marketing teams | Data engineers | Global corporations |

Enterprise AI Platforms

Organizations processing reviews from 30+ locales or managing 300,000+ records per collection cycle require enterprise-grade platforms that integrate custom model training with scalable infrastructure and CRM/BI connectors.

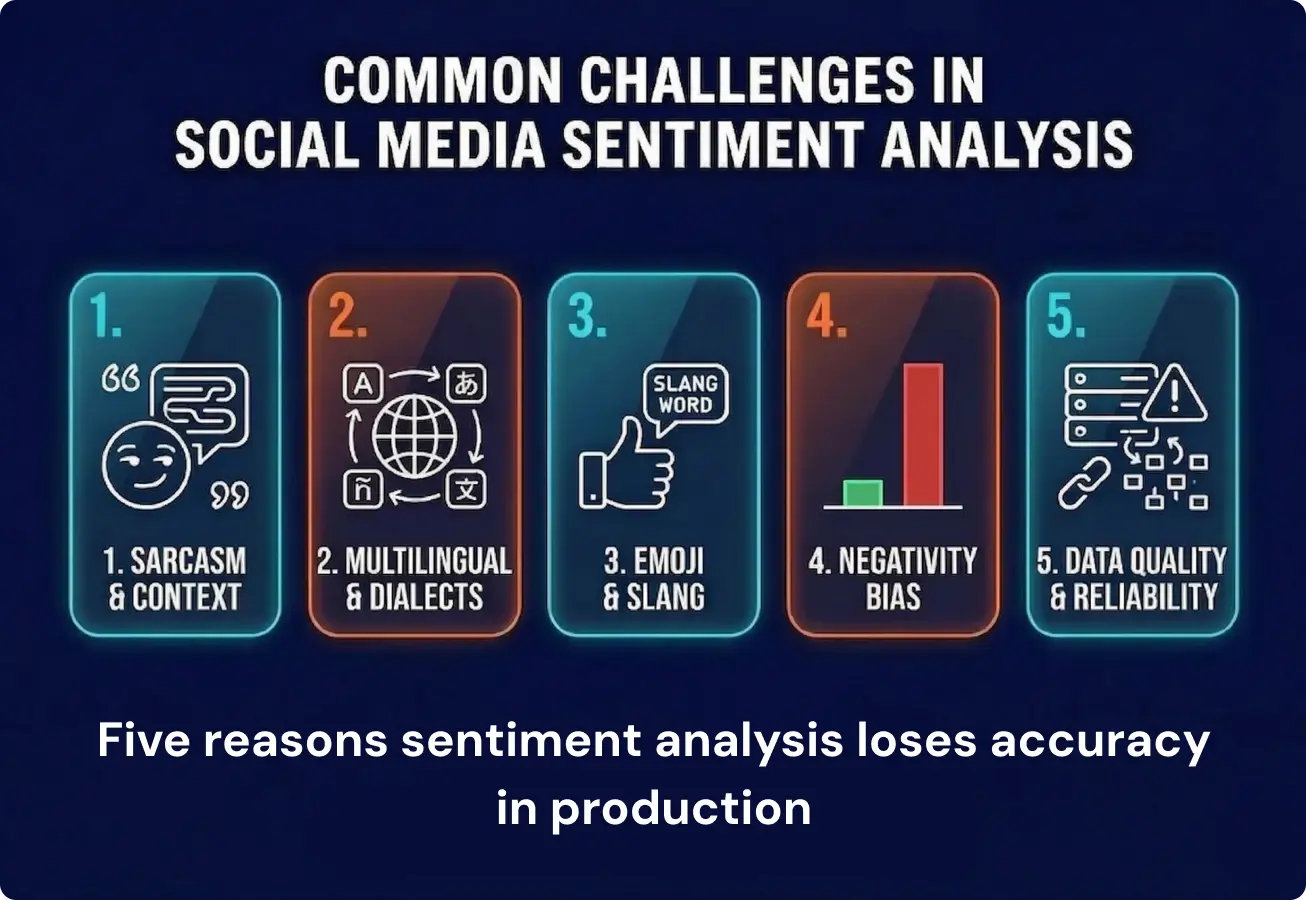

Common Challenges in Social Media Sentiment Analysis

Sarcasm and Context Understanding

“Oh great, another update that breaks everything.” Human readers catch the sarcasm instantly. Automated classifiers often do not. Sarcasm remains one of the hardest unsolved problems in sentiment analysis of social media. Transformer-based models improve detection over dictionary approaches, but accuracy still drops on short, informal posts. LLMs show promising results on sarcastic content in controlled tests, though performance varies across domains and languages — one reason hybrid pipelines (transformer models for volume, LLMs for tricky cases) are gaining traction.
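The failure is easy to reproduce with a dictionary approach. The sketch below is a deliberately naive rule-based classifier with a tiny hypothetical lexicon; it is not VADER or any production lexicon, only an illustration of why word counting misreads the sarcastic example.

```python
POSITIVE = {"great", "love", "excellent", "good"}  # hypothetical mini-lexicon
NEGATIVE = {"bad", "terrible", "broken", "hate"}


def lexicon_sentiment(text: str) -> str:
    """Count lexicon hits: more positive words than negative means 'positive'."""
    words = [w.strip(".,!?\"'").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(lexicon_sentiment("The screen is broken"))  # negative
print(lexicon_sentiment("Oh great, another update that breaks everything."))  # positive
```

The second result is wrong for a human reader: the classifier sees "great" and nothing else it recognizes, so the sarcasm is scored as praise.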

Multilingual Data

Global brands cannot limit sentiment analysis to English, but multilingual work introduces complexity most teams underestimate. Dialect variation alone is a significant factor: Latin American Spanish and Castilian carry different emotional weight for identical words. Simplified and Traditional Chinese diverge in colloquial tone. Arabic splits across Gulf, Egyptian, and Levantine dialects, each with distinct slang and sentiment markers.

The most reliable approach from GroupBWT’s multilingual projects: a cascade architecture with language detection first, then routing to a language-specific classifier. Established options such as BETO for Spanish, chinese-bert-wwm for Mandarin, and AraBERT for Arabic consistently outperform translate-then-classify shortcuts, especially for informal social text where translation flattens nuance.

“Language detection sounds trivial until you hit code-switching — a single review that starts in English, drops into Hindi mid-sentence, and ends with Arabic hashtags. Standard detection libraries pick the dominant language and misclassify everything else. We learned to run detection at the sentence level, not the document level. It added processing time, but our downstream classification accuracy jumped by double digits.”

— Alex Yudin, Head of Data Engineering, GroupBWT
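The difference between document-level and sentence-level detection can be illustrated without a language-ID model. The sketch below uses Unicode script names as a crude stand-in for real language detection. That is a loud assumption: script detection cannot separate languages that share a script (English and German, for example), so a real pipeline would plug a proper detector into the same per-sentence loop.

```python
import re
import unicodedata
from collections import Counter


def dominant_script(text: str) -> str:
    """Return the most frequent Unicode script among the letters in text."""
    scripts = Counter()
    for ch in text:
        if ch.isalpha():
            # Unicode names start with the script, e.g. "LATIN SMALL LETTER A".
            scripts[unicodedata.name(ch, "UNKNOWN").split()[0]] += 1
    return scripts.most_common(1)[0][0] if scripts else "UNKNOWN"


def detect_per_sentence(document: str) -> list[tuple[str, str]]:
    """Split on sentence-ending punctuation, then detect each sentence separately."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?।])\s+", document) if s.strip()]
    return [(s, dominant_script(s)) for s in sentences]


review = "The delivery was fast. लेकिन पैकेजिंग खराब थी। #رائع"
print(dominant_script(review))  # LATIN
for sentence, script in detect_per_sentence(review):
    print(script)
```

Document-level detection reports only the dominant script, while the per-sentence pass yields one result per segment that can each be routed to its own classifier.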

Emoji and Slang Interpretation

A single 🔥 emoji can mean “this is great,” “this is controversial,” or “this is literally on fire” depending on context. Non-Latin hashtags add complexity — concatenated words, dialectal spelling, and transliteration all affect accuracy. Custom pipelines need dedicated emoji and slang mapping for their target domains.
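A dedicated mapping layer can be as simple as two lookup tables applied before classification. The entries below are hypothetical and domain-specific by design: mapping 🔥 to "great" suits a music or fashion feed, and would be wrong for a fire-safety or insurance dataset.

```python
# Hypothetical, domain-specific mappings; each target domain needs its own.
EMOJI_MAP = {"🔥": " great ", "😡": " angry ", "👍": " good "}
SLANG_MAP = {"mid": "mediocre", "goated": "excellent"}


def normalize_informal(text: str) -> str:
    """Replace mapped emoji and slang with plain words a classifier understands."""
    for emoji, word in EMOJI_MAP.items():
        text = text.replace(emoji, word)
    words = [SLANG_MAP.get(w.lower(), w) for w in text.split()]
    return " ".join(words)


print(normalize_informal("new album is goated 🔥"))
# new album is excellent great
```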

Bias and Model Accuracy

User-generated reviews carry an inherent negativity bias — people are far more motivated to write when something went wrong. If your system relies only on UGC data, results will skew negative.

A product with 4.2-star ratings can appear majority-negative if the analysis only processes written review text (which skews toward complaints). Teams acting on this distorted signal may pull successful products or redirect resources to non-issues. The fix: establish baselines from curated sources (rating databases, expert indices) and use UGC sentiment as a dynamic modifier. This hybrid approach gives a far more accurate picture than raw reviews alone.
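One possible shape for the baseline-plus-modifier idea is sketched below. The formula and the `max_adjustment` weight are assumptions chosen for illustration, not GroupBWT's actual scoring: the curated rating anchors the score, and UGC sentiment can move it only within a bounded range.

```python
def blended_score(
    baseline_rating: float,
    ugc_net_sentiment: float,
    max_adjustment: float = 0.5,
) -> float:
    """Anchor on a 1-5 baseline rating; let UGC sentiment in [-1, 1] shift it slightly."""
    if not -1.0 <= ugc_net_sentiment <= 1.0:
        raise ValueError("ugc_net_sentiment must be within [-1, 1]")
    adjusted = baseline_rating + max_adjustment * ugc_net_sentiment
    return max(1.0, min(5.0, adjusted))  # clamp to the rating scale


# A 4.2-star product with complaint-heavy review text stays near 3.9
# instead of being read as majority-negative.
print(round(blended_score(4.2, -0.6), 2))
```

Because the adjustment is capped, a vocal minority can lower the score but cannot flip a well-rated product into negative territory on its own.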

“I’ve sat in boardrooms where teams presented sentiment reports that showed overwhelmingly negative perception for a product line that was actually growing 20% year over year. The data wasn’t wrong — it just only captured the vocal minority. Once we layered in aggregate rating baselines and purchase data as a counterweight, the picture flipped entirely. Uncorrected negativity bias doesn’t just distort analysis; it drives real budget reallocation in the wrong direction.”

— Oleg Boyko, COO, GroupBWT

Data Quality and Reliability

Classification accuracy means nothing if the underlying data is unreliable. Bot-generated content and coordinated spam campaigns flood datasets with synthetic posts that carry no genuine sentiment signal — without filtering, they inflate mention counts and distort scores. Platform API rate limits create a different kind of gap: if a pipeline hits its daily quota before collecting all relevant posts, the analysis is built on incomplete data.

Schema drift is equally disruptive. Social platforms update API structures without notice — a field that existed last month may be renamed or removed. Pipelines without schema validation checks process malformed records silently, producing outputs that look normal but contain corrupted data. If one data source goes offline and the system does not flag it, a drop in mentions may be interpreted as a sentiment shift when it is actually an infrastructure failure.

GroupBWT addresses these through automated monitoring at each pipeline stage: ingestion volume checks, schema validation, bot-detection filters, and alerting on anomalous drops in data flow.
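Two of those checks, schema validation and volume alerting, can be sketched in a few lines. The required-field schema and the 50% drop threshold below are hypothetical values for illustration; a real pipeline would load both from configuration.

```python
REQUIRED_FIELDS = {"id": str, "text": str, "created_at": str}  # hypothetical schema


def validate_record(record: dict) -> list[str]:
    """Return a list of schema problems; an empty list means the record is valid."""
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            problems.append(f"wrong type for {field}: {type(record[field]).__name__}")
    return problems


def volume_dropped(today: int, trailing_avg: float, max_drop: float = 0.5) -> bool:
    """True when today's ingested volume fell more than max_drop below the average."""
    return trailing_avg > 0 and today < trailing_avg * (1 - max_drop)


# A renamed field ("text" became "full_text") is caught instead of processed silently.
print(validate_record({"id": "1", "full_text": "hi", "created_at": "2024-05-01"}))
# ['missing field: text']
print(volume_dropped(today=300, trailing_avg=1000.0))  # True
```

Running the volume check per source is what separates "people stopped talking" from "a collector stopped working".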

Best Practices for Accurate Sentiment Analysis

Continuous Model Training

No social media sentiment analysis model can be configured once and left to run indefinitely. Language changes: slang terms cycle in and out, platform conventions shift, and content formats evolve. A model trained on last year’s data will show measurably lower accuracy on current posts.

Domain adaptation is equally important. General-purpose models struggle with specialized terminology. For verticals like sleep technology, automotive, or fintech, fine-tuning on domain-specific data is essential. Teams running sentiment analysis on social media data across multiple verticals should budget for separate training cycles per domain.

Combining Quantitative and Qualitative Analysis

Quantitative sentiment scores need qualitative context to be useful. Sample posts, representative user quotes, and trend explanations give stakeholders the narrative behind the numbers.

In one GroupBWT travel platform engagement, baseline ratings from authoritative sources (curated databases, safety indices, expert reviews) served as the quantitative foundation, with UGC-derived sentiment layered as a qualitative modifier. Neither source alone gave a complete picture; the combination made the analysis reliable.

Ethical and Legal Considerations

GDPR, CCPA, and platform terms of service all constrain what data can be collected, how it can be stored, and for how long. Is web scraping legal for commercial use? It depends on the platform’s ToS and applicable regional regulations. Best practice: anonymize and aggregate wherever possible, maintain clear data retention policies, and document collection methods.

Social Media Sentiment Analysis Use Cases

Brand Monitoring

The most common starting point: tracking audience perception over time. The cosmetics CDP project described earlier is a clear example — real-time sentiment tracking across 13+ retail partners, matched to individual product SKUs, with alerts triggered by Net Sentiment Score thresholds.

Product Feedback Analysis

Sentiment analysis on social media and review platforms reveals patterns invisible in raw form. Processing scraped reviews from Trustpilot, Amazon, and comparable sources surfaces what customers consistently praise, what generates frustration, and where unmet demand exists. A critical technical step is deduplication, which groups semantically similar reviews so the same complaint phrased ten different ways counts as one signal, not ten.
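The grouping step can be sketched with a simple similarity measure. The example below uses Jaccard overlap between word sets, which is a lexical stand-in and an assumption of this sketch: it catches near-identical phrasings but not true paraphrases, for which a production pipeline would more likely compare sentence embeddings. The 0.5 threshold is likewise illustrative.

```python
import re


def _tokens(text: str) -> set[str]:
    return set(re.findall(r"\w+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of words two texts have in common (0 = none, 1 = identical sets)."""
    ta, tb = _tokens(a), _tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def group_similar(reviews: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedy grouping: attach each review to the first group it resembles."""
    groups: list[list[str]] = []
    for review in reviews:
        for group in groups:
            if jaccard(review, group[0]) >= threshold:
                group.append(review)
                break
        else:  # no existing group matched
            groups.append([review])
    return groups


groups = group_similar([
    "Battery dies after one day",
    "battery dies after a day",
    "Great camera quality",
])
print(len(groups))  # 2
```

Counting groups rather than raw reviews is what turns ten phrasings of one complaint into a single signal.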

Crisis Detection

A sudden spike in negative sentiment is often the earliest warning of a brewing crisis — before media coverage, before support queues flood. Real-time monitoring pipelines that trigger automated alerts on negative spikes give crisis teams the head start they need. Organizations with mature sentiment infrastructure can detect and respond to reputational threats within hours rather than days.
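A basic version of the alert trigger compares the current count of negative mentions against recent history. The sketch below uses a z-score with a threshold of 3, which is one common choice and an assumption here; the hourly counts are invented sample data.

```python
from statistics import mean, stdev


def is_negative_spike(history: list[int], current: int, z_threshold: float = 3.0) -> bool:
    """Flag when current negative mentions sit far above the historical mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return current > mu  # flat history: any increase is notable
    return (current - mu) / sigma > z_threshold


hourly_negative = [20, 22, 19, 21, 18, 20, 23]  # invented sample counts
print(is_negative_spike(hourly_negative, current=60))  # True
print(is_negative_spike(hourly_negative, current=22))  # False
```

In practice the history window and threshold need tuning per brand, since a small account and a global one have very different normal variance.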

Competitive Intelligence

Sentiment analysis on social media extends beyond your own brand. Monitoring competitor sentiment surfaces market opportunities and validates positioning. One GroupBWT client, a financial analytics platform, correlated consumer sentiment from review platforms with stock price movements, using sentiment as a competitive intelligence data source.

When to Build Custom Sentiment Infrastructure

For organizations whose social media sentiment analysis needs outgrow SaaS dashboards, the decision to build rather than buy typically hinges on specificity, not volume. SaaS tools handle general-purpose sentiment monitoring adequately for most use cases. The inflection point arrives when an organization needs category-level sentiment across multiple dimensions per entity, domain-adapted classifiers chained with relevance gates, or direct integration into existing BI and CRM systems through fixed data schemas.

“The breaking point is usually not volume — it’s specificity. SaaS tools handle 80% of general sentiment well enough. But the moment you need category-level sentiment across five dimensions per entity, or you need to chain a relevance gate with a domain-adapted classifier and pipe results into a client’s existing BI stack in a fixed JSON schema — that’s when you’re building, not buying. And once you cross that line, there’s no going back to dashboards.”

— Alex Yudin, Head of Data Engineering, GroupBWT

The ROI Case for Custom Infrastructure

Consider an organization processing 1 million social media posts per month. Under a two-stage architecture, roughly 70% of incoming posts are filtered by the relevance gate, meaning only 300,000 records reach the LLM stage. That filtering reduces monthly NLP costs from approximately $8,000–$12,000 (if every post hit a full LLM) to $2,000–$3,500. Over twelve months, the infrastructure pays for itself through processing savings alone — before accounting for faster crisis detection or better product decisions.
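The arithmetic behind that estimate can be checked in a few lines. The sketch assumes LLM cost scales linearly with the number of posts processed and ignores the cost of running the relevance gate itself; both are simplifications.

```python
def llm_cost_after_gate(monthly_posts: int, filtered_pct: int, full_llm_cost: float):
    """Return (posts reaching the LLM, proportional monthly LLM cost)."""
    llm_posts = monthly_posts * (100 - filtered_pct) // 100
    return llm_posts, full_llm_cost * llm_posts / monthly_posts


for full_cost in (8_000, 12_000):
    posts, cost = llm_cost_after_gate(1_000_000, 70, full_cost)
    print(posts, cost)
# 300000 2400.0
# 300000 3600.0
```

Strict proportional scaling gives roughly $2,400 to $3,600 per month, in the same range as the $2,000 to $3,500 quoted above; the exact figure depends on per-token pricing and post length, which the estimate does not specify.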

Custom development follows a defined sequence: corpus collection, model fine-tuning, evaluation against domain benchmarks, and ongoing drift monitoring. Enterprise implementations typically span 12 to 26 months. For organizations where sentiment intelligence directly informs revenue-affecting decisions, GroupBWT’s project data shows consistent positive ROI across verticals.

Final Thoughts

Across 15+ sentiment deployments, one pattern has been consistent: the organizations that extract the most value from sentiment data are not the ones with the most sophisticated models. They are the ones that connected sentiment output to operational workflows — product decisions, crisis protocols, campaign adjustments — so that a shift in score triggers a specific action within hours, not weeks.

The technology to build this exists today and is accessible at multiple price points. What separates organizations that use it effectively from those that do not is rarely budget or tooling. It is the upfront work of defining what the data needs to answer and building the infrastructure to act on it.

GroupBWT builds that infrastructure: data collection pipelines, NLP classification systems, two-stage architectures, and BI integrations. Organizations evaluating whether to build or buy sentiment capabilities, or those whose current tooling has reached its limits, can reach out to discuss specific requirements for web scraping services.

FAQ

What is sentiment analysis and why does it matter for social media?

Sentiment analysis uses NLP and machine learning to classify social media posts, comments, and reviews as positive, negative, or neutral. Standard engagement metrics — impressions, shares, click-through rates — confirm that a conversation is happening but reveal nothing about its emotional direction. Sentiment analysis fills that gap. With this data available in real time, crisis teams can catch threats earlier, product teams can distinguish isolated complaints from systemic issues, and marketing can measure whether a campaign landed the way it was intended to.

How to perform social media sentiment analysis without coding experience?

Coding is not required. SaaS platforms like Brandwatch, Sprout Social, and Hootsuite Insights include built-in sentiment classification. The setup involves connecting your social accounts and defining keywords to track; most platforms begin generating reports within the same day. For teams that want more control over classification logic without writing code, MonkeyLearn offers a visual drag-and-drop interface for building custom classifiers. The comparison table earlier in this guide breaks down differences in language support, accuracy, and pricing.

What is the difference between sentiment analysis and social media analytics?

Social media analytics tracks quantitative metrics: impressions, clicks, shares, click-through rates. Sentiment analysis evaluates the emotional tone of the interactions behind those numbers. A post with 50,000 impressions and 2,000 comments looks strong by analytics standards — but if those comments are predominantly frustrated or mocking, the business signal is negative, not positive. Analytics tools have no mechanism for surfacing that distinction. Effective social media strategies use both: analytics for reach and distribution, sentiment analysis for audience reaction.

What are the biggest technical challenges when analyzing social media sentiment?

Sarcasm remains the most persistent problem. “Love how this update broke everything” reads as negative to a human, but many classifiers register “love” as positive and misclassify it. Even current LLMs have not fully resolved this. Multilingual content adds difficulty — a word that is mildly colloquial in Castilian Spanish can be offensive in Latin American usage, and posts that code-switch between languages within a single sentence confuse most detection systems. Emoji interpretation depends heavily on context (🔥 can mean admiration, controversy, or a literal event), and negativity bias in UGC skews raw datasets toward complaints. Language itself also shifts. Slang evolves, platform norms change, and models trained six months ago can show measurable accuracy drops without retraining.

How much does it cost to implement sentiment analysis for social platforms?

The range depends on scope. Open-source Python libraries (NLTK, VADER, Hugging Face Transformers) carry no licensing fees — the cost is in engineering time to build and maintain the pipeline. SaaS platforms like Brandwatch and Sprout Social typically run $100–$1,000+/month depending on platform coverage and team size. Custom enterprise implementations — bespoke model training, multilingual pipelines, dedicated infrastructure — generally require $50K–$150K+ over a 12-to-26-month engagement. A startup tracking one brand on two channels has different needs than an enterprise monitoring sentiment across 30+ markets. The comparison table in the tools section provides a more detailed breakdown.